Scaling an Array of Numbers

Solved! 02-18-2019 10:53 PM

Hello!

So I have a 2D array of values from 0 to 1023 that represent readings from a sensor. I need to scale these to -200 to 200. Any ideas on how to easily do this? Thanks!

Example of array:

514 578 233

513 555 122

513 789 514

02-18-2019 11:05 PM

Math.

Take array, divide by 1024, multiply by 401. Subtract 200.

02-19-2019 08:26 AM

@RavensFan wrote:

Math.

Take array, divide by 1024, multiply by 401. Subtract 200.

Doesn't that scale between -200 and 200.608 (1023/1024*401-200=200.608)?

AFAIC, divide by 1023, multiply by 400, subtract 200.

Or, more generally: subtract the min input, divide by (max input - min input), multiply by the output range, add the output offset.

And of course if the array gets big, divide 400 by 1023 first, then multiply it by the array. That will trade two expensive array multiplications for one scalar multiplication and one array multiplication.
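The general recipe can be sketched in a few lines. This is a minimal Python sketch rather than LabVIEW code; the function name `scale` and its parameter names are made up for illustration, and the gain is computed once as a scalar before it touches the array.

```python
def scale(values, in_min=0, in_max=1023, out_min=-200.0, out_max=200.0):
    """Linearly map a 2D list from [in_min, in_max] to [out_min, out_max]."""
    # Scalar division done once, so the per-element work contains no division.
    gain = (out_max - out_min) / (in_max - in_min)
    return [[(v - in_min) * gain + out_min for v in row] for row in values]


# Example array from the original question.
readings = [[514, 578, 233], [513, 555, 122], [513, 789, 514]]
scaled = scale(readings)

# Endpoint check for the two recipes discussed in this thread.
max_1024_401 = 1023 / 1024 * 401 - 200   # about 200.608, overshoots 200
max_1023_400 = 1023 / 1023 * 400 - 200   # exactly 200.0
```

The defaults match the ranges in the question; other sensor or output ranges would only change the four keyword arguments.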

02-19-2019 08:33 AM

wiebe@CARYA wrote:

@RavensFan wrote:

Math.

Take array, divide by 1024, multiply by 401. Subtract 200.

Doesn't that scale between -200 and 200.608 (1023/1024*401-200=200.608)?

AFAIC, divide by 1023, multiply by 400, subtract 200.

Or, more generally: subtract the min input, divide by (max input - min input), multiply by the output range, add the output offset.

And of course if the array gets big, divide 400 by 1023 first, then multiply it by the array. That will trade two expensive array multiplications for one scalar multiplication and one array multiplication.

Hard to say. 0-1023 consists of 1024 integer values.

-200 to 200 consists of 401 integer values: 1 to 200 on either side, with zero in the middle.

The answer should get the OP close enough. If it doesn't quite work the way they want, then perhaps they need 400 instead of 401. Or 1023 instead of 1024. Or perhaps both. The two ranges don't divide evenly into each other, so the answer may never be exact.
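One way to read the 1024-versus-401 argument: if the goal is integer outputs, with the 1024 input codes spread over the 401 integers from -200 to 200, then dividing by 1024 and multiplying by 401 works as long as the result is rounded down. A Python sketch of that interpretation (the function name is hypothetical, and rounding down is an assumption the thread does not state):

```python
def to_integer_bins(v):
    """Map an integer reading 0..1023 onto an integer in -200..200."""
    # Integer form of floor(v / 1024 * 401) - 200, with no floating point involved.
    return v * 401 // 1024 - 200


lowest = to_integer_bins(0)       # -200
highest = to_integer_bins(1023)   # 200
# Count how many different outputs the 1024 possible readings produce.
distinct = len({to_integer_bins(v) for v in range(1024)})  # 401
```

For a continuous (floating-point) result, dividing by 1023 and multiplying by 400 is the version that lands exactly on both endpoints.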

02-19-2019 08:37 AM

wiebe@CARYA wrote:

And of course if the array gets big, divide 400 by 1023 first, then multiply it by the array. That will trade two expensive array multiplications for one scalar multiplication and one array multiplication.

Do you know if compound arithmetic is smart enough to do this internally?

02-19-2019 09:11 AM

@Alexander_Sobolev wrote:

wiebe@CARYA wrote:

And of course if the array gets big, divide 400 by 1023 first, then multiply it by the array. That will trade two expensive array multiplications for one scalar multiplication and one array multiplication.

Do you know if compound arithmetic is smart enough to do this internally?

Not without testing.

It would be silly if it didn't, so my bet would be that it is smart enough.

02-19-2019 12:51 PM - edited 02-19-2019 12:53 PM

Another option would be to use linear evaluation (offset and slope have been discussed).

Also works for 2D arrays, of course.
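For this thread's ranges, the slope and offset that such a linear evaluation would need follow from the two endpoints. Treating x as a raw reading and y as the scaled value (symbols chosen here for illustration):

```latex
y = m x + b, \qquad
m = \frac{200 - (-200)}{1023 - 0} = \frac{400}{1023} \approx 0.3910, \qquad
b = -200
```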

02-20-2019 02:43 AM - edited 02-20-2019 02:52 AM

wiebe@CARYA wrote:

@Alexander_Sobolev wrote:

wiebe@CARYA wrote:

And of course if the array gets big, divide 400 by 1023 first, then multiply it by the array. That will trade two expensive array multiplications for one scalar multiplication and one array multiplication.

Do you know if compound arithmetic is smart enough to do this internally?

Not without testing.

It would be silly if it didn't, so my bet would be that it is smart enough.

Seems that I was wrong...

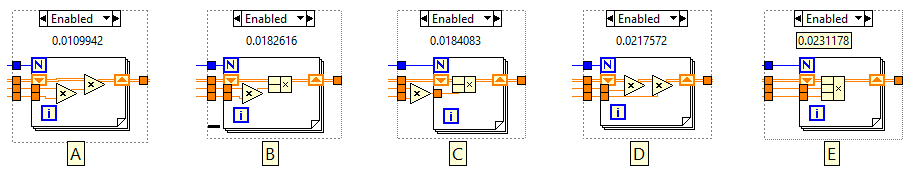

Here's my test bench. And it's tricky: sometimes any change makes the time double, and a random change makes it go back. I feel like these values are "realistic" though, after giving each option several goes. I used a median before the mean, as strong outliers added about 20% noise to the results.
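The median-before-mean idea can be sketched outside LabVIEW as well. The following Python sketch is an assumption about how such a bench might be structured, not a reconstruction of the VI in the screenshot; `median_then_mean`, `time_batches`, and the batch sizes are hypothetical.

```python
import statistics
import time


def median_then_mean(batches):
    """Take the median of each batch of timings, then average the medians."""
    return statistics.mean([statistics.median(batch) for batch in batches])


def time_batches(fn, n_batches=5, runs_per_batch=11):
    """Time fn() repeatedly and return the timings grouped into batches."""
    batches = []
    for _ in range(n_batches):
        batch = []
        for _ in range(runs_per_batch):
            start = time.perf_counter()
            fn()
            batch.append(time.perf_counter() - start)
        batches.append(batch)
    return batches


# The strong outlier (9.0) does not pull the result up the way a plain mean would.
robust = median_then_mean([[1.0, 1.1, 9.0], [1.2, 1.0, 1.1]])
```

A call such as `median_then_mean(time_batches(some_function))` would then give one noise-resistant number per variant under test.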

The results:

Definitely some unexpected weirdness going on here!

A being (much) faster then B? Weird!

A being (much) faster then C? Weird!

B being faster then C? Weird!

E being the slowest? Sad. A factor 2.3 is quite a bit slower... This could\should be just as fast as A.

DISCLAMER: Tested in 2013... 2017SP1 is a bit faster for all cases, but the order and ratios are pretty much the same.

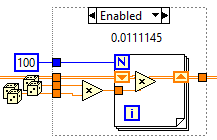

EDIT: It get worse:

5.5 times slower then the fastest way... ![]()

And, just for the record:

02-20-2019 05:31 AM

wiebe@CARYA wrote:

B being faster than C? Weird!

They are within the noise. I would expect them to actually compile to the same thing due to optimizations that the compiler does such as moving that first multiply outside of the loop.

02-20-2019 05:42 AM

That it's slower when you wire the scalars on top isn't that surprising. There are some threads on LV memory management, and if you wire an array on top it usually works in place, while a scalar on top forces it to create a new array.

/Y