Continuous sampling method gets unreasonable values

Solved! 01-19-2014 06:27 PM - edited 01-19-2014 06:29 PM

I would like to measure the amplitude of a signal, shown in the attached file 'Ori.jpg'. Using the N Samples method (1k samples, 239 kHz rate) for data acquisition, the result was perfect, as shown in '1k_N.jpg', but the speed was too slow.

Although the continuous sampling method ran much faster, the values were not usable, as shown in '1k_continuous.jpg'. I do not know what causes these outliers, and they were not just occasional. The 10.5537 should be the maximum reading value of the NI 6361.

By the way, is it impossible to speed up the N Samples method? I even tried 20 samples instead of 1000, and the loop still took a similar time. The DAQ was not stopped on each loop iteration.

Help with either decreasing the N Samples acquisition time or getting correct readings from the continuous sampling method is greatly appreciated.

Pem

01-19-2014 06:30 PM

Values like attached.

01-19-2014 07:00 PM

Your pictures really do not give much of a clue. Please run your VI until some data has been gathered. Then from the Edit menu select Make Current Values Default. Save. Post the VI.

Lynn

01-19-2014 07:05 PM

Some posters have problems with attachments. It seems mostly related to IE. Zip files seem to work.

01-21-2014 03:10 PM - edited 01-21-2014 03:12 PM

Thanks for the replies, and sorry for missing the VIs. They are attached now. These two VIs are exactly the same program except for the sampling method in the DAQ. Default values were set as Lynn mentioned. The input signals were 500 kHz sine waves with noise (amplitude from 0.4 to 1.2), and the digital output controls switches (routing different signals to the NI 6361). Maybe the big values are not in the data that gets saved, but the continuous sampling method still gave unstable and larger values.

Thanks,

Pem

01-21-2014 07:07 PM

Pem,

Charts do not save their data as default values, so your VIs contain no raw data. Place a graph (NOT a chart) on the data output line of the DAQ Assistant, run, make default, save, post.

Lynn

01-22-2014 11:44 AM

Thanks Lynn, it is updated now.

Pem

01-22-2014 04:07 PM

Pem,

Buffer overflow.

Do you get any errors? You do not have any error wires connected.

It appears that on each iteration of the loop you read 200 samples and the sampling rate is 59000 Hz. To get all the data you would need to read every 3.4 ms. Running the Amplitude and Levels VIs, the Mean VIs, and the Array to Spreadsheet function with your data takes about 20-30 ms according to the Profiler. Write to File also takes some time, possibly tens or hundreds of milliseconds if the OS needs to fragment the file. Thus you eventually will overflow a buffer in the DAQ process. There are several buffers and I have no way to tell which will be the critical path for your application.
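The timing budget above can be checked with a few lines of arithmetic. This is a minimal Python sketch, not LabVIEW code: the function names are hypothetical, and the 25 ms loop time is an assumed midpoint of the 20-30 ms Profiler estimate.

```python
import math

def read_interval_ms(samples_per_read: int, sample_rate_hz: int) -> float:
    """Time the hardware needs to produce one read's worth of samples."""
    return 1000.0 * samples_per_read / sample_rate_hz

def min_samples_per_read(sample_rate_hz: int, loop_time_ms: int) -> int:
    """Smallest read size that keeps pace when one loop iteration takes loop_time_ms."""
    return math.ceil(sample_rate_hz * loop_time_ms / 1000)

# 200 samples at 59000 Hz arrive in about 3.4 ms.
print(f"{read_interval_ms(200, 59_000):.2f} ms")

# Assumed 25 ms per iteration: each read must take at least this many samples.
print(min_samples_per_read(59_000, 25))
```

With these assumed numbers, each read would have to pull about 1475 samples instead of 200 just to keep pace, before allowing for file writes.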

Regardless, the fix is the same: 1. Read more samples at a time. 2. Use a Producer/Consumer architecture so that data processing/saving does not delay acquisition.
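The Producer/Consumer idea can be sketched in text form as follows. This is an illustration in Python using the standard `queue` and `threading` modules, not the LabVIEW implementation; the placeholder data and the names `producer`, `consumer`, and `run` are assumptions made for the example.

```python
import queue
import threading

def producer(q, n_blocks, block_size):
    """Stand-in for the acquisition loop: it only reads data and enqueues it."""
    for i in range(n_blocks):
        block = [float(i)] * block_size  # placeholder for one DAQ read
        q.put(block)
    q.put(None)  # sentinel: acquisition has finished

def consumer(q, results):
    """Stand-in for the processing loop: analysis and file I/O belong here."""
    while True:
        block = q.get()
        if block is None:
            break
        results.append(sum(block) / len(block))  # e.g. mean of the block

def run(n_blocks=5, block_size=1000):
    q = queue.Queue()
    results = []
    t_prod = threading.Thread(target=producer, args=(q, n_blocks, block_size))
    t_cons = threading.Thread(target=consumer, args=(q, results))
    t_cons.start()
    t_prod.start()
    t_prod.join()
    t_cons.join()
    return results

print(run())
```

Because the producer never waits on processing, slow analysis or file writes only make the queue grow; they do not delay the next read.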

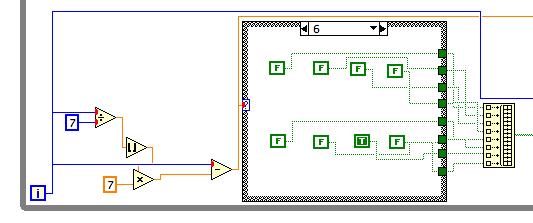

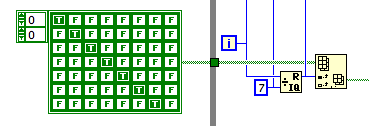

Although it has negligible effect on the data issue, the way you get the binary data for the DAQ outputs definitely qualifies as Rube Goldberg. The way you do it is shown below.

A much simpler way is to use Quotient&Remainder and Index Array with an array constant.
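In text form, the Quotient & Remainder plus Index Array approach might look like the Python sketch below. The attached VI is not reproduced here, so the pattern table, the number of digital lines, and the name `switch_state` are all hypothetical; only the technique (a remainder used as an index into a constant array) comes from the post.

```python
# Hypothetical constant array: one tuple of digital line states per switch position.
PATTERNS = [
    (False, False),
    (True, False),
    (False, True),
    (True, True),
]

def switch_state(iteration: int):
    """Return (cycle number, line states) for a given loop iteration."""
    cycle, index = divmod(iteration, len(PATTERNS))  # Quotient & Remainder
    return cycle, PATTERNS[index]                    # Index Array on the constant

print(switch_state(5))
```

Changing the switching sequence then means editing the constant array rather than rewiring the logic.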

Lynn

01-22-2014 04:37 PM

Thanks Lynn. I did not get any errors when I ran just 70 loops each time. Over a longer period, the buffer would overflow. That is not an issue in this case; I just wonder why continuous sampling would give larger values than N Samples. Is it possibly because it is fast and captures some noise that N Samples cannot? When I looked at the source signal, it never jumped up to a big value.

I appreciate your opinion. Maybe it is a signal problem and has nothing to do with the sampling issue.

01-22-2014 07:01 PM

In the N-samples version the DAQ Assistant only acquires 200 samples each time it is called and it returns all of them. In the continuous sample version samples are acquired at 59000 per second, whether they are read or returned to the main program or not. So at a loop rate of 20 ms per iteration about 1180 samples are acquired but only 200 are read, leaving roughly 980 more samples acquired than read on each iteration.
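The backlog described above can be estimated with a short calculation. This Python sketch uses the figures from this thread (59000 samples per second, 20 ms per iteration, 200 samples per read, 70 iterations); the function name `backlog_after` is hypothetical, and the actual buffer sizes are unknown, so the sketch does not predict when an overflow occurs.

```python
def backlog_after(iterations: int, sample_rate_hz: int,
                  loop_time_ms: int, samples_per_read: int) -> int:
    """Unread samples left after a number of loop iterations."""
    acquired_per_iter = sample_rate_hz * loop_time_ms // 1000
    surplus_per_iter = max(acquired_per_iter - samples_per_read, 0)
    return surplus_per_iter * iterations

# Surplus per iteration with the numbers from this thread.
print(backlog_after(1, 59_000, 20, 200))

# Accumulated unread samples after a 70-iteration run.
print(backlog_after(70, 59_000, 20, 200))
```

The surplus works out to 980 samples per iteration with these figures, which is in line with the rough estimate in the post, and it accumulates linearly for as long as the task keeps running.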

When an error occurs the DAQ Assistant stops the task and Unreserves it. The next time the loop iterates the DAQ device will be reconfigured. I have no way to know for sure, but it is possible that some spurious values get into the buffer during the stop/reconfigure process.

Lynn