Timing with an FPGA programme

11-11-2019 04:36 AM

Hi,

I am currently developing a LabVIEW programme that will be run on an FPGA. The programme has to be able to sweep from a minimum frequency up to a maximum frequency in a set time. The operator can decide whether the frequency then sweeps back down, from max to min, or resets and sweeps back up from min to max. The way I plan to do this is by using a cosine: when x > 0 the output is True, and when x < 0 it is False.

I have been able to programme this so that it runs on my computer, but not on my FPGA as I have been struggling with the timings.

Any help would be greatly appreciated.
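For readers following along, the sweep described above can be sketched in text form. This is only a minimal Python illustration, not the poster's LabVIEW diagram: the function names, the `bounce` flag (True for "sweep back down", False for "reset to min") and the fixed sample rate are all assumptions made for the example.

```python
import math

def sweep_frequency(t, f_min, f_max, sweep_time, bounce):
    """Instantaneous frequency in Hz at time t (seconds) for a linear sweep.

    bounce=True  : min -> max -> min -> ... (triangle profile)
    bounce=False : min -> max, reset, min -> max ... (sawtooth profile)
    """
    # cycle = number of completed sweeps, pos = fraction of the current sweep
    cycle, pos = divmod(t / sweep_time, 1.0)
    if bounce and int(cycle) % 2 == 1:
        pos = 1.0 - pos  # odd-numbered sweeps run downwards
    return f_min + (f_max - f_min) * pos

def square_wave_samples(f_min, f_max, sweep_time, bounce, sample_rate, n_samples):
    """Boolean samples: True while cos(phase) > 0, False otherwise."""
    dt = 1.0 / sample_rate
    phase = 0.0
    samples = []
    for n in range(n_samples):
        samples.append(math.cos(phase) > 0.0)
        # Accumulate phase from the current frequency so the waveform
        # stays continuous while the frequency changes.
        f = sweep_frequency(n * dt, f_min, f_max, sweep_time, bounce)
        phase = (phase + 2.0 * math.pi * f * dt) % (2.0 * math.pi)
    return samples
```

Accumulating phase, rather than evaluating cos(2πft) directly, keeps the output free of jumps as f changes. On an FPGA the same idea is often built with a fixed-point phase accumulator instead of a floating-point cosine, but that is a suggestion and not something stated in this thread.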

11-11-2019 06:58 AM

I doubt that a version of the program you show would work on your PC. You seem to be misunderstanding dataflow. The timed loop at the top is the only thing that will run: it constantly reads the value of i at the loop rate, but that value gets stuck at the loop's output tunnel, because the timed loop never ends and so never passes its outputs on to the next part of your code, which is the big while loop at the bottom.

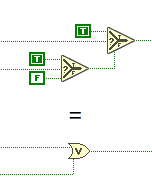
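A rough text-language analogy of the dataflow point, assuming nothing about the actual diagram beyond what is described above: a value wired out of a loop is only available once that loop has finished, so code that needs the value on every iteration has to live inside the same loop. The function names here are hypothetical.

```python
def loops_in_series(n_iterations):
    """Analogue of wiring one loop's output tunnel into a second loop."""
    i = 0
    for i in range(n_iterations):
        pass  # first loop; if it had no stop condition it would never exit
    # Downstream code runs once, after the loop ends, and sees only the last value.
    return [i]

def single_loop(n_iterations):
    """Analogue of doing the work inside the loop that produces the value."""
    seen = []
    for i in range(n_iterations):
        seen.append(i)  # the value is consumed in the same iteration
    return seen
```

With `n_iterations = 5`, the first version hands on only the final value, while the second sees every value of i as it is produced.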

You also have a Rube Goldberg at the lower left, where you wire a Boolean into a Select function that outputs either a True or a False constant. Guess what happens if you eliminate the Select and the constants!
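In text form, and only as an illustration (the `select` helper below is a hypothetical stand-in for LabVIEW's Select function), the construct amounts to this:

```python
def select(condition, if_true, if_false):
    """Stand-in for a Select node: pick one of two inputs from a Boolean."""
    return if_true if condition else if_false

def redundant(flag):
    # Boolean in, True/False constants on the two inputs, Boolean out.
    return select(flag, True, False)
```

For either Boolean input, `redundant(flag)` returns the same value as `flag`, so the wire could go straight through.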

I would recommend looking at the online LabVIEW tutorials

LabVIEW Introduction Course - Three Hours

Learn LabVIEW

11-11-2019 08:44 AM - edited 11-11-2019 08:50 AM

@RavensFan wrote:

I would recommend looking at the online LabVIEW tutorials

LabVIEW Introduction Course - Three Hours

Learn LabVIEW

Looking at those Selectors, I'd say that is good advice.

EDIT: Oops, spoiled your spoiler, @RavensFan