Stumped on basic problem with floating point math

Solved! 06-08-2011 11:42 AM - edited 06-08-2011 11:42 AM

I can't figure this out! It should be sooooo simple.

Here is the challenge:

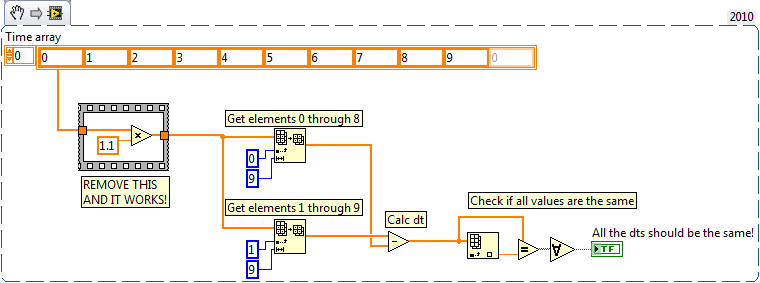

I have an incoming time array. For example: 0, 1, 2, 3, 4, 5, 6, 7, 8, 9

I want to scale this array by a constant (e.g. multiply by 0.1). So the resulting array should be: 0.0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9

Then I want to calculate the difference between each pair of consecutive elements in the array. In this example, the difference should be 0.1 between every pair. But my comparison fails.

See the attached example. As far as I can see, the resulting boolean should always be TRUE. But it's not.

But if I remove the scaling operation, then it works ok!

Please help!
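For readers without LabVIEW at hand, the same behavior can be reproduced in text form. This is only a minimal Python sketch of the steps described above, assuming double-precision floats throughout; the variable names are illustrative and are not taken from the original block diagram.

```python
# Text-based stand-in for the scale / difference / compare steps.
# All names here are hypothetical; the original is a LabVIEW diagram.
times = [float(i) for i in range(10)]   # 0, 1, 2, ..., 9
scale = 0.1

# Scale the array, then take the difference of consecutive elements.
scaled = [t * scale for t in times]
diffs = [b - a for a, b in zip(scaled, scaled[1:])]

# Exact equality test against the scale factor.
matches = [d == scale for d in diffs]

# Same test without scaling: differences of whole numbers are exact.
unscaled_diffs = [b - a for a, b in zip(times, times[1:])]
unscaled_matches = [d == 1.0 for d in unscaled_diffs]

print(matches)           # a mix of True and False
print(unscaled_matches)  # all True
```

The whole-number case works because small integers are represented exactly in binary floating point, while most multiples of 0.1 are not.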

06-08-2011 11:50 AM

It has been a while since smercurio has had a contribution to his retirement fund, but once again you have "discovered" that there is no exact binary representation for 0.1.

I'd use one of the current "almost equals" comparisons described here:

http://forums.ni.com/t5/LabVIEW/Darin-s-Weakly-Nugget-2-8-11/m-p/1444262
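As a rough text-based illustration of both points (this is not the code from the linked nugget), the Python sketch below first shows the value actually stored for 0.1 and then compares with a tolerance instead of exact equality. The helper name `almost_equal` and its tolerance values are assumptions chosen for this example, not recommendations from the thread.

```python
import math
from decimal import Decimal

# Decimal(float) shows the exact value of the stored double.
stored = Decimal(0.1)
exact = Decimal("0.1")
is_exact = stored == exact   # False: the double is only near 0.1
print(stored)

def almost_equal(a, b, rel_tol=1e-9, abs_tol=1e-12):
    """Hypothetical helper: tolerance-based comparison via math.isclose.

    The default tolerances are assumptions; pick values that suit
    the scale and precision of your own data.
    """
    return math.isclose(a, b, rel_tol=rel_tol, abs_tol=abs_tol)

scale = 0.1
scaled = [i * scale for i in range(10)]
diffs = [b - a for a, b in zip(scaled, scaled[1:])]

# Every difference is within tolerance of the scale factor.
all_close = all(almost_equal(d, scale) for d in diffs)
print(all_close)
```

The absolute tolerance matters mainly when the values being compared are close to zero, where a purely relative test becomes too strict.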

And vote for this if you haven't already:

06-08-2011 12:16 PM

@Darin.K wrote:

It has been a while since smercurio has had a contribution to his retirement fund, but once again you have "discovered" that there is no exact binary representation for 0.1.

Yeah, the little piggy was getting a little light.

josborne, you owe me a nickel.

06-08-2011 01:17 PM

@smercurio_fc wrote:

josborne, you owe me a nickel.

Don't you mean two bits???