
Number array to boolean array

Solved! 05-13-2020 01:21 PM

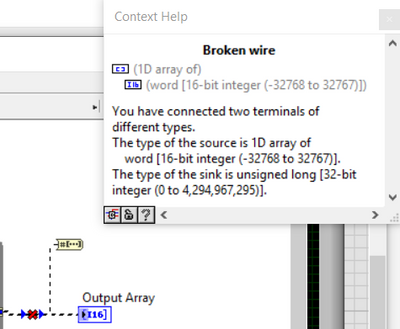

I have a 1D array of 16 bit integers (they are all 0 or 1's). I would like to convert it to boolean array but when I try to use num to array function a broken wire appears:

05-13-2020 01:27 PM

Hi RFeng, I think the function you are attempting to use converts a single numeric (not an array) to its binary representation. You can just use the "Not Equal To 0?" function to convert your numbers into Booleans.
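LabVIEW is graphical, so the wiring can't be shown as text. The logic of the "Not Equal To 0?" approach can still be sketched in Python, purely as an illustration. The name `to_bool_array` is hypothetical. LabVIEW comparison functions accept arrays and compare element by element, and the list comprehension below mimics that:

```python
from array import array

def to_bool_array(values):
    """Map each integer to a Boolean: True for any nonzero value, False for 0.

    Mimics wiring an integer array into "Not Equal To 0?", which
    LabVIEW applies to every element of the array.
    """
    return [v != 0 for v in values]

# A 1D array of signed 16-bit integers ('h' typecode), all 0 or 1.
data = array('h', [0, 1, 1, 0, 1])
print(to_bool_array(data))  # [False, True, True, False, True]
```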

05-13-2020 01:34 PM

It looks like you are using the Number to Boolean Array primitive. This converts a *single* number to an array of Booleans. One way of converting an array of integers to an array of Booleans is to just pass it to the Greater Than 0? primitive. Any value in the input array that is greater than zero then results in a TRUE value in the output array.
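Here is a Python sketch of the "Greater Than 0?" variant, again only as an illustration. `to_bool_gt0` and `to_bool_ne0` are hypothetical names. When the data is restricted to 0 and 1, it produces exactly the same Boolean array as the not-equal-to-zero test:

```python
from array import array

def to_bool_gt0(values):
    """True only where the value is strictly greater than zero."""
    return [v > 0 for v in values]

def to_bool_ne0(values):
    """True wherever the value is nonzero, positive or negative."""
    return [v != 0 for v in values]

data = array('h', [0, 1, 1, 0, 1])
# With only 0s and 1s in the input, the two tests cannot disagree.
assert to_bool_gt0(data) == to_bool_ne0(data)
print(to_bool_gt0(data))  # [False, True, True, False, True]
```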

05-13-2020 02:52 PM

@PsyenceFact wrote:

It looks like you are using the Number to Boolean Array primitive. This converts a *single* number to an array of Booleans. One way of converting an array of integers to an array of Booleans is to just pass it to the Greater Than 0? primitive. Any value in the input array that is greater than zero then results in a TRUE value in the output array.

I use "greater than zero" just because my mind accepts "greater than zero" a little more readily than "not equal to zero". I guess that's because it's a tiny bit less ambiguous.

(Mid-Level minion.)

My support system ensures that I don't look totally incompetent.

Proud to say that I've progressed beyond knowing just enough to be dangerous. I now know enough to know that I have no clue about anything at all.

Humble author of the CLAD Nugget.

05-13-2020 03:33 PM

I still (partially) recall some language or environment I used way back in the '90s (maybe even the '80s?) where Booleans were typically signed ints with a value of -1 (all bits set high). As I recall, any nonzero integer value would evaluate as True, positive or negative alike. A "greater than 0" comparison would have wrongly missed all the built-in Boolean constants.

So my habit would be to check "not equal to 0" so I don't have to check to see whether the int is signed or unsigned. (I'm pretty sure this came up in another thread not all that long ago, but I couldn't find it easily just now.)

-Kevin P
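The signed/unsigned concern can be made concrete with a small Python sketch. It assumes the -1-as-TRUE convention recalled above. The legacy environment is not named, so `LEGACY_TRUE` and `LEGACY_FALSE` are purely illustrative. The sketch uses the standard `array` module to reinterpret the same 16-bit patterns as unsigned values:

```python
from array import array

# Illustrative legacy convention: TRUE stored as -1 (all bits set), FALSE as 0.
LEGACY_TRUE = -1
LEGACY_FALSE = 0

signed = array('h', [LEGACY_FALSE, LEGACY_TRUE, 1])
# Reinterpret the same bit patterns as unsigned 16-bit: -1 becomes 65535.
unsigned = array('H', signed.tobytes())

print([v > 0 for v in signed])     # [False, False, True]  "> 0" misses -1
print([v != 0 for v in signed])    # [False, True, True]
print([v > 0 for v in unsigned])   # [False, True, True]   65535 > 0
print([v != 0 for v in unsigned])  # [False, True, True]
```

Only "not equal to 0" gives the same answer regardless of whether the integers are signed or unsigned.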

05-13-2020 05:22 PM

@Kevin_Price wrote:

I still (partially) recall some language or environment I used way back in the '90s (maybe even the '80s?) where Booleans were typically signed ints with a value of -1 (all bits set high). As I recall, any nonzero integer value would evaluate as True, positive or negative alike. A "greater than 0" comparison would have wrongly missed all the built-in Boolean constants.

So my habit would be to check "not equal to 0" so I don't have to check to see whether the int is signed or unsigned. (I'm pretty sure this came up in another thread not all that long ago, but I couldn't find it easily just now.)

-Kevin P

That was something I never knew!

05-13-2020 05:30 PM (last edited on 05-05-2025 11:27 AM by Content Cleaner)

@Kevin_Price wrote:

So my habit would be to check "not equal to 0" ...

Exactly. This is the only correct interpretation of the definition:

"LabVIEW stores Boolean data as 8-bit values. If the value is zero, the Boolean value is FALSE. Any nonzero value represents TRUE."

05-13-2020 07:00 PM

@Kevin_Price wrote:

As I recall, any non-zero integer value would evaluate as True, positive or negative alike. A "greater than 0" comparison would have wrongly missed all the built-in boolean constants.

I know that is the case in C/C++, and LabVIEW follows suit. I can't speak for other languages. So, yes, "Not Equal To Zero" is the proper operation.

There are only two ways to tell somebody thanks: Kudos and Marked Solutions

Unofficial Forum Rules and Guidelines

"Not that we are sufficient in ourselves to claim anything as coming from us, but our sufficiency is from God" - 2 Corinthians 3:5