Error 1 occurred at Read File+ (string): Read File file size too large?

Solved! 08-14-2019 10:26 AM

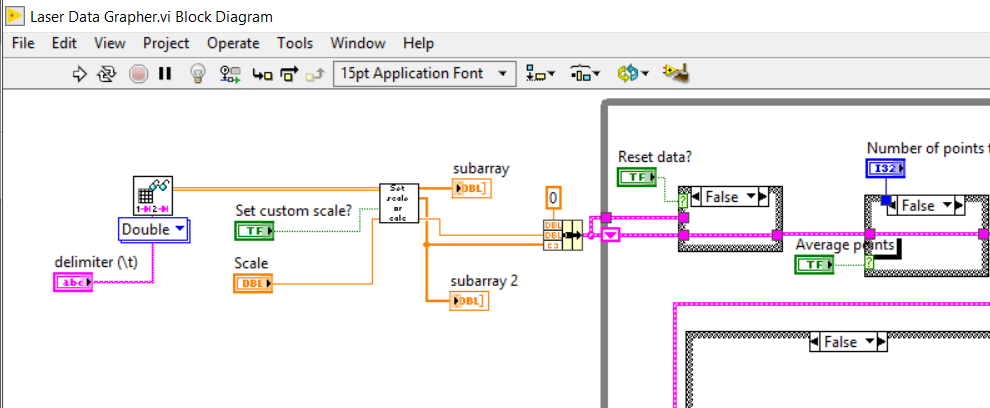

I am getting this error when I try to open a very large file (2.36 GB) using the Read Delimited Spreadsheet VI. There are no illegal characters in the file name; I know this for a fact because I renamed the file to simply "1".

This code works fine with smaller files (still several hundred MB).

Is there a file size limit for this VI? If so, why am I getting an error related to an invalid character in the file name?

08-14-2019 10:58 AM

The Read Delimited Spreadsheet VI reads the entire file at once, which means it will attempt to build a string with 2.36G elements. That exceeds the limit for arrays and strings: an I32 is used for the index and size, which limits you to 2G elements (2^31 - 1). You really should read the file in smaller pieces and process it in chunks.
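LabVIEW is graphical, so here is the chunked-read idea as a minimal Python sketch instead. The function name `iter_row_chunks`, the tab delimiter, and the chunk size are all assumptions for illustration; the point is only that at most one chunk of rows is held in memory at a time.

```python
import csv


def iter_row_chunks(path, rows_per_chunk=100_000, delimiter="\t"):
    """Yield lists of parsed rows, holding at most rows_per_chunk rows at once.

    Hypothetical helper: the delimiter and chunk size are assumptions.
    """
    with open(path, newline="") as f:
        reader = csv.reader(f, delimiter=delimiter)
        chunk = []
        for row in reader:
            chunk.append(row)
            if len(chunk) >= rows_per_chunk:
                yield chunk
                chunk = []
        # Emit whatever is left after the last full chunk.
        if chunk:
            yield chunk
```

Each chunk can be processed (averaged, decimated, appended to a plot buffer) and then discarded before the next one is read, so the total file size no longer matters.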

There are only two ways to tell somebody thanks: Kudos and Marked Solutions

Unofficial Forum Rules and Guidelines

"Not that we are sufficient in ourselves to claim anything as coming from us, but our sufficiency is from God" - 2 Corinthians 3:5

08-14-2019 12:32 PM

Thanks! We likely will switch to a lower resolution acquisition but I will limit the file size if I need to.

08-14-2019 01:30 PM - edited 08-14-2019 01:58 PM

I ran into that error some time ago with data files over 4G.

I ended up rewriting the Read From Spreadsheet VI and making sure that an I64 was used instead of an I32 for all of the byte offsets, etc.

The LabVIEW file functions do support I64 offsets and indexes; it is just the Read From Spreadsheet VI that was never updated to support larger files.

For what it is worth...

[Edit]

And just for the record, that error is the result of the I32 used inside the Read From Spreadsheet VI. It reads as though the issue is the file name, but the actual problem is that the I32 is not large enough.
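To put numbers on the I32 problem, here is a small Python sketch. It assumes the 2.36 GB file from the original post is 2.36 billion bytes (the exact byte count was not given), and it only shows what happens when such a size is forced into a signed 32-bit integer; how the VI handles the out-of-range value internally is not confirmed here.

```python
import ctypes

I32_MAX = 2**31 - 1            # largest value a signed 32-bit integer can hold
file_size = 2_360_000_000      # assumption: 2.36 GB taken as decimal bytes

# Reinterpret the size as a signed 32-bit integer (wraps around, no error raised).
wrapped = ctypes.c_int32(file_size).value

print("fits in I32:", file_size <= I32_MAX)
print("value after wrapping to I32:", wrapped)
```

The size does not fit, and the wrapped value comes out negative, which is the kind of invalid input that can surface as a misleading error further down the call chain.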

Ben

08-14-2019 02:11 PM

@Ben wrote:

I ended up rewriting the Read From Spreadsheet VI and making sure that an I64 was used instead of an I32 for all of the byte offsets, etc.

The LabVIEW file functions do support I64 offsets and indexes; it is just the Read From Spreadsheet VI that was never updated to support larger files.

Unfortunately, the Read Delimited Spreadsheet VI still contains the File I/O VIs that are really only there for pre-LabVIEW 8 compatibility, so we are still stuck with the I32. After some digging, I can see there are a lot of improvements we could make to those VIs if it weren't for the LabVIEW 7 compatibility VIs.

08-14-2019 03:09 PM

I was going to say, I dove all the way down, changing the I32 controls to I64, and it stopped at the Read from Binary File VI. That VI will coerce the I64 value to I32 and generate an error.

I will just break the file up into smaller chunks if I need to.
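For anyone who wants to do the splitting after the fact, a minimal Python sketch follows. The function name `split_by_lines`, the `.partNNNN` naming, and the 500 MB default are assumptions; the only real requirement is that each part stays well under 2^31 - 1 bytes and that no line is cut in half.

```python
def split_by_lines(path, out_prefix, max_bytes=500 * 1024 * 1024):
    """Split a text file into parts of at most about max_bytes, never breaking a line.

    Hypothetical helper: the part naming scheme and default size are assumptions.
    Returns the list of part file paths in order.
    """
    part_paths = []
    out = None
    written = 0
    try:
        with open(path, "rb") as src:
            for line in src:
                # Start a new part for the first line, or when this line would overflow
                # a part that already has content.
                if out is None or (written > 0 and written + len(line) > max_bytes):
                    if out is not None:
                        out.close()
                    part_path = f"{out_prefix}.part{len(part_paths):04d}"
                    part_paths.append(part_path)
                    out = open(part_path, "wb")
                    written = 0
                out.write(line)
                written += len(line)
    finally:
        if out is not None:
            out.close()
    return part_paths
```

A single line longer than `max_bytes` still goes into its own part rather than being split, so the parts can each be read with the unmodified spreadsheet VI.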

Thanks.

08-15-2019 07:01 AM - edited 08-15-2019 07:08 AM

@cmilless wrote:

I was going to say, I dove all the way down, changing the I32 controls to I64, and it stopped at the Read from Binary File VI. That VI will coerce the I64 value to I32 and generate an error.

That is because a LabVIEW string cannot contain more than 2G characters, so you cannot read more than that in one go. If your file contained, for instance, binary double values, you could wire 2^31 - 1 to the count input and the function would attempt to read that many doubles into an array. That would be 16 GB of data, and it would most likely bail out with an out-of-memory error unless you are running 64-bit LabVIEW on a machine with at least 32 GB of memory!

LabVIEW arrays (and strings are really just an array of characters too) contain an I32 that defines the number of elements in the array and therefore an array can never contain more than 2^31 -1 elements in LabVIEW.
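The 16 GB figure follows directly from the element limit. A quick Python check of the arithmetic, assuming 8 bytes per double:

```python
I32_MAX = 2**31 - 1        # maximum number of elements in one array or string
BYTES_PER_DOUBLE = 8       # a double-precision float occupies 8 bytes

total_bytes = I32_MAX * BYTES_PER_DOUBLE
gib = total_bytes / 2**30  # convert to binary gigabytes

print(total_bytes, "bytes, about", round(gib, 2), "GiB")
```

So the limit is on the element count, not the byte count: a string tops out just under 2 GiB, while an array of doubles with the same number of elements would need just under 16 GiB.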

Current high-end hardware is slowly reaching this LabVIEW limit, but for all practical purposes you will almost certainly run into many other problems when trying to read that much data, so the limit is still mostly theoretical.

08-15-2019 07:57 AM

Now to try and tie this up in a nice package and avoid possible conflicts with what Rolf wrote...

Looking back at the file I was using for testing, it was a pseudo-tab-delimited file (pseudo in the sense that it was mostly tab-delimited but also included headers inserted at random intervals) that was 2.7B.

I was able to parse the file byte by byte and convert it into a datalog file and an index file pair. The datalog ended up being 1.8G and I could actually plot the entire thing on one graph. It took a good bit of time to display but it worked.
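A rough Python sketch of that kind of conversion, for anyone curious. This is not the LabVIEW code I used: it streams line by line rather than byte by byte, and the function names, the three-column layout, and the little-endian binary formats are all assumptions. Lines that do not parse as numbers (such as the stray headers) are skipped, and every offset is stored as a 64-bit integer.

```python
import struct


def convert(text_path, data_path, index_path, n_cols=3, delimiter="\t"):
    """Stream a delimited text file into a binary data file plus an offset index.

    Hypothetical layout: each record is n_cols little-endian float64 values,
    and each index entry is one little-endian signed 64-bit byte offset.
    Returns the number of records written.
    """
    rec = struct.Struct(f"<{n_cols}d")
    idx = struct.Struct("<q")
    count = 0
    with open(text_path, "r") as src, \
            open(data_path, "wb") as data, \
            open(index_path, "wb") as index:
        for line in src:
            fields = line.rstrip("\r\n").split(delimiter)
            if len(fields) != n_cols:
                continue  # wrong column count: not a data row
            try:
                values = [float(x) for x in fields]
            except ValueError:
                continue  # non-numeric row, e.g. a header inserted mid-file
            index.write(idx.pack(data.tell()))
            data.write(rec.pack(*values))
            count += 1
    return count


def read_record(data_path, index_path, i, n_cols=3):
    """Fetch record i by looking up its 64-bit offset in the index file."""
    rec = struct.Struct(f"<{n_cols}d")
    with open(index_path, "rb") as index:
        index.seek(i * 8)
        (offset,) = struct.unpack("<q", index.read(8))
    with open(data_path, "rb") as data:
        data.seek(offset)
        return rec.unpack(data.read(rec.size))
```

With fixed-size records the index is strictly redundant, but it mirrors the data file plus index file pairing and would still work if the records were variable length.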

Enough for now...

Ben