Enqueue Timed out no error - ideas?

Solved! 12-07-2021 12:25 PM

I've got a bit of code that has started causing me issues. I'm obtaining a fixed-size queue (the size is determined from runtime params) with a specific name, because using a known name lets me monitor the size externally if I want to.

I'm enqueuing and dequeuing in a fast loop with time-critical priority assigned to it. (I've recently made the enqueue function a subVI, hoping it can run even faster without having to share resources with the main VI that dequeues.)

This is just a function that streams data very fast into my program. As the processing can be laggy, I have this resizable queue to give me a portion of memory as a "buffer" between the raw stream and the processing, to ensure I don't kill the instrument on the other end, which has a tiny output buffer and just throws data out as fast as it can.

The Question is this...

If I'm monitoring the queue size every 1 sec, how can I go from 0 elements to > 6k elements when I can't stream that much in 1 sec?

I'm enqueuing with a 1 ms timeout so I know if the queue is full. If it is, I know my buffer is full and I need to stop my process, but I seem to be stopping when I don't need to.

And this is based on the Timeout flag going TRUE.
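For readers without LabVIEW to hand, the pattern described here can be sketched in Python. This is only a rough analogue, assuming the standard-library `queue.Queue` as a stand-in for a fixed-size LabVIEW queue; `try_enqueue` and the size of 3 are hypothetical names and values chosen for illustration, not anything from the actual VI.

```python
import queue

def try_enqueue(q, item, timeout_s=0.001):
    """Try to enqueue with a short timeout.

    Returns True if the enqueue timed out (the queue stayed full for the
    whole timeout), mirroring the idea of a 'timed out?' output.
    """
    try:
        q.put(item, timeout=timeout_s)
        return False
    except queue.Full:
        return True

# Fixed-size buffer; 3 is an arbitrary example size.
buffer = queue.Queue(maxsize=3)

# Nothing dequeues here, so the buffer fills and later enqueues time out.
flags = [try_enqueue(buffer, n) for n in range(5)]
print(flags)           # [False, False, False, True, True]
print(buffer.qsize())  # 3
```

In this sketch the flag can only go True when the queue really is full for the full timeout, which is the behaviour the producer loop relies on.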

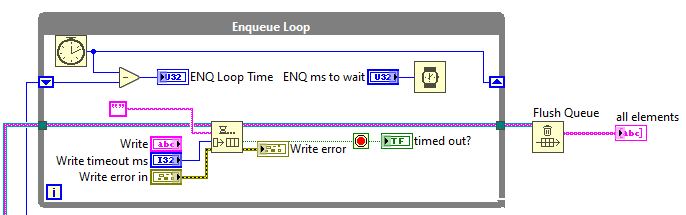

I've made this dummy VI to test this and I can't reproduce the behavior, but all my error logging points to an intermittent fault in this function. (I have error traps on either side of it that produce different messages, and I'm logging the status change but no change of the error cluster from its default status. And I know my logging is working.)

Has anyone seen any reason why the timeout would go TRUE when it's not a timeout?

Thanks

James

12-07-2021 02:17 PM

I would set the size of the queue to -1 and leave the name unwired, as naming a queue is a bad idea most of the time. See if that changes anything. The Enqueue function returns a timeout only if no space became available within the specified timeout or if the function returns an error.

CLA

12-07-2021 05:25 PM - edited 12-07-2021 05:35 PM

First, there is nothing wrong with a named queue. Internally it just returns a copy of the reference to the queue exactly the same as branching a queue reference wire.

Double check the help file. I believe timeout is TRUE if either;

- timeout occurs

- OR, Error In is TRUE AND Timeout in is not negative ( Timeout is always false with Timeout in = -1)
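The two conditions above can be written as a small truth function. This is only a model of the rule as recalled in this post, not something taken from the LabVIEW help; the function and parameter names are hypothetical.

```python
def timed_out_flag(queue_stayed_full, error_in, timeout_in_ms):
    """Model of the 'timed out?' output as described above.

    queue_stayed_full: no space became available within the timeout.
    error_in:          an error was already present on the error input.
    timeout_in_ms:     the wired timeout; negative means wait forever.
    """
    if timeout_in_ms < 0:
        # With timeout in = -1 the flag is described as always False.
        return False
    return queue_stayed_full or error_in

# A 1 ms timeout with an incoming error reports a timeout even though
# the queue itself was never full.
print(timed_out_flag(False, True, 1))    # True
print(timed_out_flag(False, False, 1))   # False
print(timed_out_flag(False, True, -1))   # False
```

If this model is right, a TRUE flag with no logged error upstream would point at a genuinely full queue rather than the error path.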

Dataflow should prevent timeout during any enqueue operation so be more specific about what is really returning a tmo.

That being said, you should probably be enqueuing an array to avoid data loss with a system that has variable latency and relying on a hardware buffer in the acquisition device. We need more info on the project.

"Should be" isn't "Is" -Jay

12-07-2021 05:43 PM

Alternately,

You could dump your producer and consumer loops into Timed Loops (which run in their own execution systems "above time critical priority...") and monitor completed late rather than the queue timeout.

"Should be" isn't "Is" -Jay

12-07-2021 08:28 PM

@JÞB wrote:

First, there is nothing wrong with a named queue. Internally it just returns a copy of the reference to the queue exactly the same as branching a queue reference wire.

Double check the help file. I believe timeout is TRUE if either;

- timeout occurs

- OR, Error In is TRUE AND Timeout in is not negative ( Timeout is always false with Timeout in = -1)

Dataflow should prevent timeout during any enqueue operation so be more specific about what is really returning a tmo.

That being said, you should probably be enqueuing an array to avoid data loss with a system that has variable latency and relying on a hardware buffer in the acquisition device. We need more info on the project.

While technically there is nothing wrong with a named queue, a named queue runs the risk of interfering with another named queue of the same name. On the other hand, a named queue could be an essential part of a scheme to easily access a queue from a plug-in, for instance.

(Mid-Level minion.)

My support system ensures that I don't look totally incompetent.

Proud to say that I've progressed beyond knowing just enough to be dangerous. I now know enough to know that I have no clue about anything at all.

Humble author of the CLAD Nugget.

12-07-2021 08:48 PM

Not quite Mr. Ko

Queues and other "Named" things such as:

- Notifiers

- Dynamic events

- I/O Sessions (VISA, DAQmx, RFmx, etc...)

- Timed Loops

- .....

Are Context Aware! So, "Data" on MyComputer will not collide with "Data" on cRIO1 or "Data" on MyCoollvlibp (you need a public method to access a plug-in's Named object ref).

Still choosing a good name is a good idea 💡.

"Should be" isn't "Is" -Jay

12-08-2021 03:24 AM

Ok, thanks for the feedback...

@Lucian:

You haven't quite understood my intentions here. I need to allow the producer loop to not hang when the consumer loop has caused the queue to fill up. (Well, actually the consumers of the consumer, but that's not important.)

The timeout was added to prevent a fatal crash on an external device when its buffer overflows because the producer loop is not reading it while the queue is full. The queue has a fixed size to prevent a Windows memory overflow and system shutdown if processing gets too laggy. I'm dealing with fast data streams of large data sets and can kill a server-style PC in 15 minutes without this. This is a serious memory management tool!

@Jay

I've already looked at the help (hence the example VI to test). I don't log an error when I get a timeout which leads me to believe that this is a true timeout, as otherwise I would have caught an error in my error log on the input to the enqueue function. Technically this is the acquisition device and the acquisition loop as I stream a Raw TCP string in this loop. Not sure how I put a TCP read (with timeout) and enqueue into a timed loop so I can monitor completed late, but maybe I've missed a trick.

I don't want to use lossy enqueue. That defeats the object of knowing if the queue is full, and it will lose the data I want to process.

@Bilko

I have a plugin architecture and have carefully assigned a queue name here that no-one in their right mind will think of😉

But it allows me to open a reference to the queue by name so I can monitor the queue size externally for debug. (I did have it unnamed, but I named it for debug.)

I close the queue and force release when not streaming, then I reopen the queue for the next stream. (I'm not monitoring the queue size by name at the moment, so the debug VI, if named, would be representative.)

Everything related to this queue sits in one submodule on one program on one PC. (data is pulled in through the producer and passed out through the consumer).

James

12-08-2021 03:58 AM

@billko wrote:

@JÞB wrote:

First, there is nothing wrong with a named queue. Internally it just returns a copy of the reference to the queue exactly the same as branching a queue reference wire.

Double check the help file. I believe timeout is TRUE if either;

- timeout occurs

- OR, Error In is TRUE AND Timeout in is not negative ( Timeout is always false with Timeout in = -1)

Dataflow should prevent timeout during any enqueue operation so be more specific about what is really returning a tmo.

That being said, you should probably be enqueuing an array to avoid data loss with a system that has variable latency and relying on a hardware buffer in the acquisition device. We need more info on the project.

While technically there is nothing wrong with a named queue, a named queue runs the risk of interfering with another named queue of the same name. On the other hand, a named queue could be an essential part of a scheme to easily access a queue from a plug-in, for instance.

A named queue is a global.

Actually, it's worse than a global. It's a named global resource.

I've seen this go wrong. Named queues were used by libraries (in this case without any reason), and some used the same name or a dynamic name (e.g. the name of the caller)... These bugs can be very hard to find, as the symptoms are usually described as "weirdness all over".

They can be 'convenient', but I definitely wouldn't use them unless I had to (and I rarely do).
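To illustrate the kind of collision meant here, a minimal Python sketch follows. It assumes a plain dictionary as the name registry; `obtain_queue` and the name "Data" are hypothetical, and the sketch does not model the per-context scoping described earlier in the thread.

```python
import queue

# Hypothetical process-wide registry: name -> queue.
_named_queues = {}

def obtain_queue(name, maxsize=0):
    """Return the queue registered under `name`, creating it on first use.

    maxsize=0 means unbounded in Python's queue module. If the name already
    exists, the existing queue is returned and maxsize is ignored.
    """
    if name not in _named_queues:
        _named_queues[name] = queue.Queue(maxsize=maxsize)
    return _named_queues[name]

# Two unrelated pieces of code happen to pick the same name.
lib_a = obtain_queue("Data", maxsize=100)
lib_b = obtain_queue("Data")

lib_b.put("unexpected element")
print(lib_a is lib_b)   # True: both hold the same queue
print(lib_a.qsize())    # 1: lib_a now sees an element it never enqueued
```

The second caller silently shares the first caller's queue, which is how a buffer can appear to fill from somewhere unexpected.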

12-08-2021 04:24 AM

A few thoughts in no particular order:

1. Assigning time-critical priority might not be a net advantage. I've mostly found that attempts to micro-manage execution systems and priorities don't tend to pay off overall. There have been exceptions, but often the gain in one area comes with an important loss in another.

2. Is the subvi you made for enqueuing reentrant? Or do you literally only have a single instance and the subvi was only made to allow you to mess with priorities or execution systems?

I'm not a deep expert in this stuff, but I *think* that there would be a potentially significant overhead cost for context-switching if this enqueue call is in a fast loop.

3. Queues are generally an extremely efficient way to transfer ownership of data from one loop to another. But I wonder if there still might be some advantage in limiting the overall "ping rate" at which you interact with the queue via calls to enqueue and dequeue?

I draw from experience with DAQmx where a very common newbie problem is to set up something like a 100 kHz acquisition and then run a "consumer" loop that reads 1 sample at a time. The software loop can't keep up (due to driver call overhead), resulting in a fatal task error for buffer overflow. The solution is to retrieve the same 100 kHz bandwidth of data by making less frequent calls to the driver which each return larger chunks of data. Then it's very easy to support the 100 kHz bandwidth.

I picture some kind of double-buffering scheme where you'd enqueue and dequeue larger chunks of data at lower "ping rate". For example - the enqueue subvi wrapper could build up an internal fixed-size array of 50 elements before enqueuing them.

4. You might be truly up against a system bandwidth limit that simply won't yield to these optimization attempts. Right now you use a 1 msec producer timeout to let you know when to shut it all down. Well, that's lossless for a *while*, but ultimately becomes infinitely lossy after shutdown.

Maybe lossy enqueue (along with an indication of said loss) would be a better (though still imperfect) alternative? Here I picture an incrementing count of consecutive times the lossy enqueue function returns a True value in the output 'overflow?', and then including that count as part of the chunk of data being enqueued. Then your system can keep running instead of shutting down entirely, and your consumer can know where and how much data was skipped.
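Points 3 and 4 can be combined in one rough Python sketch: samples are collected into fixed-size chunks, and each chunk is enqueued lossily together with a count of consecutive overflows. It assumes a single producer, uses `queue.Queue` as a stand-in for the LabVIEW queue, and assumes a lossy enqueue that discards the oldest element to make room; the class and attribute names are hypothetical.

```python
import queue

class ChunkedLossyProducer:
    """Collect samples into chunks and enqueue them without ever blocking."""

    def __init__(self, q, chunk_size=50):
        self.q = q
        self.chunk_size = chunk_size
        self.pending = []
        self.consecutive_overflows = 0

    def add(self, sample):
        """Buffer one sample; enqueue the chunk once it is full."""
        self.pending.append(sample)
        if len(self.pending) == self.chunk_size:
            self._enqueue_chunk(self.pending)
            self.pending = []

    def _enqueue_chunk(self, chunk):
        dropped = False
        if self.q.full():
            try:
                self.q.get_nowait()   # discard the oldest chunk to make room
                dropped = True
            except queue.Empty:
                pass                  # the consumer emptied the queue meanwhile
        # Count overflows in a row; reset as soon as one enqueue fits cleanly.
        self.consecutive_overflows = self.consecutive_overflows + 1 if dropped else 0
        # Safe with a single producer: there is now at least one free slot.
        self.q.put_nowait((self.consecutive_overflows, chunk))

buffer = queue.Queue(maxsize=2)           # tiny example size
producer = ChunkedLossyProducer(buffer, chunk_size=50)
for n in range(200):                      # 4 chunks, nothing dequeues
    producer.add(n)
print(buffer.qsize())                     # 2
```

The consumer dequeues `(count, chunk)` pairs, so a non-zero count tells it that data was discarded and roughly how much, while the queue interaction rate drops by the chunk size.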

-Kevin P

12-08-2021 04:29 AM - edited 12-08-2021 04:37 AM

Why not flush the queue instead of reading it?
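As a rough Python analogue of flushing, assuming `queue.Queue` as the stand-in and a hypothetical helper named `flush`, the consumer takes everything currently in the queue in one pass rather than one element per iteration:

```python
import queue

def flush(q):
    """Remove and return every element currently in the queue, oldest first."""
    items = []
    while True:
        try:
            items.append(q.get_nowait())
        except queue.Empty:
            return items

q = queue.Queue(maxsize=10)
for n in range(3):
    q.put(n)

print(flush(q))    # [0, 1, 2]
print(q.empty())   # True
```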

I'm not entirely sure what it is you want to do, and the example doesn't reproduce the problem (as you mentioned).

If you want to enqueue until the buffer is full (not sure if this is what you want or if it's the problem you're seeing), and then dequeue the data, why not synchronize the read after the write loop?

Why name this queue? If the queue is filling faster than you expect, could there be another loop with a queue of the same name?