Consumer Producer Loops

06-27-2018 11:19 PM

Hi all,

Background: I want to stream data to a binary file from a Keysight M9203A high-speed digitizer sampling at 200 MSPS (= 400 MBPS) on four synchronized channels.

Procedure followed: After fetching 200M samples from all the channels, the producer loop writes them to four individual queues. The consumer loop dequeues all four queues, builds an array, and writes the array to a TDMS or binary file.

Problem/optimization needed: Because the data size is very large, every new array takes up a large amount of system memory.

In the producer loop I have avoided any additional large array by writing the data fetched from the instrument directly to the respective queue.

However, in the consumer loop I have to build an array of data from all four channels before writing it to a TDMS file.

Is there any way I can avoid building this array as well?

Looking forward to your valuable comments.

thanks

jayant

06-28-2018 01:12 AM - edited 06-28-2018 01:12 AM

06-28-2018 04:49 AM

Thanks GerdW,

Your suggestion of divide and conquer could work very well if sampling from the device were continuous. However, in my case:

- Sampling is trigger-based, and the trigger occurs at random times.

- The application needs to capture the signal for 1 s after the trigger (= 200M samples at 200 MSPS).

- The device acquires and stores the specified number of samples only once per trigger event. It has internal memory to store the samples.

- The application must fetch all the samples (as specified during acquisition) at once. Sequentially reading smaller numbers of samples is not allowed.

Therefore I don't see any way to put smaller blocks of sample data into my queue in the producer loop.

Looking forward.

06-28-2018 05:25 AM

hi jayant,

in this case maybe you could try a dedicated global/FGV/AE (functional global variable / action engine) that holds an array initialized to the size needed,

then overwrite this array with new data and read from it to write to tdms.

you would have to come up with some logic to ensure your data isn't overwritten before you could write it to tdms.

perhaps a state machine that jumps between the states of acquire and write.

this at least would help with the memory problem.

regards

If Tetris has taught me anything, it's errors pile up and accomplishments disappear.
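The idea above (one buffer allocated up front, reused for every record, with a state machine alternating between acquire and write) can be sketched in text form. LabVIEW is graphical, so this is a Python analogy rather than the poster's code: `fetch_into`, `acquire_and_write`, and the small `SAMPLES` value are hypothetical stand-ins, and a plain binary file stands in for the TDMS file.

```python
from array import array

SAMPLES = 1_000  # stand-in for the 200M samples per record mentioned in the thread


def fetch_into(buf, trigger_index):
    """Hypothetical stand-in for the instrument fetch: overwrites buf in place."""
    for i in range(len(buf)):
        buf[i] = (trigger_index + i) % 1000


def acquire_and_write(num_triggers, path, samples=SAMPLES):
    """Alternate between 'acquire' and 'write' so the buffer is never
    overwritten before it has been written to disk."""
    buf = array("h", [0]) * samples  # allocated once, reused for every record
    state = "acquire"
    written = 0
    with open(path, "wb") as f:
        while written < num_triggers:
            if state == "acquire":
                fetch_into(buf, written)  # new data replaces the old in place
                state = "write"
            else:  # state == "write"
                buf.tofile(f)  # write the record before the next acquire
                written += 1
                state = "acquire"
    return written
```

Because the two states strictly alternate, no locking is needed, at the cost of not acquiring while a write is in progress.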

06-28-2018 05:53 AM

Okay.

Meanwhile I have noticed an unexpected incremental memory growth during the TDMS advanced write operation. Altogether I am exceeding 14 GB of RAM while writing the TDMS file (400 MB x 4 ch).

I have gone through a few earlier posts, such as

https://knowledge.ni.com/KnowledgeArticleDetails?id=kA00Z0000019MfNSAU

but with no success.

Any help on this?
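For scale, the figures quoted in this thread can be checked with a few lines of arithmetic. This is only a back-of-the-envelope sketch: the 2 bytes per sample is an assumption inferred from "200 MSPS = 400 MBPS", and decimal units (1 GB = 10^9 bytes) are assumed for the 14 GB figure.

```python
SAMPLE_RATE = 200_000_000  # samples per second per channel (from the thread)
CAPTURE_SECONDS = 1        # capture length after the trigger (from the thread)
CHANNELS = 4               # synchronized channels (from the thread)
BYTES_PER_SAMPLE = 2       # assumption: implied by 200 MSPS = 400 MBPS

per_channel_bytes = SAMPLE_RATE * CAPTURE_SECONDS * BYTES_PER_SAMPLE
total_bytes = per_channel_bytes * CHANNELS

observed_bytes = 14 * 10**9  # "exceeding 14 GB of RAM", decimal GB assumed
ratio = observed_bytes / total_bytes

print(f"per channel: {per_channel_bytes / 1e6:.0f} MB")
print(f"all channels: {total_bytes / 1e9:.1f} GB")
print(f"observed RAM / raw data: {ratio:.2f}x")
```

Under these assumptions the raw record is 1.6 GB, so the observed memory use is several times the size of the data itself, which points at extra copies or buffering rather than the record alone.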

06-28-2018 06:04 AM

Maybe I'm missing something, but this sounds kind of straightforward. There's no need to re-build a bigger array in your consumer.

Let the producer stuff the entire dataset into the queue(s) all at once. Over in the consumer, you can pass each single channel's dequeued array directly to TDMS Write (preventing further memory allocation). You could also work your way through a channel's data in smaller chunks using Array Subset -- the compiler may be able to do this without copying the data subset but even if it must make a copy, you can limit the memory size being used to something more readily available than 200M samples.

-Kevin P
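As a rough text-language analogy of the suggestion above, the following Python sketch dequeues one whole-channel record per queue and writes it out in smaller chunks without first merging the channels into a combined array. It is not LabVIEW code: `consume`, `CHUNK_SAMPLES`, and the file-like outputs are hypothetical, with each output standing in for one per-channel TDMS Write. A `memoryview` slice plays the role of Array Subset here and does not copy the underlying data.

```python
import queue
from array import array

CHUNK_SAMPLES = 256  # hypothetical chunk size, in samples


def consume(channel_queues, outputs, chunk_samples=CHUNK_SAMPLES):
    """Write each channel's record to its own output, chunk by chunk."""
    for q, out in zip(channel_queues, outputs):
        record = q.get()                        # whole record for one channel
        view = memoryview(record).cast("B")     # byte view of the record, no copy
        step = chunk_samples * record.itemsize  # chunk size in bytes
        for start in range(0, len(view), step):
            out.write(view[start:start + step])  # slicing a view does not copy
```

A usage example would put one `array("h", ...)` per channel on four `queue.Queue` objects and pass four open binary files as `outputs`.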

06-28-2018 06:22 AM

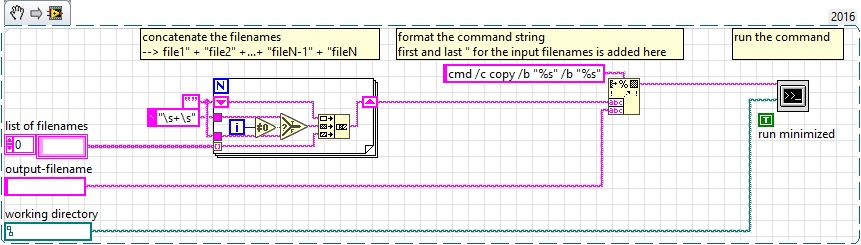

regarding the memory growth when using tdms, you could rotate tdms files, and in the end (or somewhere in parallel) concatenate them into one file.

if you are on windows you could use something like this:


06-28-2018 06:30 AM

Hi Kevin,

I understand that TDMS Write can take a 1D array (a single channel in my case) or a 2D array (all four channels combined). Earlier, when I said I had to combine all the channels and build a new array, I was referring to the 2D array, which causes memory allocation.

You are now suggesting writing each channel's data directly to TDMS, i.e. a 1D array write. Does this imply calling the TDMS Write VI four times (= number of channels) sequentially?

Yes, this method works. However, the problem has now shifted to the incremental memory growth I mentioned earlier:

https://forums.ni.com/t5/LabVIEW/Consumer-Producer-Loops/m-p/3810632/highlight/true#M1076077

06-28-2018 06:37 AM

@jwscs wrote:

regarding the memory growth when using tdms, you could rotate tdms files, and in the end (or somewhere in parallel) concatenate them into one file.

if you are on windows you could use something like this:

Hi,

Sorry, but this is outside what I am familiar with. Could you please elaborate?

thanks

06-28-2018 06:47 AM - edited 06-28-2018 06:49 AM

i had a similar problem when writing for a long time, or writing large amounts of data, to one TDMS file.

so i wrote smaller tdms files, closed the file/reference and started a new tdms file.

since you don't want many tdms files but one, you have to combine them somehow.

the binary copy does exactly this, it essentially concatenates multiple tdms files into one.

since this is not complicated, you can try it out easily, e.g. start a new tdms file (and close the old one) for each acquisition.

then at some point you concatenate the files, et voilà you have one tdms file,

and your memory growth should be more or less alleviated.

EDIT: you can save the .png and then drag+drop it onto a block diagram

the last icon is the "System Exec.vi"
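The snippet in the image is not reproduced in text here. As a hedged illustration of the binary concatenation described above, the following Python sketch appends several files byte for byte into one output file. The function name `concatenate` and the file names in the usage note are hypothetical, and it relies on the claim made in this post that TDMS files joined this way form one valid TDMS file.

```python
import shutil


def concatenate(part_paths, out_path, buffer_size=1024 * 1024):
    """Append each part to out_path in order, copying in fixed-size blocks
    so that no part has to fit in memory at once."""
    with open(out_path, "wb") as out:
        for part in part_paths:
            with open(part, "rb") as src:
                shutil.copyfileobj(src, out, buffer_size)
```

For example, `concatenate(["acq_000.tdms", "acq_001.tdms"], "combined.tdms")` would join two per-acquisition files after both have been closed.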
