Add full support of 128-bit timestamps.

Hi all,

the 128-bit fixed point fractional format of the timestamp type is missing support in primitives.

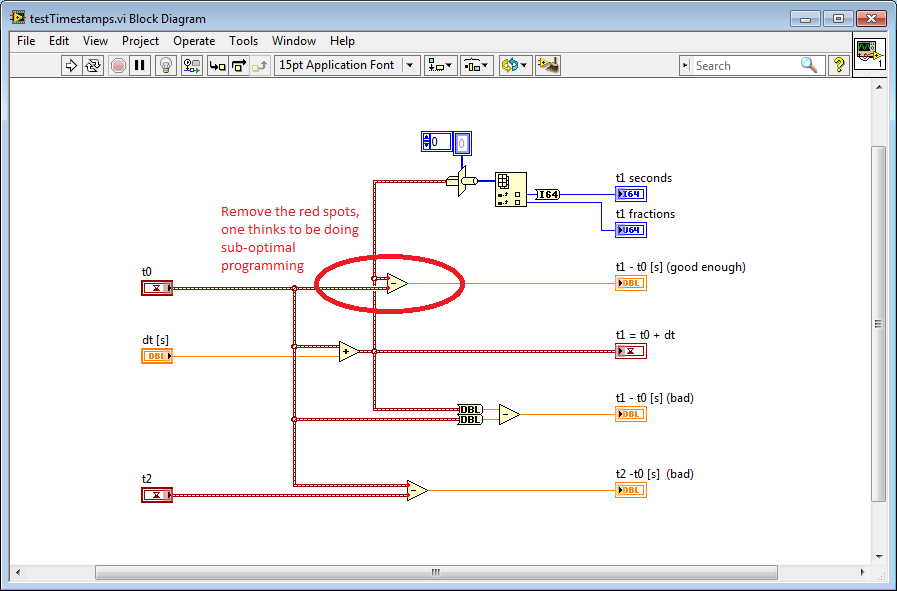

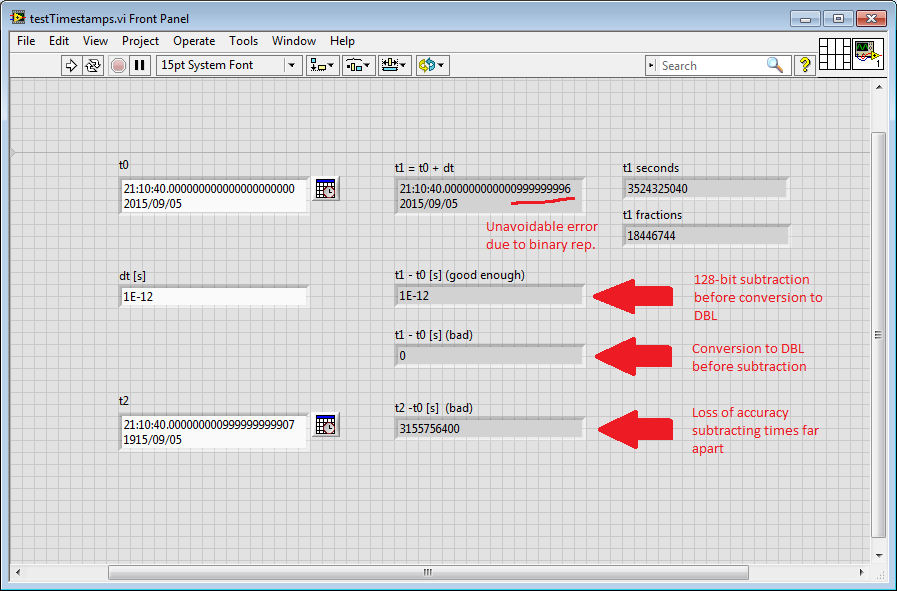

Often the user needs to fall back to the double representation of the timestamp, for example to measure the difference between two timestamps. This can silently lose accuracy if one is not aware of what is going on under the hood. See the discussion here and the example below.

In particular the following should be improved:

1) There is an asymmetry between the "plus" operator and the "difference" operator. If we accept that the type of a time difference is DBL, then subtracting two timestamps to get a double is just as correct as adding a double to a timestamp. Why don't we get any warning when we sum "apples with oranges", while we get harassed when subtracting things of the same type? That warning was enough to make me try to work out for myself what was going on inside that block. I would say that only a bad compiler warns the programmer about something that is perfectly legal.

2) However, I prefer a second option: the 128-bit fixed-point fractional format should be printable both as absolute and as relative time, as is already the case for the DBL representation of time. The output of the difference operator applied to two Timestamps would then again be a Timestamp, and the sum of two Timestamps should be allowed as well. As debated in the discussion above, users should be aware enough of the facts of Nature to understand that the relative time representation is more appropriate when one subtracts two numbers that one interprets as absolute timestamps. In fact, I guess users were managing time differences pretty well when they had only the DBL representation of timestamps... Also, nothing in the language stops me from showing any DBL number as an absolute time. Why should the language prevent me from showing a signed 128-bit number as a relative time?
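To make option 2 concrete, here is a minimal sketch in Python (not LabVIEW) of what displaying a signed 128-bit fixed-point value as a relative time could look like. It assumes the value is held as a single signed integer with 64 fractional bits; the name `format_relative` and the `HH:MM:SS.fff...` output format are hypothetical choices for illustration, not an existing LabVIEW function or display format.

```python
FRAC_BITS = 64  # assumption: 64 integer bits (seconds) + 64 fractional bits


def format_relative(raw: int, digits: int = 9) -> str:
    """Format a signed fixed-point duration (raw / 2**64 seconds) as
    [-]HH:MM:SS.fraction, truncating the fraction to `digits` places."""
    sign = "-" if raw < 0 else ""
    magnitude = abs(raw)
    seconds = magnitude >> FRAC_BITS
    frac = magnitude & ((1 << FRAC_BITS) - 1)
    # Scale the binary fraction to decimal digits using integer math only.
    frac_digits = (frac * 10**digits) >> FRAC_BITS
    hours, rem = divmod(seconds, 3600)
    minutes, secs = divmod(rem, 60)
    return f"{sign}{hours:02d}:{minutes:02d}:{secs:02d}.{frac_digits:0{digits}d}"


# 1 h 1 min 1.5 s as a raw fixed-point value, and its negation.
duration = (3661 << FRAC_BITS) + (1 << (FRAC_BITS - 1))
print(format_relative(duration))
print(format_relative(-duration))
```

The point of the sketch is only that a signed difference of two timestamps carries everything needed for a relative-time display, with no detour through DBL.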

The example below shows that the difference operator applied to Timestamps performs the subtraction in 128 bits before the conversion to double, so internally the difference timestamp is already calculated. Note that the inconvenient way of taking the difference (converting to DBL before subtracting) is not accurate enough even for microsecond resolution.
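The same effect can be reproduced outside LabVIEW. The following Python sketch models a timestamp as a 128-bit fixed-point integer (64 bits of whole seconds, 64 bits of fraction) and compares the two orders of operation. The helper names `make_ts` and `to_double` are hypothetical, and the seconds value is simply an illustrative count of a few billion seconds since the epoch.

```python
from fractions import Fraction

FRAC_BITS = 64  # assumption: 64 integer bits (seconds) + 64 fractional bits


def make_ts(seconds: int, frac: Fraction) -> int:
    """Pack whole seconds and a fraction in [0, 1) into one fixed-point integer."""
    return (seconds << FRAC_BITS) + int(frac * (1 << FRAC_BITS))


def to_double(raw: int) -> float:
    """Convert a fixed-point value to a 64-bit float (rounds to nearest)."""
    return raw / (1 << FRAC_BITS)


# Two timestamps 100 ns apart, about 3.5e9 seconds after the epoch.
t0 = make_ts(3_500_000_000, Fraction(0))
t1 = make_ts(3_500_000_000, Fraction(1, 10_000_000))

# Subtract in fixed point first, then convert: the 100 ns survive.
diff_fixed = to_double(t1 - t0)

# Convert to double first, then subtract: the 100 ns are rounded away.
diff_double = to_double(t1) - to_double(t0)

print(diff_fixed)   # approximately 1e-07
print(diff_double)  # 0.0
```

At this magnitude a double has a spacing of 2^-21 s (about 0.48 µs) between adjacent values, so any sub-microsecond detail is lost as soon as an absolute timestamp is converted, before the subtraction even happens.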

Sincerely,
