DIAdem 2017 and memory usage

02-13-2018 09:17 AM

Hello,

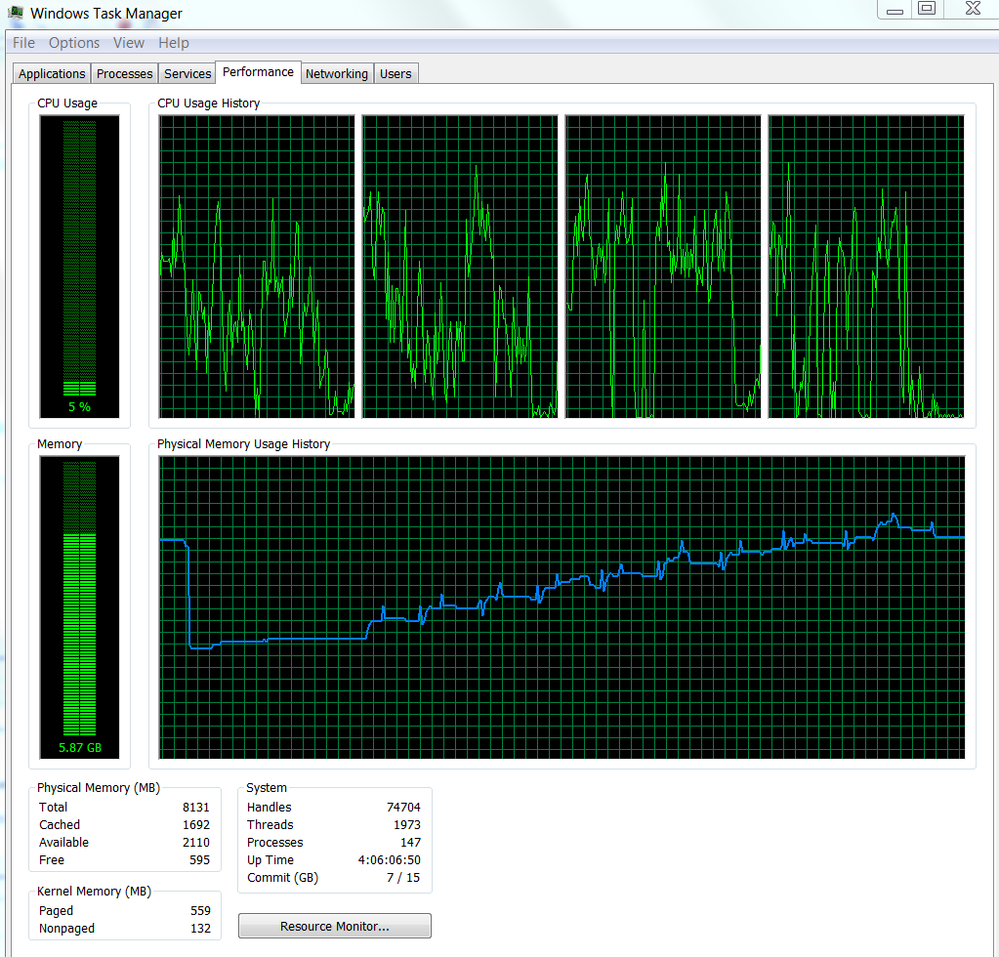

I use a DIAdem script to load files sequentially into the Data Portal and process them one by one. After processing a file, I save it, clear the Data Portal, and load the next one. Despite clearing the Data Portal, DIAdem appears to hang on to the memory, with the memory usage increasing with each file, as shown here.

Is there some way I can reset the memory after clearing the Data Portal? At the moment I appear to run into memory issues if I process many files.

Thank you!

Best regards,

Thomas

02-13-2018 02:04 PM

Can you add some information?

- What files do you load? (Plugin, e.g. TDM, TDMS, CSV)

- How big are the files?

- What operations do you use when processing the files?

- Your picture starts at a very high point and drops to a smaller value before rising again. What happened before the decrease in memory?

02-14-2018 01:56 AM

Hi Andreas,

- The files are of the type TDM.

- I use DataFileLoadSel to load 24 channels from each of 10 files. Processing consists of concatenation, finding averages, sorting, and creating new channels with summarized and grouped data. The main commands used are DataFileLoadSel, chnConCat, chnMultipleSortExt and ChnEventFind.

- A processed file has a typical TDM size of about 21000 KB and a TDX size of 101000 KB.

- The high memory point was from the previous runs of DIAdem. I need to shut down DIAdem to release the memory.

In fact, DIAdem is crashing on us without warning, and we suspect memory issues.

Thanks for the support.

Thomas

02-14-2018 03:02 AM

UPDATE

The DataFileLoadSel command locks the source folder. Even when the processing is finished and I clear the Data Portal, it is impossible to delete the original data files or their folders until I close DIAdem. Closing DIAdem also drops the memory usage back to the normal baseline level. The command DataFileLoad does not do this. Is this the expected behavior for DataFileLoadSel?

Thanks again

02-14-2018 05:35 AM

If the files are still locked after the Data Portal is cleared, something went wrong.

After Data.Root.Clear, all files should be deletable.

Could you please try the following:

Go to: Settings->DIAdem Settings->Navigator->Loading Behavior

Change "Load bulk data on modification of channel data" to "Always load bulk data".

Maybe it is easier for you to set

BulkDataLoadingMode = eBulkDataLoadingImmediately

ChnCharacteristicsUpdate = "Automatic"

at the beginning of your script. Does the effect remain?

P.S.: Are you able to reproduce the behavior where the file is no longer deletable after Data.Root.Clear was called, and could you provide a script and files to us? We would be very interested because this is potentially causing your memory issue.

02-14-2018 06:42 AM

Hi Andreas,

"Always load bulk data" does not solve the issue. The files are still locked and the memory usage stays high even after I clear the Data Portal.

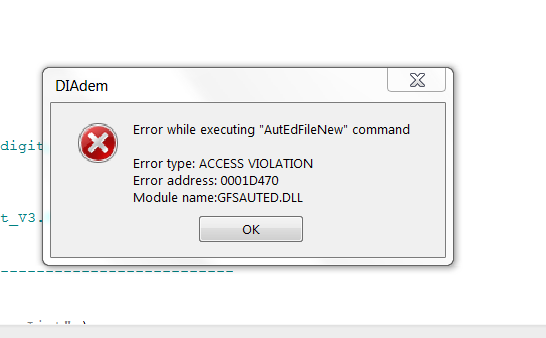

Another point: if at this stage I try to open a new file (script), DIAdem immediately crashes with this error message.

The memory behavior, the file locking, and the crash are all repeatable. I would need approval to share data files with you...

02-14-2018 07:41 AM

UPDATE

Hi Andreas,

Attached are stripped-down versions of the scripts I am using. They produce exactly the same issues with the memory etc. Hope it helps.

Script1 calls Script2.

Thanks

Thomas

02-14-2018 12:38 PM

This is the method I came up with and it works pretty well:

Dim File, iCount, Directory

'-- Set Directory Path

Directory = "C:\_Processed Data"

'-- Load All TDM Files from Directory

File = DirListGet(Directory, "*.TDM", "filename", "FullFilenames")

For iCount = LBound(File) To UBound(File)

Call DataFileLoad(File(iCount))

'-- Calculations

Call ChnIntegrate("[1]/Time","[1]/Pelvis RY","[1]/Pelvis Rotation RY","TrapezoidalRule",0,0,0)

'-- Save and Clear

Call DataFileSave(Directory&"\"&Data.Root.Name&".TDM","TDM")

Call Data.Root.Clear()

Next

02-15-2018 12:48 AM

Hi TamerDTS,

Thanks for the response. I normally use a method similar to yours and it works OK. However, if I use DataFileLoadSel instead of DataFileLoad, I get the memory issues described. I could use DataFileLoad, but then I would load many channels that I do not need and end up with a very large file.

Thanks

Thomas

02-15-2018 08:44 AM

CORRECTION: DIAdem 2015, NOT 2017

Please note that I am using DIAdem 2015 SP2, not DIAdem 2017 as I wrote initially.

Apologies to all.