How to query DAQmx task state in LabVIEW?

04-11-2016 02:27 PM

I do not have a trigger in my application. Start, Read, and Stop (all implemented as action engines) run in sequence inside a case structure; the program then moves on and later comes back to acquire another 1000 samples.

It is a 'FINITE' sample acquisition that runs repeatedly. I will try to get a snippet posted soon.

04-12-2016 10:22 AM - edited 04-12-2016 10:31 AM

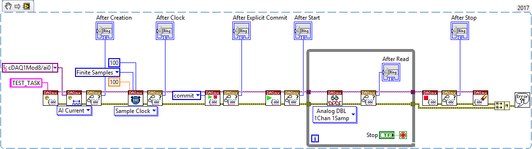

Here is the VI I am using for testing the concept. It is a modified version of the finite input example.

Without using control task and commit - Loop - 215-220ms

With control task and commit - Loop - 170-175ms

Additional Info:

The funny thing is there is a difference between simulated HW and actual HW. The above timing holds when I have my sensors connected to the AI module.

When I use simulated HW, these are the times.

Without using control task and commit - Loop - 145-150ms

With control task and commit - Loop - 105-110ms (This time would be ideal for my application, but I don't know why the actual HW adds about 70ms)

04-12-2016 10:33 AM

Thanks, but I'm still running LV 2013 and can't open the vi you posted. Can you save back to 2013 or post a screenshot?

-Kevin P

04-12-2016 10:41 AM

You should be able to open this in LV2013 now.

Thanks for your support!

04-12-2016 04:28 PM

At 10 kHz sampling, reading 1000 samples at a time, I regularly get loop times of 101-103 msec.

Using the "commit" first seemed to give me more 101's while skipping it seemed to give more 103's, but there really wasn't a lot of difference.
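
To put those loop times in context, here is a small arithmetic sketch in Python. It only uses the numbers quoted above (10 kHz, 1000 samples per read, 101-103 msec loops); the function names are made up for illustration.

```python
def acquisition_ms(samples_per_read, sample_rate_hz):
    """Time the hardware needs just to clock in the samples, in milliseconds."""
    return samples_per_read * 1000 / sample_rate_hz


def overhead_ms(loop_ms, samples_per_read, sample_rate_hz):
    """Loop time left over after subtracting the pure acquisition time."""
    return loop_ms - acquisition_ms(samples_per_read, sample_rate_hz)


# 1000 samples at 10 kHz take 100 ms to acquire no matter what.
print(acquisition_ms(1000, 10_000))   # 100.0

# So a 101-103 msec loop leaves only 1-3 msec for stop/restart and everything else.
print(overhead_ms(101, 1000, 10_000), overhead_ms(103, 1000, 10_000))   # 1.0 3.0
```

The same subtraction applies to any loop time, as long as the sample rate and read size for that run are known.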

I tried both a PCI M-series card and a PCIe X-series card. I put some sequencing in your loop to make sure the tick count query happens at the end of the loop. I tried both finite and continuous sampling. None of those changes mattered in an obvious way. There were only a few extra msec consumed by doing a stop and restart. Never anything close to the 75 or 100 msec that you described.

Wondering if you're using a USB or cDAQ board b/c my PCI and PCIe multifunction boards both support much lower overhead looping. Attached is a modified version that measures only the stop/restart time with a higher resolution clock. I kept seeing about 1.1 msec with the commit, 1.5 msec without.
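
For anyone who cannot open the attachment, the idea of timing only the stop/restart segment with a higher resolution clock can be sketched in Python roughly like this. It is not the attached VI: `stop_and_restart` is a hypothetical stand-in that does nothing, and real code would stop and restart the task there.

```python
import time


def time_segment(segment, repeats):
    """Call `segment` `repeats` times and return the elapsed seconds of each call.

    Only the call itself sits between the two clock reads, so the read
    (which includes the fixed acquisition time) is kept out of the number.
    """
    elapsed = []
    for _ in range(repeats):
        t0 = time.perf_counter()      # high resolution monotonic clock
        segment()
        elapsed.append(time.perf_counter() - t0)
    return elapsed


def stop_and_restart():
    # Hypothetical stand-in: a real version would stop the task and start it again.
    pass


timings = time_segment(stop_and_restart, repeats=5)
print([f"{t * 1e3:.3f} ms" for t in timings])
```

Timing just this segment separates the reconfiguration overhead from the 100 msec the samples take to arrive, which a millisecond tick count around the whole loop cannot do cleanly.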

-Kevin P

04-12-2016 04:30 PM - edited 04-12-2016 04:40 PM

Thank you for the response. I will try your modified version.

I am using a cDAQ 9178 chassis with 4 modules in it (NI 9213, NI 9229, NI 9227 and NI 9375)

RESULTS:

Without Commit - I see config time - 0.133xxx to 0.150xxx (between 133ms and 150ms mostly around 133ms)

With Commit - I see config time - 0.071xxx to 0.073xxx (between 71ms and 73ms)

I am still surprised by such high latency in transferring data from the DAQ buffer to the data bus. I thought the cDAQ chassis was quite robust; obviously not quite the cRIO, but I would have expected up to 25ms latency. 60-75ms seems quite large.

04-13-2016 06:17 PM

I happened to be on a cDAQ for other stuff this afternoon so I tried out that last vi. I saw about 40 msec with the "commit", more like 85-90 msec without.

This is a 9178 USB cDAQ chassis and a 9205 module, on the same PC I used with the plugin boards previously. Just an FYI to confirm what you're seeing.

-Kevin P

04-14-2016 08:40 AM

@Kevin_Price wrote:

> I happened to be on a cDAQ for other stuff this afternoon so I tried out that last vi. I saw about 40 msec with the "commit", more like 85-90 msec without.
>
> This is a 9178 USB cDAQ chassis and a 9205 module, on the same PC I used with the plugin boards previously. Just an FYI to confirm what you're seeing.
>
> -Kevin P

Thank you so much for confirming what I see. I wonder if USB 3.0 would reduce that time. But, for now, I am going to park this issue.

Regards,

Vijay

06-22-2021 12:06 PM

Disclaimer: I know this is an old post. I wasn't sure whether I should create a new one and reference this one, but I believe this technically answers the post's question and I wanted to share my solution. (Please advise)

I actually had this question myself a while ago. I was also pretty curious as to why there was a way to set states through the API but no explicit get method for it.

Like OP mentioned, there's actually a function in the nilvaiu.dll called:

int32_t DAQGetTaskState();

The name is pretty self-explanatory; the only issue is that the function's parameters/signature are unknown, and since it is undocumented, they didn't know how to use it.

A bit of background: I looked at the C API of DAQmx and at other function calls in the LabVIEW DAQmx API, and then tried a signature that matched the existing usage of similar functions. (At least according to me)

This is what ended up working for me:

int32_t DAQGetTaskState(uintptr_t taskID, int32_t *mode, int32_t incomingStatus, LStrHandle extendedErrorInfo);

I just changed mode to a pointer for reading like in other calls/similar functions. (Pretty much just a guess/luck)
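
To make the "mode as a pointer" idea concrete, here is a sketch in Python's `ctypes` that mirrors the guessed signature above. It does not load or call the real DLL. Several things are assumptions: the default C calling convention, `c_size_t` standing in for `uintptr_t`, the `LStr` layout (taken from how LabVIEW's extcode.h declares strings), and the stand-in function with its placeholder value, which is arbitrary and not a real task state.

```python
import ctypes


class LStr(ctypes.Structure):
    # LabVIEW string: a 32-bit length followed by the bytes (declared with 1 here).
    _fields_ = [("cnt", ctypes.c_int32), ("str", ctypes.c_ubyte * 1)]


# A LabVIEW handle is a pointer to a pointer.
LStrHandle = ctypes.POINTER(ctypes.POINTER(LStr))

# Assumption: cdecl calling convention; the real DLL may differ.
DAQGetTaskStateProto = ctypes.CFUNCTYPE(
    ctypes.c_int32,                  # return value: status code
    ctypes.c_size_t,                 # uintptr_t taskID (same width on common platforms)
    ctypes.POINTER(ctypes.c_int32),  # int32_t *mode, written by the callee
    ctypes.c_int32,                  # int32_t incomingStatus
    LStrHandle,                      # LStrHandle extendedErrorInfo
)

PLACEHOLDER_STATE = 3  # arbitrary value for the demo, NOT a documented state code


@DAQGetTaskStateProto
def fake_get_task_state(task_id, mode_ptr, incoming_status, error_info):
    # Hypothetical stand-in for the DLL function: write the result through the pointer.
    mode_ptr[0] = PLACEHOLDER_STATE
    return 0


mode = ctypes.c_int32(-1)
status = fake_get_task_state(0, ctypes.byref(mode), 0, None)
print(status, mode.value)
```

The point is only the shape of the call: because `mode` is passed by pointer, the callee can hand a value back, which is what makes the function a "get" instead of a "set".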

I'm sharing the SubVI here:

A few notes/info:

- I've tested the VI and it works. I've managed to query the state of the tasks I've run. However, I've only tested this in a single cDAQ chassis (9178) and a digital output module (9472). There's no guarantee it would work in other systems, which brings me to the next note.

- This is an undocumented function of DAQmx, so as far as I can tell there is no official support and I would not rely on it. I'm using it for educational purposes.

- The mode ring output includes 2 other values that appear in the C API in "DAQmxTaskControl", so not just the 5 states in the action input of DAQmx Control Task.vi

(I'm assuming this really is undocumented so if it turns out it actually is documented and I didn't find it, then I goofed. I'm still a noob overall)

06-23-2021 04:25 PM - edited 06-23-2021 04:31 PM

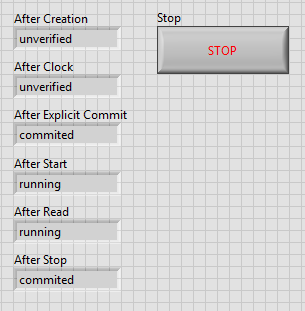

Ok. So somehow I goofed in a really dumb way.

So I tested with more hardware and realized that the constants I'm using are wrong since those are for setting the states. There is no state such as un-reserved. The actual states as described in the documentation are the following:

- Unverified

- Verified

- Reserved

- Committed

- Running
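
These five states also help explain the commit timings earlier in the thread. Below is a toy Python model, not DAQmx code. It assumes, as I understand the documented task state model, that Start walks the task up through any states it has not reached yet and that Stop drops it back to the state it was in before Start. The ordinals are for ordering only and are not the values the DLL returns, and transition counts are not timings.

```python
from enum import IntEnum


class TaskState(IntEnum):
    # Ordinals exist only so this toy model can order the states.
    UNVERIFIED = 0
    VERIFIED = 1
    RESERVED = 2
    COMMITTED = 3
    RUNNING = 4


class ToyTask:
    def __init__(self):
        self.state = TaskState.UNVERIFIED
        self._before_start = TaskState.UNVERIFIED
        self.transitions = 0

    def _move_to(self, target):
        # Step one state at a time, counting every transition.
        step = 1 if target > self.state else -1
        while self.state != target:
            self.state = TaskState(self.state + step)
            self.transitions += 1

    def commit(self):
        self._move_to(TaskState.COMMITTED)

    def start(self):
        self._before_start = self.state
        self._move_to(TaskState.RUNNING)

    def stop(self):
        self._move_to(self._before_start)


def count_transitions(cycles, commit_first):
    task = ToyTask()
    if commit_first:
        task.commit()
    for _ in range(cycles):
        task.start()
        task.stop()
    return task.transitions


print(count_transitions(10, commit_first=False))  # 80
print(count_transitions(10, commit_first=True))   # 23
```

In this model an explicit commit means every Start/Stop pair only crosses the Committed/Running boundary, instead of redoing verify, reserve, and commit each time. That is consistent with the shorter loop times reported with commit, though the model says nothing about how long each transition takes on a given chassis.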

I've tested again and NOW I'm seeing consistent behavior. (I still wouldn't rely on it though since it's undocumented)

I'm editing the SubVIs above.

I've also attached a small example/test for reference below:

These were my results:

Edit: Ok. So I guess I can't edit the previous post. I've attached the correct one here:

Edit 2: This is embarrassing. Updated documentation.