Algorithm behind IMAQ Learn calibration: Documentation, flaws and limitations

03-01-2011 07:28 AM

First, I found no place where the algorithm used by IMAQ Learn Calibration Template is documented. Is it just my impression, or is something being swept under the carpet? The question has been asked before in the forum (this thread, this thread), but I'm not aware of an answer.

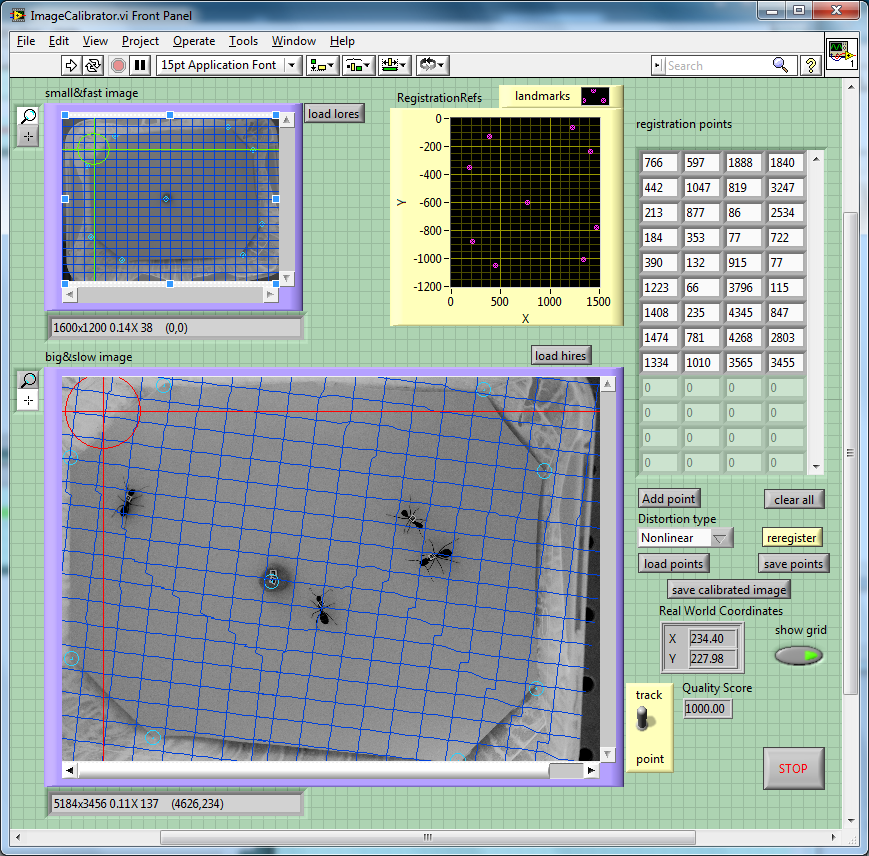

In particular, I'm concerned with what the "nonlinear" distortion mode is doing. I would have assumed that it attempts, for instance, some sort of least-squares fit to polynomial functions X(x,y), Y(x,y). From my experiments, however, it rather seems that the algorithm tessellates the image to be calibrated and then applies a piecewise affine transformation to each piece of the mosaic. The result may be acceptable for a dense calibration template like the one shown in the examples, but it is odd for sparse points. In the attached FP image, I calibrate the lower image against the upper one, then overlay straight gridlines in pixel coordinates on the upper image and the (finely resolved) transformed gridlines in "world" coordinates on the lower one. Note the sharp wiggles between one piece and the next! Displaying the image adjusted with IMAQ Correct Calibrated Image also confirms that this is what is going on (in LV2010, at least).
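To make the suspected behaviour concrete, here is a minimal Python sketch of a piecewise affine map over two triangles. It only illustrates the hypothesis described above; it is not the documented IMAQ algorithm, and the four calibration points and their world coordinates are made up for the example.

```python
def barycentric(p, a, b, c):
    """Barycentric weights of point p with respect to triangle (a, b, c)."""
    det = (b[1] - c[1]) * (a[0] - c[0]) + (c[0] - b[0]) * (a[1] - c[1])
    w_a = ((b[1] - c[1]) * (p[0] - c[0]) + (c[0] - b[0]) * (p[1] - c[1])) / det
    w_b = ((c[1] - a[1]) * (p[0] - c[0]) + (a[0] - c[0]) * (p[1] - c[1])) / det
    return w_a, w_b, 1.0 - w_a - w_b


def affine_in_triangle(p, pixel_tri, world_tri):
    """Map pixel point p to world coordinates with one triangle's affine map."""
    weights = barycentric(p, *pixel_tri)
    return tuple(sum(w * v[k] for w, v in zip(weights, world_tri)) for k in range(2))


# Four hypothetical calibration points: a unit square in pixel coordinates
# whose top-right corner is displaced in world coordinates.
A, B, C, D = (0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)
world = {A: (0.0, 0.0), B: (1.0, 0.0), C: (0.0, 1.0), D: (1.2, 1.2)}
lower = (A, B, C)  # triangle below the diagonal B-C
upper = (B, D, C)  # triangle above the diagonal B-C


def to_world(p):
    """Pick the triangle containing p and apply its affine map."""
    tri = lower if p[0] + p[1] <= 1.0 else upper
    return affine_in_triangle(p, tri, tuple(world[v] for v in tri))


# Walk along the pixel diagonal, crossing the shared edge at t = 0.5.
for t in (0.25, 0.5, 0.75, 1.0):
    print(t, to_world((t, t)))
```

The map is continuous across the shared edge, but along the diagonal the world coordinates advance with slope 1 below the edge and slope 1.4 above it. A gridline crossing from one triangle to the next therefore shows a kink, which is the kind of wiggle a sparse point set would produce.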

Second, I think there are some limitations in the way the calibration VIs are supposed to be used. In my work, I ran into the following:

- the coordinate transformation information is not retrievable independently of the calibrated image. This can be a problem if the support image is big, and not to be retained. The Convert Pixel<->Real world blocks always need a calibrated image wire. Calibration can be saved to and retrieved from a file only together with a png version of the image.

- the coordinate transformation is defined up to an arbitrary offset, which can be set a posteriori using IMAQ Set Calibration Info. In a way that is correct, but it is pretty obscure what this offset turns out to be in the first place. I usually resort to a three-step procedure: Learn Template -> find the real-world coordinates of a fiducial point (e.g. the mean of my sparse calibration points, or (0,0) in pixel coordinates) -> set the difference between the two as the new offset. Options to that effect in Learn Template would perhaps help.

- some errors reported by Learn Calibration Template are completely obscure. For example, it took me a while to realize that attempting to re-teach calibration to an image that already has calibration information attached causes (but not always) Out Of Memory errors. The workaround is to call IMAQ Set Simple Calibration with empty arguments before Learn, to clear the calibration.
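The three-step offset procedure from the second bullet can be sketched in Python as follows. This is only an illustration of the arithmetic: `pixel_to_world` stands in for whatever transformation the learned calibration provides, the helper names are hypothetical, and the sign convention that IMAQ Set Calibration Info expects for the offset is an assumption.

```python
def mean_point(points):
    """Centroid of a list of (x, y) points, e.g. the sparse calibration points."""
    n = len(points)
    return (sum(p[0] for p in points) / n, sum(p[1] for p in points) / n)


def offset_for_fiducial(pixel_to_world, fiducial_pixel, desired_world=(0.0, 0.0)):
    """Offset that moves the fiducial's learned world position to desired_world."""
    wx, wy = pixel_to_world(fiducial_pixel)
    return (wx - desired_world[0], wy - desired_world[1])


def with_offset(pixel_to_world, offset):
    """Wrap a transformation so that the offset is subtracted from its output."""
    def shifted(p):
        wx, wy = pixel_to_world(p)
        return (wx - offset[0], wy - offset[1])
    return shifted


# Hypothetical learned calibration with an unknown built-in offset.
def learned(p):
    return (0.5 * p[0] + 3.0, 0.5 * p[1] - 7.0)


calibration_points = [(0.0, 0.0), (100.0, 0.0), (0.0, 80.0), (100.0, 80.0)]
fiducial = mean_point(calibration_points)       # step 2: pick the fiducial
offset = offset_for_fiducial(learned, fiducial)  # step 2: its world position
recentred = with_offset(learned, offset)         # step 3: apply the new offset
print(fiducial, offset, recentred(fiducial))
```

After the shift, the chosen fiducial maps to the desired world position, so the origin of the world frame is no longer an accident of the learning step.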

Any comment?

Enrico

03-03-2011 11:58 AM

Hi Enrico Segre,

Unfortunately, we do not have documentation detailing the calibration process for our images. I can, however, point you to the Perspective and Nonlinear Distortion Calibration help document. You may also get insight from What are the Requirements for an NI Vision Calibration Grid?

Regards,

Lynn

Senior Systems Engineer

03-03-2011 12:40 PM

I have already read those, and they provide no information in this respect.

Enrico

03-04-2011 11:23 AM

Hi Enrico,

I will continue to look into your concerns and see if there is any information I can provide addressing them.

Lynn

Senior Systems Engineer

03-06-2011 02:20 AM

Thanks, that would be helpful. In the meantime, for my application, I think I'll implement a low-degree 2D polynomial fit for the coordinate transformation. That would be controllable and independent of any associated image buffer.

Enrico

03-11-2011 01:40 PM

Hi Enrico,

Unfortunately, you have probably already found all of the information I can give you in our NI Vision Concepts manual. Beyond that, our process is proprietary. I will, however, submit your feedback to R&D so that they are aware of your concerns.

Lynn

Senior Systems Engineer

03-15-2011 03:04 AM

Well, polynomial fit works much better, and it is way faster than IMAQ Learn. I didn't do extensive timings, which of course depend on whether the error table and calibration table are to be retained and on the number of fiducial points provided, as well as on the size of the original image (mine are BIG); but at first sight, it's a matter of some tens of ms (poly) vs 10 sec (Learn) on my test case.

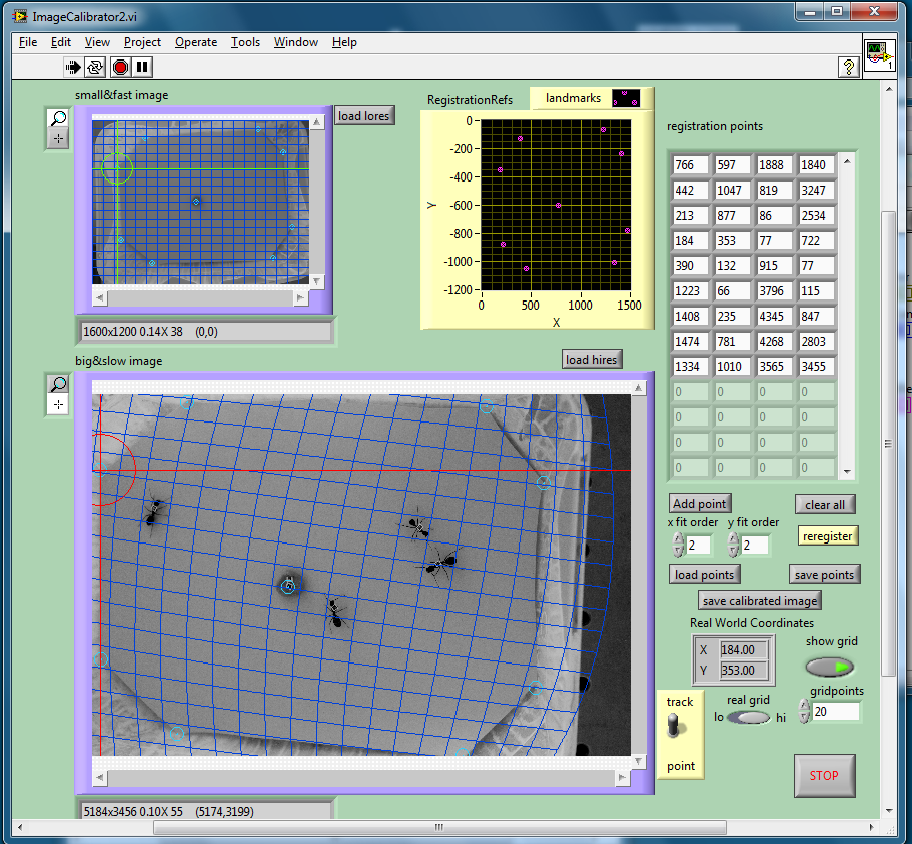

I implemented the poly fit with just a few lines of mathscript code, which needs as input just the registration point data and not the associated image. The limitations are perhaps that I don't compute the error estimate (but could, it is standard regression analysis), that I don't carry along the morphing table (for Image adjust), and that the poly transformation is not easily invertible for degree > 1 (but the inverse fit can easily be computed).
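As a rough illustration of the two checks mentioned above, the following Python sketch computes an RMS residual over the registration points and a round-trip error for a separately fitted inverse map. It is not the MathScript used in the test VI, the function names are hypothetical, and the RMS residual is only the simplest error figure, not a full regression analysis with coefficient uncertainties.

```python
import math


def rms_residual(mapping, pixel_pts, world_pts):
    """RMS distance between mapped pixel points and their known world points."""
    total = 0.0
    for p, w in zip(pixel_pts, world_pts):
        mx, my = mapping(p)
        total += (mx - w[0]) ** 2 + (my - w[1]) ** 2
    return math.sqrt(total / len(pixel_pts))


def max_roundtrip_error(forward, inverse, pixel_pts):
    """Largest pixel distance between p and inverse(forward(p))."""
    return max(math.dist(p, inverse(forward(p))) for p in pixel_pts)


# Hypothetical fitted maps; in practice the inverse would come from a second
# fit with the roles of pixel and world coordinates swapped.
def forward(p):
    return (2.0 * p[0] + 1.0, 2.0 * p[1] - 1.0)


def inverse(w):
    return ((w[0] - 1.0) / 2.0, (w[1] + 1.0) / 2.0)


pixels = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0), (10.0, 10.0)]
worlds = [forward(p) for p in pixels]
print(rms_residual(forward, pixels, worlds))
print(max_roundtrip_error(forward, inverse, pixels))
```

For polynomial degree above 1 the inverse fit is only approximate, so the round-trip error is a convenient way to judge whether its degree is high enough over the region actually covered by the registration points.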

Attached below is an image from my test VI.

Enrico

03-15-2011 08:30 AM

Dear Enrico Segre,

It seems I have a problem with some similarities to yours.

I want to make images of about 1 m², taken by 3 cameras that each take 4 pictures (5 Mpix each).

All the pictures need to be calibrated separately and then combined.

I only have about 1 s to do this, together with some extras like thresholding...

Can you help me with the polynomial fit?

=> How does it work?

=> Can you send me some code?

=> And how fast is it compared to "correct calibrated image.vi"?

Kind Regards,

Frederik Deneire

03-15-2011 08:38 AM

Great stuff, Enrico

Would you consider sharing your mathscript code as open source, e.g. on github.com?

btw. your ants seem to be dead - they haven't moved for more than 2 months..

Jakub

03-15-2011 11:12 AM

@jcz wrote:

>Would you consider sharing your mathscript code as open source, e.g. on github.com?

I would be tempted to say "it's proprietary..."

Anyway, the whole mathscript code, including comments, is:

    % input data x(i), y(i), z(i), i=1...n
    % p=order of desired polynomial in x
    % q=order of desired polynomial in y
    % works for x,y,z either column or row vectors
    % output: matrix c ((q+1) x (p+1)), such that
    %   zi=yi.^(0:size(c,1)-1)*c*xi.^(0:size(c,2)-1)'
    % flattening idea found on Tian-Bo Deng, proceedings ICICS 97, p.1288,
    % but the idea is simple and essentially also in Bevington
    X=max(abs(x)); Y=max(abs(y)); % rescaling to condition properly
    f=zeros((p+1)*(q+1),length(z));
    for i=1:length(z)
        f(:,i)=kron((x(i)/X).^(0:p),(y(i)/Y).^(0:q));
    end
    alpha=f*f';
    beta=f*z(:);
    c=reshape(beta'/alpha./kron(X.^(0:p),Y.^(0:q)),q+1,p+1);

for the fit part (to be done twice, once for X(x,y) and once for Y(x,y)), and

    zi=yi.^(0:size(c,1)-1)*c*xi.^(0:size(c,2)-1)'

for the mapping part (also to be done twice).
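For readers without MathScript, the same normal-equations approach can be sketched in plain Python. This is a translation under assumptions rather than the code from the VI: the function names are hypothetical, the linear solve is a small hand-written Gaussian elimination to keep the example dependency-free, and it assumes that not all x (or all y) values are zero, since the rescaling divides by their maximum magnitude.

```python
def solve_linear(a, b):
    """Solve a*x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("singular system: too few or degenerate points")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for k in range(col, n + 1):
                m[r][k] -= factor * m[col][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][k] * x[k] for k in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def basis(x, y, p, q):
    """Monomials x**j * y**k, ordered like kron(x.^(0:p), y.^(0:q))."""
    return [x ** j * y ** k for j in range(p + 1) for k in range(q + 1)]


def polyfit2d(xs, ys, zs, p, q):
    """Least-squares coefficients c[k][j] multiplying x**j * y**k."""
    sx = max(abs(v) for v in xs)  # rescaling to condition properly
    sy = max(abs(v) for v in ys)
    rows = [basis(x / sx, y / sy, p, q) for x, y in zip(xs, ys)]
    m = (p + 1) * (q + 1)
    alpha = [[sum(r[i] * r[j] for r in rows) for j in range(m)] for i in range(m)]
    beta = [sum(r[i] * z for r, z in zip(rows, zs)) for i in range(m)]
    flat = solve_linear(alpha, beta)
    # Undo the rescaling, then reshape to (q+1) rows by (p+1) columns.
    flat = [f / s for f, s in zip(flat, basis(sx, sy, p, q))]
    return [[flat[j * (q + 1) + k] for j in range(p + 1)] for k in range(q + 1)]


def polyval2d(c, x, y):
    """Evaluate the fitted polynomial at (x, y)."""
    return sum(c[k][j] * x ** j * y ** k
               for k in range(len(c)) for j in range(len(c[0])))


# Synthetic check on a 3x3 grid: z = 2 + 3x - y + 0.5xy.
xs = [x for x in (0.0, 10.0, 20.0) for _ in range(3)]
ys = [y for _ in range(3) for y in (0.0, 5.0, 10.0)]
zs = [2.0 + 3.0 * x - y + 0.5 * x * y for x, y in zip(xs, ys)]
c = polyfit2d(xs, ys, zs, 1, 1)
print(c, polyval2d(c, 4.0, 3.0))
```

As in the original, the fit is run twice, once with the world X values as `zs` and once with the world Y values, and the mapping is evaluated twice as well.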

Attached also the complete VI together with ancillaries - I'm in a generous mood today... Please just keep in mind that it is a proof-of-concept evaluator, not a complete application. For the sake of evaluation, I was just happy comparing two images of a scene taken by different cameras, treating the coordinates of one image as "true world", and computing the deformation of the second image.

Btw, if you play with the degrees of the fitting polynomials, you obviously get nonsense if you try to determine more coefficients than you have points: the system is then underdetermined.
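A quick way to guard against this is to count coefficients before fitting. The sketch below, with hypothetical helper names, uses the fact that a fit of order p in x and q in y has (p+1)(q+1) coefficients and that each registration point contributes one equation per fitted function.

```python
def n_coefficients(p, q):
    """Number of coefficients of a polynomial of order p in x and q in y."""
    return (p + 1) * (q + 1)


def has_enough_points(n_points, p, q):
    """True if there are at least as many points as coefficients."""
    return n_points >= n_coefficients(p, q)


def max_equal_degree(n_points):
    """Largest d such that a fit with p = q = d is not underdetermined."""
    if n_points < 1:
        raise ValueError("need at least one registration point")
    d = 0
    while n_coefficients(d + 1, d + 1) <= n_points:
        d += 1
    return d


for n in (3, 4, 8, 9, 20):
    print(n, max_equal_degree(n))
```

Having enough points is necessary but not sufficient: points that are collinear or otherwise degenerate can still leave the normal equations singular, and a fit with exactly as many coefficients as points interpolates the data, noise included.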

>btw. your ants seem to be dead - they haven't moved for more than 2 months..

No, two weeks; it's the American date format...

Enrico
