numeric to hex conversion

01-09-2014 03:13 AM

Hi

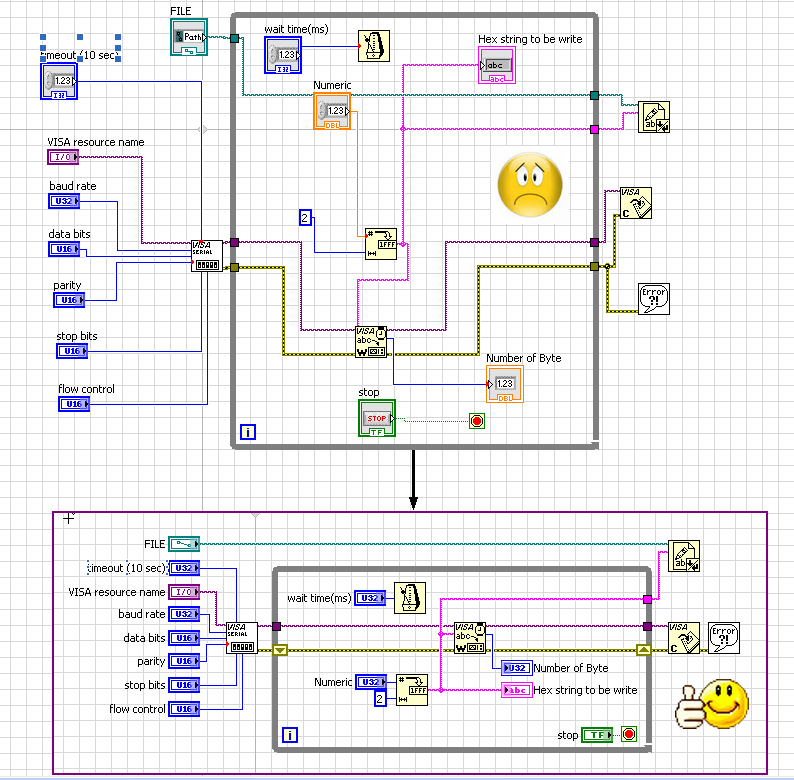

I am new to LabVIEW. I have built a controller circuit and I communicate with it serially from LabVIEW. I take a numeric value, convert it to hex with Number To Hexadecimal String, and receive it on port 1 of the controller, but the port shows the ASCII values of my number, even though I converted it to hex in the LabVIEW block diagram.
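A minimal Python sketch of what actually travels over the serial line, assuming LabVIEW's Number To Hexadecimal String behaves like Python's zero-padded uppercase `format(value, "02X")`. The result of that conversion is text, so each hex digit is sent as its own ASCII code. The helper name `to_hex_text` and the value 171 are illustrative only.

```python
def to_hex_text(value, width=2):
    """Zero-padded uppercase hex text (assumed analogue of Number To Hexadecimal String)."""
    return format(value, f"0{width}X")

value = 171                    # decimal 171 is 0xAB
text = to_hex_text(value)      # "AB": two characters of text, not one byte
wire = text.encode("ascii")    # the bytes a serial write of that string puts on the line
print(text, list(wire))        # AB [65, 66]  (ASCII codes of 'A' and 'B')
print([hex(b) for b in wire])  # ['0x41', '0x42'], not ['0xab']
```

So the controller receives 0x41 and 0x42, which is exactly the "ASCII values" symptom described above.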

01-09-2014 04:02 AM

01-09-2014 05:51 AM

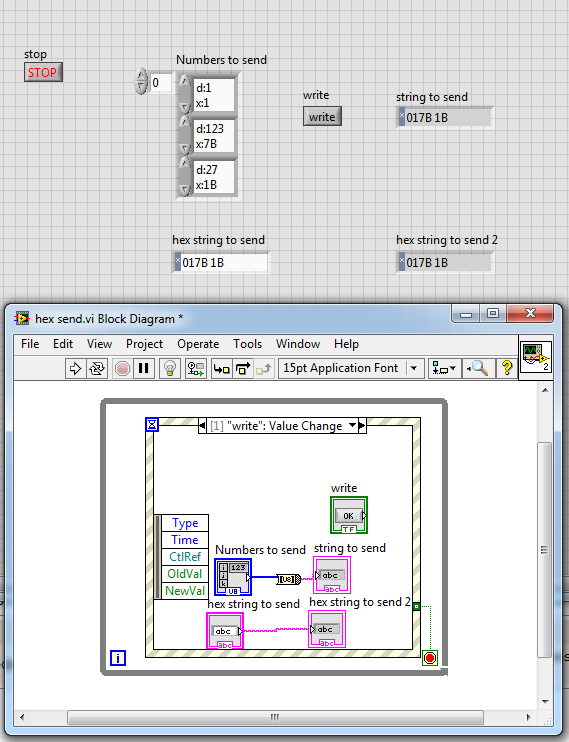

Usually you want to send out hex values, not the hex value as ASCII text 😉

VISA Write sends any string value exactly as it is, byte for byte.

So the easy way is to enter your values into a string control set to hex display, or to convert an array of bytes to a string and send that.

See the picture and the VI; you still have to add the VISA functions yourself.

(The display format of the byte array is special 😉: you can enter decimal or hex values. This works only for [UI][8,16,32].)
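For comparison, a rough Python sketch of the two approaches Henrik describes, assuming `bytes(...)` is a fair stand-in for LabVIEW's Byte Array To String and `bytes.fromhex(...)` for typing into a string control in hex display. The function name `byte_array_to_string` is hypothetical; with a serial library such as pyserial, the resulting `bytes` object is what you would pass to `Serial.write`.

```python
from typing import Sequence

def byte_array_to_string(values: Sequence[int]) -> bytes:
    """Hypothetical analogue of Byte Array To String: each U8 element becomes one raw byte."""
    for v in values:
        if not 0 <= v <= 0xFF:
            raise ValueError(f"{v} does not fit in a U8")
    return bytes(values)

# Decimal and hex literals produce the same raw bytes.
payload = byte_array_to_string([171, 0x01])
print(payload.hex(" "))   # ab 01

# A string control in hex display is roughly "parse the hex digits into bytes".
typed = bytes.fromhex("AB 01")
print(payload == typed)   # True
```

Either way the controller receives the single byte 0xAB rather than the two ASCII characters "A" and "B".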

Henrik

LV since v3.1

“ground” is a convenient fantasy

'˙˙˙˙uıɐƃɐ lɐıp puɐ °06 ǝuoɥd ɹnoʎ uɹnʇ ǝsɐǝld 'ʎɹɐuıƃɐɯı sı pǝlɐıp ǝʌɐɥ noʎ ɹǝqɯnu ǝɥʇ'

01-21-2014 12:38 AM

Thank you so much for the help.

01-21-2014 01:13 AM

Hi

I have a problem with communication between LabVIEW and my hardware. When I use the (testing) code on my controller together with CS100FULL.vi, I get whatever data I send on the assigned ports. But I want to handle 12-bit data in total, and in hex form instead of ASCII, so I made the necessary changes in the controller code (testing1) and in the VI, setting the width of the number-to-hex-string conversion to 3. Now I don't get any data at the port.

Please tell me where I am making mistakes. In Keil this code runs properly and I get the desired data at the ports in the simulator, but when I load it into the controller it doesn't work with LabVIEW. When I use HyperTerminal it communicates properly and I get data at ports 0 and 1, which means the mistake must be in my VI. Please suggest a solution and point out the mistakes in my VI.

Thank you

01-21-2014 02:02 AM

Hi spv,

a whole lot of Rube Goldberg...

What is the purpose of all those sequence frames?

What is the purpose of converting booleans to "0/1" instead of wiring them directly to case structures?

What is the purpose of a WHILE loop running just once?

Why are there controls without a label? Would you code in C like this:

"" := "" + ""

???

What are we supposed to learn from that assembler code for some unknown microcontroller?

Did you read any of the posts above? Did you understand the difference between sending bytes and sending ASCII codes?

Right now you're sending the ASCII codes of 3 characters that form a human-readable hex number. Is this what your µC expects?
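To make the last question concrete, here is a Python sketch comparing what a width-3 hex string conversion puts on the wire with one possible binary encoding of the same 12-bit value. The two-byte, high-nibble-first layout in `as_raw_bytes` is purely an assumption for illustration; the thread does not say how the µC code (testing1) reads the UART, and 0xABC is an arbitrary example value.

```python
def as_ascii_hex(value: int) -> bytes:
    """What a width-3 number-to-hex-string conversion sends: three ASCII characters."""
    return format(value, "03X").encode("ascii")

def as_raw_bytes(value: int) -> bytes:
    """Hypothetical binary framing: 12-bit value in two bytes, high nibble first."""
    if not 0 <= value <= 0xFFF:
        raise ValueError("value does not fit in 12 bits")
    return bytes([(value >> 8) & 0x0F, value & 0xFF])

reading = 0xABC                                   # 2748 decimal, fits in 12 bits
print(list(as_ascii_hex(reading)))                # [65, 66, 67]  ('A', 'B', 'C')
print([hex(b) for b in as_raw_bytes(reading)])    # ['0xa', '0xbc']
```

A µC routine that expects two binary bytes will misinterpret the three ASCII characters, and vice versa, so the VI and the controller code must agree on one of these formats.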