TDMS Write Memory Leak

Solved! 06-04-2009 02:09 PM


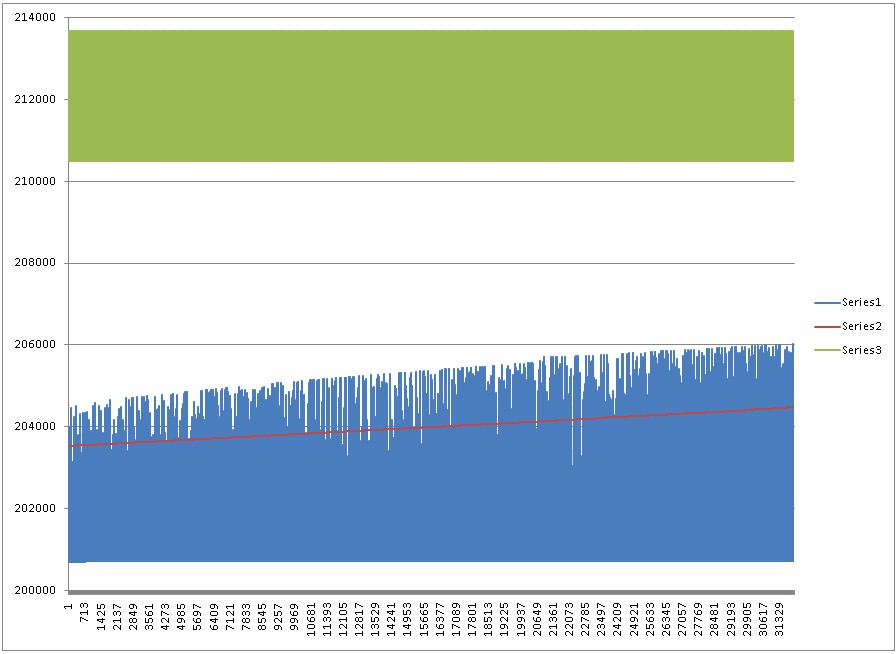

I am using TDMS to store test data, and it appears (in a very convincing way) to be causing a memory leak in my program. Shown below is a graph of three versions of my program. Series 1 uses TDMS data storage with the file opened, data appended, and the file closed every 5 seconds. Series 2 uses TDMS data storage with a reference kept open during operation and closed at the end. Series 3 writes data to a CSV file. The graphs show LabVIEW memory usage sampled every 0.5 seconds over the last ~4 hours of a 10-hour test.

LabVIEW 8.6.1

Windows XP SP3


06-04-2009 02:21 PM


Further Information:

TDMS Write

- Group Name - String

- Channel Name(s) - 1D Array of Strings

- Data - 2D Array of Strings

06-04-2009 11:42 PM


We tried to reproduce this on our end, but couldn't. Any chance you can post a simple VI that demonstrates this behavior?

Thanks,

Herbert

06-05-2009 12:49 AM


Further explanation ... when a TDMS file is open, LabVIEW will create an index structure in memory that is used for random access to the file. The built-in LabVIEW TDM Streaming API will always create this index, even if you're just writing. The size of the index depends not on the size of the file, but on how fragmented the file is. If you store a relatively small number of large blocks of data, the index will be very small. If you keep adding small amounts of data to your file, the index might get pretty large. A remedy for that is the NI_MinimumBufferSize property. You can set this property on a channel in order to make LabVIEW buffer a given number of values before storing them. That way, data from multiple calls to "TDMS Write" counts as one chunk of data in the file. Typical values for this are 1000 or 10000. File fragmentation and memory footprint are reduced accordingly; however, LabVIEW will use some memory for the buffers.

If all this fails, and you don't need read access to the file while you're writing, you can also use the VI-based API for TDMS. This will not build the index structure I mentioned above, so there should be no memory increase at all. In this case, I recommend defragmenting the files before post-processing.

Hope that helps,

Herbert
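The relationship Herbert describes between write size, buffering, and index size can be illustrated with a small toy model. This is only a sketch in Python, not the LabVIEW TDMS API: the class `ChunkedChannelWriter`, its `min_buffer_size` argument, and the "one index entry per stored chunk" rule are assumptions made for illustration, loosely mirroring what NI_MinimumBufferSize is described as doing.

```python
from collections import defaultdict


class ChunkedChannelWriter:
    """Toy model (hypothetical): one in-memory index entry per stored chunk."""

    def __init__(self, min_buffer_size=1):
        self.min_buffer_size = min_buffer_size
        self.buffers = defaultdict(list)  # channel name -> values not yet stored
        self.index = []                   # (channel, value_count) per stored chunk
        self.values_stored = 0

    def write(self, channel, values):
        """Buffer the values; store them as one chunk once the buffer is full."""
        buf = self.buffers[channel]
        buf.extend(values)
        if len(buf) >= self.min_buffer_size:
            self._flush(channel)

    def _flush(self, channel):
        buf = self.buffers[channel]
        if buf:
            self.index.append((channel, len(buf)))
            self.values_stored += len(buf)
            buf.clear()

    def close(self):
        """Store whatever is still buffered."""
        for channel in list(self.buffers):
            self._flush(channel)


def index_entries(min_buffer_size, calls=10_000, values_per_call=10):
    """Write the same data with a given buffer size; return (index entries, values stored)."""
    writer = ChunkedChannelWriter(min_buffer_size)
    for _ in range(calls):
        writer.write("temperature", [0.0] * values_per_call)
    writer.close()
    return len(writer.index), writer.values_stored


if __name__ == "__main__":
    for size in (1, 1000, 10000):
        entries, stored = index_entries(size)
        print(f"min buffer {size:>5}: {stored} values in {entries} index entries")
```

In this model the amount of data stored is identical in all three runs; only the number of chunks, and therefore the number of index entries, changes with the buffer size. The cost is the memory held by the buffers themselves.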

06-05-2009 10:10 AM


Herbert Engels wrote:

You say you leave the TDMS reference open. What happens when you close it? Does the memory footprint go back to normal or does it stay unchanged?

This is the blue line in my graph. When I used this method, the base memory usage remained constant, but the maximum memory usage continued to grow.

06-05-2009 10:13 AM


Herbert Engels wrote:

If all this fails, and you don't need read access to the file while you're writing, you can also use the VI-based API for TDMS. This will not build the index structure I mentioned above, so there should be no memory increase at all. In this case, I recommend defragmenting the files before post-processing.

I have changed my code to use these VIs and I will report my results on Monday. Thank you for your responses!

06-09-2009 07:40 AM


I ran my program for over 40 hours and the memory usage was stable using the VI-based API for TDMS. Thanks for the quick response, Herbert! Maybe in future versions of the TDMS VIs there could be an option for not indexing?

06-09-2009 07:52 AM


One tip is just to buffer up the data in your own application and only write to the TDMS file, say, once a minute or so. Queues work brilliantly for this kind of thing.

This keeps the index file small as well.

Using this technique it is possible to log for weeks (perhaps longer...) without running out of memory.
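The queue-based buffering suggested above can be sketched roughly as follows. This is a Python illustration of the producer/consumer pattern, not LabVIEW code: `write_block` stands in for whatever actually writes to the file, and `flush_interval_s`, `drain`, `writer_loop`, and `run_demo` are hypothetical names chosen for the example. A real logger would use an interval closer to the one minute mentioned in the post.

```python
import queue
import threading


def drain(q):
    """Remove and return everything currently in the queue without blocking."""
    items = []
    while True:
        try:
            items.append(q.get_nowait())
        except queue.Empty:
            return items


def writer_loop(q, write_block, stop_event, flush_interval_s):
    """Consumer: every flush_interval_s, write all queued samples as one block."""
    # Event.wait returns False on timeout and True once stop_event is set.
    while not stop_event.wait(flush_interval_s):
        block = drain(q)
        if block:
            write_block(block)
    # Final flush so that nothing queued before shutdown is lost.
    block = drain(q)
    if block:
        write_block(block)


def run_demo(n_samples=1000, flush_interval_s=0.05):
    """Producer enqueues samples one at a time; the consumer writes them in blocks."""
    q = queue.Queue()
    blocks = []  # stand-in for the file: each entry is one write call
    stop_event = threading.Event()
    worker = threading.Thread(
        target=writer_loop, args=(q, blocks.append, stop_event, flush_interval_s)
    )
    worker.start()
    for i in range(n_samples):
        q.put(i)  # acquisition loop: cheap enqueue, no file access
    stop_event.set()
    worker.join()
    return blocks


if __name__ == "__main__":
    blocks = run_demo()
    print(f"{sum(len(b) for b in blocks)} samples written in {len(blocks)} block(s)")
```

The acquisition side only ever enqueues, so it is not slowed down by file access, and the file receives a small number of large writes instead of many small ones.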

05-24-2010 08:14 AM


05-25-2010 09:15 AM


Hello Chandler,

In LabVIEW's Example Finder, there is an example called Memory Monitor that will acquire this data for you. The Example Finder is located under Help >> Find Examples. From there, search for "memory"; the example is the seventh result. I hope this information helps, and if you need any more help, please feel free to post.

JimS

Jim St

National Instruments

RF Product Support Engineer