Performance of state machine with enum vs string?

02-14-2012 09:17 PM

@Darin.K wrote:

You can still squeeze that last bit of performance out of your state machine!

Spoiler: The case structure actually does slow down with more than two choices. The FN used to be faster; now LV optimizes the silly single-iteration loop.

That is SICK!

Really, the compare/choose default improves performance over ! I believe it because you posted it. How much of an improvement? (Or, essentially, switch-case compare, compare, compare, compare... vs. switch default.)

What processors is this still true for? (Probably none; this is an optimization issue.)

Another compiler optimization pending? The constant should be able to be predetermined, and the switch cases that are determinable at compile time should let the compiler dead-code out the unreachable, loop-invariant code for each unfolded loop iteration. Still (bows to Darin): SICK!

(Edited)

"Should be" isn't "Is" -Jay

02-14-2012 09:45 PM - edited 02-14-2012 09:46 PM

Mostly I was testing some old scripting code I have to nest cases. 🙂

02-15-2012 01:55 AM

I remember reading a suggestion regarding event structures to place the most common events first if optimizing for performance. I assume it's doing a linear search through the events. Might the same be true with multiple cases?

I did some enum vs. string comparison some time back, and as you noted, enum is faster. I was surprised that it was as close as it was, though; I thought string would be orders of magnitude slower.

/Y

02-15-2012 05:49 AM

@Steve Chandler wrote:

However I found another disadvantage in using the enums. If you have an array of enums constant it defaults to some particular width. You can resize the array elements so that you can see everything but as soon as you edit the typedef the width goes back to the default. Even if the typedef is strict and even if the array of the typedef is also a typedef.

Steve, I see that you already voted for my Add Block Diagram design for typedefs idea but if anyone else reading this hasn't and has had the same problem, please vote for it.

Coordinated Universal Time (UTC) format string: %^<%Y-%m-%dT%H:%M:%S%3uZ>T

02-15-2012 07:09 AM

So the benchmarks confirm our suspicions. Good.

Now, extending the theory, longer strings will take even more time. This is not the case with enums, since they are only numbers and can be used to index to the selected instruction (machine instruction).

The other corollary would be that "Case Insensitive Match" should also take longer.
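To make that reasoning concrete, here is a rough Python model (not LabVIEW, and not a claim about how the LabVIEW compiler actually implements a case structure). It assumes a string selector is resolved by a naive in-order scan with character-by-character comparison, while an enum selector simply indexes a table. The state names, labels, and function names are hypothetical.

```python
from enum import IntEnum


class State(IntEnum):
    # Hypothetical states, chosen only for illustration.
    INITIALIZE = 0
    ACQUIRE = 1
    STOP = 2


# Enum selector: the value is already a number, so it can index a table directly.
HANDLERS = ["initialize", "acquire", "stop"]


def dispatch_enum(state):
    return HANDLERS[state]


def count_char_comparisons(selector, labels, ignore_case=False):
    """Count character comparisons made by a naive in-order scan of labels."""
    if ignore_case:
        selector = selector.lower()  # extra work before any comparison happens
    total = 0
    for label in labels:
        candidate = label.lower() if ignore_case else label
        matched = len(candidate) == len(selector)
        for a, b in zip(selector, candidate):
            total += 1
            if a != b:  # stop at the first differing character
                matched = False
                break
        if matched:
            return total
    return total


short_labels = ["Initialize", "Acquire", "Stop"]
long_labels = ["State: Initialize", "State: Acquire", "State: Stop"]

print(dispatch_enum(State.STOP))                           # one table lookup
print(count_char_comparisons("Stop", short_labels))        # 6
print(count_char_comparisons("State: Stop", long_labels))  # 27
```

In this model the shared "State: " prefix is what makes the longer labels expensive: every rejected case has to walk the whole prefix before it finds a difference. The case-insensitive variant performs the same number of comparisons but pays for case folding on top.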

Ben

11-04-2013 02:38 PM - edited 11-04-2013 02:41 PM

SteveChandler wrote:

However I found another disadvantage in using the enums. If you have an array of enums constant it defaults to some particular width. You can resize the array elements so that you can see everything but as soon as you edit the typedef the width goes back to the default. Even if the typedef is strict and even if the array of the typedef is also a typedef.

I discovered this bug in the middle of my CLD exam.

<Tribal Knowledge>

When you Apply Changes to your type defined enum (or type defined cluster containing an enum), LabVIEW auto-sizes all array constants based on the length of the first enum item. So when using enums with message handlers, I always include an initial dummy element that is longer than any other item I might add. (e.g. "-------------------Core-------------------").

</Tribal Knowledge>

Certified LabVIEW Architect

TestScript: Free Python/LabVIEW Connector

One global to rule them all,

One double-click to find them,

One interface to bring them all

and in the panel bind them.

11-04-2013 03:09 PM

I love my typedef enum over string any day.

Certified TestStand Architect

11-21-2017 03:18 PM

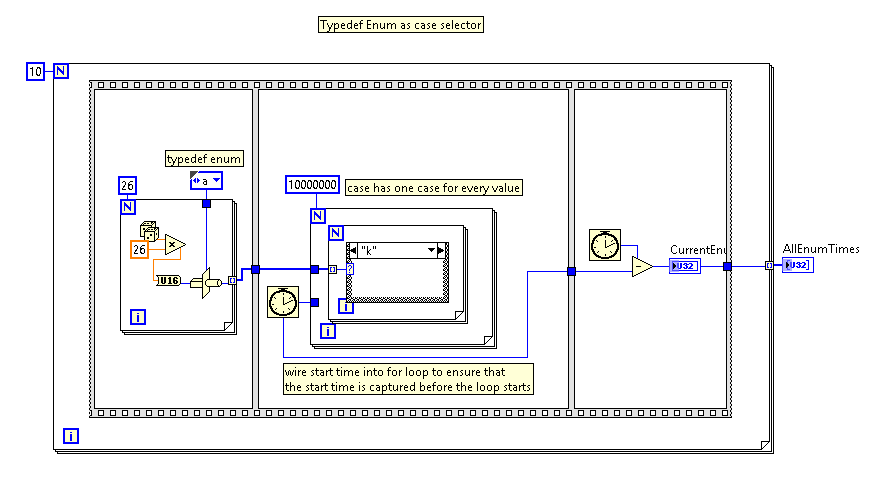

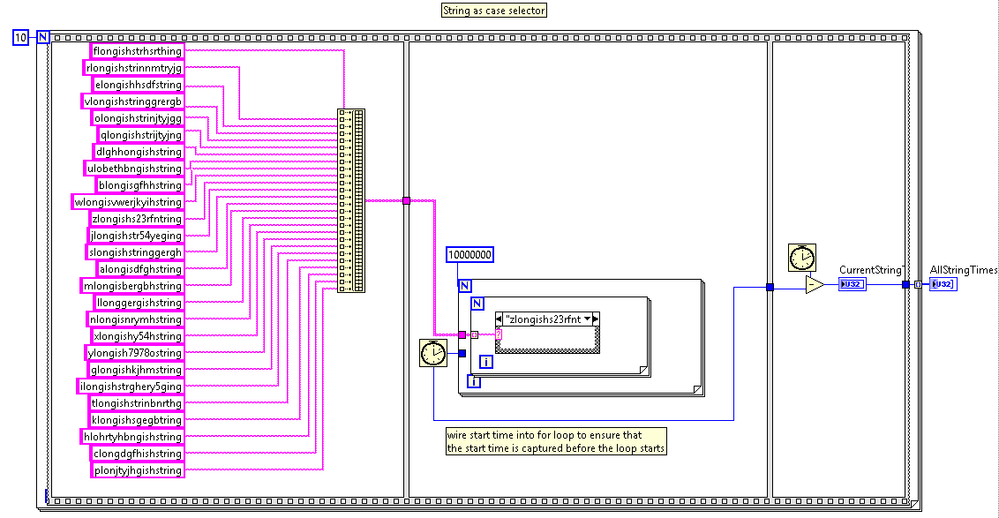

Although this is an old question, I ran into it recently, so I ran a benchmark and thought I would post the results here for any future souls who are interested. I'm pasting screenshots of the VI block diagrams rather than the source code, since images can be viewed immediately in the browser. Running LabVIEW 2012. I compared a typedef enum case selector in a high-count loop vs. a string case selector in the same high-count loop.

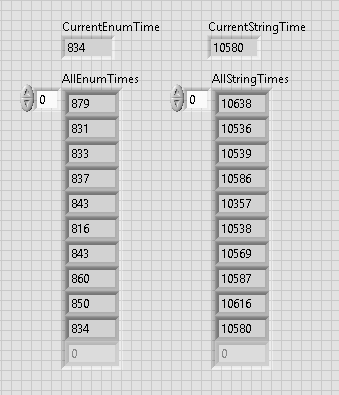

The typedef enum is significantly faster, as expected, since the comparisons under the hood compare an int (enum) as opposed to a multi-byte string (which requires a byte-by-byte comparison on all string cases). The results are summarized here:

- Typedef Enum = 0.84 sec average time to perform 10M case selections

- String = 10.4 sec average time to perform 10M case selections

...so the typedef enum selector is approximately 12 times faster than the string selector under the test circumstances described below.

The screenshots of the block diagram and front panel are shown below. Each 10M-count loop does a case selection on one of 26 values (typedef enum or string). The strings chosen were all unique to avoid compiler optimization and had an average length of ~19 characters (which is an arbitrary but reasonably-sized string which would likely be encountered in a typical medium-sized application). All array initialization is performed prior to the timing, and the tests are placed in a mutually-exclusive Enable/Disable structure to keep the timing clean.
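For readers without LabVIEW at hand, the structure of that test can be sketched in Python. This is only an analogue of the setup described above (26 cases, unique labels of about 19 characters, selector arrays built before the timed loop), not the original VI, and the absolute times and ratio it produces say nothing about LabVIEW. The enum, label text, and function names are hypothetical, and the string path assumes an in-order scan via `list.index`.

```python
import time
from enum import IntEnum

N_CASES = 26

# Hypothetical 26-member enum (CASE_0 .. CASE_25), numbered from 0.
Case = IntEnum("Case", [f"CASE_{i}" for i in range(N_CASES)], start=0)

# Hypothetical unique labels, 19 characters each.
LABELS = [f"Handle state no. {i:02d}" for i in range(N_CASES)]


def make_selectors(n):
    """Build both selector arrays up front so setup is excluded from timing."""
    enums = [Case(i % N_CASES) for i in range(n)]
    strings = [LABELS[i % N_CASES] for i in range(n)]
    return enums, strings


def run_enum(selectors):
    hits = [0] * N_CASES
    start = time.perf_counter()
    for sel in selectors:
        hits[sel] += 1  # integer selector indexes the table directly
    return time.perf_counter() - start, hits


def run_string(selectors):
    hits = [0] * N_CASES
    start = time.perf_counter()
    for sel in selectors:
        hits[LABELS.index(sel)] += 1  # scan labels until one compares equal
    return time.perf_counter() - start, hits


def benchmark(n):
    """Time both selector styles over n case selections."""
    enums, strings = make_selectors(n)
    enum_time, enum_hits = run_enum(enums)
    string_time, string_hits = run_string(strings)
    return {
        "enum_s": enum_time,
        "string_s": string_time,
        "same_result": enum_hits == string_hits,
    }
```

Calling `benchmark(10_000_000)` would mirror the 10M-selection loop count; a smaller `n` is enough to check that both paths select the same cases.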

11-21-2017 04:33 PM

@rbrown

Since you did not post the VI, could you run it with

- case insensitive match

- Is there a separate default case for the string, or is one of the cases the default?

Cheers,

mcduff

11-22-2017 12:02 AM

I think you need to randomly select the strings from the array constant. I use it as a LUT for the string to drive the case selector. You guarantee 1 of each string but could choose the same enum value multiple times.
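One possible reading of that suggestion, sketched in Python rather than LabVIEW: draw random indices once, then use the label array as a lookup table so the string selectors follow the same random sequence as the numeric ones. The labels, function name, and seed below are hypothetical.

```python
import random

# Hypothetical label array used as a lookup table (LUT).
LABELS = ["Initialize", "Acquire", "Process", "Stop"]


def random_selectors(n, seed=0):
    """Return n random case indices and the matching strings from the LUT."""
    rng = random.Random(seed)  # seeded so repeated runs see the same sequence
    indices = [rng.randrange(len(LABELS)) for _ in range(n)]
    strings = [LABELS[i] for i in indices]  # LUT: index -> selector string
    return indices, strings
```

Because both selector arrays come from the same index sequence, each path selects the same cases in the same order, and any timing difference comes from the selector type rather than from which cases happened to be chosen.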

"Should be" isn't "Is" -Jay