Optimize 2D array creation for memory usage and parallel processing

Solved! 05-25-2012 07:26 PM

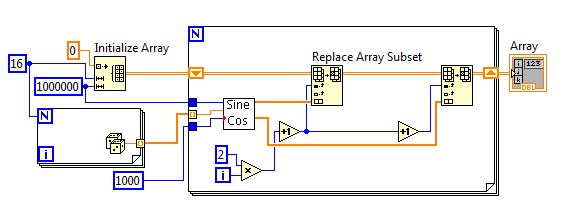

I have a data processing application that requires generating a large 2D matrix of sine and cosine values that need to be updated constantly. This is the bottleneck of my program in terms of speed and memory usage, and I would like to optimize it as much as possible. This is my current code:

I have tried to enable iteration parallelism in the for loop to take advantage of the multi-core processor, but with the shift registers I cannot do that. I have also read that In Place Element structures can reduce memory usage, but I am not sure how to use them with a 2D array. Can this code be optimized to improve the speed and memory usage?

05-25-2012 10:27 PM - edited 05-25-2012 10:29 PM

Before we get into optimizing this code, are you sure it's doing what you want? I see a couple puzzling things about it:

1) You discard half the data you generate. You iterate through the loop 16 times, and each time you generate 2 rows. However, the array only contains 16 rows, so the second half of the data never gets inserted into the array.

2) You're leaving the first row of the array all zeros, because you start by replacing row 1. Is that what you intended? Don't forget, arrays are 0-indexed.

3) Use the "Sine and Cosine" function instead of two separate operations in the subVI.

You're not really operating in-place the way you'd like here. You're initializing a huge array, then copying data into it. If you want to do this efficiently, either operate directly on the elements of the initialized array, or don't initialize an array at all and let auto-indexing do it for you. The reshape array function may also prove helpful.
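LabVIEW diagrams cannot be pasted as text, so here is a rough Python analogy of the two approaches just described: preallocate and replace rows, or allocate nothing and let the loop output build the array. The row layout (a sine row followed by a cosine row per harmonic) and the names `basis_rows`, `harmonics`, `samples` and `step` are assumptions made for illustration, not taken from the original VI.

```python
import math

def basis_rows(k, samples, step):
    """Return the (sine, cosine) row pair for harmonic k (hypothetical layout)."""
    angles = [k * step * j for j in range(samples)]
    return [math.sin(a) for a in angles], [math.cos(a) for a in angles]

def build_preallocated(harmonics, samples, step):
    # Allocate the full array first, then overwrite its rows in place.
    matrix = [[0.0] * samples for _ in range(2 * harmonics)]
    for k in range(harmonics):
        sin_row, cos_row = basis_rows(k + 1, samples, step)
        matrix[2 * k][:] = sin_row        # rows are 0-indexed
        matrix[2 * k + 1][:] = cos_row
    return matrix

def build_autoindexed(harmonics, samples, step):
    # No initial allocation: the loop output accumulates the rows.
    matrix = []
    for k in range(harmonics):
        matrix.extend(basis_rows(k + 1, samples, step))
    return matrix
```

Both functions return the same rows; the difference is only in who allocates the memory and when.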

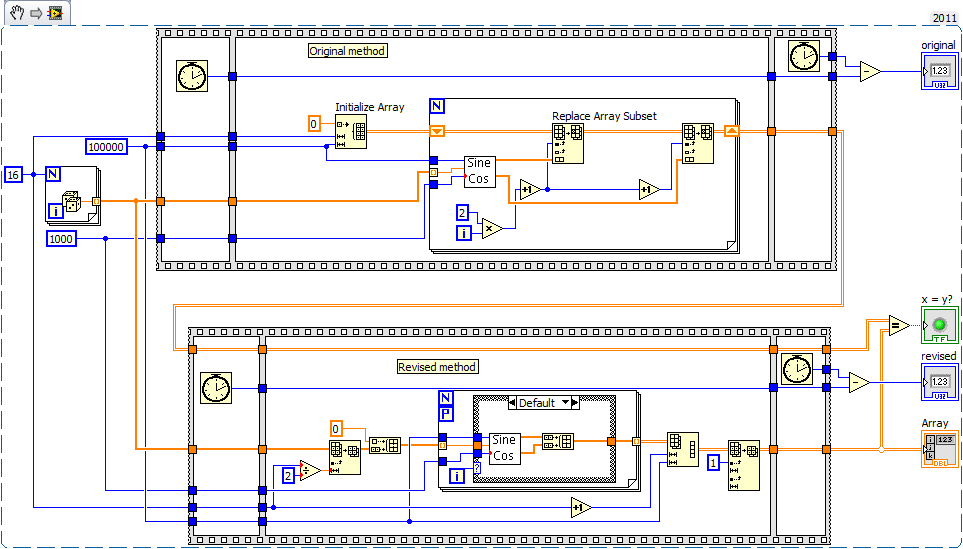

Here's your VI compared with a start at optimizing it. I had to reduce the array size to avoid running out of memory on my machine, since this generates a huge array twice: once with your approach, once with mine, and then compares them to make sure they're identical. This could be simplified a lot if it's not necessary to have the first row contain all 0s. The snippet is in LabVIEW 2011 but the attached VIs are in 2010. I'm not sure about the memory use, but it runs in about 35% less time (280 ms vs 440 ms).

05-25-2012 11:50 PM - edited 05-25-2012 11:51 PM

Nathand already raised a few important issues. Let me continue:

- Your "Replace Array Subset" already operates in place, so that's fine. You will not gain anything with the In Place Element structure.

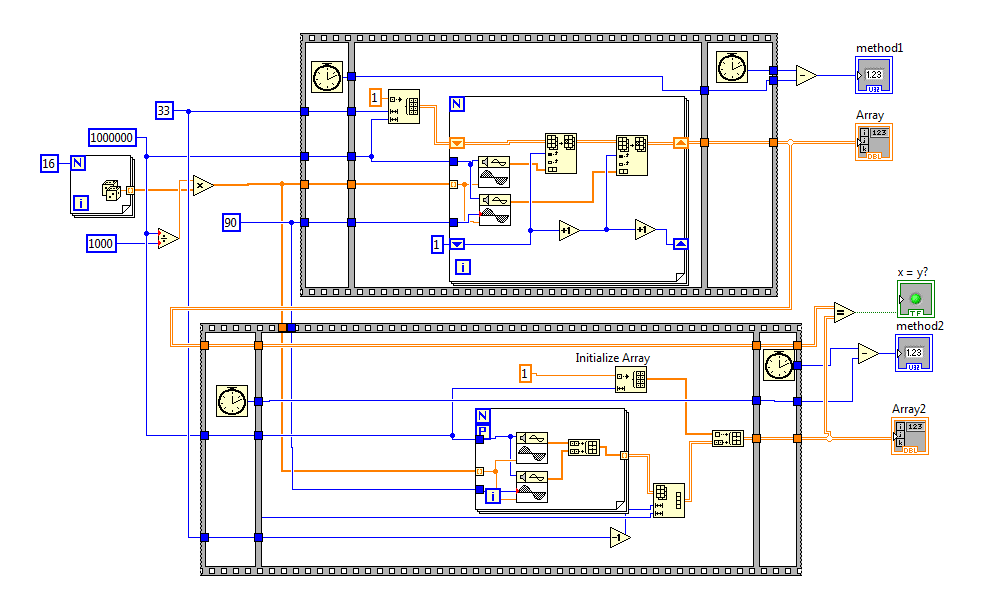

- Your subVI is way too complicated. Use the VIs from the signal generation palette; they are much more efficient. You can do it directly in the toplevel VI, eliminating the subVI overhead. The two "Sine Pattern" VIs operate in parallel, so you are already benefiting on a dual-core machine without a parallel FOR loop.

- The code in the picture below seems about 5-6x faster than yours and gives about the same result. Try it.

Tell us a little bit more about your overall program. How often do you need to generate that array?

If speed is important, you should also disable debugging.

05-26-2012 02:05 AM

Thank you altenbach and nathan!

Nathan, you are totally right: I had tried to simplify the problem as much as possible and left some absurd things in. The big array should contain 33 rows (not 16), and the first row should be all ones.

I have compared both proposals with the following code, and on my laptop the method by altenbach (top one in the figure) is around 3 times faster.

I use this large matrix to demodulate a signal with many frequencies. This code is running almost continuously.

Thank you!

05-26-2012 02:24 AM

@obarriel wrote:

This code is running almost continuously

What does this mean? If you rewrite the same array many times, you should initialize it once outside the main loop on the toplevel diagram, keep it in a shift register, and keep overwriting it using the current code. Since (almost) the entire array is overwritten with each iteration, you don't need to reinitialize every time. The first row is always all ones, so you only need to write it once.
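As a text-based illustration of that advice, here is a minimal Python sketch: the buffer is allocated once with 2n + 1 rows, the row of ones is written once, and each pass of the main loop overwrites only rows 1 to 2n. The sine/cosine row order and the names `make_buffer`, `update_buffer`, `samples` and `step` are assumptions; the list reused across iterations plays the role of the array held in the shift register.

```python
import math

def make_buffer(harmonics, samples):
    # 2n + 1 rows: row 0 is the constant row of ones, written only once.
    buffer = [[0.0] * samples for _ in range(2 * harmonics + 1)]
    buffer[0] = [1.0] * samples
    return buffer

def update_buffer(buffer, step):
    # Overwrite rows 1..2n element by element; row 0 is left untouched.
    harmonics = (len(buffer) - 1) // 2
    for k in range(1, harmonics + 1):
        sin_row = buffer[2 * k - 1]
        cos_row = buffer[2 * k]
        for j in range(len(sin_row)):
            angle = k * step * j
            sin_row[j] = math.sin(angle)
            cos_row[j] = math.cos(angle)

buffer = make_buffer(harmonics=16, samples=8)   # 33 rows, allocated once
for step in (0.10, 0.11, 0.12):                 # stand-in for the main loop
    update_buffer(buffer, step)
```

No new array is created inside the loop, which is the point of keeping the data in one place between iterations.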

What does the rest of the program look like?

05-26-2012 10:27 AM

The sentence in my original post about "not really operating in-place" was incorrect, and replace array subset is efficient there.

Sorry I didn't provide much of a benchmarking framework; I put it together in a hurry. Note that you should wire the Array indicator AFTER both sequence frames, otherwise the array indicator update happens in parallel with the second method, which slows it down unnecessarily.
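The same benchmarking principle can be sketched in Python: time each method on its own, one after the other, and do any comparison or display only after both timed sections have finished. The two methods below (`two_pass` and `one_pass`) are hypothetical stand-ins, not the VIs from this thread, and the timings will differ from machine to machine.

```python
import math
import time

def time_call(func, *args, repeats=5):
    """Return (best elapsed seconds, last result) for func(*args)."""
    best = float("inf")
    result = None
    for _ in range(repeats):
        start = time.perf_counter()
        result = func(*args)
        best = min(best, time.perf_counter() - start)
    return best, result

def two_pass(n, step):
    # Separate passes for sine and cosine.
    sines = [math.sin(step * j) for j in range(n)]
    cosines = [math.cos(step * j) for j in range(n)]
    return sines, cosines

def one_pass(n, step):
    # Single pass writing into preallocated lists.
    sines, cosines = [0.0] * n, [0.0] * n
    for j in range(n):
        angle = step * j
        sines[j] = math.sin(angle)
        cosines[j] = math.cos(angle)
    return sines, cosines

# Run the methods sequentially so neither competes with the other.
time_a, result_a = time_call(two_pass, 10_000, 0.01)
time_b, result_b = time_call(one_pass, 10_000, 0.01)

# Compare and display only after both timed sections are done.
same = result_a == result_b
print(f"two_pass: {time_a:.6f} s, one_pass: {time_b:.6f} s, identical: {same}")
```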

For the sake of a bit more experimentation I tried the approach below. It's still a little slower than Altenbach's method - maybe because it doesn't run the two signal generations in parallel, although parallelizing the for loop didn't help - but according to the VI profiler it uses very slightly less memory.

05-26-2012 02:34 PM

Thank you for your help.

I attach here a more complete example. I wanted to include a real dataset, but it was too big for an attachment, so I have included a random number generator. On my computer, when running this code, LabVIEW takes almost 1 GB of memory.

In the real program the variables "input data" and "basis function" change over time.

05-26-2012 03:35 PM

Do you have a link to a website explaining the algorithm?

Just glancing at the code for 30 seconds, there are quite a few weird things:

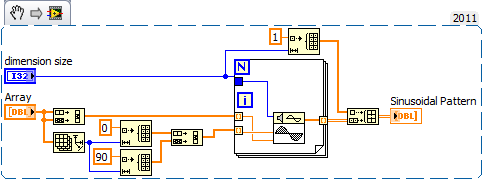

- You can remove that small lower FOR loop and the outcome will not change.

- The second FOR loop only iterates 5 times, because that's the smallest array wired to it. I would recommend not wiring N if you are autoindexing. Why are you also autoindexing on the frequency response, which is size 15? Maybe it should autoindex the frequency response on the inner loop instead?

- Why is the frequency response SGL?

- Why is the 90 an integer instead of DBL?

- Maybe it could all be done using complex representation instead of sine/cos? Would be much simpler.
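To illustrate the last point, here is a small Python sketch showing that one complex exponential carries both the cosine (real part) and sine (imaginary part) terms, so a single complex sum replaces two real sums. The correlation shown is a generic example and an assumption; it is not the algorithm from the attached VI, and `demod_sin_cos`, `demod_complex` and `omega` are hypothetical names.

```python
import cmath
import math

def demod_sin_cos(signal, omega):
    # Two separate real-valued correlations.
    c = sum(x * math.cos(omega * j) for j, x in enumerate(signal))
    s = sum(x * math.sin(omega * j) for j, x in enumerate(signal))
    return c, s

def demod_complex(signal, omega):
    # One complex correlation: exp(i*a) = cos(a) + i*sin(a).
    z = sum(x * cmath.exp(1j * omega * j) for j, x in enumerate(signal))
    return z.real, z.imag

signal = [math.cos(0.3 * j) for j in range(50)]
c1, s1 = demod_sin_cos(signal, 0.3)
c2, s2 = demod_complex(signal, 0.3)
```

The two results agree to within floating-point rounding.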

Are you running this only once? Is this a subVI?

05-26-2012 04:00 PM

In addition to altenbach's comments, I wonder if you had been using Matlab prior to learning LV? It appears that you are ignoring the zeroth element of the arrays in some places. The Sine and Cosine Basis loop starts indexing the Replace Array Subsets at 1, not 0. Thus the first row of the 2D array going to the middle for loop has all 1s. Similarly, the inner for loop always omits element zero.

The normalization can be moved outside the loop because none of the data going to it changes. Calculate it only once.
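Moving a loop-invariant calculation out of the loop looks like this in a Python sketch. The normalization used here (dividing by the Euclidean norm of a fixed `weights` list) is a made-up stand-in, since the actual calculation lives in the attached VI; only the structure of the change is the point.

```python
def normalize_inside(blocks, weights):
    out = []
    for block in blocks:
        # Recomputed on every iteration although weights never change.
        norm = sum(w * w for w in weights) ** 0.5
        out.append([x / norm for x in block])
    return out

def normalize_hoisted(blocks, weights):
    # Computed once, before the loop.
    norm = sum(w * w for w in weights) ** 0.5
    return [[x / norm for x in block] for block in blocks]
```

Both versions return identical results; the second simply does the invariant work once.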

In the right for loop the coefficients error will always be the standard deviation. You have the same value wired to both selector inputs.

Lynn

05-26-2012 04:03 PM

@johnsold wrote:

Thus the first row of the 2D array going to the middle for loop has all 1s.

From the discussion above, this is one of the requirements, so it seems correct. Notice that the number of rows is 2n+1.