Intel 8 bit Checksum

Solved! 02-08-2018 09:56 PM

Hi all,

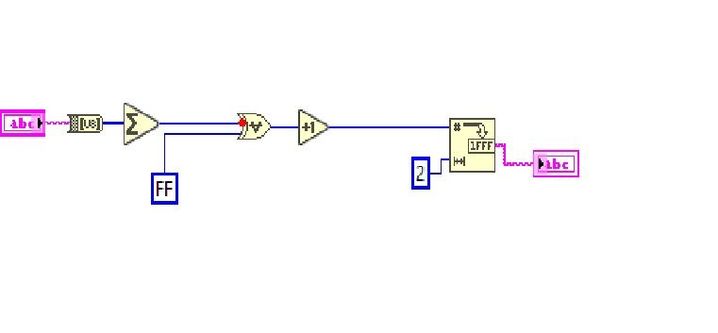

Can someone please explain what I'm doing wrong? I can't get the correct 8-bit checksum below.

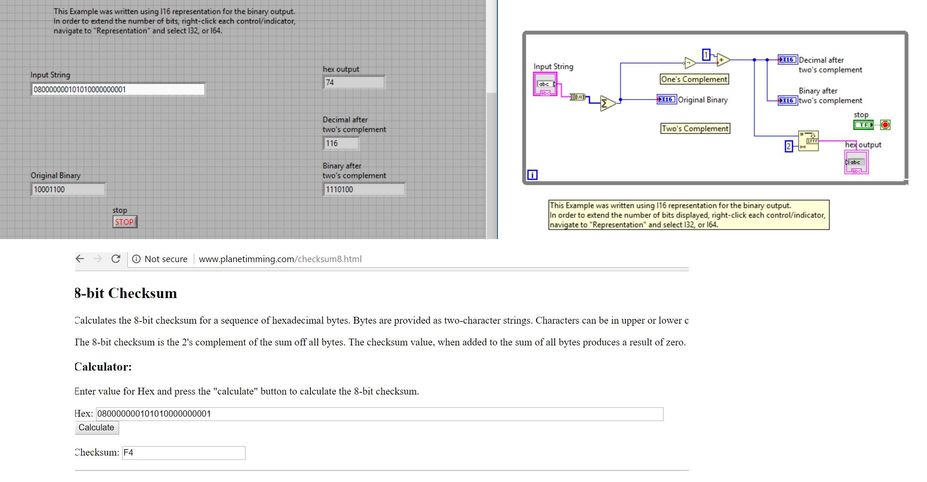

I'm expecting the answer to be the same as the one this calculator gives:

http://www.planetimming.com/checksum8.html

Thanks in advance

02-08-2018 11:30 PM - edited 02-08-2018 11:31 PM

Try this KB for calculating the two's complement. I just ran it and it got the same values as the website you posted when entering in two bytes.
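
For readers without the KB at hand, here is a minimal Python sketch of an 8-bit two's-complement checksum. It assumes the "Intel" checksum in the title means the Intel HEX convention: sum all bytes, keep the low 8 bits, then negate. The function name `checksum8_twos_complement` is made up for illustration, and this is not the KB's code.

```python
def checksum8_twos_complement(data: bytes) -> int:
    """Return the two's complement of the byte sum, truncated to 8 bits."""
    total = sum(data) & 0xFF   # keep only the low 8 bits of the sum
    return (-total) & 0xFF     # two's complement within one byte


# Two data bytes, 0x01 and 0x02: the sum is 0x03, so the checksum is 0xFD.
payload = bytes([0x01, 0x02])
check = checksum8_twos_complement(payload)
print(f"{check:02X}")  # prints FD

# Sanity check: data plus checksum sums to zero modulo 256.
assert (sum(payload) + check) & 0xFF == 0
```

The final assertion is the usual way a receiver verifies such a checksum: adding every byte, checksum included, should give zero in the low 8 bits.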

02-09-2018 12:45 AM

Thanks for the reply, Daniel. I made a few changes to meet my requirement, as per the definition of the checksum, but the answers are not consistent. Please see below.

02-09-2018 12:52 AM

I think I know the problem although I don't know the solution yet.

LabVIEW takes my input as ASCII (of course), whereas I should be feeding it in hex.

02-09-2018 06:25 AM

wrote:

LabVIEW takes my input as ASCII (of course), whereas I should be feeding it in hex.

So you have a display issue. Right-click on the input string and you can choose "Hex Display". If you do this, I also recommend turning on Visible Items->Display Style. You will probably want to do the same with the indicator.
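
To see why this is only a display issue, here is a rough Python analogy (not LabVIEW code; the helper names `normal_display` and `hex_display` are hypothetical). The same bytes are shown once as characters and once as hex digits, and nothing about the data changes between the two views.

```python
def normal_display(data: bytes) -> str:
    """Show each byte as its ASCII character."""
    return data.decode("ascii")


def hex_display(data: bytes) -> str:
    """Show each byte as two hex digits, separated by spaces."""
    return " ".join(f"{b:02X}" for b in data)


data = bytes([0x41, 0x42])
print(normal_display(data))  # prints AB
print(hex_display(data))     # prints 41 42

# Typing the characters 0102 in normal display enters four character bytes,
# not the two bytes 0x01 and 0x02.
typed_as_text = "0102".encode("ascii")
print(hex_display(typed_as_text))  # prints 30 31 30 32
```

A checksum computed over `typed_as_text` therefore covers the character codes 30 31 30 32, which is why the result differs from a calculator that was given the bytes 01 02.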

02-09-2018 06:53 AM

Problem solved. Thank you very much!

02-11-2018 10:07 PM

Hi again guys,

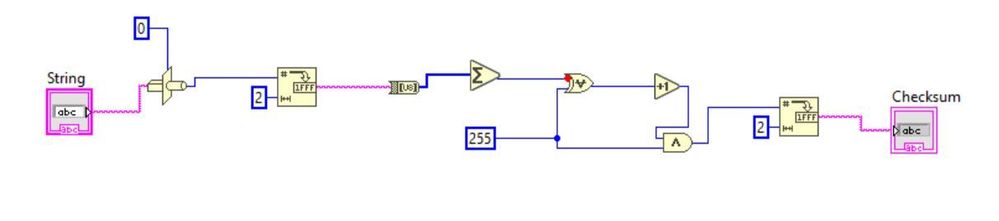

If I still want to use ASCII input rather than switching to hex display mode on the front panel, what am I doing wrong? The VI is attached too.

Thanks in advance.

02-11-2018 11:18 PM

wrote:

Hi again guys,

If I still want to use ASCII input rather than switching to hex display mode on the front panel, what am I doing wrong? The VI is attached too.

First of all, your 255 (or FF) diagram constant (first post) should probably be U8 representation. Notice the red coercion dot?

Second, if you cast your string to an I32, the string must be 4 bytes long (4 characters). Is it always? That does not make a lot of sense.

The display format of a string is just cosmetic. It does not change the bits.
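
As a loose Python analogy for the cast issue (LabVIEW's Type Cast is not reproduced here, and `sum_bytes` is a hypothetical helper): unpacking a fixed 32-bit integer needs exactly four bytes, while summing byte by byte works for any length, which is what an 8-bit checksum needs.

```python
import struct


def sum_bytes(data: bytes) -> int:
    """Add up all bytes and keep only the low 8 bits."""
    return sum(data) & 0xFF


two_bytes = bytes([0x01, 0x02])

# A fixed-width 32-bit interpretation fails when the data is not 4 bytes long.
try:
    struct.unpack(">i", two_bytes)
except struct.error as exc:
    print("cannot unpack:", exc)

# A byte-wise sum does not care about the length.
print(sum_bytes(two_bytes))                               # prints 3
print(sum_bytes(bytes([0x01, 0x02, 0x03, 0x04, 0x05])))   # prints 15
```

In LabVIEW terms, the byte-wise route would presumably be String To Byte Array followed by a sum over the resulting U8 array, instead of a cast to a single I32.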

02-11-2018 11:57 PM

Sorry, so what is your suggestion?

02-12-2018 12:57 AM

I found the answer in one of your 2005 posts, @altenbach.

Thanks, champ!

Here is the link if anyone is looking.

https://forums.ni.com/t5/LabVIEW/Convert-hex-string-to-ascii/td-p/190846
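
The linked thread is not reproduced here. As a sketch of the general idea in Python (an assumption about what is wanted, with hypothetical function names): if the hex digits are typed as ordinary text, each pair of digits has to be converted to one byte before the checksum is computed.

```python
def hex_text_to_bytes(text: str) -> bytes:
    """Turn text such as '01 02' into the bytes 0x01 0x02."""
    # bytes.fromhex reads pairs of hex digits and skips spaces between them.
    return bytes.fromhex(text)


def checksum8_twos_complement(data: bytes) -> int:
    """Two's complement of the byte sum, truncated to 8 bits."""
    return (-sum(data)) & 0xFF


typed = "01 02"
raw = hex_text_to_bytes(typed)
print(raw.hex())                                  # prints 0102
print(f"{checksum8_twos_complement(raw):02X}")    # prints FD

# Without the conversion, the checksum runs over the characters themselves.
print(f"{checksum8_twos_complement(typed.encode('ascii')):02X}")  # prints 1D
```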