
For Loop Parallelism Optimization

03-22-2022 11:55 AM - edited 03-22-2022 12:12 PM


Hello, I am working on a project in which I regularly use loops to perform various operations, in particular calculations on elements of arrays of variable dimensions.

Everything works perfectly, but the execution time is too long and I want to improve it. That is how I came across "For Loop Parallelism".

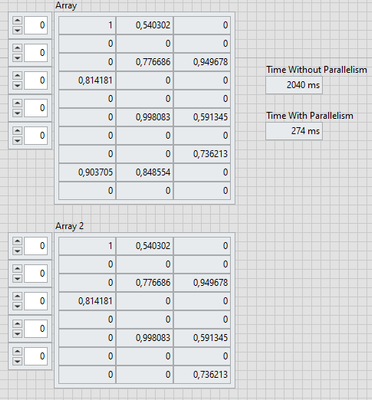

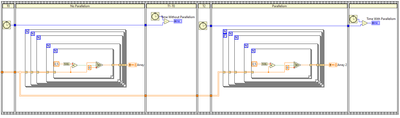

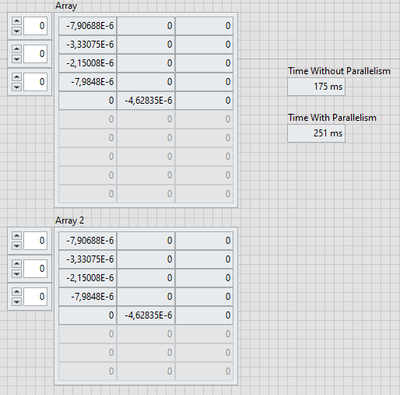

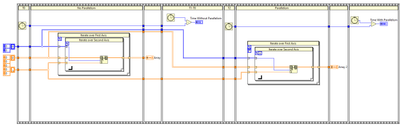

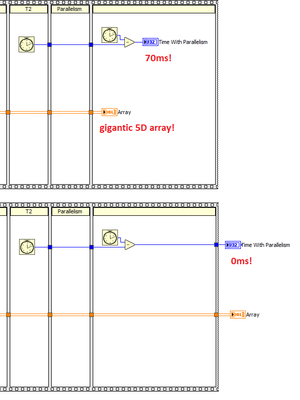

However, after multiple tests I realized that this feature does not always improve the execution time of my loops (see the attached VIs).

(Worth It)

(Not Worth It)

Is there a way to know in advance in which cases parallelism will pay off and in which it will not?

Thanks in advance.

03-22-2022 12:25 PM


I think VI Analyzer can help there.

(Mid-Level minion.)

My support system ensures that I don't look totally incompetent.

Proud to say that I've progressed beyond knowing just enough to be dangerous. I now know enough to know that I have no clue about anything at all.

Humble author of the CLAD Nugget.

03-22-2022 12:47 PM - edited 03-22-2022 12:50 PM


There is a parallelization overhead to split the problem and reassemble the results. Do you really need 5D arrays? Why all these coercion dots? You did not even disable debugging!

If the task inside the loop is small, the parallelization overhead dominates, as in your case. Parallelization helps if each iteration requires significant effort.
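The trade-off described above can be written as a rough cost model. This is a minimal sketch, not how LabVIEW actually schedules parallel loop instances: it assumes a fixed split/merge overhead (`t_overhead_s`, a hypothetical number you would have to measure) and iterations of equal cost spread evenly over `n_workers`.

```python
from dataclasses import dataclass


@dataclass
class LoopCost:
    n_iterations: int      # number of loop iterations
    t_iteration_s: float   # measured time of one iteration, in seconds
    t_overhead_s: float    # ASSUMED fixed cost to split the work and merge results
    n_workers: int         # number of parallel loop instances (e.g. CPU cores)


def serial_time(c: LoopCost) -> float:
    """Time of the plain sequential loop."""
    return c.n_iterations * c.t_iteration_s


def parallel_time(c: LoopCost) -> float:
    """Overhead plus the time of the largest chunk (ceil division)."""
    chunk = -(-c.n_iterations // c.n_workers)
    return c.t_overhead_s + chunk * c.t_iteration_s


def parallelism_pays_off(c: LoopCost) -> bool:
    return parallel_time(c) < serial_time(c)


if __name__ == "__main__":
    # Hypothetical figures: 2 ms overhead, 8 workers, 1000 iterations.
    small = LoopCost(1000, 1e-6, 2e-3, 8)   # 1 us of work per iteration
    large = LoopCost(1000, 5e-3, 2e-3, 8)   # 5 ms of work per iteration
    print(parallelism_pays_off(small), parallelism_pays_off(large))
```

With 1 µs per iteration the loop takes about 1 ms serially but about 2.1 ms in parallel, so the overhead dominates; with 5 ms per iteration the parallel version wins by a wide margin. The real overhead also grows with data size (copying arrays into and out of the loop), which this model ignores.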

03-22-2022 02:13 PM - edited 03-22-2022 02:14 PM


Hi coco,

Despite the other good advice you already got, you should also try using NO LOOPS at all, or at least benchmark such possible solutions:

I agree it most often doesn't make sense to work with 5D arrays.

And you should try to avoid coercion dots on large arrays too, even though there is one in my added code…

03-22-2022 02:33 PM - edited 03-22-2022 02:36 PM


In my experience, parallelism is useful only if the content of the loop takes significantly longer to execute than the overhead to manage the parallelism.

For example, signal analysis and accessing multiple instruments are situations that benefit from parallelism.

As a rule of thumb, if the loop body takes ~5 ms or more to execute, you will benefit from enabling parallelism.

Soliton Technologies

New to the forum? Please read community guidelines and how to ask smart questions

Only two ways to appreciate someone who spent their free time to reply/answer your question - give them Kudos or mark their reply as the answer/solution

03-22-2022 03:03 PM


@GerdW wrote:

Despite the other good advice you already got, you should also try using NO LOOPS at all, or at least benchmark such possible solutions:

Ha! I actually played with the same ideas earlier. One blemish is the need to allocate those huge boolean and integer arrays, but the multiplication can take better advantage of SIMD instructions (hard to tell what the compiler does behind the scenes).

In any case, these indicators need to go after the sequence frames, because updating them with these gigantic amounts of data sucks CPU away from the timing, potentially interfering with reading the last tick:

No, an empty frame should not take 70ms!!!!!


03-23-2022 03:01 AM


Thank you for all your answers.

@billko I will look at the VI Analyzer.

@altenbach @GerdW Unfortunately, most of the operations I perform need 5D arrays, and I usually can't avoid using a loop like the one in the first attached VI.

I apologize for the coercion dots. I made the VIs in a hurry and didn't pay attention, but in general I avoid them, since I read that they can slow things down by creating a copy of the data.

@santo_13 That was my understanding, but I was wondering whether there is a tool to predict in advance whether parallelism will speed things up. For now I test my loops one by one to see whether parallelism speeds up or slows down my system, but the idea was to avoid all that.
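Short of a predictive tool, the one-by-one testing can at least be automated. The sketch below is a generic Python harness, not a LabVIEW feature: `benchmark` and `instrument_read` are hypothetical names. It times a serial and a parallel version of the same loop body on the same data and checks that both give identical results. Note that in CPython, threads only speed up work that releases the interpreter lock (I/O, sleeps, instrument waits); for CPU-bound Python code you would swap in `ProcessPoolExecutor`.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Sequence


def benchmark(body: Callable, items: Sequence, workers: int = 8,
              repeats: int = 3) -> tuple[float, float]:
    """Return (best serial time, best parallel time) in seconds."""

    def run_serial() -> list:
        return [body(x) for x in items]

    def run_parallel() -> list:
        # Pool creation is timed on purpose: it is part of the overhead.
        with ThreadPoolExecutor(max_workers=workers) as pool:
            return list(pool.map(body, items))

    # Sanity check: both variants must produce the same results.
    if run_serial() != run_parallel():
        raise RuntimeError("serial and parallel results differ")

    def best_of(fn: Callable[[], list]) -> float:
        best = float("inf")
        for _ in range(repeats):
            start = time.perf_counter()
            fn()
            best = min(best, time.perf_counter() - start)
        return best

    return best_of(run_serial), best_of(run_parallel)


def tiny(x: int) -> int:
    """Very cheap body: parallel overhead should dominate."""
    return x * 2


def instrument_read(x: int) -> int:
    """Hypothetical stand-in for waiting ~20 ms on an instrument."""
    time.sleep(0.02)
    return x


if __name__ == "__main__":
    print("tiny:      ", benchmark(tiny, range(1000)))
    print("instrument:", benchmark(instrument_read, range(8), repeats=1))
```

Run it with data sizes that match the real application, since the answer can flip as the arrays grow or shrink.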

03-23-2022 03:16 AM


Hi coco,

@coco82 wrote:

Unfortunately, most of the operations I perform need 5D arrays, and I usually can't avoid using a loop like the one in the first attached VI.

Why do you need 5D arrays? (Do you really need them?)

In my experience, LabVIEW handles 1D/2D arrays much faster than 3D+ arrays. Is there a way to transform your 5D problem into a 1D memory representation?
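A 5D array can be stored as one flat 1D buffer if each 5D index is mapped to a single offset. The sketch below assumes row-major order (last index varies fastest). That is a common convention and the order one would expect when reshaping to 1D, but verify how your own data are laid out. `flat_index` and `unflatten_index` are hypothetical helper names.

```python
from math import prod


def flat_index(idx: tuple[int, ...], shape: tuple[int, ...]) -> int:
    """Row-major offset of a multidimensional index in a flat buffer."""
    if len(idx) != len(shape):
        raise ValueError("index and shape must have the same rank")
    offset = 0
    for i, n in zip(idx, shape):
        if not 0 <= i < n:
            raise IndexError(f"index {i} out of range for size {n}")
        offset = offset * n + i  # Horner-style accumulation
    return offset


def unflatten_index(offset: int, shape: tuple[int, ...]) -> tuple[int, ...]:
    """Inverse of flat_index: recover the multidimensional index."""
    idx = []
    for n in reversed(shape):
        offset, r = divmod(offset, n)
        idx.append(r)
    return tuple(reversed(idx))


if __name__ == "__main__":
    shape = (2, 3, 4, 5, 6)                  # a small 5D example
    data = [float(k) for k in range(prod(shape))]
    # An element-wise operation becomes a single pass over the 1D buffer.
    scaled = [2.0 * v for v in data]
    print(flat_index((1, 2, 3, 4, 5), shape))   # last element
    print(scaled[flat_index((0, 0, 0, 1, 0), shape)])
```

For element-wise work the 5D indices are not needed at all; they only matter when a calculation depends on an element's position along a particular dimension.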

03-23-2022 08:12 AM - edited 03-23-2022 08:13 AM


@GerdW wrote:

Hi coco,

@coco82 wrote:

Unfortunately, most of the operations I perform need 5D arrays, and I usually can't avoid using a loop like the one in the first attached VI.

Why do you need 5D arrays? (Do you really need them?)

In my experience, LabVIEW handles 1D/2D arrays much faster than 3D+ arrays. Is there a way to transform your 5D problem into a 1D memory representation?

Yes, maybe some timely concatenation instead of autoindexing could help here.


03-23-2022 08:25 AM


Unfortunately, there is no tool to predict that, but with sufficient experience you may be able to make a good guess. Even if there were such a tool, it would know nothing about the runtime data sizes that affect execution time. For example, it cannot foresee whether an FFT will run on 2 elements or on 1 million elements.

Everything boils down to what your real application is, so optimize for that.
