DAQmx Read, offset First sample

Solved! 09-26-2019 03:49 PM

Hello,

I'm using the "Voltage - Continuous Input" example to read a signal from the input of a DAQ board (NI USB-6361). The first image shows the initialization within the VI. I'm reading continuously, and at the same time creating a new measurement file every 500k samples acquired.

Now I need the program to ignore the first N samples and start acquiring after that. My idea was to set an offset on the DAQmx Read node, with "Relative To" set to "First Sample". But the following error message keeps appearing.

I tried both "OverWrite" options, and I also tried changing "First Sample" to "Most Recent Sample". In that case there's no error, but the offset is applied on every read cycle.

What can I do to have the offset only on the first reading?

Thanks

09-26-2019 04:44 PM

Some comments:

- It is much easier to examine code as "code", i.e. either as a VI (my preferred attachment) or as a Snippet of the entire block diagram (as this can be dropped into a blank VI and, abracadabra, it becomes LabVIEW code).

- It helps to explain what you want to achieve, being as specific as you can. It is unclear (to me) the point of "ignoring" N samples (as opposed, say, to simply delaying the start of acquisition for a time corresponding to the acquisition time for those samples).

- Of course, another (almost trivial) thing to do is to collect all of the samples and, in a post-processing step, simply throw away the first N.

- Or use a Producer/Consumer design and split Acquisition from Saving, building into the Consumer "Don't save the first N samples".
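As a rough illustration of the last two suggestions, here is a minimal Python sketch (LabVIEW code is graphical, so this is only a stand-in) of a consumer-side filter that discards the first N samples from a stream of chunks. The function name `skip_first_samples` and the test data are hypothetical, not part of DAQmx or of the original VI; the sketch also assumes N need not be a multiple of the chunk size.

```python
from typing import Iterable, Iterator, List


def skip_first_samples(chunks: Iterable[List[float]], n_skip: int) -> Iterator[List[float]]:
    """Yield the incoming chunks with the first n_skip samples removed."""
    remaining = n_skip
    for chunk in chunks:
        if remaining >= len(chunk):
            # The whole chunk lies inside the skipped region: drop it.
            remaining -= len(chunk)
            continue
        # Drop only the leading part of this chunk (possibly nothing).
        yield chunk[remaining:]
        remaining = 0


# Hypothetical data: 5 chunks of 4 samples each, numbered 0..19.
chunks = [list(range(i, i + 4)) for i in range(0, 20, 4)]
kept = [s for chunk in skip_first_samples(chunks, n_skip=6) for s in chunk]
print(kept[0], len(kept))
```

The same function works for post-processing (pass it all chunks after the run) or inside a consumer loop (pass it the stream as it arrives).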

Bob Schor

09-27-2019 10:08 AM - edited 09-27-2019 10:10 AM

Hi,

I didn't upload the VI because it's very complex, as this is just one part of the whole system. But you're right, so I've attached an example with only the acquisition part.

Also, about "ignoring" N samples: what I meant is in fact delaying the acquisition, but without delaying the whole system. As I see it, when the system starts, the DAQ should still sample data at the input but begin the actual "acquisition" at the N-th sample. I may be wrong, though.

I found a temporary solution that seems to work for now (second attachment), but I think there's a better way to do it.

09-27-2019 01:44 PM

Hello,

I didn't upload the code because it's not very clear, since the whole system is fairly complex. But you're right, so I've attached an example with only the acquisition part.

Also, about "ignoring" the first N samples: what I meant is in fact delaying the acquisition, but without delaying the whole system.

I found a temporary solution (second attachment), where I delay the data flow only on the input part. It works for now, but I think there's a better way to do it.

09-28-2019 02:03 PM

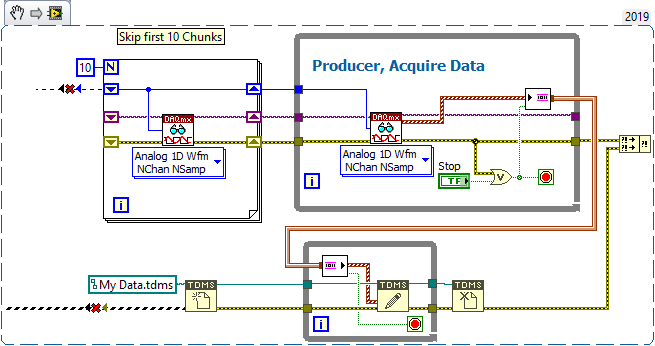

Thank you for posting "real code", which clarifies what you really want to do. Let me paraphrase it based on Example 1 --

- Set up a DAQmx Acquisition Task for Continuous Acquisition at 1 kHz, 100 Samples at a time.

- Start the Task.

- After N (in your example, 1000 samples / (100 samples/acquisition) = 10) Acquisitions, start saving the Data to a TDMS file.

The solution is simple -- separate the Data Acquisition (what I'll call the "Producer", as it "produces" data) from the Saving Data to TDMS (which I'll call the "Consumer", as it "consumes" the data and puts it away for you).

Fortunately, the Producer/Consumer Design Pattern is a well-known and well-documented pattern in LabVIEW -- there's even a Template that will build it for you. Open LabVIEW, click on "File, New ..." (the three dots are important!), choose From Templates/Frameworks/Design Patterns/Producer-Consumer Design Pattern (Data), and study the pattern.

So how do you use this to solve your problem? I'm going to outline a method that assumes you do this once, meaning you start the program, start Acquisition, skip some data, start logging, then stop everything, closing files, and ending the program. [If you want to do this several times, it should be an easy modification of the "Do Once" code.]

Start by learning how to use TDMS outside of DAQmx, i.e. how to open a TDMS file yourself, how to say "Here's some data, write it to the file", and how to close the TDMS file when you are done. [It's almost never a bad idea to know something about how your data is being saved, and if you learn a little about TDMS, you can, perhaps, format the data file the way you want it].

One (minor) complication is that you really have a Producer (the DAQmx Read) and two Consumers running over different intervals -- the "Display on Graph" that wants to see all the Data, and the "Save to TDMS" that wants to see only part of the Data. Since the point of this exercise is "Offset first Sample", I'm going to ignore the Graph and concentrate only on the TDMS. I'm confident once you "get it", you'll be able to figure out how to add the second Graph Consumer.

So here's the idea. Your Producer Loop is running, generating chunks of 100 samples at a time. You want to delay writing for 1000 samples, or 10 loops. The easiest way to do this is to put two DAQmx Loops in sequence: the first (without a Stop button) takes the first 10 chunks and does nothing with them, and the second (with the Stop Button) takes the rest of the samples and sends them to the Consumer. (You could do this with a single loop having a Case Statement inside, but the stopping conditions get messy.)
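The two-loops-in-sequence idea can be sketched in Python as follows. This is only an analogy for the LabVIEW diagram, under stated assumptions: `read_chunk` is a hypothetical stand-in for DAQmx Read that returns numbered samples, `should_stop` stands in for the Stop button, and `send` stands in for whatever hands data to the Consumer. None of these names come from DAQmx.

```python
import itertools
from typing import Callable, List

SAMPLES_PER_READ = 100   # chunk size used in the thread's example
SAMPLES_TO_SKIP = 1000   # N, the number of samples to ignore
READS_TO_SKIP = SAMPLES_TO_SKIP // SAMPLES_PER_READ  # 1000 / 100 = 10 reads

_counter = itertools.count()


def read_chunk() -> List[int]:
    """Hypothetical stand-in for DAQmx Read: returns the next 100 sample indices."""
    return [next(_counter) for _ in range(SAMPLES_PER_READ)]


def acquire(read: Callable[[], List[int]],
            reads_to_skip: int,
            should_stop: Callable[[], bool],
            send: Callable[[List[int]], None]) -> None:
    # Loop 1: fixed count, no stop condition. Read and discard.
    for _ in range(reads_to_skip):
        read()
    # Loop 2: runs until stopped. Every chunk goes to the consumer.
    while not should_stop():
        send(read())


saved: List[List[int]] = []
# Simulate pressing Stop once three chunks have been forwarded.
acquire(read_chunk, READS_TO_SKIP, lambda: len(saved) >= 3, saved.append)
print(saved[0][0], len(saved))
```

Keeping the skip phase in its own loop means the second loop needs only one exit condition, which is the point made above about a single loop with a Case Statement getting messy.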

The traditional Producer/Consumer manages sending data between the parallel loops using a Queue. I prefer to use Asynchronous Channel Wires, as I find them a better "metaphor" for Asynchronous Transfer of Data between parallel processes, so that's what I've done. Here's a Code Snippet:

The two loops on top are the "Take 10 sets of data, not saving them" and "Take data and send to parallel Loop that handles saving them to TDMS File", i.e. the Producer. The Consumer, below, opens a TDMS file and then waits patiently for Data to be sent from the Producer -- when it gets a Chunk, it writes it to the file.

The funny-looking "Brown Pipe" is the Channel Wire (Brown = Waveform Wire color, Pipe = Asynchronous Channel Wire). It "sits on top of" Structure boundaries, like the While Loop, signifying that the Data simply flows "over the top" of the Structure as fast as it gets put in (or taken out). The Stream Channel Writer and Reader (those rectangles at the ends of the Channel Wire) have additional inputs that simplify stopping them -- I've wired the Producer "Stop" wire into the "Last Element?" input of the Stream Writer, and wired the "Last Element?" output from the Stream Reader to the Consumer's Stop Indicator, so when the Producer stops, it "tells" the Consumer to Stop.

And there you have it! You can do the same thing with Queues. Sending a "Stop" signal is a little trickier, but if you look up "Queue Sentinel", you may get some ideas. What I'd do is exit the Producer and then send one more Waveform with an empty Array for the Data component, and modify the Consumer to check for this "I'm done" signal before writing, exiting its loop when it sees it. Note this also means that the Producer doesn't release the Queue -- the Consumer does, which is much safer.
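For readers more used to text languages, the Queue-with-sentinel variant might look like the Python sketch below. It is an analogy, not LabVIEW code: an empty list plays the role of the Waveform with an empty Data array, a plain list named `written` is a hypothetical stand-in for the TDMS file, and Python's `queue.Queue` needs no explicit release, so that part of the advice has no direct counterpart here.

```python
import queue
import threading
from typing import Iterable, List


def producer(q: "queue.Queue[List[int]]", chunks: Iterable[List[int]]) -> None:
    for chunk in chunks:
        q.put(chunk)
    # After the last real chunk, send one empty chunk as the "I'm done" signal.
    q.put([])


def consumer(q: "queue.Queue[List[int]]", sink: List[int]) -> None:
    while True:
        chunk = q.get()      # blocks until the producer sends something
        if not chunk:        # empty chunk is the sentinel: stop without writing
            break
        sink.extend(chunk)   # stand-in for "write this chunk to the file"


q: "queue.Queue[List[int]]" = queue.Queue()
written: List[int] = []

worker = threading.Thread(target=consumer, args=(q, written))
worker.start()
producer(q, [[1, 2, 3], [4, 5], [6]])
worker.join()                # the consumer exits on its own after the sentinel
print(written)
```

Because the sentinel travels through the same queue as the data, the consumer is guaranteed to have handled every real chunk before it sees the stop signal.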

Bob Schor

10-15-2019 08:32 AM

Thank you very much, that was very helpful.