loading sensor data into an array and then retrieving it

03-31-2010 04:57 PM

04-01-2010 08:41 AM

Hello!

It sounds like what you're trying to do shouldn't be too hard. How will you be acquiring the sensor data?

jpnoon wrote:

I want to load light and rotation sensor data into an array using the loop count as the index.

You actually don't have to use the loop index. If you use an "Insert Into Array" function and leave its index input unwired, it appends the new data to the end of the array.

The numeric constants in the loop are just for proof-of-concept. You'll want to replace those with your sensor value inputs.
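For readers more at home in a text language, here is a rough Python analogue of that append-then-look-up pattern. It is only a sketch, not LabVIEW code: `read_light` and `read_rotation` are hypothetical stand-ins for the real sensor inputs and return made-up numbers.

```python
# Hypothetical stand-ins for the sensor reads; they return made-up values.
def read_light(i):
    return 40 + i

def read_rotation(i):
    return 10 * i

samples = []  # grows by one row per loop iteration
for i in range(5):
    # Appending puts the new row at the end, so no explicit index is needed.
    samples.append((read_light(i), read_rotation(i)))

# Any earlier iteration can then be looked up by its index.
row = samples[2]
print(row)
```

Because each row lands at the position matching its iteration number, the loop count still works as a lookup key later even though it was never used to store the data.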

jpnoon wrote:

The next iteration of the loop, I want to be able to sample any row in the array (by index) to get the data out. How do I do that?

Are you planning to access the data in every "next iteration," or just when a user requests data from index X?

National Instruments | http://www.ni.com/support

04-01-2010 12:35 PM

Thank you for your help... I am a novice. I am trying to program an NXT to home in on a light source. I already built the two-light-sensor version, and it works like a charm, and now I want to build a single-light-sensor version.

Here's how I picture it working. Every loop, I will gather a single light sensor value and rotation sensor values from Motors B & C. I will run the present and previous light sensor values through a comparator, and that will tell me whether I am moving towards the light or away from the light. The only thing I don't know is whether I am "approaching/turning away" the light from the left or from the right. To determine that, I will run the rotation sensor values through a comparator. Here is the result matrix.

| Make B wheel rotate faster than C | Make C wheel rotate faster than B |
| --- | --- |
| (L2 > L1) & (B2 - B1 > C2 - C1) | (L2 > L1) & (B2 - B1 < C2 - C1) |
| (L2 < L1) & (B2 - B1 < C2 - C1) | (L2 < L1) & (B2 - B1 > C2 - C1) |
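The result matrix can be written as a small decision function. This is a minimal Python sketch of the four cases exactly as listed; the function name `faster_wheel` is made up for illustration, and returning `None` for ties (no change in light, or equal wheel rotation) is an assumption, since the matrix does not cover those cases.

```python
def faster_wheel(l1, l2, b1, b2, c1, c2):
    """Return 'B' or 'C' per the result matrix, or None when no rule applies."""
    db = b2 - b1  # rotation of motor B since the last loop
    dc = c2 - c1  # rotation of motor C since the last loop
    if l2 == l1 or db == dc:
        return None  # assumption: ties are not covered by the matrix
    brighter = l2 > l1
    b_turned_more = db > dc
    # B goes faster when both comparisons agree, C when they disagree.
    return "B" if brighter == b_turned_more else "C"

print(faster_wheel(50, 60, 0, 30, 0, 10))
```

The four matrix entries collapse to one test: whether the light comparison and the rotation comparison point the same way.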

In my original note, I said I wanted to collect light and rotation sensor data in an array using the loop count as the index. I can see now that is wasteful of memory because all I need are the values from the last loop and the current loop.

04-02-2010 11:31 AM

Ok, I think I have a slightly better idea now.

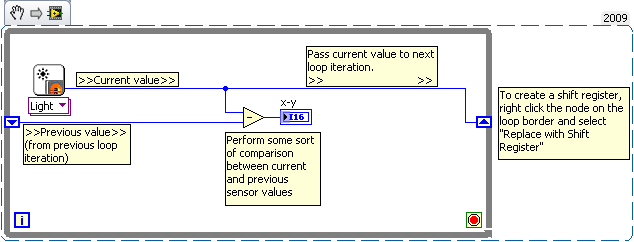

If all you need is the last iteration value, you can just pass the values to a shift register. Here's a quick screenshot to illustrate the process:
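In a text language, the closest analogue to a shift register is a variable that carries the previous reading into the next pass of the loop. The Python sketch below is an illustration under that assumption, not a transcription of the screenshot; `run` and the sample readings are made up.

```python
def run(readings):
    """Compare each reading with the one from the previous iteration."""
    previous = readings[0]  # plays the role of the shift register's initial value
    diffs = []
    for current in readings[1:]:
        diffs.append(current - previous)
        previous = current  # carry the raw reading into the next iteration
    return diffs

print(run([40, 45, 45, 30]))
```

Only one previous value is held at any time, so memory use stays constant no matter how long the loop runs.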

National Instruments | http://www.ni.com/support

04-02-2010 02:47 PM

Thank you Caleb for this simple, but elegant solution to my problem.

Jim Noon

04-05-2010 01:26 PM

National Instruments | http://www.ni.com/support

04-05-2010 03:27 PM

Hi Cedric,

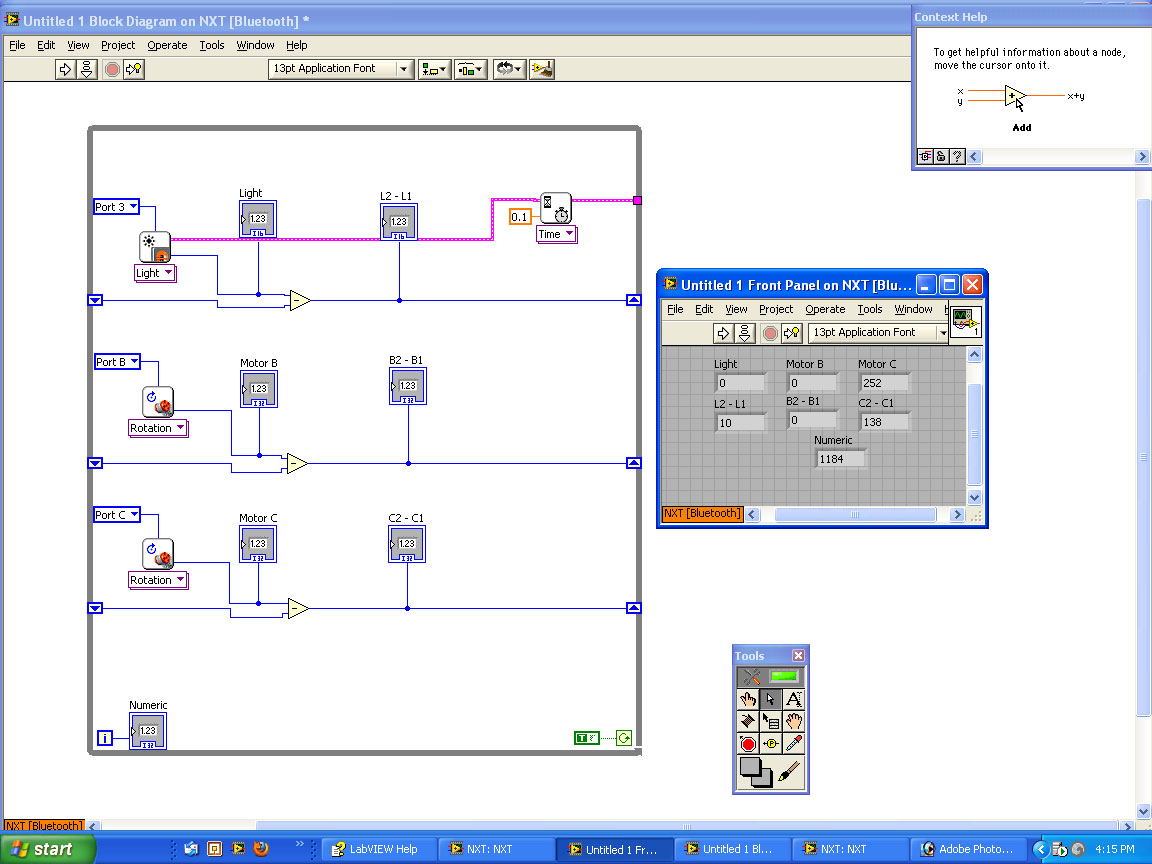

I have a question. I wired up the light sensor and the 2 rotation sensors the way you recommended, and then I ran the program to see what would happen when I varied the light level and turned the rotation sensors. What I was expecting in each case was the difference measurement + measurement 2 would add up to measurement 1. If there was a large excursion in sensor values between loops, then the difference measurement would be large. If there was no change between two loops, the difference measurement should be 0 or very close to it. Well, that didn't happen...the Panel readings were taken after the system had lay quiet for a few seconds. I am attaching my wiring diagram and some typical results. Frankly I am puzzled.

Jim

04-06-2010 07:43 PM

Hello Jim!

It looks like you're fairly close. (I looked at your image for a half-hour before it occurred to me.)

The current sensor outputs should be going to the shift registers. The way you've programmed it, the difference between the values is being passed to the shift register, not the actual sensor value. Essentially, you're taking the difference between the current sensor value and the difference from the last loop iteration.
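The effect of that wiring mistake can be reproduced in a few lines of Python. This is a sketch under assumptions: the stored value starts at 0, the sensor sits at a constant 50, and `differences` with its `feed_back_difference` flag is a made-up helper that switches between the two wirings.

```python
def differences(readings, feed_back_difference):
    """Return the per-iteration difference output for either wiring."""
    stored = 0  # assumed initial value of the shift register
    out = []
    for current in readings:
        diff = current - stored
        out.append(diff)
        # Miswired: the difference is stored. Correct: the raw reading is stored.
        stored = diff if feed_back_difference else current
    return out

steady = [50, 50, 50, 50]  # a sensor that is not changing
print(differences(steady, feed_back_difference=True))   # [50, 0, 50, 0]
print(differences(steady, feed_back_difference=False))  # [50, 0, 0, 0]
```

With the difference fed back, a perfectly steady sensor still produces an output that swings between the reading and zero, which matches the kind of nonzero results seen with the system at rest. Storing the raw reading makes the difference settle at 0.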

Hope that helps!

National Instruments | http://www.ni.com/support

04-23-2012 05:06 PM

Hi Caleb,

Thank you for your help (more than 2 years ago). I am a retired engineer, and I have had to learn a bit more before I could ask more questions.

I am a Lego Mindstorms NXT enthusiast and the coach of a First Lego League (FLL) team (5th graders). FLL is a lovely program, but it is a bit disconnected intellectually from the adult robotics world. I am trying to bridge the gap by providing my team with some exposure to robotics issues in the adult world through a combination of talks, laboratory projects and site visits to robotics companies. We are working on this right now.

FLL teams always have trouble with navigation, i.e. how to get from here to there, reliably and reproducibly. We have spent 6 weeks sharpening our skills with navigation projects. To link what we have done with the adult robotics world, I have prepared a talk on robot mapping. I outfitted a robot with a motor-driven IR sensor (Mindsensors) and built a small space to map with cardboard boxes. I scanned the space, moved the data into Excel, and generated plots, which I present in the 2 attachments. One shows the NXT-G datalogging record of a 2-step space scan. The other is an Excel plot of the data in the datalogging record after I had converted it from polar coordinates (R and Theta) to Cartesian coordinates (X and Y). My next project is to get the Mindstorms/LVEE and the computer to do the scans, the calculations, and the mapping.
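The conversion from (R, Theta) to (X, Y) is X = R cos(Theta), Y = R sin(Theta). A minimal Python sketch follows, assuming the scan angle is logged in degrees and measured from the X axis; the sample values are made up and are not taken from the attachments.

```python
import math

def polar_to_cartesian(r, theta_deg):
    """Convert a range reading at a given scan angle to an (x, y) point."""
    theta = math.radians(theta_deg)  # math.cos and math.sin expect radians
    return r * math.cos(theta), r * math.sin(theta)

# Made-up (distance, angle in degrees) samples standing in for a scan log.
scan = [(30.0, 0.0), (30.0, 90.0), (42.0, 180.0)]
points = [polar_to_cartesian(r, t) for r, t in scan]
for x, y in points:
    print(round(x, 2), round(y, 2))
```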

This is an elaborate Thank You.

Jim Noon

04-24-2012 09:20 AM

Hey Jim,

It looks like Caleb is out of the office. But I will forward on your thank you to him as soon as he gets back. I'm sure he will appreciate it.

Thank you for stopping back in to let us know how things are going.

Senior Embedded Software Engineer