Issue with USB-6363 and MATLAB interface

10-13-2021 04:55 AM - edited 10-13-2021 05:01 AM

Hi folks,

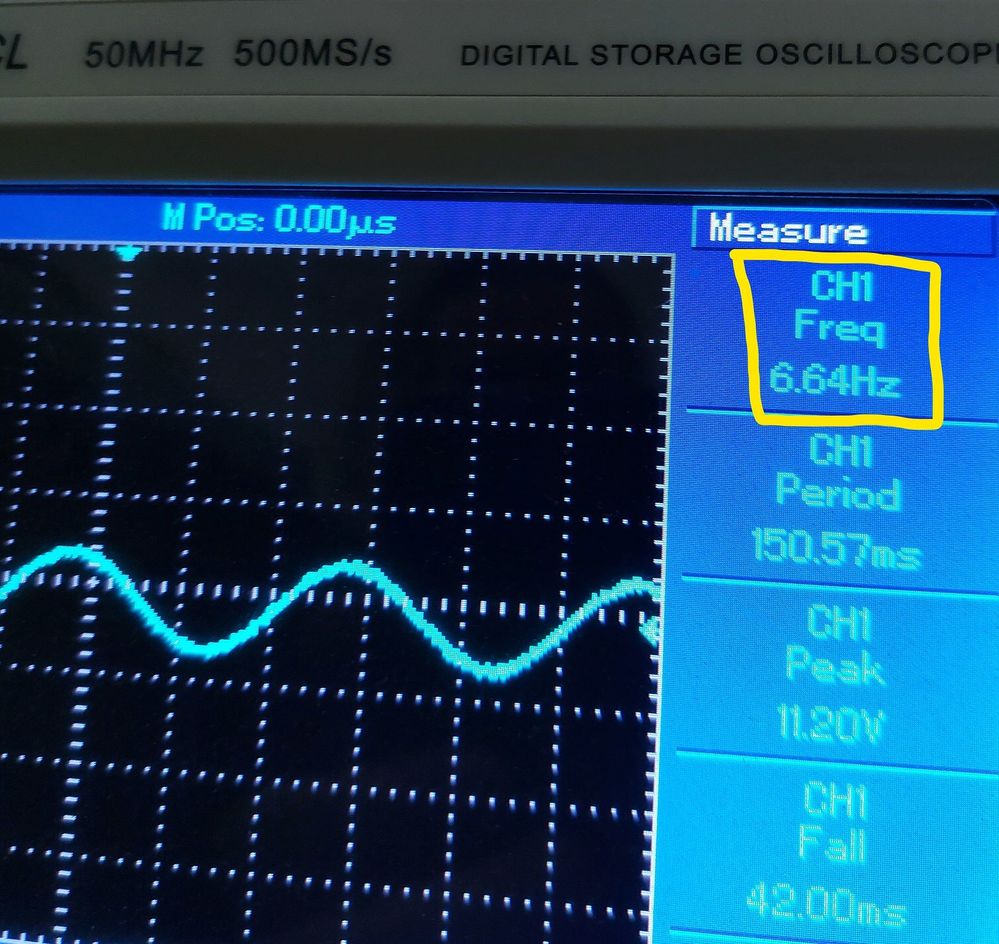

I have an issue outputting an ordinary sine wave from MATLAB via a USB-6363 and displaying it on an oscilloscope. The frequency of the signal shown on the oscilloscope is far lower than required. I've configured the frequency at 1000 Hz, but the output comes out at only about 6 Hz. I'm sure the oscilloscope is properly configured.

Any idea what the cause might be?

Here is what I've tried:

    clear;
    clc;
    d = daq("ni");
    addoutput(d,"Dev1","ao0","Voltage");
    d.Rate = 1000000;
    t = 0; % Starting time
    tt = 25; % Total time
    dt = 0.00001; % Time step
    f = 1000; % Signal Frequency
    while t < tt
        out = 5*sin(2*pi*f*t); % Resolver Signal
        write(d,out);
        t = t + dt;
    end

10-13-2021 08:28 AM

This is simply the difference between your software simulated time with a dt of 0.00001 sec, and the actual loop execution time which would appear to be ~160x slower, with a loop time nearer 0.0016 sec.
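
A rough check of that estimate, using only the numbers from the original post (1000 Hz requested, about 6 Hz observed, `dt` of 0.00001 s, 25 s of simulated time). The variable names are illustrative, and the 6 Hz reading is approximate, so the result is only an order-of-magnitude figure:

```matlab
fRequested = 1000;      % Hz, frequency set in the script
fObserved  = 6;         % Hz, approximate reading on the oscilloscope
dt         = 0.00001;   % s, time step the script assumes per iteration
tt         = 25;        % s, total simulated time in the script

slowdown = fRequested / fObserved;   % how much slower the loop really runs
tLoop    = dt * slowdown;            % implied real time per loop iteration
nIter    = tt / dt;                  % number of iterations the loop performs
tTotal   = nIter * tLoop;            % implied real run time of the whole loop

fprintf("Slowdown ~%.0fx, loop time ~%.2f ms, total run ~%.0f min\n", ...
    slowdown, tLoop*1e3, tTotal/60);
```

With these inputs the implied loop time is on the order of 1.7 ms per `write` call, in line with the estimate above.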

I don't know the MATLAB API at all, but you would need to configure a buffered AO task using a hardware sample clock set to a 100 kHz sample rate. Then you write a buffer of data representing an integer number of sine cycles, start the task, and the driver and hardware will handle everything from there.

-Kevin P
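
For readers using MATLAB, a minimal sketch of the buffered, hardware-timed approach described above. It assumes the Data Acquisition Toolbox `daq` interface already used in the original post, a device named `Dev1`, and that the device accepts a 100 kHz rate. It has not been tested on a USB-6363, and `duration` is an illustrative name:

```matlab
d = daq("ni");
addoutput(d, "Dev1", "ao0", "Voltage");
d.Rate = 100000;                 % hardware sample clock, samples per second

f        = 1000;                 % Hz, desired sine frequency
duration = 1;                    % s, chosen so the buffer holds whole cycles
n        = round(duration * d.Rate);

t   = (0:n-1)' / d.Rate;         % column vector of sample times
out = 5 * sin(2*pi*f*t);         % one column per output channel

% Writing many scans at once lets the device clock them out at d.Rate;
% the call returns when the buffer has been generated.
write(d, out);
```

Here the time between samples is set by `d.Rate` on the hardware, not by how fast the script runs.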

10-20-2021 12:30 PM

I still don't get it. If the while loop runs with a step time of 0.00001 s, the write should run at the same step time. Why does the write run slower?

10-20-2021 01:22 PM

You're making an incorrect assumption. Your software *assumes* a step time of 0.00001 s. But in reality, it's not able to *execute* fast enough to make that assumption true.

Running the code in your script loop, and making calls down to the driver to update hardware signal values simply requires more time than 0.00001 s. You could set dt to 1e-12, 1e-6, or 1000 and it would have *no effect* on the actual real-life execution speed. Your *declared* dt has no relationship whatsoever to the execution speed of the script.

-Kevin P
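
One way to see this directly is to time the single-sample writes. The sketch below assumes the same `daq` setup as the original post (device name `Dev1`); `N` and `tLoop` are illustrative names, and the measured value will depend on the PC, the USB connection, and the driver:

```matlab
d = daq("ni");
addoutput(d, "Dev1", "ao0", "Voltage");

N = 200;                 % number of single-sample writes to time
tic;
for k = 1:N
    write(d, 0);         % one on-demand, software-timed update per call
end
tLoop = toc / N;         % average real time per write call, in seconds

fprintf("Average time per write: %.3f ms\n", tLoop * 1e3);
```

Whatever value this reports is the real step time of the loop, regardless of the `dt` declared in the script.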

10-20-2021 02:03 PM - edited 10-20-2021 02:17 PM

I understand that accessing hardware cannot be as fast as simulation. But even when I set dt to milliseconds (say 100 ms, neglecting the sampling distortion), the output is still as slow as ever, which confuses me.

Besides, the code below runs perfectly, since it outputs the whole 10-second data set at once:

    f = 10;
    totalduration = 10;
    n = totalduration * d.Rate;
    t = (1:n)/d.Rate;
    out = sin(2*pi*f*t)';
    write(d,out);

What if I want to output a single sample in each time step (say 0.0001 s)?

10-20-2021 05:35 PM

Sorry, as I said before, I don't know the MATLAB function call API at all. In LabVIEW (and in some C and Python I've seen, but don't know in any detail), setting up a hardware-timed task involves more than a simple assignment like "d.Rate=". So I figured that your original code was still running AO in on-demand, software-timed mode, where each function call sets a single output value at the moment of that call. And that *is* going to have a limited execution speed under any API (and more so with USB devices).

Your latest posted code appears to write a buffer of data all at once to the task. If this works with the desired timing rather than throwing an error, I have to assume that the task had been configured to have hardware timing and a buffer. In that mode, the driver keeps delivering data to the device where it's clocked out at a very precise and possibly very high sample rate.

That's about all I can say. You probably need help from someone who knows the MATLAB toolkit, possibly over on the MathWorks site.

-Kevin P
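
On the question of one sample per 0.0001 s step, a possible sketch is to let the hardware clock define the step by setting the rate to one over the step time, then preload a buffer and have the device repeat it. This assumes the `preload`, `start(d, "repeatoutput")`, and `stop` functions of the Data Acquisition Toolbox `daq` interface and a device named `Dev1`; `stepTime` and `duration` are illustrative names, and it has not been tested on a USB-6363:

```matlab
d = daq("ni");
addoutput(d, "Dev1", "ao0", "Voltage");

stepTime = 0.0001;                 % s, desired time between output samples
d.Rate   = round(1 / stepTime);    % 10 kHz hardware sample clock

f        = 1000;                   % Hz, sine frequency (10 samples per cycle)
duration = 1;                      % s of data in the buffer, whole cycles only
n        = round(duration * d.Rate);
t        = (0:n-1)' / d.Rate;
out      = 5 * sin(2*pi*f*t);

preload(d, out);                   % queue the buffer before starting
start(d, "repeatoutput");          % hardware replays the buffer continuously
pause(5);                          % script is free while the output runs
stop(d);
```

Because the buffer holds a whole number of cycles, repeating it should not introduce a phase jump at the wrap-around.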

10-22-2021 10:18 AM

Thank you. This was helpful.