Slow rate calculation

06-20-2009 03:38 PM - edited 06-20-2009 03:40 PM

(OK, CSV means comma-separated values; however, your files are tab delimited. Be careful with extensions! :D)

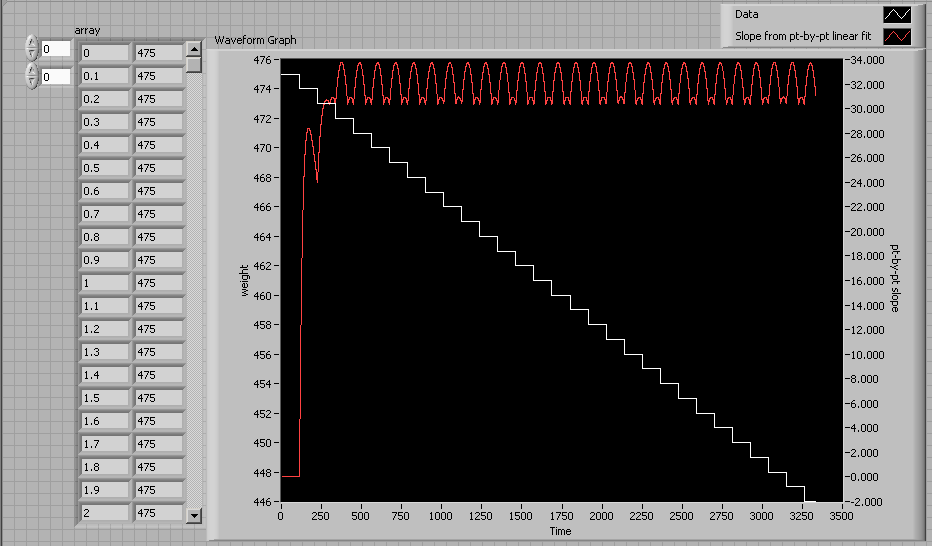

I think all you need to do is use the pt-by-pt linear fit of the incoming data with a reasonable buffer size (e.g. 3000 points) and things look pretty good.

(The lower FOR loop processes the data one point at a time, calculating the instantaneous slope from the historical data. In your application, it would just read the current weight at constant intervals at each iteration.)

Here's a quick example.
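For readers without LabVIEW, the same idea can be sketched in Python. This is only an illustration, not the attached VI: the function name `pt_by_pt_slope` and the use of NumPy's `polyfit` are assumptions made for the sketch, while the default buffer size of 3000 points comes from the post above.

```python
from collections import deque

import numpy as np


def pt_by_pt_slope(samples, buffer_size=3000):
    """Yield one slope estimate per incoming reading.

    The slope is the least-squares linear fit over the most recent
    `buffer_size` readings, in units of "reading units per sample".
    (Hypothetical helper; not the LabVIEW pt-by-pt VI itself.)
    """
    history = deque(maxlen=buffer_size)  # oldest points drop out automatically
    for value in samples:
        history.append(value)
        if len(history) < 2:
            # A line cannot be fitted through a single point.
            yield float("nan")
            continue
        x = np.arange(len(history))
        y = np.asarray(history, dtype=float)
        slope, _intercept = np.polyfit(x, y, 1)
        yield slope


if __name__ == "__main__":
    # Synthetic, noise-free example: weight drops 0.5 units per sample.
    weights = [100 - 0.5 * i for i in range(50)]
    slopes = list(pt_by_pt_slope(weights, buffer_size=10))
    print(slopes[-1])
```

In a live application the loop body would run once per scale reading at a constant interval, so the slope per sample can be converted to a rate by dividing by that interval.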

06-20-2009 05:24 PM

@ altenbach

(Interestingly enough, the CSV file format is tab delimited by default in OpenOffice. While technically we should be using a DSV extension for such things, CSV has become the de-facto extension for any delimiter separated value file.)

This is really great. It only takes your software about 2 minutes to give a reasonable rate, while I was having trouble beating 10. Your rate does oscillate by about 10%, though. I need to mix my chemicals with an accuracy of about 1%. That's the tradeoff, of course, between using more or fewer points for the fit.

So here are the points I'm pondering:

- How to display the rate such that the user does not take it for what it's not (the instantaneous rate of the pump)

- How to filter out noise such as that seen in the second example data file (Repeat after me: "The chemical tank is not a table!")

I'll see if I can run this live on Tuesday. It's a good deal better than what I've been using. We'll see how that goes.

06-20-2009 06:40 PM

IMSargon wrote:

> (Interestingly enough, the CSV file format is tab delimited by default in OpenOffice. While technically we should be using a DSV extension for such things, CSV has become the de-facto extension for any delimiter separated value file.)

I think the de-facto standard is tab delimited (Excel text export, LabVIEW's Write/Read Spreadsheet File, Spreadsheet String To Array, etc.). If you call a tab-delimited file *.csv, Excel does not open it correctly because it expects commas. From a global viewpoint, comma delimited is probably one of the worst choices, simply because some countries in Europe use the comma as the decimal separator, so a CSV would chop the data up beyond recognition. 😉 So if you ever think your company will expand and sell overseas, don't do CSV! 😄

IMSargon wrote:

> This is really great. It only takes your software about 2 minutes to give a reasonable rate, while I was having trouble beating 10. Your rate does oscillate by about 10%, though. I need to mix my chemicals with an accuracy of about 1%. That's the tradeoff, of course, between using more or fewer points for the fit.

I think the oscillation is real. Look at the data: There are areas that stick much longer to one value than others.

In any case, there is no way to get better info out of your raw data. You have to work with what you have. If the slope is relatively constant between experiments, you could incorporate that knowledge somehow. Right now, there is no way to tell the slope in the early data if all readings are the same.

If your chemical reaction blows up if you're off by 1%, you simply cannot do it this way.

Maybe you could place a massflow controller on the drain or measure hydrostatic pressure at the bottom of the tank for more accurate data?

Instead of a single indicator, you could display it on a chart.

To eliminate the "glitch" in the second example, you need to incorporate knowledge (e.g. "the weight can only go down") and filter accordingly. This is tricky. What actually happened there? Did some hippo take a swim in the tank?
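One very simple way to encode "the weight can only go down" is a running minimum, sketched below in Python. The function name `clamp_non_increasing` is hypothetical, and this is only one possible reading of "filter accordingly", not a method confirmed anywhere in the thread.

```python
import numpy as np


def clamp_non_increasing(weights):
    """Return the running minimum of the readings.

    Any reading higher than the lowest value seen so far is replaced by
    that lowest value, so the output never increases.
    (Hypothetical helper for illustration.)
    """
    return np.minimum.accumulate(np.asarray(weights, dtype=float))


if __name__ == "__main__":
    # The 12 and the 11 stand in for upward glitches in the tank weight.
    readings = [10, 9, 12, 9, 8, 11, 7]
    print(clamp_non_increasing(readings))
```

The catch, and part of why this is tricky, is that a running minimum only rejects upward glitches. A spurious low reading would be latched until the real weight drops below it, so some additional sanity check on downward jumps would probably be needed.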

06-20-2009 08:12 PM - edited 06-20-2009 08:21 PM

To see if the noise is real, I generated some perfect data, where the mass decreases by exactly 1 kg every 112.5 seconds. The results are below. (I also multiplied the slope by -3200 to give me the feed rate in kg/h, and autoscaled Y.)

The shape of the oscillation closely resembles that in our previous example data, apart from the different frequency.
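The experiment can be reproduced roughly as follows in Python. The sample interval of 1.125 s is an assumption, chosen because it gives 3200 samples per hour and so matches the -3200 factor mentioned above. The 250-sample window and the function names are also hypothetical, picked only to make the ripple easy to see.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

STEP_PERIOD_S = 112.5       # from the post: 1 kg lost every 112.5 s
SAMPLE_INTERVAL_S = 1.125   # ASSUMPTION: not stated in the thread
SAMPLES_PER_HOUR = 3600.0 / SAMPLE_INTERVAL_S  # 3200 under that assumption


def staircase_mass(n_samples, start_kg=500.0):
    """Ideal quantized data: the mass drops by exactly 1 kg per step."""
    samples_per_step = round(STEP_PERIOD_S / SAMPLE_INTERVAL_S)
    return start_kg - (np.arange(n_samples) // samples_per_step)


def fed_rate_kg_per_h(mass, window):
    """Sliding least-squares slope, converted to a positive feed rate."""
    x = np.arange(window) - (window - 1) / 2.0   # centred x, so sum(x) == 0
    windows = sliding_window_view(mass, window)  # one row per fit window
    slope_per_sample = windows @ x / (x @ x)
    return -slope_per_sample * SAMPLES_PER_HOUR


if __name__ == "__main__":
    rates = fed_rate_kg_per_h(staircase_mass(2249), window=250)
    print("mean rate :", rates.mean())
    print("min / max :", rates.min(), rates.max())
```

Even though the underlying rate is perfectly constant, the fitted rate wobbles around it as the window slides across the steps, which suggests the oscillation comes from the 1 kg quantization rather than from the pump.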

Edit: I had someone take a look at it. It sounds like what I need to do is run the input data through a lowpass filter. I'll see how that works out.

With just the feed rates, you're right, there just isn't enough information. With these improvements so far, though, and coupled with the other inputs from the process (mixer mass, etc.), I'm getting closer to that goal than I had previously thought possible. You know, we've been trying to convince my boss to get a mass flow meter or a hydrostatic pressure gauge for a year now. Oh well.

"The weight can only go down" is exactly what I'm planning on doing. No time this weekend, but maybe Monday I'll put everything together. I'm not sure exactly what caused that glitch, but maybe I should test a sample for evidence of dissolved hippo 😛

06-20-2009 08:37 PM

IMSargon wrote:

> To see if the noise is real, I generated some perfect data, where the mass decreases by 1 kg exactly every 112.5 seconds. The results are below. (I also multiplied the slope by -3200 to give me fed Kg/h and autoscaled Y)
>
> The shape of the oscillation does closely resemble that in our previous example data, besides the different frequency.

This is similar to "spectral leakage", which arises because the history buffer length is not a multiple of the step length.

How is the weight measured? What kind of hardware is this? Since the problem is due to limited digital resolution, I don't think a direct lowpass filter would help.

Another option could be "dithering". Simply add random noise corresponding to about the step size followed by lowpass filtering?
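A minimal Python sketch of that suggestion, for anyone who wants to try it: uniform noise of about one step is added to each reading, followed by a plain moving average as the lowpass stage. The function name, the moving-average filter, and the window length are all assumptions, and whether this actually improves the slope estimate is exactly what is being asked here. Note that in classical dithering the noise is added before the quantizer, whereas the scale readings have already been quantized.

```python
import numpy as np


def dither_then_smooth(readings, step=1.0, window=200, seed=None):
    """Add +/- half a step of uniform noise, then apply a moving average.

    `step` is the scale increment and `window` the moving-average length
    in samples. Both defaults are illustrative guesses, not values taken
    from the thread. The output is shorter than the input by window - 1.
    """
    rng = np.random.default_rng(seed)
    readings = np.asarray(readings, dtype=float)
    noise = rng.uniform(-step / 2.0, step / 2.0, size=readings.shape)
    kernel = np.ones(window) / window            # simple lowpass (boxcar)
    return np.convolve(readings + noise, kernel, mode="valid")


if __name__ == "__main__":
    # Three flat steps of 100 samples each, as a stand-in for scale data.
    stepped = np.repeat([10.0, 9.0, 8.0], 100)
    smoothed = dither_then_smooth(stepped, window=50, seed=0)
    print(len(smoothed), smoothed[:3])
```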

06-20-2009 09:12 PM

The scale has an increment of 1 lb, so I do have a bit better resolution than I have shown in my example data, but ultimately the problem is the same.

The value comes in as ASCII over RS-232.

I tried toying with the point-by-point Butterworth filter. It was successful at high sampling frequencies, but then it took a long time to get up to the real data.

> Simply add random noise corresponding to about the step size followed by lowpass filtering

Really? That would be a brilliant solution if it works. I tried a method in which 1 is randomly added to or subtracted from each data point before passing it through a Butterworth filter. In my imperfect implementation, it makes things worse. Does it work for you?

06-20-2009 09:22 PM
