Program lost part of functions after 1.5 month?

01-30-2012 11:00 AM

A couple of clarifications.

A shift register passes the output of one loop iteration back to the input of the next. If, for instance, that output is an error from a DAQmx Read, the error propagates through the loop and the next DAQmx Read sees error in = T. Without a shift register, every iteration of the loop starts with the initial value of error in, and any errors inside the loop are essentially ignored. (Ignoring errors is not ideal.)
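As a rough text illustration of the difference (LabVIEW diagrams cannot be pasted as text, so this is a Python stand-in, not LabVIEW or DAQmx code): `daq_read`, `run_loop`, and the `Error` type below are hypothetical names that only mimic the usual error in / error out convention.

```python
from typing import List, Optional

# Hypothetical stand-in for a LabVIEW error cluster: None means "no error".
Error = Optional[str]


def daq_read(error_in: Error, fail: bool) -> Error:
    """Mimic a VI with error in / error out terminals."""
    if error_in is not None:
        return error_in  # an upstream error is passed straight through
    return "read failed" if fail else None


def run_loop(failures: List[bool], use_shift_register: bool) -> List[Error]:
    """Return what each iteration's read saw on its error-in terminal."""
    seen: List[Error] = []
    initial: Error = None   # value wired to the loop from outside
    carried: Error = initial
    for fail in failures:
        # Shift register: use last iteration's error out.
        # Tunnel: every iteration starts again from the initial value.
        error_in = carried if use_shift_register else initial
        seen.append(error_in)
        carried = daq_read(error_in, fail)
    return seen


failures = [False, True, False, False]  # the second read fails
with_sr = run_loop(failures, use_shift_register=True)
with_tunnel = run_loop(failures, use_shift_register=False)
print(with_sr)      # later iterations see the earlier error
print(with_tunnel)  # every iteration sees "no error"
```

With the shift register, the third and fourth iterations see the failure from the second; with the tunnel, no iteration ever sees it.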

DAQmx reads and buffers: essentially there are two flavors, "nChan continuous" and "nChan Nsample on demand".

With "nChan Nsample on demand", acquisition starts when you "demand" the samples. A Read actually starts the acquisition, collects the number of samples requested, and returns the samples (or times out). After all samples are read, no more acquisitions take place.

With "nChan continuous", once started, the task takes samples at a regular rate and puts them in the transfer buffer, where the samples sit until they are read from the buffer. They are read out oldest to newest, so the samples returned from your read are always the oldest available. If you don't read as fast as the samples are acquired, the buffer gets full and starts overwriting old data that has not been read. When you then attempt to read that data, DAQmx throws an error telling you that the data was overwritten.

So, if you have a continuous task and something starts slowing down the loop that reads the buffer (like data files getting LARGE), you may reach a condition where the read can no longer keep up with the sample rate. Enough of that and you get an overrun.
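The overrun condition can be sketched with a toy model. This is a Python illustration only, not how DAQmx implements its buffer; `ContinuousBuffer`, `OverrunError`, `iterations_until_overrun`, and all the numbers are assumptions chosen for the example.

```python
from typing import Optional


class OverrunError(Exception):
    """Raised when unread samples were overwritten before being read."""


class ContinuousBuffer:
    """Toy fixed-size acquisition buffer (not the DAQmx implementation)."""

    def __init__(self, size: int) -> None:
        self.size = size
        self.written = 0   # total samples acquired so far
        self.read_pos = 0  # total samples read so far

    def acquire(self, n: int) -> None:
        self.written += n

    def available(self) -> int:
        return self.written - self.read_pos

    def read(self, n: int) -> range:
        unread = self.available()
        if unread > self.size:
            # More unread samples than the buffer can hold: some were lost.
            raise OverrunError(f"{unread - self.size} unread samples overwritten")
        n = min(n, unread)
        start = self.read_pos
        self.read_pos += n
        return range(start, start + n)  # sample indices, oldest first


def iterations_until_overrun(acquired_per_iteration: int,
                             samples_per_read: int,
                             buffer_size: int,
                             max_iterations: int = 1000) -> Optional[int]:
    """Return the loop iteration on which the read fails, or None if it never does."""
    buf = ContinuousBuffer(buffer_size)
    for i in range(1, max_iterations + 1):
        buf.acquire(acquired_per_iteration)
        try:
            buf.read(samples_per_read)
        except OverrunError:
            return i
    return None


# Loop keeps up: 100 samples arrive per iteration and 100 are read.
keeps_up = iterations_until_overrun(100, 100, buffer_size=1000)
# Loop has slowed down: 150 samples arrive per iteration, still only 100 read.
falls_behind = iterations_until_overrun(150, 100, buffer_size=1000)
print(keeps_up, falls_behind)
```

In the second case the backlog grows by 50 samples per iteration until it no longer fits in the buffer, which is the "enough of that" condition described above.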

"Should be" isn't "Is" -Jay

01-31-2012 03:08 PM

Jeff,

Sorry for the late reply; I was on a business trip yesterday.

Thanks for the detailed explanation. In my case, the while loop runs every 5 seconds, and I read only 10 points at 1 kHz. This should be sufficient in terms of buffer size, reading speed, etc., right?

Anyhow, like I said, I am just throwing these out to see if there are any other potential issues. Thanks again for your help!

Rgds,

Harry

03-28-2012 11:22 AM

It happened again...

After the last implementation of the code, which uses CCI Delay.vi, the system lost I/O once again after about 46 days... This is the 3rd time! Let me describe the symptoms again:

1. Day 1: The program starts running; I/O works normally.

2. 44, 45, or 46 days later: The system loses I/O all of a sudden. When this happens, Windows is still running and my code is still running OK (the clock is going, tabs can be toggled, etc.). The only thing lost is the I/O. The values all display "NA" instead of showing current values like 15 mA or so.

3. It looks very much like what happens if I unplug the USB cable from the dock, which also shows all "NA" while everything else still looks normal. Is it possible that Windows loses the USB connection after a certain period of time? A memory leak in the USB driver?

We checked the program's memory usage, and over 30 days it stays pretty stable at ~40 MB. So it does not seem to be a memory leak in the code.

It's very frustrating because it's hard to troubleshoot (we have to fly 2000+ miles to get to the site) and it takes 40+ days to happen.

Any suggestions? Please?

Thanks in advance....

Harry

03-28-2012 02:26 PM

Harry,

You get a vacation every 40 days and you are complaining?

Intermittent problems that take a long time to appear can be extremely frustrating.

Can you post the VI and all the custom subVIs? It is very difficult to troubleshoot from an image. As has been discussed above, the likely suspects are anything that uses integers and tries to count or increment past the maximum value.
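For a sense of scale, here is a small Python sketch of that kind of overflow, assuming a hypothetical unsigned 32-bit millisecond counter. Such a counter wraps after 2^32 ms, roughly 49.7 days, which is in the neighborhood of the 44 to 46 days reported but not the same, and nothing in this thread establishes that such a counter is the cause. The function names are made up for the example.

```python
WRAP = 2**32  # an unsigned 32-bit counter holds 0 .. 2**32 - 1


def wrap_period_days(tick_ms: int = 1) -> float:
    """Days until a u32 counter that advances once per tick wraps to 0."""
    return WRAP * tick_ms / (1000 * 60 * 60 * 24)


def elapsed_naive(start: int, now: int) -> int:
    """Plain subtraction: goes badly wrong if the counter wrapped in between."""
    return now - start


def elapsed_wrap_safe(start: int, now: int) -> int:
    """Modular subtraction: correct across a single wrap of the counter."""
    return (now - start) % WRAP


start = WRAP - 500            # timestamp taken 500 ms before the wrap
now = (start + 2000) % WRAP   # 2000 ms later, the counter has wrapped

print(round(wrap_period_days(), 2))   # about 49.71
print(elapsed_naive(start, now))      # large negative number
print(elapsed_wrap_safe(start, now))  # 2000
```

Any comparison such as "has the timeout elapsed yet?" built on the naive difference can stop behaving sensibly once the counter wraps.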

Do you have any charts (not graphs) on the front panel? I have never looked at chart behavior over long times, but it is conceivable that the internal code in a chart could do something funny at that point, as while loops or Wait (ms) can.

Lynn

03-28-2012 04:16 PM

Lynn,

Thanks for your reply. I have attached a zip file that contains everything. Main.vi is the top-level VI, which calls all the other subVIs.

Yes, I do have a chart on the display, but it was disabled when the issue happened. Actually, most of the functions, like data logging and display, were disabled.

I would really appreciate it if you could take some time to look at my code. Thanks again!

Rgds

Harry

03-28-2012 05:42 PM

Harry, I was afraid this would come back and bite us again. I was not satisfied with the previous explanation.

What do you mean by "Only thing lost is the I/Os. They all display "NA" instead of showing current values like 15mA or so"? Which indicators? Is it really NA, or do they display NaN (Not a Number)? A search through the hierarchy shows no instances of the text "NA", so something internal to LabVIEW must be updating the text.

Thanks for sharing the project. There are a couple of things I would change.

You run the error cluster through a tunnel. This means each iteration starts with no error, and at the end of the iteration no error checking is performed. I'd definitely get some error handling into the project.

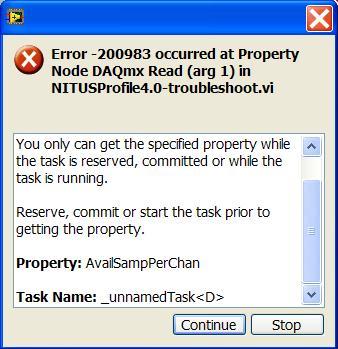

One prime candidate I see is a buffer overflow error in the DAQmx Read task. Looking at the task timing, you create a task to take 100 samples at 1 kHz, then enter a loop where you read 10 samples with Autostart = true. Essentially, you leave 90 samples in the buffer. Then you come around some time later, after the task has stopped, and start it again. Again you read only 10 samples (actually the second 10 of the first acquisition) and leave another 90 in the buffer. This is bad. (Quick test: drop a DAQmx Read p-node and monitor the property "Status.AvailableSamplesPerChannel".) If this grows with each iteration, the fix is to change the 100 samples per channel to 10 in Create CurrIn.vi.

"Should be" isn't "Is" -Jay

03-28-2012 07:53 PM

Harry,

Jeff gave you some good advice.

I wonder about your file operations. Since the files continue to grow, the OS may move or fragment them from time to time. It looks like you have a pretty good idea of how much data you want to save. Try creating the file and writing more than the maximum amount of dummy data to it before entering the loop. That should cause the OS to create the file and allocate more than enough space to it. Before entering the loop, reset the file position to the beginning. Inside the loop, overwrite the dummy data with the real data as it is acquired; you may need to track the file position in a shift register.
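The preallocate-then-overwrite idea might look roughly like this in a text language. This is a Python sketch under assumptions, not the LabVIEW file I/O: the fixed-size binary record of 32 doubles, the file name, and the helpers `preallocate` and `write_record` are all made up for illustration, and whether the OS really reserves unfragmented space this way depends on the file system.

```python
import os
import struct
import tempfile

# Assumed record layout: one log entry = 32 little-endian float64 values.
RECORD = struct.Struct("<32d")


def preallocate(path: str, max_records: int) -> None:
    """Fill the file with dummy records so it has its full size up front."""
    filler = RECORD.pack(*([0.0] * 32))
    with open(path, "wb") as f:
        for _ in range(max_records):
            f.write(filler)


def write_record(f, position: int, values) -> int:
    """Overwrite one dummy record at `position`; return the next position."""
    f.seek(position)
    f.write(RECORD.pack(*values))
    return position + RECORD.size


path = os.path.join(tempfile.mkdtemp(), "log.bin")  # hypothetical log file
preallocate(path, max_records=100)
size_before = os.path.getsize(path)

position = 0  # plays the role of the file position kept in a shift register
with open(path, "r+b") as f:  # "r+b" updates in place without truncating
    for i in range(3):
        position = write_record(f, position, [float(i)] * 32)

size_after = os.path.getsize(path)
print(size_before, size_after, position)
```

The file size is the same before and after the loop; only the contents of the first three records change.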

You have a lot of code which looks more like refugees from a text based language than LabVIEW code, but I do not think that it is causing your problem.

If you replaced the Analog Read with the 2D array version you would not need the Split Signals and the multiple Dynamic Data converters. Index Array would do what you need. It could be moved into RawCurr.vi, cleaning up the diagram considerably.

Your use of Formula Nodes seems quite strange. In DenRecon.vi, Band_End and Band_Start are not defined in the node, so they always produce default values (0). Actually, I was surprised that the compiler did not throw an error. Everything else in there generates constants. Why not just use native LV constants, outside the loop?

Lynn

03-29-2012 10:16 AM

Jeff,

Thank you first for taking the time to read my code. Here are my answers to your comments and questions.

1. Sorry about the typo. Yes, it is "NaN" in the indicators. If you look at the bottom part of the "Measurement" tab, where the "Raw Current", "Raw Density" and "Density" columns are, those are the values based on the current input I read from the analog card (with some calculation). The "Raw Current" is exactly what I read from the card.

2. I admit that I am not clear on the error handling. I thought putting a "simple error handler.vi" at the end of the loop was the way to do it. Would you mind showing me how I should do it correctly?

3. This seems to be another misunderstanding of mine. I thought the buffer would get cleared each cycle and have new data pulled in. I did the test you suggested by dropping the p-node after the DAQmx Read.vi. I saw the p-node indicator dropping from 90, 80, 70... to 10, and then an error popped up. What did I do wrong?

Another point: one of my previous versions of the code used the default number of samples (I connected -1 to the "number of samples per channel" input of DAQmx Read), and I still got the 45-day problem. At that time I thought that by reading fewer samples I might save "something". Pretty stupid, huh? 🙂 Anyhow, I'll change Create CurrIn.vi to 10 points.

Sorry to throw out so many things. I guess I have just lost direction...

Rgds,

Harry

03-29-2012 10:53 AM

Lynn,

Thanks to you and Jeff for trying to help me out! To answer some of your comments:

1. I think you are surely right about the file operations. The .txt file I save to the hard drive is a few MB in size, I believe, at the default log rate of every 10 minutes (~52,000 entries per year, with 32 numbers per entry). But the sad part is, we never turned on the data logging function at all. So it seems to me it should not cause this 45-day issue.

2. Yes, I am a LabVIEW newbie. I still remember the first time I showed code in this forum, when I had literally migrated text-based code to LabVIEW. My code was humongous, with local variables and sequence structures everywhere... I got beaten up pretty badly by people here... lol.

3. The Formula Node I put here is actually just a frame and does not do anything. That part of the code was created by another researcher. Basically, some calculations (involving multiple loops and lots of "if, else if" sorts of things) are done in the Formula Node, and I just need to worry about the inputs and outputs of the node. That's why you saw a dummy Formula Node here. Initially we thought that doing those calculations with LabVIEW's dataflow programming would be too complicated, so we chose to use a Formula Node. Will the execution of the Formula Node cause issues? LabVIEW's Formula Node is kind of hard to debug, and I don't know how it uses memory. Can you give me some suggestions?

4. I am not very familiar with (or am a little intimidated by) 2D arrays, especially dealing with Index Array. Do you think it's a must? If so, I guess I could force myself to learn some new things. It's just that I am afraid of introducing more variables and uncertainty into my code, considering I'm still learning...

I am kind of lost at the moment. I want to blame the PC or Windows we use at the site, which never gets any Windows updates because there is no internet connection. I would think Windows might lose the USB driver somehow so that it cannot recognize the cDAQ deck, but that seems to be just an uneducated guess.

Any suggestions? Thanks in advance...

Rgds,

Harry

03-29-2012 12:19 PM

Harry,

1. If you are not logging data, then this should not be the problem.

2. You are making the effort to learn. That counts for a lot.

3. Formula nodes are often somewhat slower than native LV code, but they should not cause your 45 day problem.

4. No, you do not need to use a 2D array. I personally dislike the dynamic data type because you really do not know what is going on in there and the conversions to other datatypes are not clearly defined.

I do not expect you to change a lot of things now. That would just introduce other problems while you are chasing the 45-day issue. I was just making some suggestions to help you learn how to use LV more effectively in the future.

Your comment about Windows and USB drivers may be relevant. If something overflows a 32-bit counter in the OS and shuts down the USB port, it could cause this. I do not use Windows much, so I have no idea how likely this is. I checked your RawCurr.vi. With empty input arrays (which is what you would get with a read error), the outputs are NaN.

As Jeff suggested, look at your errors. Change the tunnels to shift registers and put error out indicators on the wires inside the loop. I suspect that you will see an error when the NaNs start showing up.

Lynn