PCIe-7852 Analog In Timing

02-25-2010 02:33 PM

I have a PCIe-7852 and am trying to figure out the precise timing associated with the analog input channel. On other boards, like my PCI-6259, I am able to route a signal called AI_Convert to an external pin and look on my oscilloscope to see the signal being sampled relative to AI_Convert.

Rick

MIT Kavli Institute for Astrophysics and Space Research

02-26-2010 04:02 PM

Timing on FPGA cards is handled a little differently than on a standard M-Series card. This R-Series card has a 40 MHz clock that the user can modify to whatever rate they need for their application. This is done simply by multiplying or dividing the clock to whatever value is necessary.

The PCIe-7852R has 8 analog inputs that can sample at rates up to 750 kHz. This is a limitation of the ADC, not of the FPGA itself.

Mike S

NI AE

02-26-2010 04:17 PM

My question has to do with the timing of the ADC's sample and hold, not really setting up the sampling frequency.

I am looking for a way of telling exactly when the ADC clamps the analog signal relative to the DIO outputs that are controlling the sensor I am looking at. As I mentioned, on the M-Series I can just send AI_Convert to an output and look at its timing on a scope.

The spec for the PCIe-7852 says that the AI is rated at 750 kHz. So, when I do an analog input measurement and am twiddling DIO control signals to the sensor using the 40 MHz clock, I need to understand when the S&H on the signal occurred.

MIT Kavli Institute for Astrophysics and Space Research

03-01-2010 04:20 PM

Rick-

R-Series cards work a little differently than you are used to. Typically, these events are based on “Ticks” as opposed to actual time measurements. This is due to the fact that the user has control over the clock rate and the card simply requires “Tick iterations” not amounts of time (some tasks can be faster or slower).

As we have said, the clock rate on your PCIe-7852R card is 40 MHz. For the ADC, the FPGA requires 3 ticks. Therefore, running your clock at 40 MHz, it will convert in 75 nanoseconds (3 × 25 ns). However, the maximum sample rate for this card is 750 kS/s, which translates to roughly 1,333 nanoseconds per sample. The reason for this has to do with settling, conversions, buffering, and all the other requirements associated with taking an analog measurement.

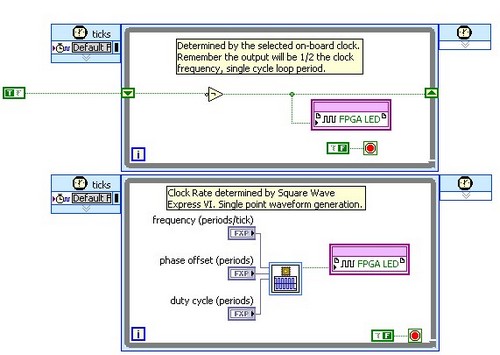

Two methods of viewing this clock with your R-Series card are shown in the attached image. The top example uses the actual clock signal, while the bottom one generates a signal in parallel. Because this is an FPGA, they run exactly the same.

Does this make sense?

Mike S

NI AE

03-01-2010 04:55 PM

So, for a Single Cycle Loop running at 40 MHz that concurrently reads an analog input channel and writes a DIO line, i.e.

Tick 0 (T=0): Set DIO0 low and read AI0

Tick 1 (T+25ns): DIO0 is still low, and AI0 is still converting

Tick 2 (T+50ns): DIO0 is still low, and AI0 is still converting

Tick 3 (T+75ns): DIO0 is still low, and AI0 is done converting

What I need to know is: relative to DIO0 going low, when does the ADC do its sample and hold, and how long does the signal need to be stable? Is the S&H occurring concurrently with the falling edge of DIO0, or a tick later?

I understand that at 750 kHz, I can only read that analog channel once every 1/750 kHz ≈ 1333 ns, but my question has to do with the fine timing in those 3 ticks when the conversion is occurring.
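The arithmetic in this post can be checked with a short script. This is only a sketch: the 40 MHz clock and 750 kHz maximum rate are the figures quoted in the thread, and the function names are made up for illustration; none of this is NI API.

```python
# Tick/time arithmetic for the figures quoted in this thread.
# All names below are hypothetical helpers, not part of any NI library.

FPGA_CLOCK_HZ = 40e6    # R-Series FPGA clock, as stated in the thread
MAX_AI_RATE_HZ = 750e3  # PCIe-7852R maximum AI rate, as stated in the thread


def tick_period_ns(clock_hz=FPGA_CLOCK_HZ):
    """Duration of one FPGA clock tick in nanoseconds."""
    return 1e9 / clock_hz


def tick_time_ns(tick, clock_hz=FPGA_CLOCK_HZ):
    """Time offset (ns) of the given tick, counted from tick 0."""
    return tick * tick_period_ns(clock_hz)


def sample_period_ns(rate_hz=MAX_AI_RATE_HZ):
    """Minimum time between analog samples in nanoseconds."""
    return 1e9 / rate_hz


def ticks_per_sample(clock_hz=FPGA_CLOCK_HZ, rate_hz=MAX_AI_RATE_HZ):
    """How many FPGA ticks elapse during one sample period."""
    return clock_hz / rate_hz


# Print the tick timeline used in the post (ticks 0 through 3).
for tick in range(4):
    print(f"Tick {tick}: T+{tick_time_ns(tick):.0f} ns")

print(f"Sample period: {sample_period_ns():.1f} ns")
print(f"Ticks per sample: {ticks_per_sample():.1f}")
```

At these rates one sample period spans roughly 53 ticks of the 40 MHz clock, which is why the position of the sample-and-hold inside that window matters for DIO sequencing.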

MIT Kavli Institute for Astrophysics and Space Research

03-02-2010 07:08 PM

After further investigation into this issue, I believe I gave you incorrect data. I was inaccurate in saying an Analog Input requires 3 Ticks. I apologize for this misinformation.

The FPGA I/O Nodes only run as quickly as the RIO card is able to acquire samples. As we have said, this particular card will run at 750 kHz at its maximum speed. For example, if you simply put an I/O Node in a while loop with no timing, it will run at 750 kHz. During this time, everything that is required to take a sample is handled in this node. Therefore, you will be able to run I/O Nodes back to back without issue. This includes the ADC, which is the bottleneck for the reading itself.

Does your question pertain to the specifics of the timing for the ADC? This will be specific to the card. If you would like, I can get with our R&D department to find out exactly the timing associated with the ADC on the PCIe-7852R.

Exactly what is your application where it is necessary to know the inner workings of the ADC? How does this information affect your project? Our R&D team will want to know this information before releasing these specifics.

Mike S

NI AE

03-03-2010 07:59 AM

I am controlling a detector using various FPGA-controlled DIO signals from the card, and the state of the detector's output is dependent on those signals. When I toggle the last control DIO line that is part of the control sequence and issue an FPGA I/O Item read of AI0, there is no way for me to know when the actual sample and hold occurred (I am aware that a successive-approximation ADC then needs some amount of time to process the result after the input has been sampled). So I know when the DIO line was asserted, as I can see that on the oscilloscope, but have no way of knowing when the S&H actually occurred.

We seem to continue to be on different pages regarding my question, and I apologize if I am not being clear.

Any information about the ADC and its timing relative to the FPGA I/O Item read being executed would be appreciated.

MIT Kavli Institute for Astrophysics and Space Research

03-04-2010 05:15 PM

I have a much better understanding of your issue. Thank you for the clarification. Unfortunately, I don’t know the answer to your question. I have escalated this issue to our R&D department and will post back to this forum with their response. Thank you for your patience.

Mike S

NI AE

03-05-2010 04:52 PM

As I mentioned, the FPGA I/O Node speed limitations are directly related to the ADC. The exact timing, however, is more complicated. Once the FPGA I/O Node is called, it generates a convert clock and within nanoseconds it calls into the ADC. The specs on the ADC (just as with all ADCs) are defined very loosely. Most specs for ADCs give a minimum amount of time as opposed to a range and/or maximum. This is because many conditions affect the amount of time, including operating temperature, age, range of input, etc. With this in mind, the exact amount of time can vary from card to card. For this particular card, our R&D department would expect it to be approximately 300 nanoseconds from the time the FPGA I/O Node is called until the sample is taken. From there the conversion begins and the data is communicated to the FPGA. For more accurate timing specs, I would suggest testing your particular ADC directly, since each will be a little different.
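For planning DIO sequencing around that estimate, the delay can be expressed in FPGA ticks. The sketch below assumes the approximate 300 ns figure quoted above and a 40 MHz clock; the helper names are hypothetical, and the real delay should be measured on the actual card as suggested.

```python
import math

# Hypothetical helpers for reasoning about the quoted delay; not NI API.
FPGA_CLOCK_HZ = 40e6          # FPGA clock rate stated earlier in the thread
EST_SAMPLE_DELAY_NS = 300.0   # approximate figure from this post; varies per card


def delay_in_ticks(delay_ns=EST_SAMPLE_DELAY_NS, clock_hz=FPGA_CLOCK_HZ):
    """Express a delay in nanoseconds as a number of FPGA clock ticks."""
    return delay_ns * clock_hz / 1e9


def read_call_tick(target_sample_ns, delay_ns=EST_SAMPLE_DELAY_NS,
                   clock_hz=FPGA_CLOCK_HZ):
    """Earliest tick, relative to a DIO edge at tick 0, at which to call the
    I/O Node so the sample lands at or after target_sample_ns.

    A negative result means the node would have to be called before the edge.
    """
    ticks = (target_sample_ns - delay_ns) * clock_hz / 1e9
    # Small epsilon guards against floating-point fuzz just above an integer.
    return math.ceil(ticks - 1e-9)


print(f"Estimated delay: {delay_in_ticks():.0f} ticks")
print(f"Call tick for a sample 500 ns after the edge: {read_call_tick(500.0)}")
```

Under these assumptions, a read issued on the same tick as the DIO edge would sample about 12 ticks after the edge, so the detector output would need to be valid by then and remain stable through the acquisition.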

Mike S

NI AE