Interpolate Gaps in a Large Array

Solved! 07-25-2020 07:13 PM

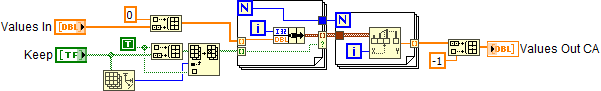

I have a very large array of doubles, and some conditions (that boil down to a boolean per element) that determine whether each element should be kept or discarded; the discarded elements are to be replaced by interpolated values. Because the array is very large, I want the code to be very efficient. Attached is what I have come up with. Can anyone come up with something more efficient?

07-25-2020 11:02 PM

What you have works. Why do you think it would be inefficient?

It looks like you keep one copy of the array and do Replace Array Subset to replace elements.

It looks like you advance through the array just one time.

Both of those things make it seem efficient to me.
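The two properties named above (a single buffer updated in place, and one forward pass) can be sketched in text form. This is not the posted VI, which exists only as a screenshot; it is a minimal Python analog under stated assumptions: interpolation is linear, gaps before the first kept element take the first kept value, and gaps after the last kept element take the last kept value. The name `fill_gaps_in_place` is hypothetical.

```python
def fill_gaps_in_place(values, keep):
    """Overwrite discarded elements of `values` in one forward pass.

    `keep[i]` is True when `values[i]` is trusted. Assumed edge policy:
    leading and trailing gaps hold the nearest kept value.
    """
    if len(values) != len(keep):
        raise ValueError("values and keep must have the same length")

    last = -1  # index of the most recent kept element, -1 if none seen yet
    for i, ok in enumerate(keep):
        if not ok:
            continue
        if last == -1:
            # Leading gap: no left neighbour, so hold the first kept value.
            for j in range(i):
                values[j] = values[i]
        elif i - last > 1:
            # Interior gap: linear ramp between the two bracketing kept values.
            step = (values[i] - values[last]) / (i - last)
            for j in range(last + 1, i):
                values[j] = values[last] + step * (j - last)
        last = i

    if last != -1:
        # Trailing gap: hold the last kept value.
        for j in range(last + 1, len(values)):
            values[j] = values[last]
    return values
```

Each element is read at most once and written at most once, and no second array is built, which mirrors the reasoning for why the original approach should already be efficient.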

07-26-2020 06:51 AM

@RavensFan wrote:

> What you have works. Why do you think it would be inefficient?
>
> It looks like you keep one copy of the array and do Replace Array Subset to replace elements.
>
> It looks like you advance through the array just one time.
>
> Both of those things make it seem efficient to me.

It's not that I thought that it was necessarily inefficient; but often people here find clever ways to make things better.

07-26-2020 09:02 AM

If there is a way to make it better, Altenbach will find it! 😄

07-26-2020 02:10 PM - edited 07-26-2020 02:28 PM

@RavensFan wrote:

> If there is a way to make it better, Altenbach will find it! 😄

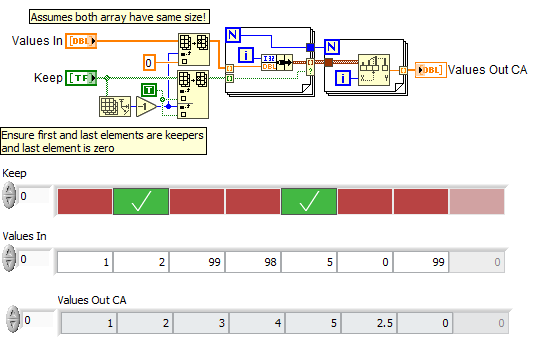

Maybe not "better", but certainly faster to program and debug (fewer places for bugs to hide! ;))

(attached VI contains both versions to compare operation)

NOTE: the results differ if the last element of the boolean array is FALSE. It is not clear what you would want in that scenario. See post below to get the same result.
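Since the block diagram is only available as an image, the following is not a transcription of it. It is a minimal Python sketch of one common two-phase formulation: first compact the kept samples into index/value lists, then evaluate a piecewise-linear interpolation at every position. The function name and the `trailing` parameter are hypothetical, and the two trailing policies shown are only assumptions meant to illustrate why results can differ when the boolean array ends in FALSE.

```python
def fill_gaps_two_phase(values, keep, trailing="hold"):
    """Return a new list with discarded elements replaced by interpolation.

    trailing="hold":        elements after the last kept one repeat its value.
    trailing="extrapolate": elements after the last kept one continue the
                            slope of the final kept segment.
    """
    if trailing not in ("hold", "extrapolate"):
        raise ValueError("trailing must be 'hold' or 'extrapolate'")

    # Phase 1: compact the kept samples into knots (intermediate lists
    # whose size grows with the fraction of TRUE elements).
    xs = [i for i, ok in enumerate(keep) if ok]
    out = list(values)
    if not xs:
        return out  # nothing to interpolate from
    ys = [values[i] for i in xs]

    # Leading gap: hold the first kept value (assumed policy).
    for j in range(xs[0]):
        out[j] = ys[0]

    # Phase 2: fill the interior of each pair of neighbouring knots.
    for k in range(len(xs) - 1):
        x0, x1 = xs[k], xs[k + 1]
        slope = (ys[k + 1] - ys[k]) / (x1 - x0)
        for j in range(x0 + 1, x1):
            out[j] = ys[k] + slope * (j - x0)

    # Trailing gap: the policy chosen here is where two otherwise
    # equivalent implementations can disagree.
    if trailing == "extrapolate" and len(xs) >= 2:
        slope = (ys[-1] - ys[-2]) / (xs[-1] - xs[-2])
    else:
        slope = 0.0
    for j in range(xs[-1] + 1, len(values)):
        out[j] = ys[-1] + slope * (j - xs[-1])
    return out
```

For example, with `values = [0.0, 9.0, 2.0, 9.0, 9.0]` and `keep = [True, False, True, False, False]`, the two policies agree on the interior element but not on the last two, so it is worth deciding explicitly which behaviour is wanted.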

07-26-2020 02:27 PM

@altenbach wrote:

> NOTE: the results differ if the last element of the boolean array is FALSE. It is not clear what you would want in that scenario.

If you want the same result even if the boolean array ends in FALSE, this might work.

07-26-2020 02:38 PM - edited 07-26-2020 03:00 PM

@paul_cardinale wrote:

> Because the array is very large, I want the code to be very efficient. Attached is what I have come up with. Can anyone come up with something more efficient?

"Very large" is a fuzzy term. Do you have some numbers? The relative efficiency of the two algorithms also depends on the TRUE probability of the boolean array. Is it sparse or mostly true? (This determines the size of the intermediary data structures in my code).

One problem in your code is the initial "prepending" of elements, which can potentially lead to new array allocations (there is an allocation dot). Try to avoid that.

07-27-2020 09:24 AM

Thank you. That's just what I was looking for.

07-27-2020 09:30 AM

@altenbach wrote:

> @paul_cardinale wrote:
>
> > Because the array is very large, I want the code to be very efficient. Attached is what I have come up with. Can anyone come up with something more efficient?
>
> "Very large" is a fuzzy term. Do you have some numbers? The relative efficiency of the two algorithms also depends on the TRUE probability of the boolean array. Is it sparse or mostly true? (This determines the size of the intermediary data structures in my code).
>
> One problem in your code is the initial "prepending" of elements, which can potentially lead to new array allocations (there is an allocation dot). Try to avoid that.

There will be a few million to several million elements. The percentage of TRUE elements in the boolean array is unpredictable, but most likely between 30% and 50%.

I should have realized that, because of the nature of the data source, the beginning elements will always be keepers, so I really don't need to prepend anything. Also, the data near the end of the array is of lesser importance (and of declining importance the farther up the array you go). Most of the upper values will be near 0, and what happens with the very last element is inconsequential.

07-27-2020 10:04 AM

@paul_cardinale wrote:

> I should have realized that, because of the nature of the data source, the beginning elements will always be keepers, so I really don't need to prepend anything. Also, the data near the end of the array is of lesser importance (and of declining importance the farther up the array you go). Most of the upper values will be near 0, and what happens with the very last element is inconsequential.

I am not sure where that -1 comes from and if it is important. Maybe it can be left out. If the first element is guaranteed to be valid and the last element asymptotically zero, you could just ensure these while keeping all arrays at the same size.
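One way to read "just ensure these" is to pin both endpoints before filling, so every gap has a kept neighbour on each side and no array ever changes size. The sketch below is a Python illustration of that reading, not the VI under discussion. The helper names `pin_endpoints` and `fill_interior_gaps` are hypothetical, and treating a discarded last element as exactly 0.0 is an assumption based on the statement that the tail values are near zero.

```python
def pin_endpoints(values, keep):
    """Force the first and last elements to count as kept, in place."""
    if not values:
        return
    keep[0] = True  # the first element is stated to always be valid
    if not keep[-1]:
        values[-1] = 0.0  # assumption: the tail has decayed to zero
        keep[-1] = True


def fill_interior_gaps(values, keep):
    """Linear fill for gaps that are bracketed by kept elements.

    Requires keep[0] and keep[-1] to be True, so there is no leading or
    trailing special case and nothing needs to be prepended or appended.
    """
    if values and not (keep[0] and keep[-1]):
        raise ValueError("call pin_endpoints first")
    last = 0
    for i in range(1, len(values)):
        if keep[i]:
            if i - last > 1:
                step = (values[i] - values[last]) / (i - last)
                for j in range(last + 1, i):
                    values[j] = values[last] + step * (j - last)
            last = i
    return values
```

With `values = [2.0, 9.0, 9.0, 9.0, 7.0]` and only the first element kept, pinning sets the last element to 0.0 and the fill produces a straight ramp from 2.0 down to 0.0.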

Still, I would recommend some benchmarks.
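A benchmark along those lines could look like the sketch below. It is a Python stand-in, not a LabVIEW measurement, so absolute timings say nothing about the VIs; it only illustrates the setup: random data, a keep mask with a chosen TRUE fraction in the 30% to 50% range mentioned above, and the best of several timed runs. All names (`make_case`, `fill_gaps`, `benchmark`) are hypothetical, and the default size is kept smaller than the "few million" elements of the real data so the script finishes quickly.

```python
import random
import timeit


def make_case(n, p_true, seed=0):
    """Random values plus a keep mask where roughly p_true of the flags are True."""
    rng = random.Random(seed)
    values = [rng.random() for _ in range(n)]
    keep = [rng.random() < p_true for _ in range(n)]
    keep[0] = keep[-1] = True  # pin the endpoints so every gap is interior
    return values, keep


def fill_gaps(values, keep):
    """In-place linear fill; assumes both endpoints are kept."""
    last = 0
    for i in range(1, len(values)):
        if keep[i]:
            if i - last > 1:
                step = (values[i] - values[last]) / (i - last)
                for j in range(last + 1, i):
                    values[j] = values[last] + step * (j - last)
            last = i
    return values


def benchmark(n=200_000, p_true=0.4, repeats=3):
    """Best wall-clock time in seconds for one fill over n elements."""
    values, keep = make_case(n, p_true)
    # Each run works on a fresh copy; the copy itself is part of the timing.
    runs = timeit.repeat(lambda: fill_gaps(list(values), keep),
                         number=1, repeat=repeats)
    return min(runs)


if __name__ == "__main__":
    for p in (0.3, 0.4, 0.5):
        print(f"TRUE fraction {p:.1f}: {benchmark(p_true=p):.3f} s")
```

Sweeping the TRUE fraction matters because it changes both the number of gaps and, for any approach that builds intermediate arrays of kept samples, the size of those arrays.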