For loop parallelism on single core

Solved! 01-25-2018 04:40 AM - edited 01-25-2018 04:47 AM

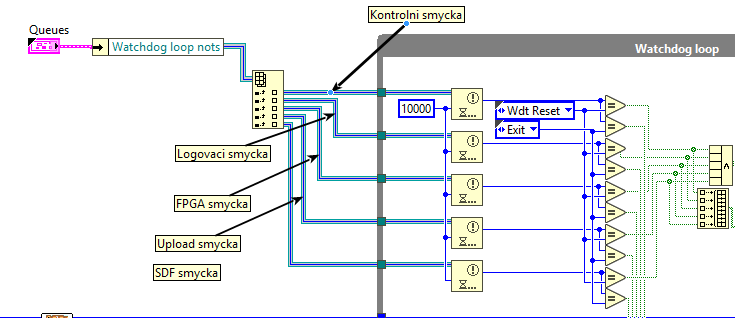

I use an array of notifiers (say, of size n). A watchdog waits on every notifier in the array with a 10-second timeout. If any of them times out, it runs a function. At the moment I have no for loop, and the notifier waits run simultaneously, so they all finish after 10 seconds at most. I would like to do the waits programmatically, so that when I add a new watchdog notifier I don't have to add a new branch of notifier waits. If I use a for loop, there is no parallelism, and it finishes after n * 10 seconds. On a dual-core machine with loop parallelism turned on, it takes n * 10 / 2 seconds. I need the waits to run in parallel on a single core. Is there any option?

Thank you.
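The timing figures in the question can be written as a small calculation. This is only a sketch in Python (LabVIEW itself is graphical), and it assumes the iterations are spread evenly over the parallel instances; the function name `worst_case_seconds` is made up for illustration.

```python
import math


def worst_case_seconds(n_notifiers: int, timeout_s: float, parallel_instances: int) -> float:
    """Worst-case total time when every wait runs to its full timeout."""
    if n_notifiers < 0 or parallel_instances < 1:
        raise ValueError("need n_notifiers >= 0 and parallel_instances >= 1")
    # Each instance performs its waits one after another, so the busiest
    # instance does ceil(n / P) full-length waits (assumes an even split).
    rounds = math.ceil(n_notifiers / parallel_instances)
    return rounds * timeout_s


if __name__ == "__main__":
    n = 8
    print(worst_case_seconds(n, 10.0, 1))  # plain for loop: 80.0
    print(worst_case_seconds(n, 10.0, 2))  # two parallel instances: 40.0
    print(worst_case_seconds(n, 10.0, n))  # one instance per notifier: 10.0
```

The total only drops to a single timeout when the number of parallel instances reaches the number of notifiers.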

01-25-2018 04:44 AM

It would really help if you uploaded your VI. That way, we could see what is going on with anything else within your VI.

01-25-2018 12:08 PM

You are not even using the "timed out?" output? How do you tell if a timeout has occurred? This all seems clumsy.

Why can't you run them with a shorter timeout and keep track if any of them time out e.g. 10x in a row and if so trigger the timeout code?

Yes, please show us more of the code.

01-25-2018 02:14 PM - edited 01-25-2018 02:17 PM

Your level of parallelism depends on a number of factors. It would be worth reading this (on the dark side), which will help fill in some gaps. It looks like Wait on Notification is a blocking call, which means that while a thread is executing this node it is not available to execute another Wait node.

Since you say you are locked to a single core CPU, possible improvements to overall time are:

- Maintain the "watchdog timeout" independently of the Waits: run the Notifiers with a small timeout (say 10 ms) inside a loop and keep track of the total elapsed time, resetting it when a notification comes in. It's a bit more work, but it minimises the blocking behavior of the node, and when run on a multi-core machine the overall resolution of detecting a timeout improves.

- Customising the number of threads LabVIEW allocates per Execution System per priority. The link I gave has information about this. This should allow you to programmatically increase the number of Notifications you wait on. However, I don't know the "secret sauce" as to whether LabVIEW's parallel FOR loop will try to utilize all available threads in that Execution System / priority. You could benchmark it to determine the improvement.

- Splitting your notifications into groups (via more than one typedef control) and wrapping groups in VIs that run in different Execution Systems (any except User Interface) and priorities. This will give you some immediate gains (since the threads in other execution systems will probably be idle) but you will need to re-evaluate this balancing act every time you increase the number of refs in the arrays.
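The first option above (short waits plus a separately tracked elapsed time) can be sketched as follows. This is a Python analogy, not LabVIEW code: `queue.Queue` objects stand in for the notifiers, and the names `poll_watchdogs` and `demo` as well as the 0.2 s limit are assumptions chosen to keep the example fast.

```python
import queue
import threading
import time


def poll_watchdogs(channels, limit_s, poll_s=0.01, run_for_s=0.5):
    """Poll each channel briefly; return indexes silent for at least limit_s."""
    start = time.monotonic()
    last_seen = [start] * len(channels)
    expired = set()
    while time.monotonic() - start < run_for_s:
        for i, channel in enumerate(channels):
            try:
                channel.get(timeout=poll_s)      # short wait instead of one long block
                last_seen[i] = time.monotonic()  # notification arrived: reset the clock
            except queue.Empty:
                if time.monotonic() - last_seen[i] >= limit_s:
                    expired.add(i)               # the watchdog function would run here
    return expired


def demo():
    channels = [queue.Queue(), queue.Queue()]
    stop = threading.Event()

    def feeder():
        # Channel 0 is notified every 50 ms; channel 1 never is.
        while not stop.is_set():
            channels[0].put("alive")
            time.sleep(0.05)

    worker = threading.Thread(target=feeder, daemon=True)
    worker.start()
    try:
        return poll_watchdogs(channels, limit_s=0.2)
    finally:
        stop.set()
        worker.join()


if __name__ == "__main__":
    print(demo())  # only channel 1 is expected to expire
```

One loop services every channel, so adding a notifier only grows the array. The trade-off is that one polling pass takes up to the number of channels times `poll_s`, which bounds how precisely a timeout can be detected.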

01-25-2018 04:16 PM

@tyk007 off-topic but everybody at LAVA calls NI the dark side, first time I've seen the reverse.

01-25-2018 04:23 PM - edited 01-25-2018 04:25 PM

Haha I got into the habit of calling the other side from where I was posting the "dark side". Unfortunately our work proxy blocks LAVA otherwise I'd frequent it more often.

01-26-2018 02:59 AM

So I've been studying parallelism, and it seems all I needed to know is that if you wire the mysterious P terminal on the for loop, LabVIEW creates that many parallel instances regardless of the number of cores, hopefully splitting them between the cores in the most efficient way. I've made a snippet below with Wait blocks. It makes no sense on its own, but it works the same as with Wait on Notification. The solution on the right is the equivalent of the one on the left.

01-26-2018 03:05 AM

The "timed out?" terminal is not connected, but the notification terminal carries the timeout information itself, since it returns the default value only when a timeout occurs.

I am not sure I understand your idea, but I already found the solution; you can see it in this thread. Turning parallelism on and wiring both the P and N terminals with the number of iterations makes it fully parallel.
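As a rough analogy for why this works even on one core, here is a sketch using Python worker threads in place of LabVIEW's parallel loop instances (so it illustrates the principle, not LabVIEW internals). `blocking_wait` and `run_waits` are hypothetical names, and the waits are shortened to 0.1 s. A blocked wait uses almost no CPU, so waits running on separate instances overlap in time.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def blocking_wait(timeout_s):
    """Stand-in for a wait that never receives its notification."""
    time.sleep(timeout_s)
    return True  # True means "timed out"


def run_waits(n, timeout_s, instances):
    """Run n blocking waits on `instances` worker threads; return elapsed seconds."""
    start = time.monotonic()
    with ThreadPoolExecutor(max_workers=instances) as pool:
        timed_out = list(pool.map(blocking_wait, [timeout_s] * n))
    assert all(timed_out)
    return time.monotonic() - start


if __name__ == "__main__":
    n, timeout_s = 6, 0.1
    print(f"1 instance:  {run_waits(n, timeout_s, 1):.2f} s")   # roughly n * timeout
    print(f"{n} instances: {run_waits(n, timeout_s, n):.2f} s")  # roughly one timeout
```

With one instance per wait the total is close to a single timeout, which matches the behavior described above for P equal to N.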

01-26-2018 03:08 AM

As always, it's good to know how and why things work as they do. A parallel loop is automatically set to the number of cores (threads?) since that is safe and should yield benefits. Depending on what's happening inside, that can of course vary greatly. With a simple Wait, a single core can handle lots of 3-second waits with decent precision. If you'd done some 2048-point FFT, which is already parallel, you'd simply lose performance due to overhead.

But yes, wiring P allows you to control the number of threads which can be great if performing slow commands, like opening 8 serial ports or something.

/Y

01-26-2018 01:48 PM

Yes, you can define the number of parallel instances in a parallel FOR loop, but I wasn't sure how far you want to take this. It only scales within a limited range. The number of parallel instances is limited (the default is 64, which can be changed up to a hard maximum of 256).

I am pretty sure there is a better, infinitely scalable solution. 😄