FPGA - Data transfer using DMA FIFO - coding / decoding

07-08-2015 03:46 AM

Hi everybody

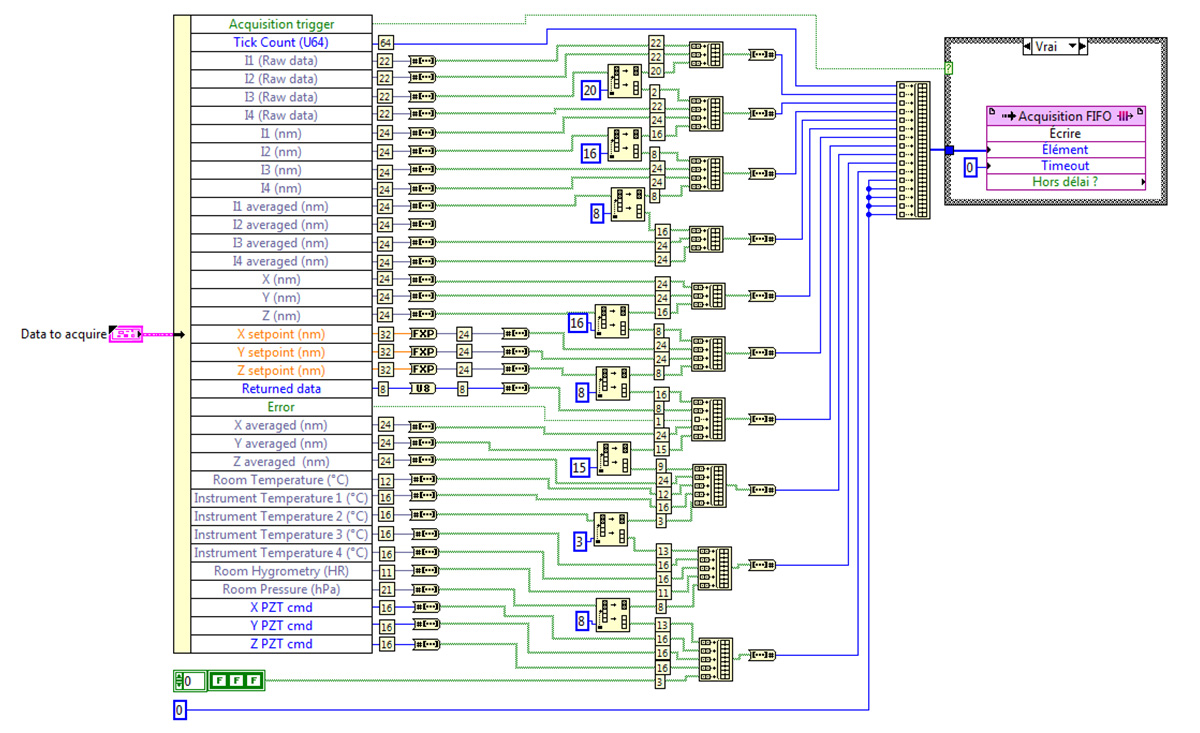

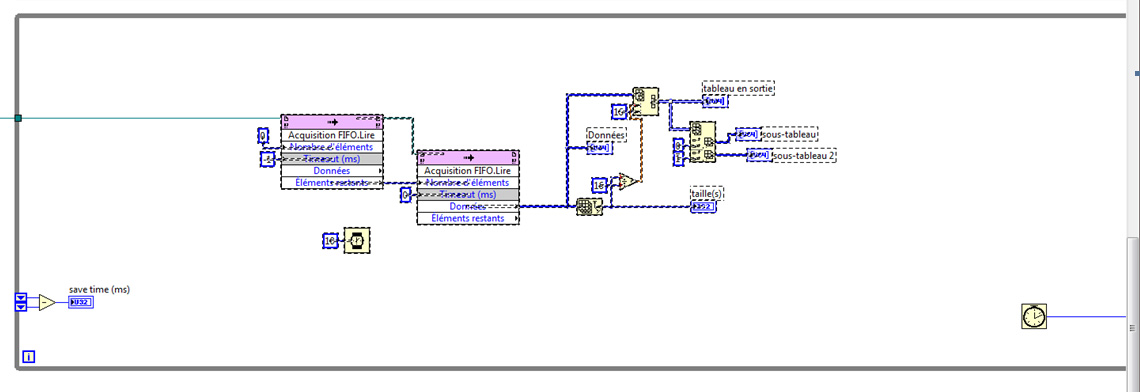

For my current application, I need to transfer a large amount of data from my FPGA (PXIe-7975R). The data is grouped in a cluster (about 1000 bits) and produced at a rate of 1-2 MHz. For that purpose, I convert my cluster into U64 words that I send in blocks of 16 words into my DMA FIFO. On my RT target, a loop reads the data back (n blocks of 16 U64 words). For the moment I have no problem with the data transfer rate. My problem concerns recovering the data on the RT target. I would like to process (decode) the data quickly, using arrays for example, to extract some of the data and display it. Unfortunately, the only solution I have found is to decode each block of 16 U64 one by one, and it takes a long time. What solution would you propose to code the data on the FPGA and decode it on the RT target?

Thanks in advance

Sebastien

07-08-2015 04:39 AM

Not much help, but I read this; maybe it could inspire you:

https://forums.ni.com/t5/LabVIEW/Most-efficient-data-transfer-between-RT-and-FPGA/td-p/2728189

07-08-2015 08:42 AM

Thanks llebras for the information.

After reading several posts, what I want to do is flatten the cluster, pass it through the DMA FIFO, and then unflatten it on the RT target. Is there an (easy) solution to do this?

The function on a PC or RT target is Flatten To String. We could also use a variant, but this is not available on FPGA!

07-08-2015 09:42 AM

Your implementation for the FPGA is (unfortunately) the most common and recommended approach to flatten data on the FPGA if you need to pack the bits as tightly as possible. Note that on the FPGA all those bit and packing operations are pretty much free since they just turn into wires (routing logic) on the FPGA.

You'll need to do the inverse set of operations on the RT side to extract the information. All the primitives you used on the FPGA are available on RT, so you just need to convert from bools to numbers where you converted from numbers to bools, for instance.
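
To make the "inverse set of operations" concrete, here is a minimal sketch in Python, used only as a textual stand-in for the LabVIEW block diagram. The field names and widths in `LAYOUT` are hypothetical (the thread does not give the real cluster), and putting the first field in the lowest bits is an assumption too. `pack_block` plays the role of the FPGA side and `unpack_block` the RT side.

```python
# Hypothetical cluster layout: (field name, width in bits). Not from the thread.
LAYOUT = [
    ("counter", 20),
    ("status", 3),
    ("adc_a", 14),
    ("adc_b", 14),
    ("flag", 1),
    ("timestamp", 40),  # deliberately straddles the first 64-bit word boundary
]
WORD_BITS = 64
WORDS_PER_BLOCK = 16  # 16 x U64 = 1024 bits per block, as in the thread


def pack_block(values):
    """Pack the fields tightly (first field in the lowest bits) into 16 U64 words."""
    acc = 0
    offset = 0
    for name, width in LAYOUT:
        value = values[name]
        if not 0 <= value < (1 << width):
            raise ValueError(f"{name} does not fit in {width} bits")
        acc |= value << offset
        offset += width
    if offset > WORD_BITS * WORDS_PER_BLOCK:
        raise ValueError("layout exceeds one block")
    mask = (1 << WORD_BITS) - 1
    return [(acc >> (WORD_BITS * i)) & mask for i in range(WORDS_PER_BLOCK)]


def unpack_block(words):
    """Inverse of pack_block: rebuild the bit string, then mask out each field."""
    acc = 0
    for i, word in enumerate(words):
        acc |= word << (WORD_BITS * i)
    values = {}
    offset = 0
    for name, width in LAYOUT:
        values[name] = (acc >> offset) & ((1 << width) - 1)
        offset += width
    return values
```

The decoder walks the same layout table in the same order as the encoder, so keeping one shared description of the layout is what keeps both sides in step.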

This bit twiddling is more expensive on RT. If you have extra bandwidth left with the DMA stream you can probably find a balance between packing elements more loosely on the FPGA side to require fewer bit shifts on the RT side. For instance, you could coerce all the numerics less than 32 bits to 32 bits so you can simply coerce the value back to the lower set of bits on RT rather than having to do any bool array operations.
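
A rough sketch of that looser packing, again in Python as a stand-in for the LabVIEW primitives: every field is widened to a 32-bit slot and two slots share one U64, so the RT side needs only a shift and a mask per field and no field ever straddles a word. The helper names and the low/high slot order are assumptions for illustration, and only unsigned fields are handled.

```python
MASK32 = 0xFFFFFFFF


def pack_pair(low_value, high_value):
    """Place two values, each widened to a 32-bit slot, in one U64 word."""
    return ((high_value & MASK32) << 32) | (low_value & MASK32)


def unpack_pair(word):
    """Recover both 32-bit slots with one mask and one shift."""
    return word & MASK32, (word >> 32) & MASK32


def narrow(slot, width):
    """Coerce a 32-bit slot back to the field's original unsigned width."""
    return slot & ((1 << width) - 1)
```

The cost is bandwidth: a 14-bit value now occupies 32 bits in the stream, so this only pays off while the DMA channel has headroom.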

There are some other tricks like reverse engineering the flatten to string function used by RT and constructing that string in the FPGA as a byte array before sending it to RT. Then on RT you cast the byte array to string and just unflatten. However, that is actually slower in some cases and might need to be tweaked after upgrading LabVIEW to account for versioning.
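
For illustration only, the byte-array idea can be sketched with Python's `struct` module. The cluster contents are hypothetical, and the layout assumed here (big-endian numerics, elements concatenated in cluster order with no padding) is my understanding of the flattened form for a cluster of fixed-size numerics; verify it against your LabVIEW version before relying on it.

```python
import struct

# Hypothetical cluster: U32 counter, U16 adc, I16 temperature, U8 status.
CLUSTER_FORMAT = ">IHhB"  # ">" selects big-endian with no padding between elements


def flatten(counter, adc, temperature, status):
    """Build the byte sequence the sender would emit for one cluster."""
    return struct.pack(CLUSTER_FORMAT, counter, adc, temperature, status)


def unflatten(raw):
    """Recover the cluster elements from the byte sequence on the receiver."""
    return struct.unpack(CLUSTER_FORMAT, raw)
```

With this layout the example cluster occupies 9 bytes, so it would still need padding up to a whole number of U64 words before going into the DMA FIFO.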

07-08-2015 12:16 PM

Thank you Dragis

This is exactly what we have done. We try to size the data fields close to 16, 32 or 64 bits so that we can use data manipulation functions on the RT PC that work well with arrays. Those functions allow us to directly manipulate the data stream coming from the DMA FIFO to extract the different signals without element-by-element operations. So it is relatively fast.

Considering what we have done to send the data, I wonder why custom controls cannot be used for a DMA FIFO. If the cluster size respects the maximum number of bits you can send in the DMA FIFO with one block of sixteen U64 (1024 bits), shouldn't we be able to flatten/unflatten the cluster? Will it be available in a future version of LabVIEW FPGA?
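
As a sketch of that array-wise extraction (Python standing in for the LabVIEW array functions, with hypothetical helper names): a flat read of n blocks is regrouped by word position with a stride of 16, and a signal is then pulled out of a whole column with a single shift and mask setting, instead of decoding block by block.

```python
WORDS_PER_BLOCK = 16  # one block = 16 x U64, as in the thread


def split_columns(flat_words):
    """Regroup a flat read of n blocks into 16 lists, one per word position."""
    if len(flat_words) % WORDS_PER_BLOCK:
        raise ValueError("read length must be a multiple of 16 words")
    return [flat_words[k::WORDS_PER_BLOCK] for k in range(WORDS_PER_BLOCK)]


def extract_signal(column, shift, width):
    """Extract the same bit field from every word of one column."""
    mask = (1 << width) - 1
    return [(word >> shift) & mask for word in column]
```

For example, `extract_signal(split_columns(data)[3], 32, 32)` would return the upper 32-bit slot of word 3 for every block in the read. In plain Python the comprehension still visits each element; the point is the shape of the operation, which maps onto whole-array primitives.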

07-08-2015 01:16 PM

There's no technical limitation, just the time and resources to implement it. There is an Idea Exchange suggestion specifically asking for this feature: http://forums.ni.com/t5/LabVIEW-FPGA-Idea-Exchange/Allow-Cluster-and-Array-Transfer-Types-for-DMA-St... . Please vote on it and ask all your friends to as well : )