Calculate the time difference between two time stamps?

09-08-2015 02:08 PM

Although extended precision numbers occupy 128 bits, only 80 bits are used in calculations.

09-08-2015 02:41 PM

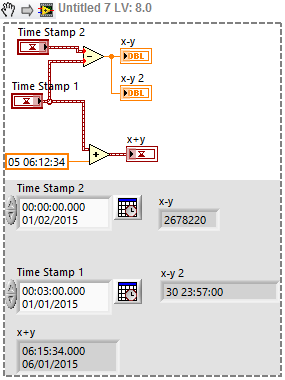

Try this

09-08-2015 04:49 PM - edited 09-08-2015 04:53 PM

The lower limit of 1600 comes from Windows, which does not allow SYSTEMTIME values before 1601; FILETIME is likewise a 64-bit unsigned integer counting 100 ns intervals from that same starting point. NI could of course go to the trouble of implementing their own date/time library that allows dates back to year 0001, or maybe even 15 billion years BC just to be safe for the big bang physicists, but date/time handling is a complex problem in itself, one into which bigger fish like Microsoft have put many man-years while still supporting only a limited range.
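As a rough illustration of the FILETIME layout described above, the following Python sketch converts a raw tick count (100 ns units since 1601-01-01 UTC) into a calendar date. The name `filetime_to_datetime` is made up for this example; it is not part of any Windows or LabVIEW API.

```python
from datetime import datetime, timedelta, timezone

# FILETIME counts 100 ns ticks from this instant (UTC).
FILETIME_EPOCH = datetime(1601, 1, 1, tzinfo=timezone.utc)
TICKS_PER_MICROSECOND = 10  # ten 100 ns ticks make one microsecond


def filetime_to_datetime(ticks: int) -> datetime:
    """Convert a non-negative FILETIME tick count to an aware UTC datetime.

    Hypothetical helper for illustration. Sub-microsecond ticks are dropped
    because Python's datetime only resolves microseconds.
    """
    if ticks < 0:
        raise ValueError("FILETIME values cannot be negative")
    return FILETIME_EPOCH + timedelta(microseconds=ticks // TICKS_PER_MICROSECOND)


# The Unix epoch lies 11,644,473,600 seconds after 1601-01-01.
unix_epoch_ticks = 11_644_473_600 * 10_000_000
print(filetime_to_datetime(0).isoformat())
print(filetime_to_datetime(unix_epoch_ticks).isoformat())
```

Anything before tick 0 simply has no representation in this scheme, which is where the 1601 lower bound comes from.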

Incidentally, the 1601 in Windows is not exactly an arbitrary date to choose as the epoch for a time system, as the Gregorian calendar that is still in use today was introduced only a few years earlier (1582). Before that the Julian calendar was used, which had a leap year every 4 years and had therefore drifted out of sync by 10 days. Most likely 1601 was chosen instead of 1600 to avoid having the 400-year exception in the leap year calculation right at the beginning of the epoch. Why there seems to be an upper limit of 3000 I'm not sure. Windows allows years up to 30827, but there might be other platforms with lower limits, and LabVIEW traditionally tries to stay within the limits of any system it runs on.
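The leap year rules mentioned above can be sketched in a few lines of Python. This is only an illustration of the two calendar rules, with made-up function names, and it relies on Python's `date` type using the proleptic Gregorian calendar.

```python
from datetime import date


def is_leap_julian(year: int) -> bool:
    """Julian rule: every fourth year is a leap year."""
    return year % 4 == 0


def is_leap_gregorian(year: int) -> bool:
    """Gregorian rule: century years are leap years only if divisible by 400."""
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


# Century years are where the two calendars disagree.
for year in (1600, 1700, 1800, 1900, 2000):
    print(year, is_leap_julian(year), is_leap_gregorian(year))

# Counting from 1601, the 400-year exception falls on the last year
# of each 400-year block (2000, 2400, ...) instead of the first.
leap_days_first_cycle = sum(is_leap_gregorian(y) for y in range(1601, 2001))
days_first_cycle = (date(2001, 1, 1) - date(1601, 1, 1)).days
print(leap_days_first_cycle, days_first_cycle)
```

With an epoch of 1601 every full 400-year block has the same length, so a day count can be split into whole blocks before the exceptional year has to be handled.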

Anything beyond 3000, however, is most likely quite academic anyhow, since there is not a big chance that we will still be using the Gregorian calendar in 1000 years.

And one extra tidbit: originally the LabVIEW timestamp was not even a double but an unsigned 32-bit integer, as in the Mac OS Classic from which LabVIEW originates. The first Windows version also used that format, but it was later changed to a double-precision floating-point number, which allowed for fractional seconds and potentially for dates beyond 2040. The timestamp as it is now was introduced around LabVIEW 7, mainly to have a specific datatype for absolute time information. There is always a lot to say about any particular choice in such an implementation. As far as I know, the lower 32 bits of the fractional part are not even used at all. This still allows for a theoretical resolution of 1/2^32 seconds, which is about 2.3 × 10^-10 s, or roughly a quarter of a nanosecond. But if you get the timestamps from the computer, you will be hard pressed to get a resolution better than milliseconds. Most OSes don't really update the internal clock faster than that.
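To make the resolution figure concrete, here is a minimal Python sketch of a fixed-point time value. It assumes a layout of whole seconds plus a 64-bit fraction field of which only the upper 32 bits are populated; that layout and the helper names are assumptions for illustration, not a description of LabVIEW's internals.

```python
from fractions import Fraction
from typing import Tuple

FRACTION_BITS = 64        # assumed width of the fractional field
USED_FRACTION_BITS = 32   # per the post, only the upper half seems to be used


def to_fixed(seconds: Fraction) -> Tuple[int, int]:
    """Split exact seconds into (whole seconds, 64-bit fraction field)."""
    whole = seconds.numerator // seconds.denominator  # floor, also for negatives
    fraction = seconds - whole                        # always in [0, 1)
    field = int(fraction * 2**FRACTION_BITS)
    # Clear the lower 32 bits to mimic the unused part of the field.
    field &= ~((1 << (FRACTION_BITS - USED_FRACTION_BITS)) - 1)
    return whole, field


def from_fixed(whole: int, field: int) -> Fraction:
    """Rebuild the exact value in seconds from the two fields."""
    return whole + Fraction(field, 2**FRACTION_BITS)


# Smallest step that survives when only 32 fraction bits are kept.
resolution = Fraction(1, 2**USED_FRACTION_BITS)
print(float(resolution))

whole, field = to_fixed(Fraction(3, 2) + resolution)
print(whole, hex(field))
print(from_fixed(whole, field) == Fraction(3, 2) + resolution)
```

Unlike a double, the step size of such a fixed-point value stays the same no matter how far the time is from the epoch.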

LabVIEW treats absolute time as a very special datatype with some very specific arithmetic attributes. While such things can be discussed, and there will always be people disagreeing with whatever decision is made, I do find the current arithmetic rules for timestamps pretty sensible. Adding up absolute times, for instance, is at best a questionable exercise, and more likely would have to produce umpteen different results depending on who you ask and which particular timestamp values you try. To avoid such ambiguous operations, LabVIEW simply defines the addition of two absolute times as an invalid operation. Similarly, there is no common-sense result for multiplying or dividing a timestamp.
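Other environments draw the same line between absolute and relative time. As an analogy only (this is Python's standard `datetime` module, not LabVIEW), the sketch below shows which combinations are accepted and which are rejected; the two instants are arbitrary example values.

```python
from datetime import datetime, timedelta

start = datetime(2015, 9, 8, 14, 8)   # arbitrary example instants
stop = datetime(2015, 9, 9, 8, 34)

elapsed = stop - start                # absolute - absolute gives a relative time
later = stop + timedelta(hours=6)     # absolute + relative gives an absolute time
print(elapsed, later)

try:
    start + stop                      # absolute + absolute is rejected
except TypeError as error:
    print("not allowed:", error)

try:
    start * 2                         # scaling an absolute time is rejected too
except TypeError as error:
    print("not allowed:", error)
```

The difference of two instants is a well-defined duration, while their sum would depend entirely on the choice of epoch.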

If you want to do normal mathematics with time, you need to use a double-precision number as a relative time from a self-defined starting point, not something based on an arbitrary and inconsistent system such as the Gregorian calendar, on which the LabVIEW timestamp is based.

09-09-2015 05:13 AM

Hi all,

this is my last post about this subject, I feel a bit like an alien...

It seems to me that the timestamp object is sold as an object with a much broader scope than a date object. I like it because in principle it can be used to represent both very large and very small time stamps. As you put it, the timestamp type is a toy: when things get serious about timekeeping, one is better off using doubles and doing the job oneself... I can't believe that this was the original intent of the engineers who designed a 128-bit timestamp.

Let me point out again that the objections about the absurdities of summing and subtracting absolute times are totally irrelevant. None of the objections made relate to how the time is encoded (in the 128-bit timestamp or in the double) or manipulated algebraically (I never mentioned multiplication or division, though), only to how it is visualized in the indicator or control. That code, the code transforming a double or a timestamp into a string that human readers interpret as year, month, day, hour, etc. (the part related to the Gregorian calendar), is pretty much the same and already there. Guys, you can already format any double, including the difference of two doubles encoding absolute times, as absolute time! Do you really need a type just to prevent users from doing that?

For engineers, time is a real number. Real numbers (or rather, a subset of them) can be represented as floating-point doubles or as 128-bit fixed-point fractions. Apart from the low-level implementation details they work the same, because they represent the same quantity. When you subtract or add, it is just a subtraction or addition of two real numbers encoded as floating-point doubles or 128-bit integers, nothing exotic.

If you say, OK we are not going to change anything because you are the only one who ever noticed this and in 99% of the applications you are better off with doubles, well, I can understand and in part agree, but there are no other reasons.

You have not convinced me. The timestamp type should be extended so that it can be added and subtracted and formatted as both absolute and relative time, because this would extend, not shrink, the applicability of the type, because it is very easy to do (nearly everything seems to be done already), and because in future users might less often get answers like "you are better off with doubles, doing things yourself instead of using library functions".

Sincerely,

09-09-2015 08:34 AM

I still don't understand your problem and I'm not sure why you think the timestamp type is a toy. As far as I can tell, everything is working correctly and your only issue is that a timestamp can't be considered as relative? What's the big deal with that? Just take a numeric and format it as relative time and you're done:

You can see that the difference is almost 31 days, and unlike absolute time, this actually makes sense. You can add 5 days and 6 hours and it makes sense.
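Outside LabVIEW, the same idea (a plain number of seconds shown as relative time) might look like the Python sketch below. The `format_relative` helper, its output format, and the two time values are made up for illustration and are not taken from the original example.

```python
def format_relative(seconds: float) -> str:
    """Render a duration in seconds as 'D d HH:MM:SS' (hypothetical format)."""
    sign = "-" if seconds < 0 else ""
    total = int(round(abs(seconds)))
    days, rest = divmod(total, 86_400)
    hours, rest = divmod(rest, 3_600)
    minutes, secs = divmod(rest, 60)
    return f"{sign}{days} d {hours:02d}:{minutes:02d}:{secs:02d}"


# Two made-up instants, stored as seconds from a self-chosen start point.
t_start = 0.0
t_stop = 30 * 86_400 + 23 * 3_600 + 15 * 60.0   # almost 31 days later

difference = t_stop - t_start
print(format_relative(difference))

# Adding 5 days and 6 hours to a relative time is ordinary arithmetic.
print(format_relative(difference + 5 * 86_400 + 6 * 3_600))
```

Because the value is just a duration, no calendar rules are involved in either the arithmetic or the formatting.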

I would argue that if anything, the fact that numerics can be formatted as absolute time is a leak which shouldn't be there. Most likely it's there because it was needed for backward compatibility. In the same way, I don't think there's a need for the timestamp to show relative time. The fact that time is "a number" should be considered an implementation detail, not something to play with.

___________________

Try to take over the world!
