Linear mapping method

02-27-2023 09:46 AM

Hello,

We record data through a data acquisition unit that does not always produce perfect time channels (monotonically increasing, with a constant sampling period).

However, for ease of use, we want to work with waveform channels.

To create waveforms, we re-create a perfect implicit time channel at the theoretical (expected) sampling frequency. So far so good.
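As an illustration of that step, here is a minimal Python sketch of building an ideal time axis from a start time, a nominal sampling frequency, and a sample count. It is not DIAdem code; the function name `ideal_time_axis` and the sample values are hypothetical.

```python
def ideal_time_axis(start, fs, count):
    """Return `count` evenly spaced time stamps starting at `start`, at rate `fs` (Hz)."""
    # Compute each stamp from its index rather than by repeated addition,
    # so rounding errors do not accumulate over a long recording.
    return [start + i / fs for i in range(count)]


# Hypothetical jittered time stamps, nominally sampled at 2000 Hz.
measured = [0.0, 0.00051, 0.00099, 0.00152, 0.00200]

# Same start time and same number of samples as the measured channel.
master = ideal_time_axis(measured[0], 2000.0, len(measured))
```

Keeping the sample count equal to that of the measured channel is one possible choice; a shared master time for several signals would instead fix the start time and length once for the whole group.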

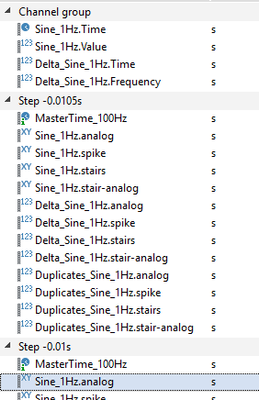

We then linearly map the original time and value channels onto the new, perfect implicit time channel using the "Stairs" method.

Here is the linear mapping command used (DIAdem 2018):

Call ChnMapLinCalc("Signals/Time_" & oChn.Name,oChn,"Master Time Channels/MasterTime_"& Round(LinearMap_fs,2) &"Hz",oChn,1,"const. value",NOVALUE,"Stairs",0)

However, the linear mapping does not behave the way we had hoped.

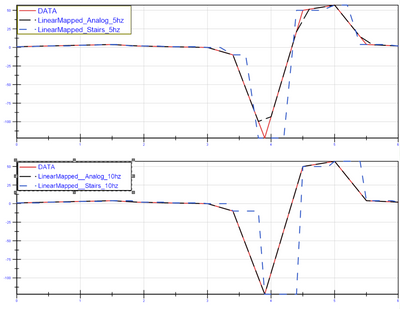

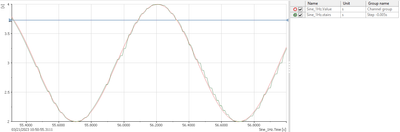

We would like the last valid value to always be used, not the closest value. See the example in the attachment:

- in red the original time/value signal,

- in green the result from the "Stairs" linear mapping method,

- in blue the desired behavior (drawn for 3 obvious samples only).
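To make the difference between the two behaviors concrete, here is a small Python sketch (not DIAdem code) that contrasts a "last valid value" mapping with a "closest value" mapping. The function names and the data are hypothetical, and the closest-value function only mimics the behavior described above; it is not claimed to be what DIAdem does internally.

```python
from bisect import bisect_right


def map_previous(src_t, src_v, new_t, novalue=None):
    """For each new time stamp, take the last source value at or before it."""
    out = []
    for t in new_t:
        i = bisect_right(src_t, t) - 1  # last source index with src_t[i] <= t
        out.append(src_v[i] if i >= 0 else novalue)
    return out


def map_nearest(src_t, src_v, new_t):
    """For each new time stamp, take the source value closest in time."""
    out = []
    for t in new_t:
        i = bisect_right(src_t, t)
        # Only the neighbours on either side of t can be the closest sample.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(src_t)]
        j = min(candidates, key=lambda k: abs(src_t[k] - t))
        out.append(src_v[j])
    return out


src_t = [0.0, 0.9, 2.1, 3.0]  # imperfect source time stamps (must be sorted)
src_v = [10, 20, 30, 40]
new_t = [0.0, 1.0, 2.0, 3.0]  # ideal time axis

previous = map_previous(src_t, src_v, new_t)  # [10, 20, 20, 40]
nearest = map_nearest(src_t, src_v, new_t)    # [10, 20, 30, 40]
```

At `t = 2.0` the closest source sample (time 2.1) lies in the future, so the closest-value mapping returns 30 while the last-valid-value mapping holds 20. The price of holding is that 20 appears twice and 30 is never picked up in this example.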

Is there a function better suited than linear mapping to achieve this behavior?

We tried the Resampling function, using an interpolation channel, but that yields the same result.

Thank you,

Jacques

02-27-2023 02:19 PM - edited 02-27-2023 02:21 PM

Hi,

- I created fake data with imperfect time stamps that are supposed to be around 2Hz

- I generated perfect linear channels with 5Hz and 10Hz timestamps (within the same range as the original time channel)

- Linear mapped both using stairs and analog methods

- From there you can convert the lineargenerated_10hz and linearmapped_10hz_analog to a waveform.

The best one happened to be the 10Hz analog method: it follows the original data perfectly without shifting the time.

Attached are the TDMS file and a comparison plot.

Issue: this expands the data.

02-28-2023 01:25 AM - edited 02-28-2023 01:27 AM

Thanks for the reply.

The aim is not to up-sample the time channel. We want to keep the original time channel sampling frequency, which, in the case of the example, is 2000Hz. While it's very close to perfect 2000Hz, some periods are slightly too short, or slightly too long.

That's why I create a perfect 2000Hz implicit time channel, as close as possible to the original time channel. That implicit time channel will be used to convert the numeric channel to a waveform.

Also, we want to stay as close to the original dataset as possible. That is, the vector of the original numeric channel and the vector of the linear mapped numeric channel should be as close as possible, ideally identical.

For that reason, we decided to use a Stairs method: we don't want to alter the original numeric channel with an Analog method.

Finally, we don't want any sample of the linear mapped channel to "predict the future". This is what I try to highlight in the attachment.
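The "no prediction of the future" requirement can be written as a simple check: every output sample must come from a source sample whose time stamp is not later than the output time stamp. Below is a minimal Python sketch of that check (not DIAdem code); the helper names and the jittered time stamps are hypothetical.

```python
from bisect import bisect_right


def previous_index(src_t, t):
    """Index of the last source sample at or before time t, or -1 if none."""
    return bisect_right(src_t, t) - 1


def is_causal(src_t, new_t, used_idx):
    """True if no output sample uses a source sample time-stamped after it."""
    return all(i < 0 or src_t[i] <= t for t, i in zip(new_t, used_idx))


# Hypothetical source stamps, nominally 2000 Hz with small jitter.
src_t = [0.0, 0.00052, 0.00098, 0.00151]
new_t = [0.0, 0.0005, 0.0010, 0.0015]

# Source index chosen for each output sample by the two strategies.
held = [previous_index(src_t, t) for t in new_t]
closest = [min(range(len(src_t)), key=lambda k: abs(src_t[k] - t)) for t in new_t]

held_ok = is_causal(src_t, new_t, held)        # True
closest_ok = is_causal(src_t, new_t, closest)  # False
```

With these stamps the closest-value choice reproduces the source vector one to one (indices 0, 1, 2, 3) but takes samples 1 and 3 slightly before they were measured, while the held choice is causal but uses indices 0, 0, 2, 2. The two goals stated above, an identical vector and no look-ahead, can therefore conflict whenever a source sample arrives slightly late.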

Thanks,

Jacques

02-28-2023 09:12 AM - edited 02-28-2023 09:14 AM

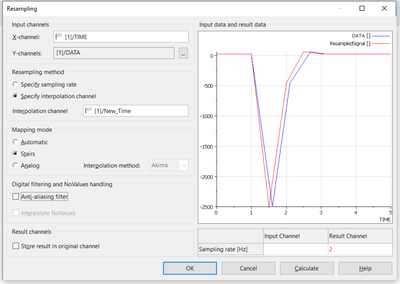

Does this work for you? Results look good on my end:

The "New Time" channel is created by "Generate Numeric Channel"

03-01-2023 05:55 AM

Hi DJacques,

Maybe the question is a bit too simple, nevertheless I would like to ask it.

If the time channel is affected only by small variations and deviates only marginally from the ideal time channel and the number of measured values is not to be changed, why is the time channel not simply replaced by the ideal time channel?

Greetings

Walter

04-03-2023 09:31 AM

Hello,

Thanks for the suggestion.

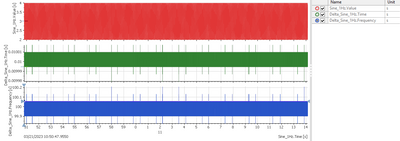

The main reason we want artificial time channels is to have one common MasterTime for all signals that share the same sampling frequency: same MasterTime, same sampling frequency, same start time. This makes calculations across signals easier.

Our data acquisition system records signals from many sources: analog sensors, but also system information transmitted on ARINC 429 buses or Ethernet RS232 buses, which we splice to record.

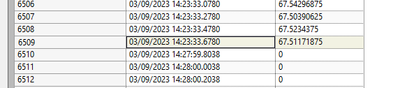

These systems may switch OFF during the recording (though this is not the nominal behavior), in which case there is simply no time or value information while they are off. Example below of "raw" data of a system that switched off for 4 min 30 s:

We still want time samples while the system is off, with associated value samples set to NOVALUE.
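One way to express this requirement is a last-valid-value mapping that stops holding once the last source sample is too old. The Python sketch below is an illustration only, not DIAdem code: `NOVALUE` is represented by `None`, and the `max_hold` threshold is a hypothetical parameter that would have to be chosen per signal.

```python
from bisect import bisect_right

NOVALUE = None  # stand-in for DIAdem's NOVALUE in this sketch


def map_previous_with_gaps(src_t, src_v, new_t, max_hold):
    """Hold the last source value, but emit NOVALUE once it is older than max_hold."""
    out = []
    for t in new_t:
        i = bisect_right(src_t, t) - 1  # last source index with src_t[i] <= t
        if i < 0 or t - src_t[i] > max_hold:
            out.append(NOVALUE)  # no source sample yet, or the source is considered off
        else:
            out.append(src_v[i])
    return out


# Hypothetical 1 Hz source that was off between t = 2 s and t = 6 s.
src_t = [0.0, 1.0, 2.0, 6.0, 7.0]
src_v = [1, 2, 3, 4, 5]
new_t = [float(k) for k in range(8)]  # master time covering the whole recording

mapped = map_previous_with_gaps(src_t, src_v, new_t, max_hold=1.5)
# mapped == [1, 2, 3, 3, None, None, 4, 5]
```

Here the value 3 is still held at `t = 3` because it is only 1 s old; a `max_hold` below one sampling period would start the gap one sample earlier.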

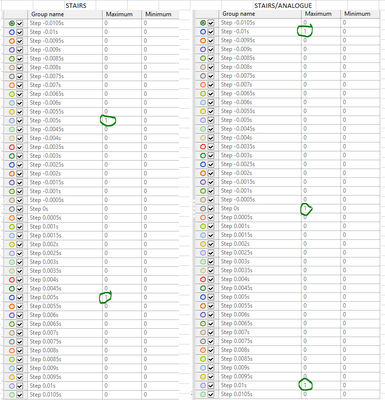

I have an additional question regarding the linear mapping method: what is the difference between the "Stairs" method and the "Stairs/Analogue" method?

I created a small experiment.

Attached is a dataset, with a typical time channel (from an ethernet RS232 bus), and a fake 1Hz sinewave value channel on top of it.

With the attached VBS script (tested on DIAdem 2020), I create artificial implicit MasterTime channels, with different start times shifted in steps of 0.0005 sec (i.e. 5% of the sampling period).

The script generates one group per time shift.

I then linear map the original signal to the new shifted MasterTimes, with 4 different linear mapping methods (although I'm only interested in the "Stairs" or the "Stairs/analogue" methods).

For each linear mapped signal, I highlight the duplicate values.

For me, these duplicate values are indicative of the "performance" of the linear mapping method. We want to avoid them as much as possible. They may look benign, but they can introduce artifacts in the frequency domain.
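For readers without the attached script, the duplicate check can be sketched in a few lines of Python (not DIAdem code). The function name and the sample values are hypothetical. The sketch assumes that exact equality is a fair test because a stairs-type mapping copies source values unchanged; a signal that is genuinely flat over two samples would be flagged as well.

```python
def duplicate_positions(values):
    """Indices where a sample is exactly equal to the sample just before it."""
    return [i for i in range(1, len(values)) if values[i] == values[i - 1]]


# Hypothetical mapped sine samples in which two values were repeated.
mapped = [0.0, 0.31, 0.31, 0.59, 0.81, 0.81, 0.95]

dups = duplicate_positions(mapped)  # [2, 5]
duplicate_ratio = len(dups) / len(mapped)
```

Comparing `duplicate_ratio` across time shifts gives a single number per mapping method, which is easier to compare than highlighted samples in a plot.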

If you are interested and have time, feel free to run the script and try it out.

But to summarize:

With the "Stairs" method, we get duplicates for time shifts of 0.005 sec and -0.005 sec. All others are OK.

With the "Stairs/Analogue" method, we get duplicates for time shifts of 0 sec (why?), -0.01 sec and +0.01 sec. All others are OK.

Without the duplicates at 0sec time shift, I would have used the "Stairs/analogue" method.

So, what is the difference between "Stairs" and "Stairs/Analogue"? Maybe understanding the difference will help me interpret the results of my little experiment.

Sorry for the long and boring context to my question.

Thanks in advance!

Jacques

04-04-2023 03:51 PM

The explanation comes directly from the DIAdem Help file, but it was a little tricky to find.

I also attached the image.