
myBalancePlate: Machine Vision Based Control System


Project Aim

To demonstrate one of the main principles of control theory, the inverted pendulum problem, using NI myRIO and machine vision.

Background

The inverted pendulum is one of the simplest demonstrations of control theory. A classic example of this kind of control system is balancing a ball on a plate. By controlling the angle of the servo motors in the system below, the ball can be balanced in the centre of the plate.

To control the servo positions, we must first acquire the position of the ball. In this system, the NI-IMAQ machine vision library is used to acquire the ball position from a webcam.
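
The post does not show the tracking algorithm itself. As a rough illustration of the idea only, the sketch below thresholds a grayscale frame and takes the centroid of the dark pixels, assuming a dark ball on a lighter plate. It is plain Python operating on a list of pixel rows, not the NI-IMAQ API; the function names and the threshold of 60 are hypothetical.

```python
from typing import List, Optional, Tuple

Frame = List[List[int]]  # rows of 8-bit grayscale values (0 = black, 255 = white)


def find_ball(frame: Frame, threshold: int = 60) -> Optional[Tuple[float, float]]:
    """Return the (x, y) centroid of all pixels darker than `threshold`.

    Returns None when no pixel is dark enough, i.e. the ball is not in view.
    """
    sum_x = sum_y = count = 0
    for y, row in enumerate(frame):
        for x, value in enumerate(row):
            if value < threshold:  # dark pixel: assumed to belong to the ball
                sum_x += x
                sum_y += y
                count += 1
    if count == 0:
        return None
    return (sum_x / count, sum_y / count)


def offset_from_centre(pos: Tuple[float, float], width: int, height: int) -> Tuple[float, float]:
    """Pixel offset of the ball from the image centre (positive = right / down)."""
    x, y = pos
    return (x - (width - 1) / 2, y - (height - 1) / 2)
```

The offset from the image centre is the kind of error signal a PID loop could then act on, one axis at a time.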

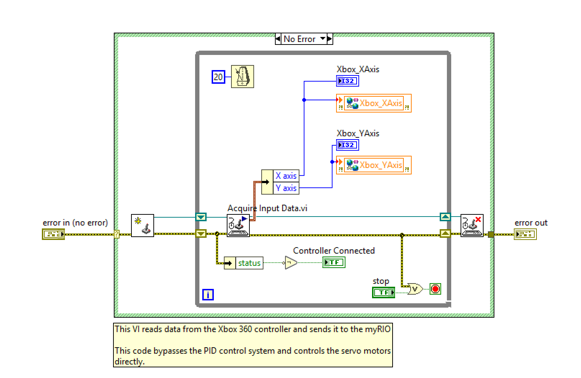

For debugging purposes, an Xbox controller interface was used to control the servos without the need for the webcam.

Systems Overview

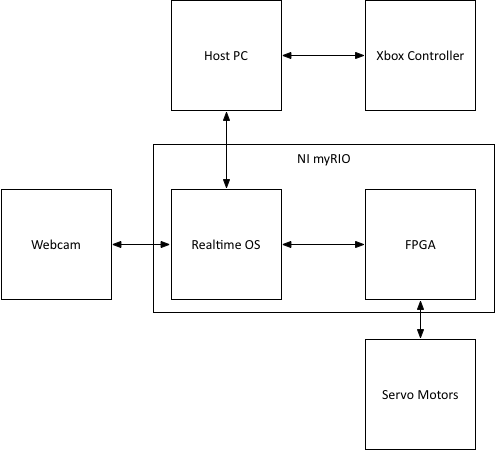

There are three main systems within this project:

- Host PC – This will be a laptop used to interface with the Xbox controller and to monitor the status of the realtime (RT) system.

- Realtime OS – This is the Linux-based real-time operating system of the myRIO. This is where the majority of the processing for the PID control and machine vision will be done.

- FPGA – The low-level code for controlling the servo motors will be run here to maximise speed and determinism.

The high-level overview of the system can be seen below:

Background

Pulse Width Modulation

The servo motors must be supplied with a 50 Hz control signal; the angle of each servo is set by modulating how long the digital signal is on versus off in each period. This is commonly referred to as pulse width modulation (PWM):

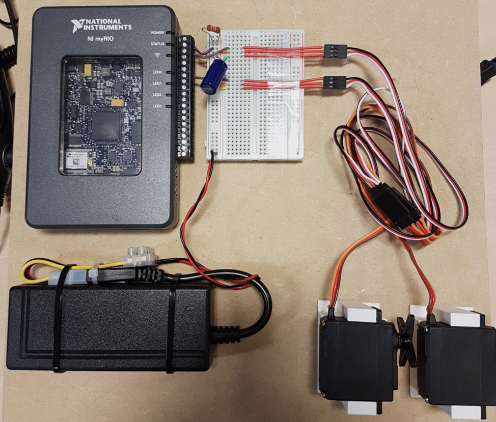
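
As a worked example of the PWM arithmetic: at 50 Hz each period lasts 20 ms, and the servo angle is encoded in the high time within that period. The sketch below assumes a servo that maps 500 µs to 0° and 2500 µs to 180°; these limits vary between servo models, so the datasheet of the servos actually used should be checked. The function names are hypothetical.

```python
PWM_FREQUENCY_HZ = 50
PERIOD_US = 1_000_000 / PWM_FREQUENCY_HZ  # 20 000 us (20 ms) per period

# Assumed pulse limits for a 180-degree hobby servo; check the datasheet.
MIN_PULSE_US = 500.0   # pulse width for 0 degrees
MAX_PULSE_US = 2500.0  # pulse width for 180 degrees


def angle_to_pulse_us(angle_deg: float) -> float:
    """Linearly map a servo angle (0-180 degrees) to a high time in microseconds."""
    angle = min(max(angle_deg, 0.0), 180.0)  # clamp to the servo's travel
    return MIN_PULSE_US + (MAX_PULSE_US - MIN_PULSE_US) * angle / 180.0


def duty_cycle(pulse_us: float) -> float:
    """Fraction of each 20 ms period for which the signal is high."""
    return pulse_us / PERIOD_US
```

Under these assumptions, 90° corresponds to a 1500 µs pulse, i.e. a duty cycle of 1500 / 20 000 = 7.5%.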

The digital output pins on the myRIO can provide this 50 Hz PWM signal, so connecting them to the corresponding signal pins on the servo motors allows their angle to be controlled. The power for the servos is delivered by the 5 V power supply shown below:

Building the plate system

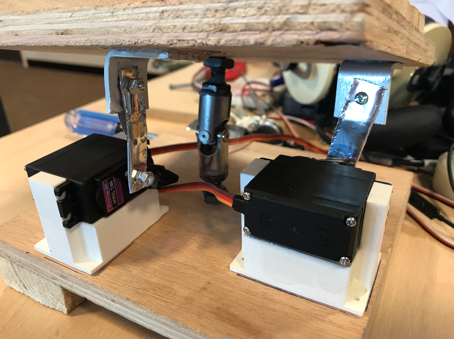

A universal joint in the centre of the system allows movement in the X and Y axes whilst preventing any unwanted rotation.

Mounts were designed and fabricated for each of the servo motors using a 3D printer; these raised the servos above the base plate and allowed the full 180-degree rotation required to move the platform.

Software Architecture

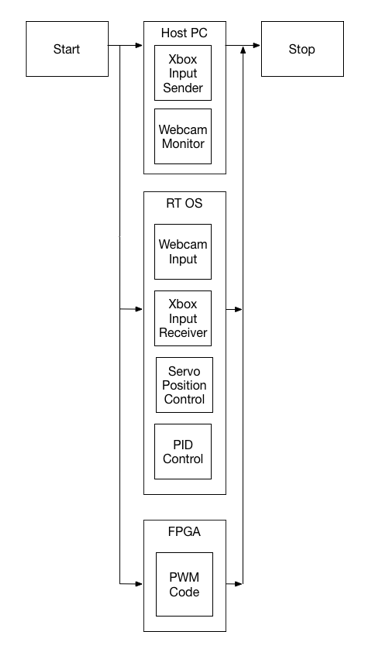

Once the high-level system structure has been established, we must plan how each system will operate and how the systems will integrate. From this we can begin planning the functions and code modules we will require. As most of these systems are co-dependent and must run in parallel, ensuring that they execute in the correct order and at the correct rate is critical.
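
One common way to let co-dependent loops run in parallel is for the producer (here, the vision loop) to publish only its latest result and for the consumer (the control loop) to act on it whenever fresh data arrives, dropping stale frames rather than queueing them. This is roughly what a LabVIEW notifier provides. The Python sketch below illustrates that pattern with threads; it is an assumption about how such loops could communicate, not a description of the author's execution diagram, and `LatestValue` and `run_demo` are hypothetical names.

```python
import threading
import time
from typing import Generic, List, Optional, Tuple, TypeVar

T = TypeVar("T")


class LatestValue(Generic[T]):
    """Single-slot mailbox: each write overwrites the previous value."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._value: Optional[T] = None
        self._seq = 0  # incremented on every write so readers can spot new data

    def write(self, value: T) -> None:
        with self._lock:
            self._value = value
            self._seq += 1

    def read(self) -> Tuple[Optional[T], int]:
        with self._lock:
            return self._value, self._seq


def run_demo(n_frames: int = 5) -> List[Tuple[int, int]]:
    """Run a producer (vision) and a consumer (control) loop in parallel."""
    ball: LatestValue[Tuple[int, int]] = LatestValue()
    handled: List[Tuple[int, int]] = []

    def vision_loop() -> None:
        for i in range(n_frames):
            ball.write((i, i))  # stand-in for a tracked ball position
            time.sleep(0.001)

    def control_loop() -> None:
        last_seq = 0
        while last_seq < n_frames:
            value, seq = ball.read()
            if seq != last_seq and value is not None:  # act only on fresh data
                handled.append(value)
                last_seq = seq
            time.sleep(0.0005)

    threads = [threading.Thread(target=vision_loop), threading.Thread(target=control_loop)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return handled
```

The control loop may skip intermediate frames, but it always ends up acting on the newest position, which is usually preferable to working through a backlog of old ones.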

Below is an execution diagram from the planning phase of this project:

LabVIEW Architecture

Once we have established the architecture of the software, we begin to build it in LabVIEW. To make the project as scalable, maintainable and upgradable as possible, we use a modular approach to software development, containing our code within discrete functions, known in LabVIEW as subVIs.

Host PC

The host PC runs the code for monitoring the current position of the ball on the plate, as well as the code for interfacing with the Xbox controller. This is shown below:

Xbox Controller Interface
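
The post does not detail how stick positions become servo commands. A minimal sketch, assuming XInput-style thumbstick values (signed 16-bit, -32768 to 32767) and XInput's suggested left-stick dead zone of 7849, maps one stick axis to a servo angle around a level-plate position. The 90° centre and ±30° travel are hypothetical values, not taken from the project.

```python
STICK_MAX = 32767      # XInput thumbstick axes report -32768..32767
DEADZONE = 7849        # XInput's suggested left-thumbstick dead zone
CENTRE_DEG = 90.0      # hypothetical servo angle for a level plate
MAX_OFFSET_DEG = 30.0  # hypothetical maximum deflection either side of level


def stick_to_servo_angle(raw: int) -> float:
    """Map a raw thumbstick axis reading to a servo angle in degrees."""
    if abs(raw) < DEADZONE:
        return CENTRE_DEG  # ignore small stick drift around the rest position
    sign = 1.0 if raw > 0 else -1.0
    # Rescale the live range [DEADZONE, STICK_MAX] to [0, 1] so the output
    # changes smoothly from the edge of the dead zone.
    magnitude = (min(abs(raw), STICK_MAX) - DEADZONE) / (STICK_MAX - DEADZONE)
    return CENTRE_DEG + sign * magnitude * MAX_OFFSET_DEG
```

Rescaling from the edge of the dead zone, rather than from zero, avoids a sudden jump in servo angle when the stick first leaves the dead zone.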

Realtime Code

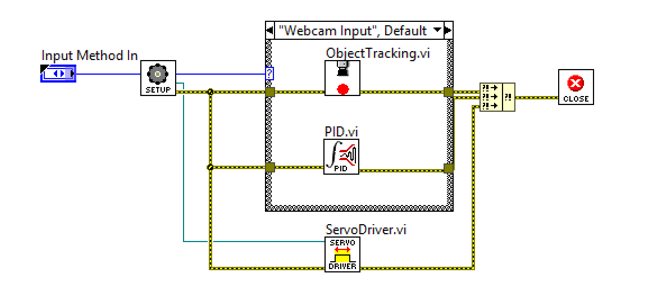

This is where the majority of the processing in this system is done. The top-level VI for the RT system, along with each subVI, is shown below:

|

Main VI |

|

|

Object Tracking |

|

|

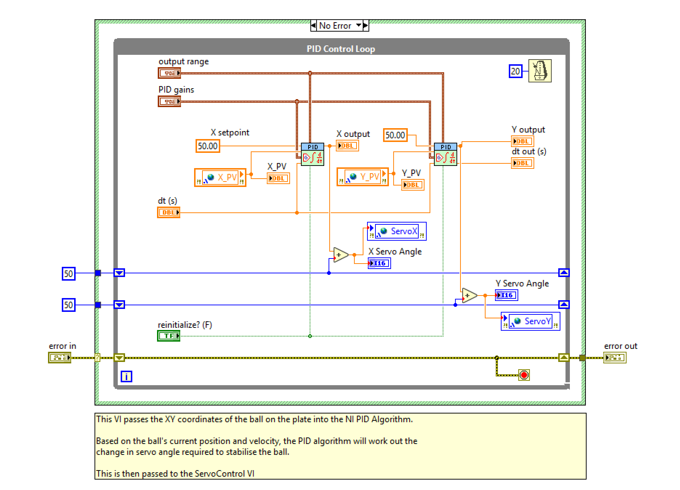

PID Control |

|

|

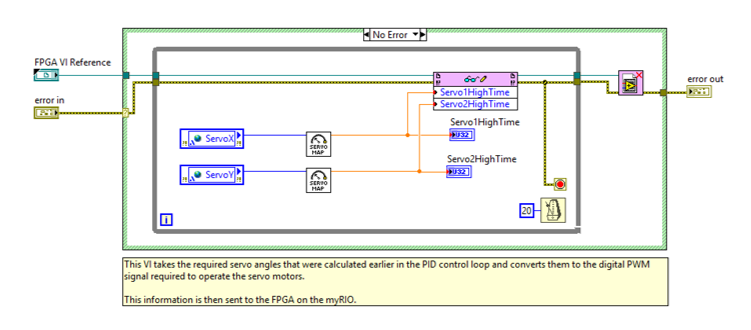

Servo Driver |

|

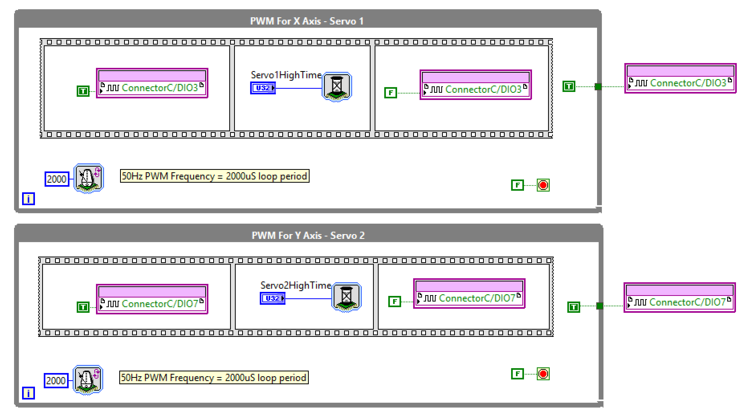
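
The PID Control subVI is only shown as a LabVIEW diagram. As a language-neutral illustration, here is a minimal discrete PID sketch in Python, assuming one controller per plate axis, a ball-position error in pixels as the input and a servo angle offset in degrees as the output. The clamping-based anti-windup and the class name are choices made for this sketch, not details from the project.

```python
from typing import Optional


class PID:
    """Discrete PID controller with output limits and clamping anti-windup."""

    def __init__(self, kp: float, ki: float, kd: float, dt: float,
                 out_min: float, out_max: float) -> None:
        self.kp, self.ki, self.kd = kp, ki, kd
        self.dt = dt  # loop period in seconds
        self.out_min, self.out_max = out_min, out_max
        self.integral = 0.0
        self.prev_error: Optional[float] = None

    def update(self, setpoint: float, measurement: float) -> float:
        error = setpoint - measurement
        # No derivative on the first call, to avoid a spike from unknown history.
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / self.dt
        self.prev_error = error
        candidate = self.integral + error * self.dt
        output = self.kp * error + self.ki * candidate + self.kd * derivative
        if output > self.out_max:
            return self.out_max  # saturated: keep the old integral (anti-windup)
        if output < self.out_min:
            return self.out_min
        self.integral = candidate  # only integrate while the output is unsaturated
        return output
```

Each control-loop iteration would feed the tracked ball position into `update()` and add the result to the level-plate servo angle for that axis.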

FPGA Code

The FPGA code is low-level code that controls the state of the DIO pins on the myRIO. The values for ServoHighTime are sent to the FPGA via a DMA FIFO from the servo driver code on the RT target. This is shown below:
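
PWM generation on an FPGA is commonly built around a free-running counter: the pin is driven high while the counter is below the high time and low for the rest of the period. The Python sketch below models that logic tick by tick. It assumes ServoHighTime is expressed in FPGA clock ticks, a 40 MHz clock, and that a new value read from the DMA FIFO is applied only at the start of a period; the post does not state the units or update policy, and the helper names are hypothetical.

```python
from typing import Iterable, Iterator

FPGA_CLOCK_HZ = 40_000_000  # assumed FPGA base clock
PWM_FREQUENCY_HZ = 50


def period_ticks(clock_hz: int = FPGA_CLOCK_HZ, pwm_hz: int = PWM_FREQUENCY_HZ) -> int:
    """Number of clock ticks in one PWM period (800 000 at 40 MHz / 50 Hz)."""
    return clock_hz // pwm_hz


def us_to_ticks(pulse_us: float, clock_hz: int = FPGA_CLOCK_HZ) -> int:
    """Convert a pulse width in microseconds to whole clock ticks."""
    return round(pulse_us * clock_hz / 1_000_000)


def pwm_stream(high_times: Iterable[int], period: int) -> Iterator[bool]:
    """Yield the DIO pin level (True = high) for every tick, one period per high time.

    Each high time stands in for a value read from the DMA FIFO; it is only
    applied at the start of a period so a pulse is never cut short.
    """
    for high in high_times:
        high = max(0, min(high, period))  # cannot exceed one full period
        for counter in range(period):
            yield counter < high
```

Latching the new high time only at a period boundary is one way to avoid glitched pulses when the RT code updates the value mid-period.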

Testing

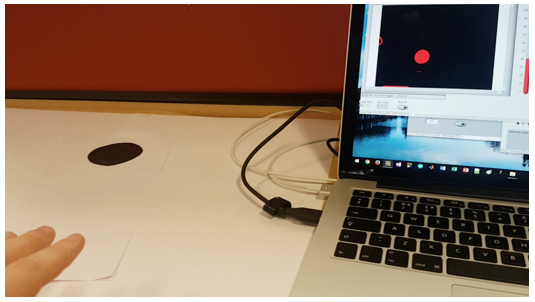

Once the system was built, the final step was to test it. Below are some photos from the testing phase of this project.

At this stage, an Xbox controller was used in place of the webcam input to simplify testing of the plate system. For this, I attached a simple ball maze game to the plate, allowing players to complete the game using the controller.

The machine vision system was first tested with a black paper dot before moving on to a squash ball:

Next Steps:

Now that all of the systems have been built, the final step in this project will be tuning the PID controller to achieve a stable platform.
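
Before tuning on the hardware, candidate gains can be tried on a simple model. The sketch below simulates one plate axis, assuming a ball that rolls without slipping like a solid sphere (acceleration = 5/7 · g · sin θ), no actuator or camera delay, and a PD law acting directly on the plate tilt. A squash ball is hollow, so its true factor differs, and the gains used here are illustrative rather than values from the project.

```python
import math
from typing import List

G = 9.81                    # gravitational acceleration, m/s^2
ROLLING_FACTOR = 5.0 / 7.0  # solid sphere rolling without slipping (assumption)


def simulate(kp: float, kd: float, x0: float = 0.1, dt: float = 0.01,
             duration: float = 5.0, max_tilt: float = 0.3) -> List[float]:
    """Return the ball position (m) at every step of a 1-D ball-on-plate run.

    kp is in rad/m and kd in rad*s/m; the setpoint is the plate centre (x = 0).
    """
    x, v = x0, 0.0
    trace: List[float] = []
    for _ in range(int(round(duration / dt))):
        tilt = -(kp * x + kd * v)                    # PD law, tilt in radians
        tilt = max(-max_tilt, min(max_tilt, tilt))   # plate travel limit
        a = ROLLING_FACTOR * G * math.sin(tilt)      # ball acceleration along the plate
        v += a * dt                                  # semi-implicit Euler step
        x += v * dt
        trace.append(x)
    return trace
```

In this idealised model, `simulate(1.0, 0.6)` settles close to the centre within a few seconds, whereas `simulate(1.0, 0.0)` keeps oscillating, which illustrates why derivative action matters for this system.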

Source Code:

https://github.com/michaelstewart1995/VisionBalance


Great job. Could you please tell me what sort of color camera (for wall detection and ball position) I should use for a LEGO maze?

Link: http://foto.askix.com/upload/8/7b/87ba0df9a831b4918f527f14abf3c6ae.jpg. Also, what camera specifications should I be looking at?

My project consists of solving a maze using image processing.

Thanks


Hi, I really like this project and I'm trying to build it myself, using your code to understand the logic behind the project. Some subVIs are missing from the GitHub repository; is there any chance I could get them? Thank you!!