
USRP 2954R NI Simple Streaming Example Changing Relative Phase

11-25-2019 11:05 AM


Hi,

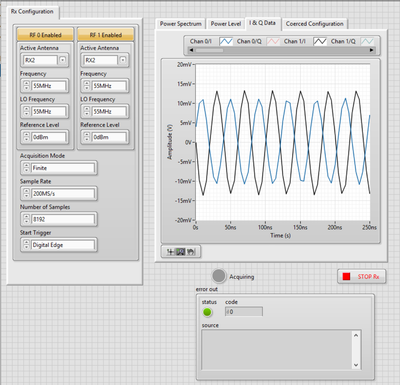

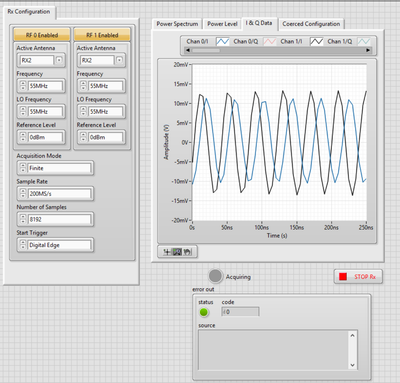

When using the Rx Streaming VI in the NI Simple Streaming example project, the relative phase between RF0 and RF1 seems to change. My setup is described below. I stopped the acquisition (and thus the application), restarted it, and stopped it again, taking a screenshot each time, and the phase difference is different between the captures. The change is also visible while the application is running.

The Rx inputs are connected directly to a function generator at 80 MHz, with a single output split and sent to RF0 and RF1. I understand that a fixed phase difference between the channels is possible, but this phase difference changes with time, which is not acceptable for my application. Any ideas on what is causing it?

12-02-2019 08:47 AM


The two channels are on different daughterboards with separate LOs, so they are tuned independently and start at a random phase relative to each other.

This happens every time you change the frequency. For your application, I recommend tuning both channels once, measuring the phase difference, and then correcting it digitally. Don't re-tune after you have done this calibration.
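The tune-once, measure, then correct-digitally procedure could look roughly like the sketch below. It assumes the IQ samples from RF0 and RF1 have already been exported as complex arrays (for example, from the example VI's IQ output); the function names and the simulated 0.7 rad offset are hypothetical and not part of any NI API. The offset is taken as the angle of the averaged cross-product, which averages out noise better than comparing single samples.

```python
import numpy as np

def estimate_relative_phase(rf0: np.ndarray, rf1: np.ndarray) -> float:
    """Phase of rf1 relative to rf0, in radians, in (-pi, pi]."""
    # Averaging rf1 * conj(rf0) suppresses noise; its angle is the phase offset.
    return float(np.angle(np.mean(rf1 * np.conj(rf0))))

def correct_phase(rf1: np.ndarray, offset_rad: float) -> np.ndarray:
    """Rotate rf1 by -offset_rad so it lines up in phase with rf0."""
    return rf1 * np.exp(-1j * offset_rad)

# Hypothetical simulated capture: the same tone on both channels,
# with RF1 offset by a fixed 0.7 rad (as after a one-time tune).
fs = 1e6                      # assumed sample rate, Hz
n = np.arange(10_000)
tone = np.exp(1j * 2 * np.pi * 10e3 * n / fs)  # assumed 10 kHz baseband tone
rf0 = tone
rf1 = tone * np.exp(1j * 0.7)

offset = estimate_relative_phase(rf0, rf1)  # measured once after tuning
aligned = correct_phase(rf1, offset)        # applied to every later block
```

This correction only holds while the offset stays constant; any re-tune restarts the LOs at a new random phase, so the measurement must be repeated.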

12-03-2019 09:23 AM


That makes sense, but what I seem to be seeing is a relative phase difference between the two channels that changes with time. It could just be a visual persistence effect or a display-rate issue on the IQ plot, but the phase difference between the I plot for RF0 and the I plot for RF1 is not constant in time for a given set of parameters (frequency, sample rate, LO, number of samples, acquisition type, etc.).
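One way to rule out a display artifact is to measure the relative phase numerically over consecutive blocks of the captured data instead of judging it from the plot. The sketch below assumes both channels' IQ samples are available as complex arrays; the function names, block length, and simulated 5 Hz offset are hypothetical. A flat phase track means the offset is constant and a one-time correction would work; a steady non-zero slope means the two channels are seeing slightly different frequencies.

```python
import numpy as np

def blockwise_relative_phase(rf0, rf1, block_len):
    """Relative phase (radians) of rf1 vs rf0 for each consecutive block."""
    n_blocks = min(len(rf0), len(rf1)) // block_len
    phases = np.empty(n_blocks)
    for k in range(n_blocks):
        s = slice(k * block_len, (k + 1) * block_len)
        phases[k] = np.angle(np.sum(rf1[s] * np.conj(rf0[s])))
    # Remove 2*pi jumps so a drifting phase shows up as a straight line.
    return np.unwrap(phases)

def drift_rate(phases, block_len, fs):
    """Least-squares slope of the phase track, in radians per second."""
    t = np.arange(len(phases)) * block_len / fs
    slope, _intercept = np.polyfit(t, phases, 1)
    return float(slope)

# Hypothetical simulated capture: RF1 has a 0.3 rad offset plus a
# 5 Hz frequency error, so its phase drifts at 2*pi*5 rad/s.
fs = 1e6
n = np.arange(200_000)
rf0 = np.exp(1j * 2 * np.pi * 10e3 * n / fs)
rf1 = rf0 * np.exp(1j * (0.3 + 2 * np.pi * 5 * n / fs))

track = blockwise_relative_phase(rf0, rf1, block_len=1000)
rate = drift_rate(track, block_len=1000, fs=fs)  # about 31.4 rad/s here
```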