FPGA USB-7855R - Setting input voltage range has no effect.

Solved! 03-12-2019 09:06 AM

Hi!

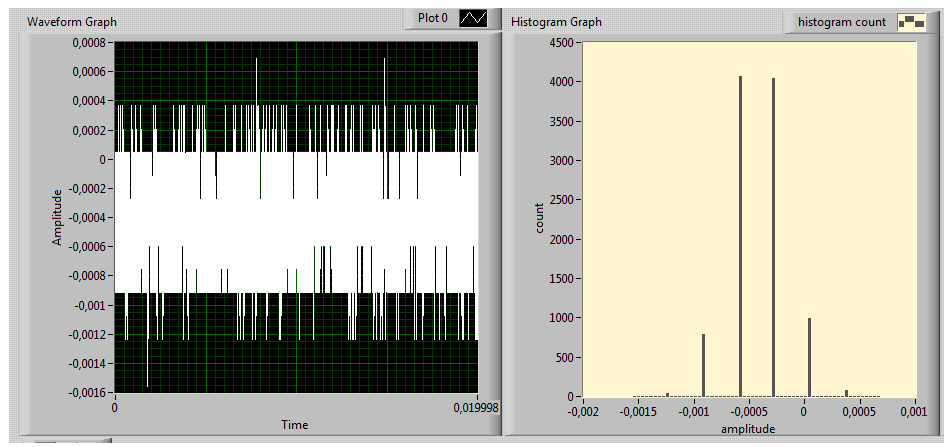

It seems that setting the IO range for my FPGA (USB-7855R) in the project explorer has no effect; When I terminate AI0 with a resistor and measure the 0 Volt baseline with a frequency of 0.5 MHz, I get a standard deviation of 0.3 mV and a quantisation of 0.3 mV/bit, consistent with the 10 mV range, both when I select this range and the 1 +/- V range. Does anyone have any thoughts? The graphs below show measured voltage and the corresponding histogram, which look the same for all voltage ranges.

Cheers,

Marco
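
The 0.3 mV/bit figure can be checked against the ideal step size of each input range. The sketch below is not from the original post: it assumes a 16-bit ADC and bipolar ranges of ±1, ±2, ±5 and ±10 V, and the names `ADC_BITS`, `RANGES_V` and `lsb_volts` are made up for illustration.

```python
# Assumption: 16-bit ADC and bipolar ranges of +/-1, +/-2, +/-5, +/-10 V.
# Check these values against the device specifications before relying on them.
ADC_BITS = 16
RANGES_V = [1.0, 2.0, 5.0, 10.0]


def lsb_volts(half_range_v, bits=ADC_BITS):
    """Ideal step size (volts per bit) for a bipolar range of +/-half_range_v."""
    return 2.0 * half_range_v / 2 ** bits


# Step size for every assumed range, keyed by the half-range in volts.
lsb_by_range = {r: lsb_volts(r) for r in RANGES_V}

for r, lsb in lsb_by_range.items():
    print(f"+/-{r:g} V range: {lsb * 1e3:.4f} mV/bit")
```

Under these assumptions only the ±10 V range gives a step of about 0.3 mV/bit. The ±1 V range would give roughly 0.03 mV/bit, so an unchanged 0.3 mV step suggests the range setting is not being applied.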

03-12-2019 12:34 PM

Are you recompiling between tests? Some code would be helpful so we can see where the scaling comes in.

03-12-2019 02:07 PM

Hi,

Yes, the LabVIEW IDE forces a recompile after the IO range is changed through the project explorer, which is the only place I know of to change this setting; I don't set it anywhere in the code. (For clarity, this is when you right-click on the target and then click 'Change IO settings', which brings up a pop-up where you can set the range for each individual IO channel.) I'll be happy to post some code tomorrow, however, if you think it would be helpful.

Cheers,

Marco

03-13-2019 05:39 AM

Hi again,

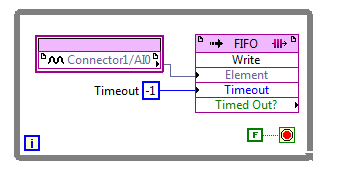

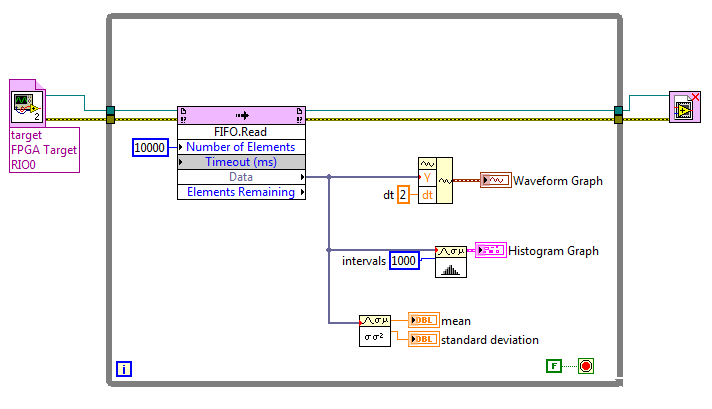

This is the target code (not very exciting!)

And this is the host code:

As you can see, there's nothing spectacular happening here :o). I've attached a zip file with the project files.

03-13-2019 02:16 PM - edited 03-13-2019 02:17 PM

It sounds like an NI bug in propagating the settings. Try this: instead of changing the input range from the project, you can use a property node in the FPGA code.

http://zone.ni.com/reference/en-XX/help/371599P-01/lvfpga/io_property_node/

http://zone.ni.com/reference/en-XX/help/371508V-01/target2devicehelp/ni_usb-7855r_oem/
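
Whichever way the range is set, one rough way to check whether it took effect is to estimate the quantisation step from the recorded baseline itself. The sketch below is only an illustration and not part of the original thread: `estimate_step` and `synthetic_baseline` are hypothetical helpers, and the synthetic data (a 16-bit ADC with 0.3 mV RMS noise) stands in for samples read from AI0.

```python
import random


def estimate_step(samples, tol=1e-9):
    """Smallest gap between distinct sample values: a rough estimate of volts per bit."""
    levels = sorted(set(samples))
    gaps = [b - a for a, b in zip(levels, levels[1:]) if b - a > tol]
    return min(gaps) if gaps else None


def synthetic_baseline(lsb, n=10_000, noise_rms=0.3e-3, seed=0):
    """Hypothetical stand-in for measured data: Gaussian noise quantised to `lsb` volts."""
    rng = random.Random(seed)
    return [round(rng.gauss(0.0, noise_rms) / lsb) * lsb for _ in range(n)]


# Assumption: 16-bit ADC, so the ideal steps are 20 V / 2**16 and 2 V / 2**16.
step_10v = estimate_step(synthetic_baseline(20.0 / 2 ** 16))
step_1v = estimate_step(synthetic_baseline(2.0 / 2 ** 16))
print(f"+/-10 V: {step_10v * 1e3:.4f} mV/bit, +/-1 V: {step_1v * 1e3:.4f} mV/bit")
```

With real data, pass the recorded voltages to `estimate_step` after each recompile. If the estimate stays near 0.3 mV when the ±1 V range is selected, the setting is still not reaching the hardware.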