From Friday, April 19th (11:00 PM CDT) through Saturday, April 20th (2:00 PM CDT), 2024, ni.com will undergo system upgrades that may result in temporary service interruption.

We appreciate your patience as we improve our online experience.

04-13-2021 03:28 PM

Hi.

I am trying to use a shunt to measure current.

This is the setup:

- cRIO 9045

- Module 9205

- 1 Mohm resistor at the module

- RSB-500-100 shunt (https://www.micro-semiconductor.com/products/Riedon/RSB-500-100)

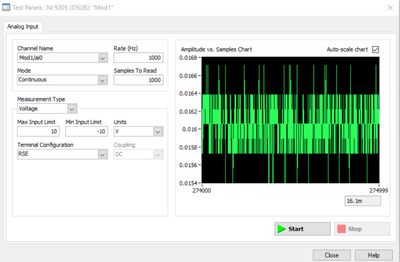

Unfortunately, the raw readings are very noisy. After applying the multiplier to convert the raw voltage to current, I end up with basically useless data because it is not accurate.

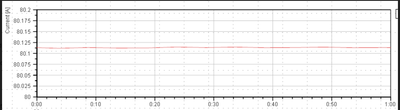

To verify that the hardware setup is correct I ran a test with a different DAQ (keysight) as well. Here is the comparison:

And this is the current:

Does anyone have an idea what might cause this?

Thanks

Matt

04-14-2021 03:52 PM

Matt,

Whilst it isn't very clear, the variability you are seeing in the voltage appears to be over a very long time period (hours). Are you sure the current isn't just varying a bit, or that these aren't effects of slowly changing environmental conditions? The DAQ trend without the variability looks to be over a much shorter time scale.

There can be all sorts of reasons why noise / variability can arise: electrical interference, the use of a multiplexed ADC, anti-aliasing issues related to sample time and frequency content, code problems (deterministic vs. non-deterministic execution, buffer overflows, etc.), or graphing issues. Most of these, though, tend to happen at high frequency or give some sort of clue in what the resulting signal looks like.

Rule out any software causes by looking at the signal in NI-MAX.

Have a think about whether the trends you are showing are actually the raw data (sample by sample), how the sample time compares with the timing of the loops gathering the data (timed loops, or while loops with a wait?), what the scan interface timing settings are, and whether the cRIO is doing anything else (is CPU load below 80%?). Also, some IO modules only support prescribed sample times.

Sorry I can't be more specific, but I hope the above gives you some things to think about.

04-15-2021 11:44 AM

Thanks for the answer.

The minimum reading was 15.7 mV and the maximum 16.4 mV. That is a delta of about 0.7 mV.
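To put that spread in terms of current, here is a rough sketch of the conversion. It assumes the shunt is rated 500 A at 100 mV, which is how I read the part number RSB-500-100; that rating is not confirmed anywhere in this thread, so check it against the datasheet. The function and constant names are just for illustration.

```python
# Assumption: RSB-500-100 is a 500 A / 100 mV shunt (read off the part number,
# not confirmed in the thread).
SHUNT_RATED_CURRENT_A = 500.0
SHUNT_RATED_VOLTAGE_V = 0.100


def shunt_current(voltage_v):
    """Convert the voltage across the shunt (in volts) to current (in amps)."""
    return voltage_v * SHUNT_RATED_CURRENT_A / SHUNT_RATED_VOLTAGE_V


# Minimum and maximum readings quoted above.
v_min = 15.7e-3
v_max = 16.4e-3

i_min = shunt_current(v_min)
i_max = shunt_current(v_max)

print(f"min current: {i_min:.1f} A")
print(f"max current: {i_max:.1f} A")
print(f"spread:      {i_max - i_min:.1f} A")
```

Under that assumption, a 0.7 mV spread in the raw voltage works out to a spread of about 3.5 A in the calculated current.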

04-15-2021 03:06 PM

Turns out that changing the input and output limit to +/- 200 mV makes a big difference:
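A possible reason the range matters so much, sketched as arithmetic: the 9205 is a 16-bit module, so the size of one ADC code scales with the selected range. The sketch below only computes the ideal code width; it assumes the earlier measurements used the ±10 V range (not stated in this thread) and reuses the assumed 500 A / 100 mV shunt rating. Real noise also depends on the module's per-range noise figures, which this does not model.

```python
ADC_BITS = 16  # NI 9205 resolution

# Assumption: 500 A / 100 mV shunt, i.e. 5000 A per volt across the shunt.
AMPS_PER_VOLT = 500.0 / 0.100


def lsb_volts(full_scale_v, bits=ADC_BITS):
    """Ideal width of one ADC code for a bipolar +/- full_scale_v range."""
    return 2 * full_scale_v / 2 ** bits


# Input ranges selectable on the 9205, in volts.
for full_scale_v in (10.0, 5.0, 1.0, 0.2):
    lsb_v = lsb_volts(full_scale_v)
    lsb_a = lsb_v * AMPS_PER_VOLT
    print(f"+/-{full_scale_v:g} V: 1 code = {lsb_v * 1e6:.1f} uV = {lsb_a:.3f} A")
```

On the ±10 V range one code is roughly 305 µV, which is a large fraction of the 0.7 mV spread, whereas on the ±200 mV range it is roughly 6 µV, a factor of 50 finer.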