The Concept of Block Diagram Time


Hello Lovelies,

The main purpose of Software Design is to break a large problem into smaller, more manageable problems. Up until now we've concentrated on actual Computer Science based design techniques and metrics like Coupling and Cohesion, along with various other principles. We also apply heuristics such as "globals are bad" or "a block diagram should fit on a single page".

I think there is a concept specific to graphical programming, and it has to do with block diagram time.

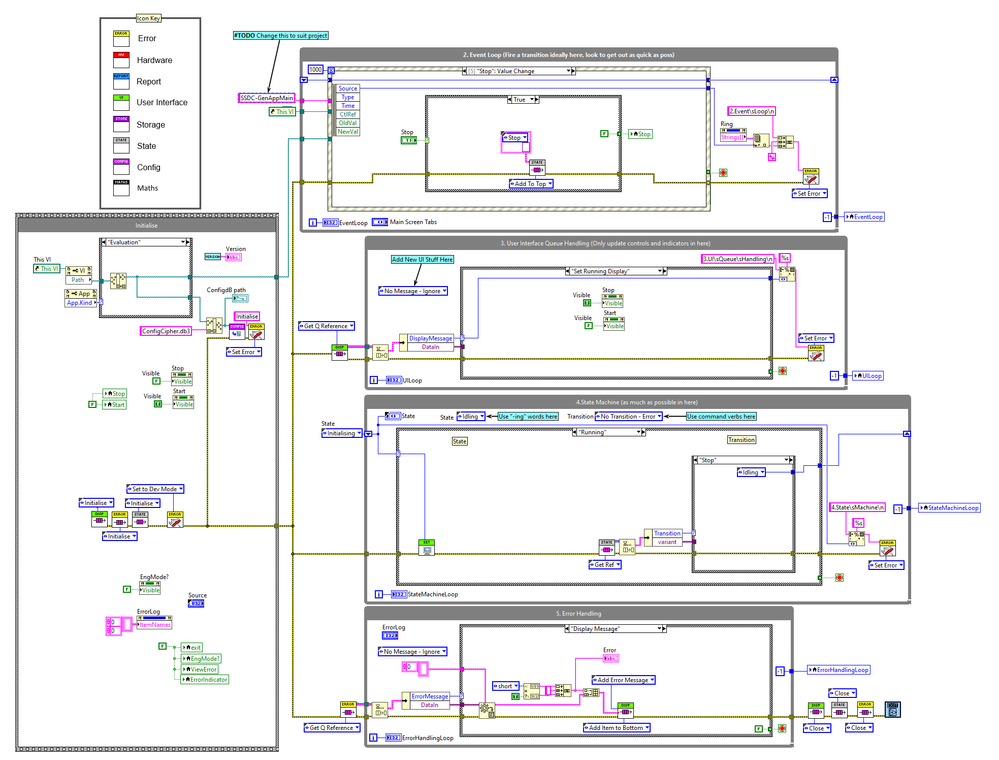

If you look at an SSDC design, it will consist of several loops, most of which do their work in a very short period of time:

1. Initialisation Structure

2. Event Loop

3. UI Loop

4. State Machine Loop

5. Error Handling Loop

The only one that is busy is the state machine loop. This means that three of the loops can pretty much be ignored. The UI loop is only updating the display, so if that works it can be ignored. The event loop is only updating a display or firing off a transition. The error loop only runs when an error occurs, and that error is displayed on the front panel. One of the reasons they can be ignored is that what they are doing is so trivial.
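LabVIEW diagrams don't translate directly into text, but the shape of the design above can be roughly sketched in Python with one thread per loop and queues between them. This is an analogy under stated assumptions, not SSDC's actual template: the function names, the `"exit"` sentinel and the simulated `"fail"` state are all hypothetical. Notice how little the event, UI and error loops do compared with the state machine loop.

```python
import queue
import threading

def event_loop(events, state_q):
    """Event loop: only turns user events into transition requests."""
    for event in events:
        state_q.put(event)
    state_q.put("exit")  # hypothetical sentinel telling the state machine to stop

def ui_loop(ui_q, display):
    """UI loop: only writes messages to the 'front panel' (a list here)."""
    while (msg := ui_q.get()) is not None:
        display.append(msg)

def error_loop(err_q, error_log):
    """Error loop: only wakes up when an error is posted."""
    while (err := err_q.get()) is not None:
        error_log.append(err)

def state_machine_loop(state_q, ui_q, err_q):
    """State machine loop: the only loop doing real, time-consuming work."""
    while (state := state_q.get()) != "exit":
        try:
            if state == "fail":  # hypothetical state that simulates a fault
                raise ValueError(f"fault in state {state!r}")
            ui_q.put(f"handled {state}")  # real work would go here
        except ValueError as exc:
            err_q.put(str(exc))

def run(events):
    # Initialisation structure: create the queues and start the loops.
    state_q, ui_q, err_q = queue.Queue(), queue.Queue(), queue.Queue()
    display, error_log = [], []
    workers = [
        threading.Thread(target=ui_loop, args=(ui_q, display)),
        threading.Thread(target=error_loop, args=(err_q, error_log)),
        threading.Thread(target=state_machine_loop, args=(state_q, ui_q, err_q)),
    ]
    for w in workers:
        w.start()
    event_loop(events, state_q)  # the event loop runs in the main thread
    workers[2].join()            # wait for the state machine to finish
    ui_q.put(None)               # then shut down the trivial loops
    err_q.put(None)
    workers[0].join()
    workers[1].join()
    return display, error_log
```

Calling `run(["start", "fail", "stop"])` puts two "handled" messages on the display and one entry in the error log. When something goes wrong, `state_machine_loop` is the only function that needs close reading; the other three are short enough to verify at a glance.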

Straight away this simplifies debugging.

This can even be applied to the state machine itself: break it into meaningful states that each do only one thing, in a predictable time period (i.e. don't put your massive diagram in a "running" state and then do everything in there!).
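As a minimal sketch of that idea, assuming a simple dictionary-dispatch state machine in Python (the state names, the three-sample loop and the 10 ms budget are illustrative, not taken from any NI or SSDC template): each state does one small job and returns the name of the next state, and the runner times every state so that one which overruns its budget stands out as doing too much.

```python
import time

# Hypothetical states: each does one small job and names the next state.
def init_state(ctx):
    ctx["samples"] = []
    return "acquire"

def acquire_state(ctx):
    ctx["samples"].append(len(ctx["samples"]))  # stand-in for one short read
    return "acquire" if len(ctx["samples"]) < 3 else "analyse"

def analyse_state(ctx):
    ctx["mean"] = sum(ctx["samples"]) / len(ctx["samples"])
    return "stop"

STATES = {"init": init_state, "acquire": acquire_state, "analyse": analyse_state}

def run_state_machine(budget_s=0.01):
    """Runs states until 'stop', recording the order they ran in."""
    ctx, state, trace = {}, "init", []
    while state != "stop":
        start = time.perf_counter()
        next_state = STATES[state](ctx)
        elapsed = time.perf_counter() - start
        if elapsed > budget_s:
            # A state that overruns its budget is a hint it is doing too much.
            print(f"warning: state {state!r} took {elapsed:.6f}s")
        trace.append(state)
        state = next_state
    return ctx, trace
```

Because every state is short and single-purpose, the trace (`init`, three `acquire`s, `analyse`) reads like a timeline, and you can reason about each step without holding the whole machine in your head.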

One heuristic we always break has to do with local variables. LabVIEW programmers wire themselves in knots to avoid using them, which is a shame because they are a tidy and descriptive way to access local data. We can break this rule because of these short time periods. The difficulty with accessing a local variable is knowing that the data you are acting on is relevant and timely. If you keep your access to a local variable within a short period of block diagram time, you will make it more predictable and therefore easier to use.
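Python has no direct equivalent of a LabVIEW local variable, so the sketch below uses a shared dictionary as a hypothetical stand-in for a front-panel control and simulates another loop writing to it with a callback. It shows the timeliness problem described above: a long section that reads the value early and again later can see two different values, whereas a short section reads once and acts straight away. With real concurrency, keeping the section short narrows the window for stale data rather than eliminating it entirely.

```python
# Hypothetical stand-in for a front-panel control read through a local variable.
panel = {"setpoint": 10.0}

def long_section(other_loop_runs):
    """Reads the 'local' early, does lots of other work, then reads it again."""
    first = panel["setpoint"]
    other_loop_runs()             # simulates another loop writing while we are busy
    second = panel["setpoint"]
    return first, second          # the two reads may no longer agree

def short_section():
    """Reads the 'local' once and acts on it straight away."""
    setpoint = panel["setpoint"]
    return setpoint * 2           # stand-in for one quick action on timely data
```

For example, `long_section(lambda: panel.update(setpoint=12.5))` returns `(10.0, 12.5)`: the section's two reads disagree because its block diagram time was long enough for something else to happen in between.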

This is where the concept of block diagram time equates nicely with the concept of cohesion. We've all seen the large block diagram that's doing lots of stuff; here's a link where you can find plenty of examples.

They become complex because we cannot fit everything that's going on in the diagram into our heads. So if a diagram encapsulates seconds of computer time, knowing what is happening at one point relative to another is very difficult. Breaking our block diagram into defined sections that run in microseconds allows our brains to keep up, and also simplifies certain operations to the point where we can ignore them.

I'm at a very early stage of thinking about this, but I think it may really help us understand why a design gets complex. The next part I need to get my head around is applying time-based interactions across an entire system.

Anyway, it's the seed of a concept!

Lots of Love

Steve

Opportunity to learn from experienced developers/entrepreneurs (Fab, Joerg and Brian amongst them):

DSH Pragmatic Software Development Workshop
