Hello everyone,

I'm having trouble implementing an IIR filter on my FPGA board.

I'm using the following hardware:

- PXIe-7965R

- NI 5762-02 module (16-bit digitizer)

The IIR filter is used as a DC-blocking filter. It must process 662-point records at a 125 MHz clock rate.

a = 0.97845459
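Because the FPGA works in fixed point, it may be worth checking how this coefficient is stored there. The sketch below assumes a signed 16-bit word with 15 fractional bits (LabVIEW FPGA notation `<+/-,16,1>`); that format is an assumption, not something taken from the attached VI. Under it, a = 0.97845459 is almost exactly 32062 / 2^15, so coefficient rounding alone should not explain a large difference.

```python
# Assumed coefficient format: signed 16-bit fixed point, 15 fractional bits.
A = 0.97845459
FRAC_BITS = 15

a_int = round(A * 2**FRAC_BITS)   # integer code that would be stored on the FPGA
a_quant = a_int / 2**FRAC_BITS    # value that code actually represents
error = A - a_quant               # rounding error introduced by quantization

print(a_int, a_quant, error)
```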

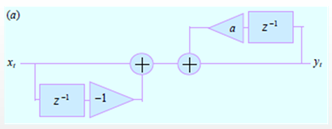
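A floating-point reference model is handy for comparing FPGA output sample by sample. This sketch assumes the pictured filter is the standard first-order DC blocker H(z) = (1 - z^-1) / (1 - a z^-1), i.e. y[n] = x[n] - x[n-1] + a·y[n-1], with zero initial state at the start of each record; the function name `dc_block` is hypothetical.

```python
from typing import List, Sequence

A = 0.97845459

def dc_block(x: Sequence[float], a: float = A) -> List[float]:
    """First-order DC blocker: y[n] = x[n] - x[n-1] + a*y[n-1].

    Assumes zero initial state (x[-1] = y[-1] = 0) for each record.
    """
    y: List[float] = []
    x_prev = 0.0
    y_prev = 0.0
    for xn in x:
        yn = xn - x_prev + a * y_prev   # feed-forward difference plus feedback
        y.append(yn)
        x_prev, y_prev = xn, yn         # update the two state registers
    return y

if __name__ == "__main__":
    record = [1000.0] * 662   # one 662-point record containing only a DC level
    out = dc_block(record)
    print(out[0], out[-1])    # first sample passes through, then decays toward 0
```

For a constant input the output is 1000·a^n, so the DC level decays geometrically, which is what the CPU result should show.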

I implemented this filter in LabVIEW on the CPU, and it works fine:

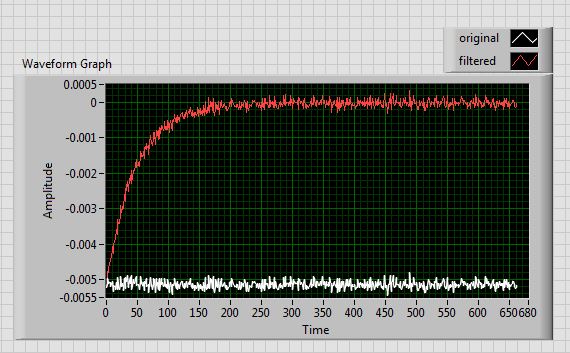

The result of this filter is:

Everything is fine there, but the output on the FPGA is quite different.

My FPGA code is attached, and here is a screenshot of it:

Throughput = 1

Each High Throughput Math block has a latency of 1 clock cycle.
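One thing that may be worth ruling out: with Throughput = 1 a new sample enters every cycle, so if the multiply and add in the feedback path add latency, the value fed back may no longer be y[n-1]. The sketch below is a hypothetical model, not a description of the attached VI: `loop_delay` is the number of samples by which the fed-back output lags, and `loop_delay = 1` is the intended filter.

```python
from collections import deque
from typing import List, Sequence

A = 0.97845459

def dc_block_with_loop_delay(x: Sequence[float], a: float, loop_delay: int) -> List[float]:
    """y[n] = x[n] - x[n-1] + a*y[n-loop_delay].

    loop_delay = 1 is the correct DC blocker; larger values model a
    (hypothetical) pipelined feedback path whose result arrives late.
    """
    if loop_delay < 1:
        raise ValueError("loop_delay must be >= 1")
    y: List[float] = []
    x_prev = 0.0
    # Holds y[n-loop_delay], ..., y[n-1]; starts from zero state.
    history = deque([0.0] * loop_delay, maxlen=loop_delay)
    for xn in x:
        yn = xn - x_prev + a * history[0]   # history[0] is y[n-loop_delay]
        y.append(yn)
        history.append(yn)                  # oldest entry drops out automatically
        x_prev = xn
    return y

if __name__ == "__main__":
    step = [1000.0] * 8
    print(dc_block_with_loop_delay(step, A, 1))  # intended response
    print(dc_block_with_loop_delay(step, A, 2))  # feedback arrives one sample late
```

With a delay of 2, every other output of a step response drops to zero instead of decaying smoothly, so a mismatch in the feedback timing tends to produce a visibly different waveform.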

For the same input values, the result of the filter on the FPGA is:

Zooming in:

Does anyone have an idea of what's happening?

I'm wondering whether there is a problem when the first FIFO times out. Could the valid input data of the first Subtract block (its second input) be lost?
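The timeout concern can be modelled in a few lines. In this sketch, `None` stands for a FIFO read that timed out; the function and parameter names are hypothetical. If the filter state (x[n-1] and y[n-1]) is updated even on a timed-out read, a bogus sample enters the recursion and every later output changes. If the state is only updated on valid reads, the output matches the reference exactly.

```python
from typing import List, Optional, Sequence

A = 0.97845459

def dc_block_stream(reads: Sequence[Optional[float]], a: float, gate_on_valid: bool) -> List[float]:
    """DC blocker over FIFO reads, where None marks a read that timed out.

    gate_on_valid=False: a timed-out read is treated as a 0.0 sample and
    still updates the filter state (models an unguarded pipeline).
    gate_on_valid=True: outputs and state updates happen only for valid reads.
    """
    y: List[float] = []
    x_prev = 0.0
    y_prev = 0.0
    for r in reads:
        if r is None:
            if gate_on_valid:
                continue            # hold state, emit nothing
            r = 0.0                 # default value flows into the filter
        yn = r - x_prev + a * y_prev
        y.append(yn)
        x_prev, y_prev = r, yn
    return y

if __name__ == "__main__":
    samples = [1000.0] * 6
    reads = samples[:3] + [None] + samples[3:]   # one timeout mid-record
    print(dc_block_stream(samples, A, True))     # reference
    print(dc_block_stream(reads, A, True))       # gated: same as reference
    print(dc_block_stream(reads, A, False))      # ungated: corrupted
```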

I hope my post is understandable. Thanks in advance for any answers.

Have a nice day

Regards,