
TestStand locks up for ~3 seconds calling same VI multiple times from sub-sequence

09-24-2021 12:21 AM


Hi all, I'm starting a new post in the TestStand forum after starting one in the DQMH forum and finding that it wasn't a DQMH issue. Below is a quote from the original post:

Interesting issue when trying to send requests to a DQMH module from TestStand. Part of our software application is a single DQMH module that is used as an OPC client – it uses the OPC UA Toolkit and is compiled as a PPL. In TestStand, we are using the parallel process model, as we have 18 custom test devices for testing UUTs simultaneously. The status of these test devices is sent to the OPC client module, which in turn sets tags on the local OPC server for use by the system PLC. We have a separate DQMH module as a driver for the test devices, which we clone for each test device. TestStand is configured to use the LabVIEW Run-Time Engine as the server. Other info: TestStand 2020 (64-bit), LabVIEW 2020 SP1 (64-bit), DQMH 5.

Now, if we need to re-initialise all of these test devices (say, in response to a safety stop), they will all want to send an updated status to the OPC client module more or less simultaneously. Each test device DQMH module clone calls a TestStand sub-sequence with the updated test device status, and that sub-sequence makes a request to the OPC client module. So if 18 test devices are initialised at the same time, the 18 test device modules all call the same sub-sequence, each of which makes a request to the single OPC client, generating multiple events in the EHL of the OPC client module. This works okay for one or two test devices, but with more, the whole LabVIEW/TestStand environment seems to lock up for a few seconds while it sorts itself out. This lock-up behaviour is causing other issues elsewhere in the software, so we want to ensure that updating the OPC tags introduces no delays.

What we found after this original post was:

- DQMH was not the issue (nor was OPC). Executing any VI in this sub-sequence, when the sub-sequence was called 18 times, would cause this lock-up behaviour. The VI could be a very simple stand-alone VI that was not part of a PPL or any library.

- If we skip the VI in the sub-sequence (so the sub-sequence still executes 18 times, but does nothing), we would not see this lock-up behaviour.

- Separately, we found that calling a sub-sequence once, where that sub-sequence contained a For Loop executing the VI 18 times, could also cause the same lock-up (though less often).

We are not expecting this sort of behaviour (obviously). Has anyone else seen something similar?

09-28-2021 07:38 PM


Feedback from NI Support:

"One thing to try would be to bump the number of allowed parallel threads with this LabVIEW INI token:

ExecThreadGrowCapacity=[num]

The default on most systems is 24, so bumping to 48 or 72 or something like that would be an easy way to see if this is a threading issue. If this doesn't help, then our guess is that too much is happening in the UI thread (which I think I've heard can be a common problem with TestStand+LabVIEW setups with lots of parallelism).

If the INI token does help though, then that means there's likely a shared resource that all the parallel code instances are choking on, and identifying that resource (and wrapping it in a non-reentrant VI, thereby delegating the execution management to LabVIEW instead of the OS) may be an avenue worth exploring."
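LabVIEW code is graphical, so the following is only a Python analogy of the NI suggestion above, not LabVIEW or TestStand code. A non-reentrant VI has a single instance, so concurrent callers wait their turn; here a `threading.Lock` plays that role. `SharedResource`, `update_status`, `non_reentrant` and `run_devices` are hypothetical names, and 18 threads stand in for the 18 test-device clones. The sketch does not model how TestStand or LabVIEW schedule threads; it only illustrates the serialization idea.

```python
import threading
import time


class SharedResource:
    """Hypothetical stand-in for a resource that all parallel callers contend for."""

    def __init__(self):
        self._count_lock = threading.Lock()  # protects the counters only
        self.active = 0   # callers currently inside update_status
        self.peak = 0     # highest number of simultaneous callers seen
        self.calls = 0    # total number of calls

    def update_status(self, device_id, status):
        with self._count_lock:
            self.active += 1
            self.peak = max(self.peak, self.active)
            self.calls += 1
        time.sleep(0.01)  # simulated work on the shared resource
        with self._count_lock:
            self.active -= 1


def non_reentrant(func):
    """Serialize calls to func, analogous to callers queuing on a non-reentrant VI."""
    lock = threading.Lock()

    def wrapper(*args, **kwargs):
        with lock:  # only one caller runs func at a time; the rest wait here
            return func(*args, **kwargs)

    return wrapper


def run_devices(call, n_devices=18):
    """Simulate n_devices callers hitting the resource at the same moment."""
    start = threading.Barrier(n_devices)  # release all threads together

    def device(i):
        start.wait()
        call(i, "initialised")

    threads = [threading.Thread(target=device, args=(i,)) for i in range(n_devices)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()


if __name__ == "__main__":
    raw = SharedResource()
    run_devices(raw.update_status)
    guarded = SharedResource()
    run_devices(non_reentrant(guarded.update_status))
    print("peak concurrent calls, unguarded:", raw.peak)
    print("peak concurrent calls, non-reentrant wrapper:", guarded.peak)
```

With the wrapper, `peak` is always 1; without it, the peak depends on thread scheduling and is usually greater than 1.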

Certified LabVIEW Architect and LabVIEW Champion

DQMH Trusted Advisor

https://wiredinsoftware.com.au

09-30-2021 08:58 PM


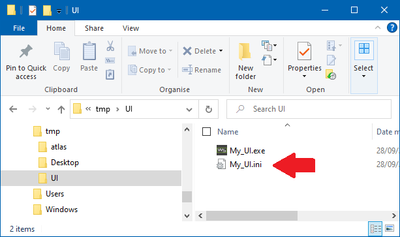

Hi, I'm trying to understand how to actually set this token on the deployed system, given that it is a TestStand deployment.

From what I can tell from this post, LabVIEW tokens can be set using an INI file with the same name and in the same location as the LabVIEW user interface (which is the initial file launched), by creating an [LVRT] section in that file containing the token that you want to set.

Does this sound right?

Also, is this separate from the LabVIEW Adapter setting in TestStand?
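A minimal Python sketch of the INI change described in this post, assuming (as the post describes, not verified here) that the TestStand host executable reads LabVIEW tokens from an INI file with the same base name in the same folder, under an `[LVRT]` section. The helper `set_lvrt_token` and the file name `MyTestStandUI.ini` are hypothetical; 48 is one of the values suggested by NI Support. Note that `configparser` discards comments when it rewrites a file, so keep a backup of the original INI.

```python
import configparser
from pathlib import Path


def set_lvrt_token(ini_path, token, value, section="LVRT"):
    """Add or update one token in the host application's INI file.

    Assumption (from the forum post, not verified): a host executable that
    loads the LabVIEW Run-Time Engine reads tokens from <exe name>.ini,
    in a section named [LVRT].
    """
    # interpolation=None: treat '%' literally; strict=False: tolerate
    # duplicate keys that hand-edited INI files sometimes contain.
    config = configparser.ConfigParser(interpolation=None, strict=False)
    config.optionxform = str  # keep CamelCase token names as written

    path = Path(ini_path)
    if path.exists():
        config.read(path, encoding="utf-8")

    if not config.has_section(section):
        config.add_section(section)
    config.set(section, token, str(value))

    # Write "Token=Value" with no spaces, matching the INI line quoted in this thread.
    with path.open("w", encoding="utf-8") as f:
        config.write(f, space_around_delimiters=False)
```

For example, `set_lvrt_token("MyTestStandUI.ini", "ExecThreadGrowCapacity", 48)` should leave an `[LVRT]` section containing `ExecThreadGrowCapacity=48`, with any existing sections preserved.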

10-13-2021 01:27 PM


Hi,

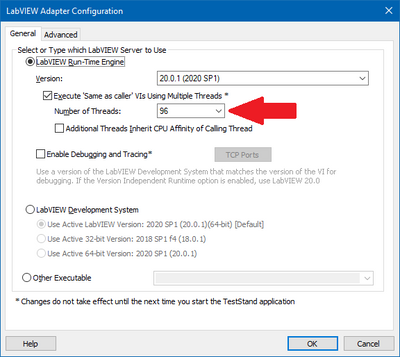

If you are using the LabVIEW Run-Time Engine to execute your VIs and the execution system for all your VIs is set to "same as caller", then you can use the setting in TestStand to change the number of threads available to execute the VIs.

If not, you will have to modify the setting in the INI file. See this link for more information.

Hope this helps,

Regards,

Anand Jain

NI