Synchronize PXIe-6363 to external TTL signal (Matlab)

09-29-2022 10:36 AM

Hi everyone,

I would like to provide digital and analog signals for multiple devices with my 6363. All the signals should be synchronized to an external 100 kHz TTL signal. Because some of the signals have their peak half a period after the rising edge of the external TTL signal, I assume a sample clock rate of double the TTL rate (200 kHz) would be easiest. However, I am not sure whether, and how, the internal sample clock can be synchronized to this (lower-rate) external signal. I guess another way would be to use the 100 kHz signal directly as the sample clock and create another signal delayed by half a period relative to it. Would this also work if the external TTL signal were, for example, 150 kHz?

I would be super happy if someone could help me and if possible even provide the basic MATLAB code lines for this operation.

Many thanks!

09-29-2022 11:08 AM

You can configure AI to sample on the falling edge of the clock instead of the rising edge; this way you can sample your delayed signals.

Yes, you can use any arbitrary clock rate as an external sample clock as long as it is within the instrument's capability (the maximum rate is typically limited).

Soliton Technologies

09-29-2022 11:48 AM - edited 09-29-2022 11:57 AM

Sorry, I can't help with any of the Matlab syntax, just general DAQmx and X-series comments.

The 6363 is a highly capable device, so it's likely there's a way to accomplish what you need. But I don't yet understand *all* of the things you need. And there are some subtle points you may not have considered (or even be aware of).

Example: An externally-generated 100 kHz clock and a 100 kHz clock generated by your 6363 will *not* generally stay in sync with one another. There are accuracy tolerances on the timing for these clock signals and their cycles will skew from one another over time. Many X-series devices (the product family of your 6363) are spec'ed to 50 parts per million which works out to about 3 millisec per minute worth of possible accuracy error. At 100 kHz, that's 300 samples worth. If your external device is spec'ed similarly, they could be skewed from one another by as much as 600 samples per minute, i.e. 10 samples per second of acquisition.
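The arithmetic in the paragraph above can be checked with a short sketch. It is plain Python (not MATLAB or DAQmx code), and the function name `worst_case_skew_samples` is made up for illustration; the 50 ppm figure, the one-minute window, and the 100 kHz rate are the values quoted above.

```python
def worst_case_skew_samples(accuracy_ppm, duration_s, sample_rate_hz):
    """Worst-case timing error of a single clock, expressed in samples."""
    time_error_s = accuracy_ppm * 1e-6 * duration_s  # 50 ppm over 60 s is about 3 ms
    return time_error_s * sample_rate_hz

# One clock at 50 ppm, observed for one minute at 100 kHz.
one_clock_samples = worst_case_skew_samples(50, 60, 100_000)

# Two independent clocks, each off by up to 50 ppm in opposite directions.
both_clocks_samples = 2 * one_clock_samples

# The same worst case expressed per second of acquisition.
per_second_samples = both_clocks_samples / 60

print(round(one_clock_samples), round(both_clocks_samples), round(per_second_samples))
```

The point of the calculation is that two free-running clocks drift apart steadily, so the outputs have to be clocked from the external signal itself rather than from an internal clock set to the same nominal rate.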

So it will turn out to be fairly *critically* important to understand exactly what kind of sync you need with respect to the external clock. But again, there are likely going to be ways to get there from here. But first we'd need a really thorough description of the timing relationships you need to establish and maintain among your various signals.

Also, do you need your system armed and ready for the very first pulse from the external clock? Or would you be able to do a "sneak peek" to *measure* its frequency before configuring the timing of your tasks? And can you be sure that the external clock will pulse at a constant frequency throughout a given test run? Or is it variable?

-Kevin P

09-30-2022 03:03 AM

Thank you both for the quick response.

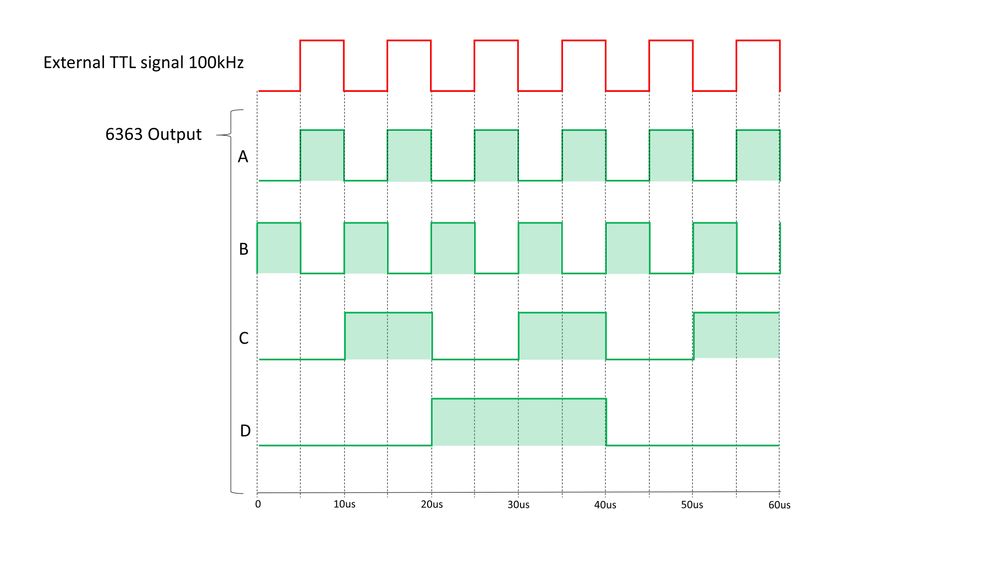

I am sorry for not being clear about the exact timing I need. So far the card has provided the signals shown in green, using an internal sample clock rate of 200 kHz. However, my external TTL signal is only 100 kHz, and I want to provide the same output as shown in the timing diagram. So I guess just using the TTL signal as the sample clock wouldn't work, as I could provide A and B only at 50 kHz instead of 100 kHz, right?
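The reasoning in that last sentence can be sketched as follows, assuming one output update per sample clock tick. This is plain Python for illustration only, not MATLAB or DAQmx code, and both helper names are hypothetical.

```python
def fastest_square_wave_hz(sample_clock_hz):
    """A full high/low cycle needs at least two output updates."""
    return sample_clock_hz / 2

def updates_per_ttl_period(sample_clock_hz, ttl_hz):
    """How many output updates fit into one period of the external TTL signal."""
    return sample_clock_hz / ttl_hz

ttl_hz = 100_000

# Compare clocking the outputs at the TTL rate with clocking at twice that rate.
for sample_clock_hz in (ttl_hz, 2 * ttl_hz):
    print(
        sample_clock_hz,
        updates_per_ttl_period(sample_clock_hz, ttl_hz),
        fastest_square_wave_hz(sample_clock_hz),
    )
```

With the 100 kHz signal used directly as the sample clock there is only one update per TTL period, so an output that has to change both at the rising edge and half a period later cannot repeat faster than 50 kHz. Two updates per period, however they are generated, bring it back to 100 kHz.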

09-30-2022 07:08 AM

Because you show your output signals making transitions that coincide with either rising or falling edges of the external signal, this looks like a great candidate for using change detection mode. (Note: change detection & other hardware timed i/o will only be available on port 0).

You'll need a DI task configured for change detection which means you'll need to physically wire the external signal into one of your port 0 lines. You may need to keep reading from this DI task, even if you completely ignore the data, just to prevent a buffer overflow that might (I'm just not sure) stop the change detection function.

I'm also not sure whether you can configure your output tasks to use "/Dev1/di/SampleClock" as their sample clock or whether you need to designate a special internal signal known as "/Dev1/ChangeDetectionEvent". But whichever it is, it will produce a full pulse for each transition seen on the external signal, both rising and falling.
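To make the "one pulse per transition" idea concrete, here is a small software simulation. It is only a sketch in plain Python: it does not call DAQmx or the MATLAB API, the `change_events` helper is hypothetical, and the two-entry pattern for outputs A and B is an assumed placeholder rather than the real timing diagram.

```python
def change_events(levels):
    """Return (index, direction) for every level change in a 0/1 sequence."""
    events = []
    for i in range(1, len(levels)):
        if levels[i] != levels[i - 1]:
            direction = "rising" if levels[i] == 1 else "falling"
            events.append((i, direction))
    return events

# Three periods of an idealized external TTL signal, starting low.
ttl_levels = [0, 1, 0, 1, 0, 1, 0]
events = change_events(ttl_levels)

# Assumed two-sample output buffer: (A, B) written on the rising edge,
# then (A, B) written on the falling edge. It repeats once per TTL period.
pattern = [(1, 0), (0, 1)]
outputs = [pattern[k % len(pattern)] for k in range(len(events))]

for (index, direction), value in zip(events, outputs):
    print(index, direction, value)
```

Because every TTL period contributes exactly one rising and one falling event, a two-sample buffer is replayed once per period regardless of the external frequency, so the same scheme would apply at 150 kHz as at 100 kHz, within the device's rate limits.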

-Kevin P

09-30-2022 11:29 AM

Hi Kevin,

That sounds absolutely reasonable. Unfortunately, I am failing to implement it. I would start with a DI on port 0 and the line I connected my signal to, e.g.:

s.addDigitalChannel('Dev1', 'Port0/Line1', 'InputOnly');

I also found "Dev1/ChangeDetectionEvent" in the terminals, but I have no idea how to connect them to actually provide a clock in the end.

09-30-2022 03:11 PM

I'm really no help with the Matlab API syntax. From prior discussions, I've gathered that the API has some limitations -- i.e., it seems certain advanced capabilities aren't supported in the API. Not sure if that's still true (or even if it ever was), just reporting what has been stated.

In any event, it seems to me that the needs of this app shouldn't be too advanced for the API to support. I just don't know the syntax. Even if you aren't *using* LabVIEW, can you read & understand it? I could put together a LabVIEW example if it'd help.

-Kevin P

10-01-2022 03:12 AM

Hi Kevin,

I would very much appreciate it if you could do so. I used LabVIEW a long time ago, but I guess I could still try to translate it to MATLAB and even post the solution here afterwards.

10-02-2022 09:16 AM

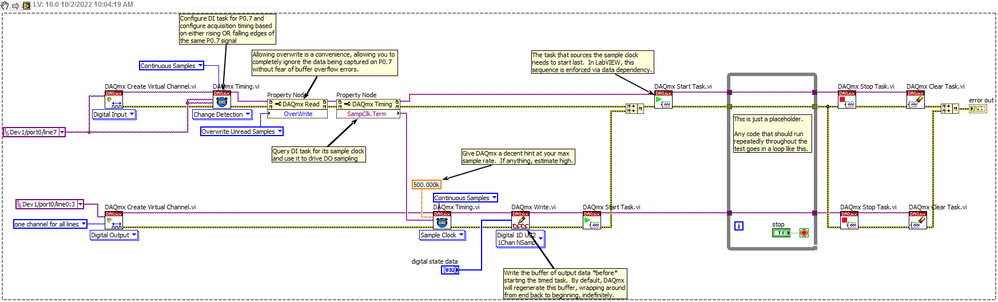

Here's a quick annotated example that ran without error on a simulated X-series device here. You'll need to change the data buffer to match your desired output signal timing; I just used a generic pattern.