sample 0 of the first iteration does not seem correct...

04-26-2016 05:53 PM

Dear LabVIEW experts,

I have tried to work on a simple synchronized AO-AI program recently, and found a phenomenon that I could not understand.

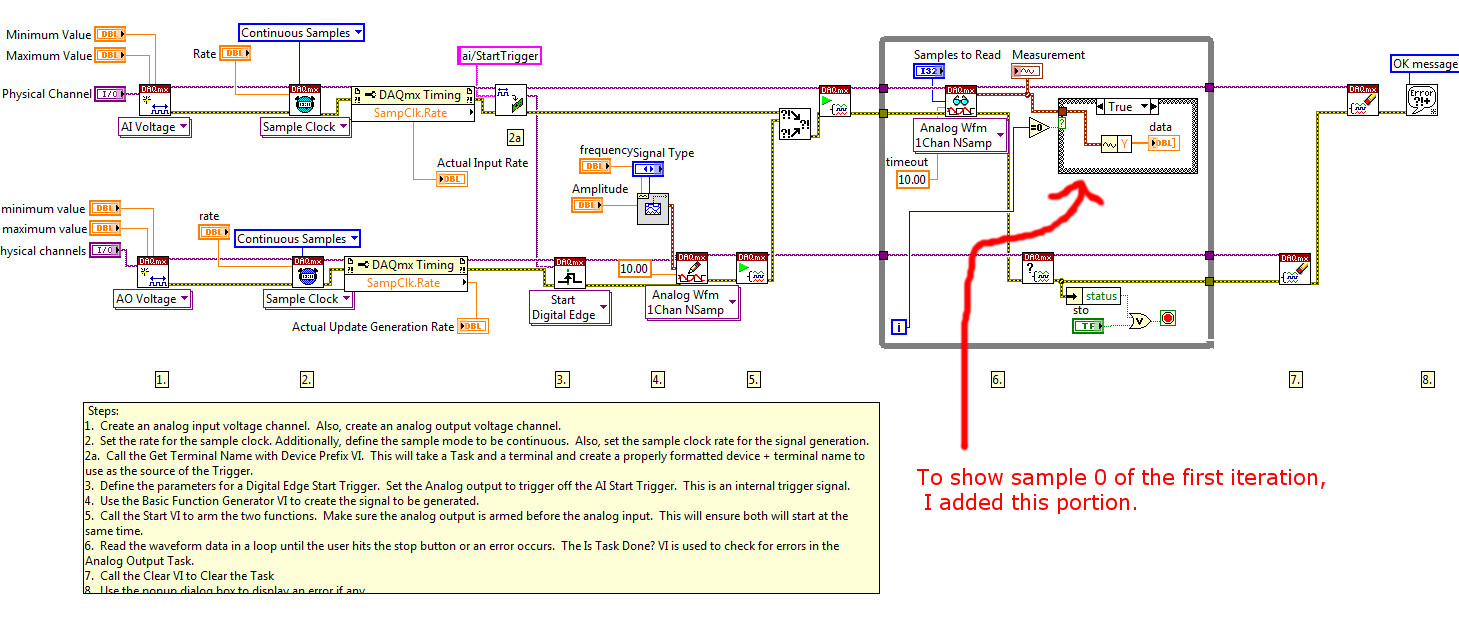

I started with the example code found via LabVIEW >> Help menu >> Find Examples >> Hardware Input and Output >> DAQmx >> Synchronization >> Multi-Function >> Multi-Function-Sync Ai-AO.vi

My setup was as follows. I used a USB-6216-BNC device, LabVIEW 2009, and Windows 7. For debugging purposes, I connected AO0 to AI0 with a BNC cable on my DAQ device, so I could see the output on the waveform graph. When I ran this VI (without any modification; it was the original VI that came with LabVIEW), I noticed that the very first sample (and only the very first sample) of the first iteration (and only the first iteration) was a strange value, probably a random number, that I could not explain.

To make this easier to review, I added a simple structure within this VI so that sample 0 of the first iteration is shown on the user interface. Please see the two pictures below.

I ran this VI 10 times. The values of sample 0 of the very first iteration for the 10 runs were:

-0.42474

-0.24715

-0.84305

-0.36653

-0.80852

0.06394

-0.12383

-0.68322

0.12643

-0.72729

I also tested this VI (i.e., the original example VI that came with LabVIEW) on another DAQ board (PCI 6221) with a different BNC cable in my lab, in case my USB-6216 device or the cable was bad, and the same phenomenon appeared.

My understanding was that sample 0 should be a very small number, i.e., close to zero. However, that was not the case for the first iteration (and only the first iteration). From the second iteration onward, sample 0 was always very small and close to zero. So, was this weird sample 0 of the very first iteration a left-over value? If so, from where? From a previous condition? Or was it part of the trigger edge? I have tried my best, but was unable to solve this mystery. If someone could help me with this, I would greatly appreciate it.

Thank you!

04-26-2016 08:11 PM

Please attach the actual VI so we can try it out, look at it more closely, and try to figure out what is happening. Very hard to tell from a picture (and way too much work and eyestrain to even bother trying).

Bob Schor

04-26-2016 08:19 PM

That value is most likely where the AO stopped on the previous run. You really should write a 0 to the Analog Output before closing the task.
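
The idea can be illustrated with a toy model in plain Python. This is only a sketch and does not use the DAQmx API: `ToyAnalogOutput`, `run_once`, and the `park_at_zero` flag are hypothetical names invented for this example, and the assumption is simply that an analog output keeps driving the last value written to it until something writes a new one.

```python
import math


class ToyAnalogOutput:
    """Hypothetical stand-in for an AO channel: it holds the last value written."""

    def __init__(self):
        self.held_voltage = 0.0  # level currently present on the output pin

    def write(self, voltage):
        self.held_voltage = voltage


def run_once(ao, stop_after, samples_per_cycle=100, park_at_zero=False):
    """Output a sine wave and stop after `stop_after` updates.

    Returns the level that was on the pin before this run's first update,
    which is what a loopback input would see as its very first sample.
    """
    first_reading = ao.held_voltage
    for k in range(stop_after):
        ao.write(math.sin(2 * math.pi * k / samples_per_cycle))
    if park_at_zero:
        ao.write(0.0)  # leave the output at 0 V before "closing the task"
    return first_reading


ao = ToyAnalogOutput()
run_once(ao, stop_after=137)             # stopped at an arbitrary point in the cycle
leftover = run_once(ao, stop_after=42)   # next run starts from the left-over level
print(leftover)
```

In this model, where the previous run happens to stop decides the first reading of the next run, which would explain why the value looks random from run to run. Calling `run_once(..., park_at_zero=True)` makes the next run start from 0 V instead.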

04-27-2016 08:57 AM

Sorry! Attached is the VI that I copied from the LabVIEW example code library.

04-27-2016 10:27 AM

I would advise starting the AI task on the ao/start trigger, not the other way around as it is now.

crossrulz is right: the AO has not yet updated to its first value when the AI reads it.
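
A rough way to picture this timing is the toy model below, written in plain Python rather than against the DAQmx API. It assumes, purely for illustration, that on every shared clock edge the input samples the pin before the output update lands; `loopback_read` and its parameters are hypothetical names, and real hardware timing is more subtle than this.

```python
import math


def loopback_read(ao_waveform, held_voltage, iterations=2):
    """Toy model of AO looped back to AI on a shared sample clock.

    Assumption: on each clock edge the AI samples the level already on the
    pin, and only afterwards does the AO move to its next value.
    """
    pin = held_voltage  # level left on the output before the task starts
    reads = []
    for _ in range(iterations):
        block = []
        for value in ao_waveform:
            block.append(pin)  # AI sees the level present at the edge
            pin = value        # AO update takes effect after the sample
        reads.append(block)
    return reads


n = 1000
sine = [math.sin(2 * math.pi * k / n) for k in range(n)]  # one cycle, starts at 0

first, second = loopback_read(sine, held_voltage=-0.42474)
print(first[0])   # the left-over level from before the run
print(second[0])  # the last point of the previous sine cycle, close to zero
```

Under that assumption only sample 0 of the first iteration carries the left-over level. Sample 0 of every later iteration is the final point of the preceding sine cycle, which is small, consistent with what was observed from the second iteration onward.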

04-27-2016 03:58 PM

A Stop Task VI before the Clear Task VI should return the channel to its condition before the Start Task VI was run. I would think this would return the output to 0 V.

Get a voltmeter and measure your output channel after you stop the VI as it is now.

If you truly want to capture all information about the input waveform, I think it is better to start the input channel before the output channel.

I assume the input channel buffer is clear when starting the VI, so that shouldn't be an issue?

04-28-2016 10:18 AM

Thank you all so much for your insightful comments! Greatly appreciated!

Now that I understand what may have happened in this scenario, I feel better. The reason I connect ao0 to ai0 with a BNC cable is for testing purposes; I usually do that when writing a new program. In practice, ai0 will be connected to a sensor to collect data. I will just have to be sure to write a zero to ao before closing the ao task, or to clear the ao buffer before the first iteration.

Thanks a lot!