
central limit theorem

Solved! 04-08-2015 12:30 PM


hi all,

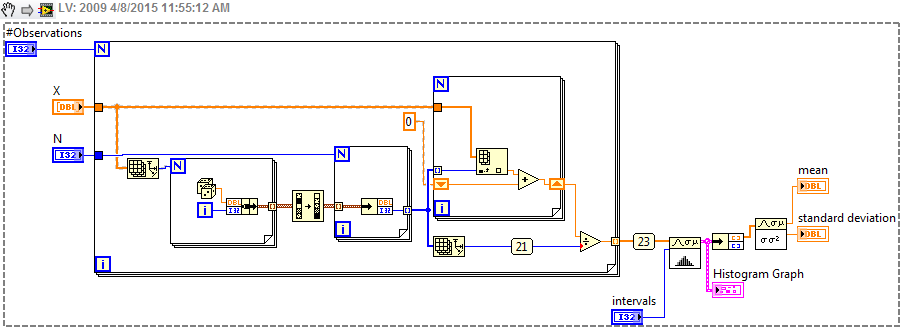

i have made a small VI to apply the central limit theorem, it works very good except that it is slow, i know i can use a more powerful computer and it will be faster, but i want to optimize this vi for minimum time.

anyone can help?

What I do in this VI:

1- Generate N (usually 30) unique random positive integers.

2- Use these 30 integers as indices to select 30 random elements from an array X (which has at least 40 samples).

3- Take the average of these 30 randomly selected elements of X.

4- Repeat the previous three steps at least 10^6 times.

5- Draw a histogram.
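For reference, the five steps above can be sketched in Python as a plain baseline. This is not the attached VI; the function names `clt_means` and `histogram` are hypothetical, and `random.sample` stands in for "generate N unique random integers".

```python
import random


def clt_means(x, n=30, repetitions=1_000_000, rng=None):
    """Return `repetitions` averages, each over n distinct random elements of x."""
    if n > len(x):
        raise ValueError("n must not exceed len(x)")
    if rng is None:
        rng = random.Random()
    means = []
    for _ in range(repetitions):  # step 4: repeat
        # Steps 1-2: random.sample draws without replacement, so the n indices are unique.
        idx = rng.sample(range(len(x)), n)
        # Step 3: average the selected elements.
        means.append(sum(x[i] for i in idx) / n)
    return means


def histogram(values, bins=50):
    """Step 5: count values into `bins` equal-width bins; returns (edges, counts)."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / bins or 1.0  # fall back to width 1 if all values are equal
    counts = [0] * bins
    for v in values:
        k = min(int((v - lo) / width), bins - 1)  # v == hi goes into the last bin
        counts[k] += 1
    edges = [lo + k * width for k in range(bins + 1)]
    return edges, counts
```

For example, `histogram(clt_means([float(i) for i in range(40)], repetitions=20_000))` returns 51 bin edges and 50 counts summing to 20000. Because the indices are drawn without replacement from only M = len(x) elements, the standard deviation of these averages is σ/√n · √((M − n)/(M − 1)), where σ is the population standard deviation of x (divisor M). For M = 40 and n = 30 that correction factor is about 0.51, so the histogram is considerably narrower than σ/√30 would suggest.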

Is there a way to make this VI as fast as possible, even if I have to change the algorithm, as long as it produces the same result?

Thank you


04-08-2015 01:53 PM - edited 04-08-2015 01:55 PM


I couldn't quite figure out what your while loop was doing. Here's a stab at it using the sort function on an array of clusters, which sorts on the first element.
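Sorting an array of clusters whose first element is a random number is a classic way to shuffle: the random keys decide the order, and the second element carries the index along. A Python sketch of that idea, assuming the second cluster element is the array index (the attached VI is not visible here, and `unique_indices_by_sort` is a hypothetical name):

```python
import random


def unique_indices_by_sort(n, m, rng=None):
    """Pick n distinct indices from range(m) by sorting (random key, index) pairs."""
    if n > m:
        raise ValueError("cannot pick more unique indices than there are elements")
    if rng is None:
        rng = random.Random()
    pairs = [(rng.random(), i) for i in range(m)]
    # Tuples compare on their first element first, like sorting clusters in LabVIEW.
    pairs.sort()
    # The first n indices of the randomly ordered array are n distinct random picks.
    return [i for _, i in pairs[:n]]
```

Duplicates cannot occur because every index appears exactly once before sorting. The cost is a full O(M log M) sort per repetition, even when only n of the M indices are needed.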

04-08-2015 02:15 PM


Thanks for your help, but something is still missing: the output is not Gaussian as expected.

The 30 random numbers must be unique. I generated just 30 random numbers and then removed the duplicates, which leaves fewer than 30, so I used a while loop to top up the array with extra elements until it holds exactly 30 unique random integers.

That is because I want to extract 30 different, non-repeated elements from array X and average them.

Thanks 🙂
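The generate, de-duplicate and top-up approach described above can be sketched in Python as follows (a hypothetical reconstruction, not the poster's VI). It does produce n distinct, uniformly chosen indices; the cost is the growing number of rejected draws as the set fills up. For n = 30 out of m = 40, the expected number of draws is 40 · (1/40 + 1/39 + … + 1/11), roughly 54.

```python
import random


def unique_indices_by_refill(n, m, rng=None):
    """Pick n distinct indices from range(m): draw n random indices, drop the
    duplicates, then keep drawing until exactly n distinct indices remain.
    Returns (indices, total_draws)."""
    if n > m:
        raise ValueError("cannot pick more unique indices than there are elements")
    if rng is None:
        rng = random.Random()
    picked, seen = [], set()

    def try_add(i):
        if i not in seen:  # discard duplicates
            seen.add(i)
            picked.append(i)

    # First pass: n raw draws with duplicates removed.
    for _ in range(n):
        try_add(rng.randrange(m))
    draws = n
    # Top-up loop (the while loop from the post): fill the gaps left by duplicates.
    while len(picked) < n:
        try_add(rng.randrange(m))
        draws += 1
    return picked, draws
```

The draw counter is only there to make the rejection overhead visible.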

04-08-2015 02:35 PM - edited 04-08-2015 02:50 PM


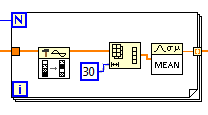

Here's what I would do.

For 1M samples, it takes about 2 s on my computer.

If you parallelize the outer loop, it will be proportionally faster (~0.15 s total on my 16-core dual Xeon :D).

The code assumes that the input array has more than 30 elements and that you want to pick 30 random elements. To change the pick size, change the diagram constant wired to N of the inner loop (or replace it with a control on the top-level diagram).
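The diagram is only in the image, so this is a guess guided by the later remark about "riffling the I32 array followed by an inner FOR loop": shuffle an index array and let an inner loop of N iterations read the first N indices. In this Python sketch a partial Fisher-Yates shuffle stands in for LabVIEW's Riffle and stops after the n swaps that are actually needed (`clt_means_shuffled_indices` is a hypothetical name).

```python
import random


def clt_means_shuffled_indices(x, n=30, repetitions=1_000_000, rng=None):
    """Average n distinct random elements of x per repetition by shuffling an
    index array and reading its first n entries."""
    m = len(x)
    if n > m:
        raise ValueError("n must not exceed len(x)")
    if rng is None:
        rng = random.Random()
    idx = list(range(m))  # allocated once and reused by every repetition
    means = []
    for _ in range(repetitions):  # outer loop: repetitions are independent
        total = 0.0
        for k in range(n):  # inner loop of n iterations
            # Partial Fisher-Yates step: idx[k] becomes a uniform pick from the
            # not-yet-chosen tail, so idx[:n] is a uniform random selection
            # whatever order idx was left in by the previous repetition.
            j = rng.randrange(k, m)
            idx[k], idx[j] = idx[j], idx[k]
            total += x[idx[k]]
        means.append(total / n)
    return means
```

Because every repetition is independent, the outer loop is the natural place to parallelize. In Python that would mean separate worker processes, each with its own `random.Random` instance and a distinct seed.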

04-08-2015 03:01 PM



Thank you very much, altenbach, this is just perfect 🙂

04-08-2015 03:09 PM - edited 04-08-2015 03:27 PM


Alternatively, you could just shuffle the orange (DBL) array and use the "riffled index" output. It seems to be about the same speed (even though we riffle a larger datatype), and it is less code. 😄

(There are a couple of other little tweaks that can gain you another 15% or so: divide the raw array by 30 and just sum in the loop instead of taking the mean, inline riffle (make a copy first!), etc.)
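Shuffling the data array itself, combined with the first tweak (divide by 30 once, then only sum), might look like this in Python. `random.shuffle` stands in for Riffle, slicing the first n values replaces the inner loop, and `clt_means_shuffled_data` is a hypothetical name.

```python
import random


def clt_means_shuffled_data(x, n=30, repetitions=1_000_000, rng=None):
    """Shuffle a pre-scaled copy of x each repetition and sum its first n values."""
    if n > len(x):
        raise ValueError("n must not exceed len(x)")
    if rng is None:
        rng = random.Random()
    # Divide by n once up front so each repetition only needs a sum.
    # Building a new list also means the caller's x is never reordered.
    buf = [v / n for v in x]
    means = []
    for _ in range(repetitions):
        rng.shuffle(buf)            # in-place shuffle of the copy
        means.append(sum(buf[:n]))  # truncate to n values and sum
    return means
```

A full shuffle touches all M elements even though only n are used. With M = 40 and n = 30 that overhead is small, but it grows with the array size. Pre-scaling can also change the results in the last few bits compared with dividing the sum.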

04-09-2015 09:41 AM



Christian, I think you are hitting an optimization with that... The mean is the mean regardless of the order of the array elements. I suspect the inner loop is absent in the compiled object and the array is simply sliced into sections.

(Didn't I learn a while back that a sorted riffled array is insane?) That whole palette is suspicious in benchmarks and scares me a little with a smart compiler.

"Should be" isn't "Is" -Jay

04-09-2015 11:08 AM


Of course, if we're riffling the double, we might as well get rid of the for loop. It's no faster but it's kinda nice and compact.

04-09-2015 11:57 AM


04-09-2015 01:45 PM


Just tested again, and riffling the I32 array followed by an inner FOR loop is about twice as fast when parallelized (0.15s vs 0.3s) compared to truncating the riffled DBL array. YMMV. 😉
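Comparisons like this are easy to get wrong in any language: run both variants on identical input, repeat, and keep the best run. A minimal Python harness for the two variants discussed in this thread follows (all names are hypothetical). Python timings say nothing about how LabVIEW's compiler treats the same diagrams.

```python
import random
import timeit


def means_index_shuffle(x, n, reps, rng):
    """Partial Fisher-Yates on an index array plus an inner loop (assumes n <= len(x))."""
    m = len(x)
    idx = list(range(m))
    out = []
    for _ in range(reps):
        total = 0.0
        for k in range(n):
            j = rng.randrange(k, m)
            idx[k], idx[j] = idx[j], idx[k]
            total += x[idx[k]]
        out.append(total / n)
    return out


def means_data_shuffle(x, n, reps, rng):
    """Full shuffle of a copy of the data, then truncate to n values."""
    buf = list(x)  # copy so the caller's data is not reordered
    out = []
    for _ in range(reps):
        rng.shuffle(buf)
        out.append(sum(buf[:n]) / n)
    return out


def compare(x, n=30, reps=100_000, repeat=3, seed=0):
    """Return the best-of-`repeat` wall time in seconds for each variant."""
    results = {}
    for name, fn in (("index shuffle + loop", means_index_shuffle),
                     ("shuffle data + slice", means_data_shuffle)):
        rng = random.Random(seed)  # same starting seed for both variants
        times = timeit.repeat(lambda: fn(x, n, reps, rng), number=1, repeat=repeat)
        results[name] = min(times)
    return results
```

For example, `compare([random.gauss(0, 1) for _ in range(40)])` returns a dict with one timing per variant. As with the LabVIEW numbers, YMMV.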