
cRIO IMAQ: Writing timed images to string then to JSON

06-25-2018 10:25 AM - edited 06-25-2018 10:27 AM


@elcalverado wrote:

It looks like that is stripping out the cluster information. We already determined that you can't add on to the string and then try to unflatten it back into a cluster since the cluster is a fixed size. Do you have to use that data structure? Could you use an array of clusters instead of trying to append to the data string?

-Jordan

I don't need to use any specific data structure; I just need to be able to write whatever data structure I use in the proper JSON format.

How would I implement the array of clusters here though?

06-25-2018 10:41 AM


Rather than doing this:

- Flatten to string

- concatenate string

- unflatten from string

You would instead use Build Array to add the new image cluster to an existing array of clusters.

You will also need to change the string constant that initializes your shift register to a constant array of your image cluster.

Again, I'm not sure if this is what you're looking for, but it should allow you to append as many new images as you want and export the results to JSON.
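Since a block diagram can't be pasted as text, here is a rough Python analogy of the difference: flattening and concatenating produces one opaque string, while appending each record to an array keeps the records separate, so the whole array serializes cleanly to a JSON array. The field names `frame`, `elapsed_ms`, and `image` are hypothetical stand-ins for the image cluster, and `build_capture_log` is an illustrative name, not a LabVIEW function.

```python
import json


def build_capture_log(records):
    """Append each image 'cluster' (a dict here) to a list, like Build Array
    on a shift register, then serialize the whole list to JSON once."""
    log = []  # plays the role of the shift register initialized to an empty array
    for record in records:
        log.append(record)  # Build Array: existing array + new element
    return json.dumps(log)


# Hypothetical records standing in for the LabVIEW image cluster.
captures = [
    {"frame": 0, "elapsed_ms": 0, "image": "placeholder"},
    {"frame": 1, "elapsed_ms": 500, "image": "placeholder"},
    {"frame": 2, "elapsed_ms": 1000, "image": "placeholder"},
]

print(build_capture_log(captures))
```

Because each element keeps its own structure, nothing has to be unflattened back into a fixed-size cluster afterwards.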

-Jordan

06-25-2018 10:58 AM


Should I use "Build Array" or the "Build Cluster Array" function?

The part I'm really asking about, though, is the Vision Acquisition Express VI. I'm not sure whether I'm using the right control to capture at different time intervals or whether I need to use a different one.

06-25-2018 01:11 PM - edited 06-25-2018 01:18 PM


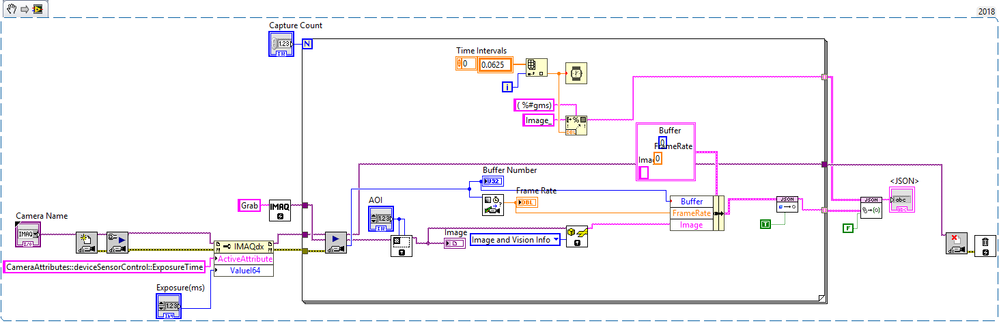

I was able to convert the image and put it in JSON format correctly! Instead of using the Express VI, I decided to use the lower-level functions.

Now I need to add a capture button and an event case that will let it stream the image whenever it's not capturing.

Would the best way to do that be a True/False case structure in a while loop? It would grab the image and send it to the Image indicator when False, and write the timed JSON images when True.

Edit: I jumped the gun a bit. The frame number seems to be stuck at 0 for all the images being taken. Any ideas why?

06-25-2018 01:18 PM - edited 06-25-2018 01:21 PM


You could just place the entire for loop in a case structure. Have the for loop in one case and in the other case, just do a grab and display the image.

Edit: Sorry, I didn't notice that there was no while loop in your code. You should do the grab in a while loop so that it runs continuously, and place your JSON conversion code inside a case structure.
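A Python analogue of that structure, as a sketch only: `grab`, `display`, and `capture_requested` are hypothetical callables standing in for IMAQ Grab, the Image indicator, and the capture button. Timing here is done by comparing against a clock rather than a fixed Wait (ms), which is one possible choice, not what the original VI does.

```python
import itertools
import time


def acquisition_loop(grab, display, capture_requested, interval, n_captures,
                     clock=time.monotonic, max_iterations=None):
    """While-loop analogue: always grab and display (the 'False' case);
    when capture is requested, also keep one frame every `interval`
    clock units (the 'True' case) until n_captures frames are saved."""
    saved = []
    next_due = None
    for i in itertools.count():
        if max_iterations is not None and i >= max_iterations:
            break
        frame = grab()
        display(frame)  # the live view updates on every iteration
        if capture_requested():
            now = clock()
            if next_due is None:
                next_due = now  # first capture happens immediately
            if now >= next_due:
                saved.append((now, frame))
                next_due += interval  # schedule the next timed capture
                if len(saved) >= n_captures:
                    break
    return saved
```

Scheduling against `next_due` instead of sleeping keeps the display updating at the camera's rate while captures still land on the requested interval (within one loop iteration).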

I don't see in your new code where you are updating the time between images. How are you controlling that?

-Jordan

06-25-2018 01:20 PM - edited 06-25-2018 01:31 PM


@elcalverado wrote:

I don't see in your new code where you are updating the time between images. How are you controlling that?

With the Wait (ms) function. I loosely followed this example: https://forums.ni.com/t5/Example-Program-Drafts/Time-Lapse-Frame-Video-Capture/ta-p/3517904

@elcalverado wrote:

You could just place the entire for loop in a case structure. Have the for loop in one case and in the other case, just do a grab and display the image.

Edit: Sorry, didn't see that you didn't have a while loop in the code. You should do the grab in a while loop so that it runs continuously and place your JSON conversion code inside of a case structure.

Would the images update properly in a case structure the way this is written? Or would I just receive the next image instead of the timed capture?

Another thought is I can grab the images continuously in the while loop and when capture is pressed I can use a local variable to send the images to the for loop to save them. Would this work?

06-25-2018 02:32 PM - edited 06-25-2018 02:45 PM


@atokad wrote:

Another thought is I can grab the images continuously in the while loop and when capture is pressed I can use a local variable to send the images to the for loop to save them. Would this work?

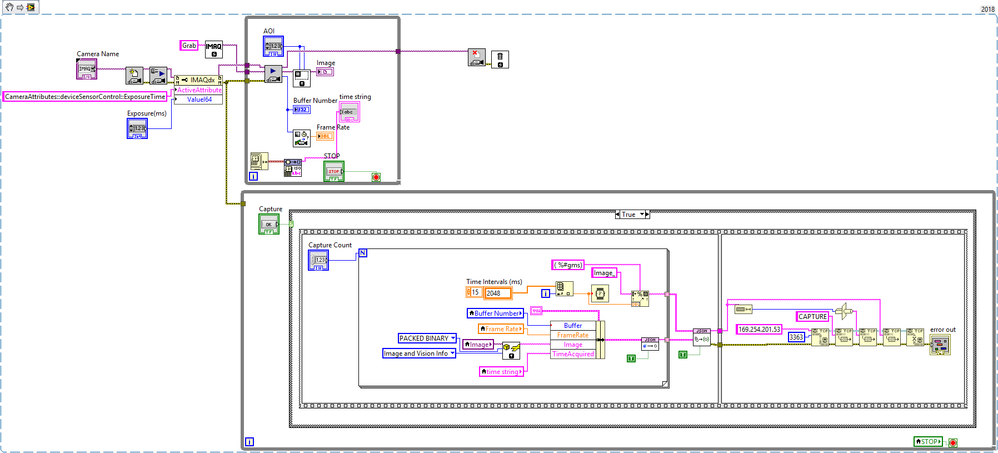

To explain and build on this idea:

- I could run two while loops in parallel. The first loop would grab images continuously, and the second would hold a case structure that checks whether images need to be acquired.

- Alternatively, if that is inefficient, I could capture all images for the total duration the for loop would cover (about 2.1 seconds), then send them to my Python server over a TCP connection and sort them out there. I'm not sure which method would be more lossless or efficient as far as latency is concerned.
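The first option is a producer/consumer pattern. A Python sketch of it with two threads, assuming a hypothetical `grab` callable for the camera: one loop overwrites a "latest frame" slot (the role the local variable plays), and the other samples that slot at a fixed interval. The class and function names are illustrative only.

```python
import threading
import time


class LatestFrame:
    """Holds the most recent frame, like a local variable written by one
    loop and read by another. A lock keeps reads and writes consistent."""

    def __init__(self):
        self._lock = threading.Lock()
        self._frame = None

    def write(self, frame):
        with self._lock:
            self._frame = frame

    def read(self):
        with self._lock:
            return self._frame


def grab_loop(latest, grab, stop, period_s):
    """Loop 1: grab continuously and publish the newest frame."""
    while not stop.is_set():
        latest.write(grab())
        time.sleep(period_s)  # stands in for the camera's frame period


def capture_loop(latest, n_captures, interval_s):
    """Loop 2: save whatever frame is newest every interval_s seconds."""
    saved = []
    for _ in range(n_captures):
        saved.append(latest.read())
        time.sleep(interval_s)
    return saved


if __name__ == "__main__":
    counter = iter(range(10**9))  # fake camera: frames are just increasing integers
    latest = LatestFrame()
    stop = threading.Event()
    grabber = threading.Thread(target=grab_loop,
                               args=(latest, lambda: next(counter), stop, 1 / 30))
    grabber.start()
    time.sleep(0.1)  # let the first frame arrive
    print(capture_loop(latest, n_captures=5, interval_s=0.3))
    stop.set()
    grabber.join()
```

The capture loop never waits for a fresh frame, so it cannot stall the grab loop; the trade-off is that a sample may be up to one frame period old.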

Also, I attached my current output text. It looks like some of the images have the same buffer number; is that an issue?

06-26-2018 10:03 AM


Using a separate loop to do the image conversion is a good idea. Since you don't care about getting every image, this would get you the images pretty close to the time intervals you want. There will be a small margin of error based on the frame rate at which you are running the camera. Depending on whether the image local variable is read right after a new image is acquired or right before the next one is acquired, your margin of error is essentially one frame period.
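The one-frame bound can be checked with a little arithmetic. A Python sketch, assuming an idealized camera that delivers frames exactly every frame period from t = 0 and a capture loop that reads the newest frame; the 25 fps rate and 300 ms sampling interval are hypothetical numbers, not taken from the thread.

```python
def staleness_ms(sample_ms, frame_period_ms):
    """How old the newest frame is at sample_ms, assuming frames arrive
    exactly every frame_period_ms starting at t = 0 (an idealized camera)."""
    return sample_ms % frame_period_ms


fps = 25
period_ms = 1000 / fps  # 40.0 ms between frames
# Hypothetical capture schedule: one sample every 300 ms over about 2.1 s.
samples = [300 * k for k in range(8)]  # 0, 300, ..., 2100 ms
errors = [staleness_ms(t, period_ms) for t in samples]
print(errors)  # [0.0, 20.0, 0.0, 20.0, 0.0, 20.0, 0.0, 20.0]
```

Whatever the schedule, the staleness is always at least 0 and strictly less than one frame period, which is the margin of error described above.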

Doing a sequence of images at a set frame rate for the time duration and then extracting the wanted frames would also be a good option. In that case you would use a sequence acquisition instead of a grab so that you aren't overwriting the IMAQ image buffer (see the sequence example in the example finder). After the acquisition finishes, you have an array of images that you could index to get the ones you want.
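Once a sequence has been acquired, picking the frames to keep is just index arithmetic. A Python sketch, assuming the frames were acquired at a constant rate starting at t = 0; the 30 fps rate and target times are hypothetical, and `frame_indices` is an illustrative helper, not an IMAQ function.

```python
def frame_indices(target_times_s, fps, n_frames):
    """Map each wanted capture time to the nearest frame in a sequence
    acquired at a fixed frame rate starting at t = 0."""
    indices = []
    for t in target_times_s:
        i = round(t * fps)  # nearest frame number for this time
        indices.append(min(max(i, 0), n_frames - 1))  # clamp to the acquired range
    return indices


fps = 30
n_frames = round(2.1 * fps)  # about 2.1 s of frames -> 63 frames (indices 0..62)
wanted = [0.0, 0.5, 1.0, 1.5, 2.0, 2.1]
print(frame_indices(wanted, fps, n_frames))  # [0, 15, 30, 45, 60, 62]
```

With the indices in hand, the selected images are simply `sequence[i]` for each index, which corresponds to Index Array on the image array in LabVIEW.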

-Jordan

06-26-2018 10:56 AM


I'll continue with the separate loops for now, since the margin of error is that small. Now I'm working on the TCP transfer, and I'm having an issue with my saved file.

LabVIEW VI:

Python Code:

```python
import socket

server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
server.bind(('', 3363))
server.listen(1)

while True:
    conn, addr = server.accept()
    cmnd = conn.recv(4)  # The default size of the command packet is 4 bytes
    print(cmnd)
    if 'CAPT' in str(cmnd):
        # Do the initialization action
        filename = input('Enter the file name: ')
        filename = filename + '.json'
        with open(filename, 'wb') as f:  # open in binary mode
            data_len = int.from_bytes(conn.recv(4), 'big')  # find out how much data is waiting
            f.write(conn.recv(data_len))  # collect that much data
            f.close()
        print('File has been saved.')

server.close()
```

As you can see, my file isn't receiving all of the acquired data; it seems to collect only about a third of what is supposed to be sent. My Python code saves it as JSON, which gives the same output as the .txt file; the only difference is that I can't upload a JSON file to the forums!
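One likely cause on the Python side: for a TCP stream socket, `recv(n)` returns *up to* n bytes (whatever has arrived so far), so a single `conn.recv(data_len)` can return only part of a large payload. A sketch of a helper that loops until the full count has arrived; `recv_exactly` and `handle_capture` are hypothetical names, not part of the `socket` module.

```python
import socket


def recv_exactly(conn, n):
    """Read exactly n bytes from a stream socket, looping because recv()
    may return fewer bytes than requested."""
    chunks = []
    remaining = n
    while remaining > 0:
        chunk = conn.recv(min(remaining, 65536))
        if not chunk:  # peer closed the connection before sending everything
            raise ConnectionError(f"connection closed with {remaining} bytes still expected")
        chunks.append(chunk)
        remaining -= len(chunk)
    return b"".join(chunks)


def handle_capture(conn, filename):
    """Read a 4-byte big-endian length, then exactly that many bytes, and save them."""
    data_len = int.from_bytes(recv_exactly(conn, 4), "big")
    payload = recv_exactly(conn, data_len)
    with open(filename, "wb") as f:  # the with block closes the file itself
        f.write(payload)
    return data_len
```

In the server above, `conn.recv(4)` and `conn.recv(data_len)` would be replaced by `recv_exactly(conn, 4)` and `recv_exactly(conn, data_len)`; the 4-byte command read can in principle come back short too.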

06-27-2018 12:47 PM - edited 06-27-2018 01:00 PM


I changed the command from CAPTURE to CAPT, and I am converting the string length to a U32. But the entire JSON is still not reaching my Python script, even though it is completely written on the LabVIEW side when I probe the Make JSON Object output. So I'm assuming the problem lies in the TCP transfer.

Another thing I might have to do is convert the image to base64: flatten the image, convert it to base64 before formatting it into JSON, and then convert the base64 back to an image when I receive it in Python. Would this work? And is there a simple way to convert an image to base64 in LabVIEW?
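To tell whether the problem is on the LabVIEW side or the Python side, it can help to test the server with a small Python client that sends the same framing. A sketch, assuming the framing the server above expects (4-byte command, U32 length in big-endian order, then the payload); `build_message` and `send_capture` are hypothetical names.

```python
import socket
import struct


def build_message(json_text):
    """Frame a JSON payload the way the Python server expects:
    4-byte command, 4-byte big-endian U32 length, then the payload."""
    payload = json_text.encode("utf-8")  # the length must count bytes, not characters
    return b"CAPT" + struct.pack(">I", len(payload)) + payload


def send_capture(host, port, json_text):
    """Hypothetical test client standing in for the LabVIEW VI."""
    with socket.create_connection((host, port)) as sock:
        sock.sendall(build_message(json_text))  # sendall keeps writing until everything is sent
```

For example, `send_capture("127.0.0.1", 3363, '{"frames": []}')` while the server is running. If a large test payload arrives intact with this client but not from LabVIEW, the framing on the LabVIEW side is the next thing to check.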
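JSON strings are text, so raw binary image data needs an encoding such as base64 to survive the trip intact. A Python sketch of both directions, assuming the flattened image bytes go into a base64 field; the field names `frame` and `image_b64` are hypothetical. This only restores the original bytes; turning flattened IMAQ data back into pixels in Python depends on how the image was flattened and is not shown.

```python
import base64
import json


def encode_frame(frame_bytes, frame_number):
    """Wrap raw (flattened) image bytes in JSON as a base64 text field."""
    return json.dumps({
        "frame": frame_number,
        "image_b64": base64.b64encode(frame_bytes).decode("ascii"),
    })


def decode_frame(json_text):
    """Recover the frame number and the original bytes on the Python side."""
    record = json.loads(json_text)
    return record["frame"], base64.b64decode(record["image_b64"])


raw = bytes(range(256))  # stand-in for flattened image data, including non-text bytes
text = encode_frame(raw, 7)
frame_number, restored = decode_frame(text)
print(frame_number, restored == raw)  # 7 True
```

Base64 produces 4 output characters for every 3 input bytes, so the payload grows by about a third; the length prefix must be computed on the final JSON text.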