XNET Frame Sniffer question - best way?

Solved! 01-05-2018 10:07 AM

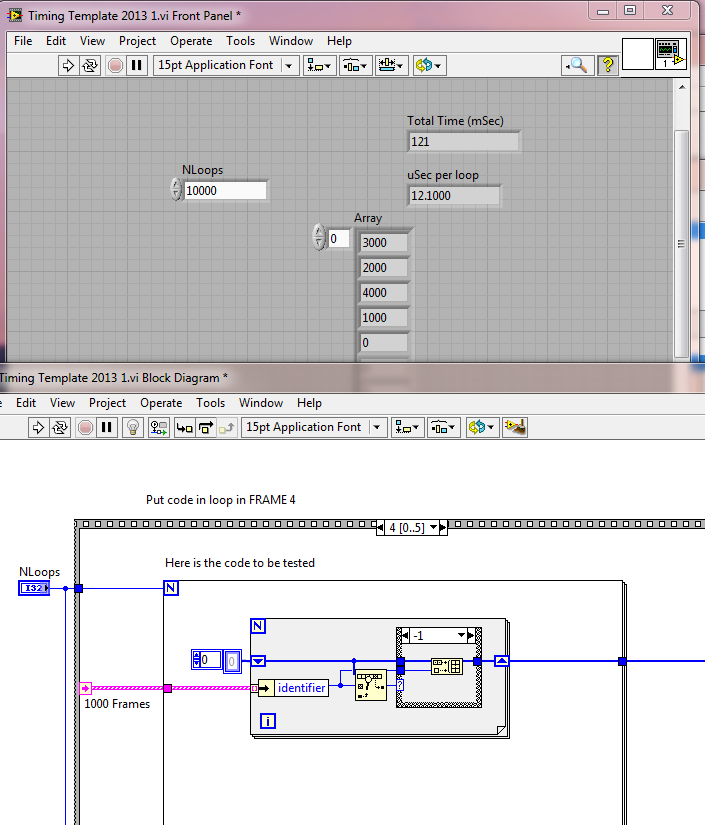

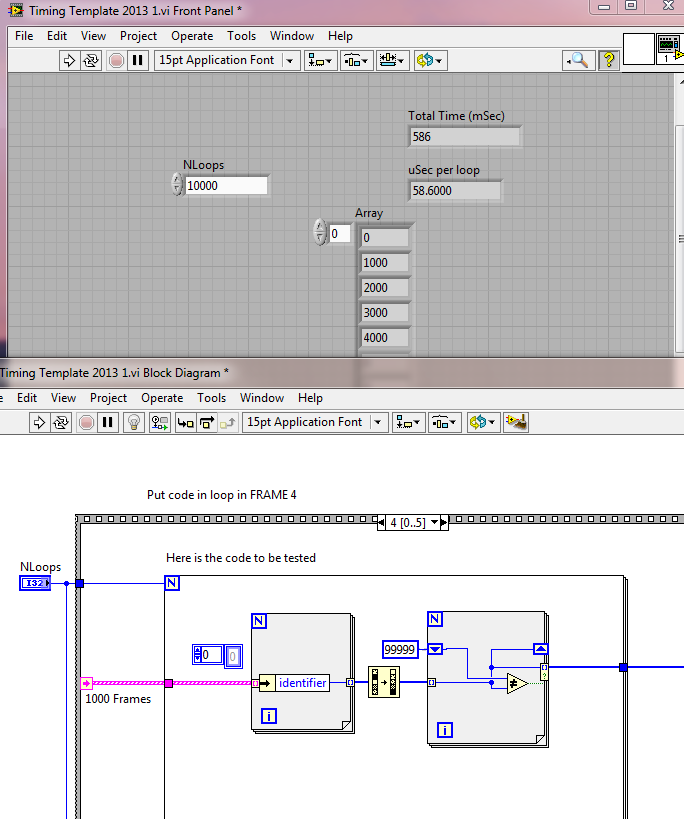

For the record, here's a timing test applied to three different ways of doing it:

All cases have 1000 frames, each with a random one of 5 identifiers.

CASE 1: 12.1 µs

CASE 2: your SORT idea - 58.6 µs

CASE 3: Chunk-at-a-time - 13.6 µs

Culverson Software - Elegant software that is a pleasure to use.

Culverson.com

Blog for (mostly LabVIEW) programmers: Tips And Tricks

01-05-2018 11:37 AM - edited 01-05-2018 12:06 PM

Thanks for benchmarking it. I forgot that the timestamp is the first element - you're right, creating a new array of IDs removes any advantage sorting might have (and, of course, I could be completely wrong that sorting is worthwhile anyway).

EDIT: removed suggestion to use a single pre-allocated array. That approach does not work here because it forces Search 1D Array to search through a much larger array (even though most of it is unused) each time.

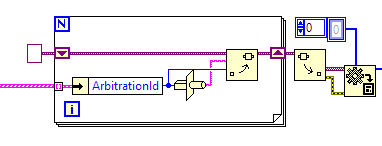

However, I have an idea that may be even faster: use variant attributes. Attributes are stored in a tree so searching for an existing element is fast (no need to compare every single element), and it automatically removes duplicates too! See image below:

EDIT: I benchmarked this. For 2,000 elements, the build array and variant approaches take about the same time. For a 50,000-element array, the variant approach is 10x faster (1 s for build array, 0.1 s for variant). For a small array (a few hundred elements), build array is faster, although the absolute difference in time is minimal at that size.
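For readers who do not have LabVIEW open, here is a rough text-language analog of the two approaches compared above, as a minimal Python sketch. It is not the benchmark VI from this thread. The function names are hypothetical, the plain list stands in for Search 1D Array plus Build Array, and the dict stands in for variant attributes. Note that a Python dict is a hash table rather than a tree, so it only illustrates the general idea of keyed lookup replacing a linear search.

```python
import random
import timeit


def unique_ids_linear(ids):
    """Linear search then append: analog of Search 1D Array + Build Array."""
    seen = []
    for frame_id in ids:
        if frame_id not in seen:  # scans every element collected so far
            seen.append(frame_id)
    return seen


def unique_ids_keyed(ids):
    """Keyed insert: analog (not an equivalent) of setting a variant attribute."""
    seen = {}
    for frame_id in ids:
        seen[frame_id] = True  # writing an existing key again is a no-op
    return list(seen)  # dicts keep insertion order in Python 3.7+


def compare(n, repeats=3):
    """Time both approaches on n random IDs; returns (linear_s, keyed_s)."""
    ids = [random.randrange(n) for _ in range(n)]
    linear = min(timeit.repeat(lambda: unique_ids_linear(ids), number=1, repeat=repeats))
    keyed = min(timeit.repeat(lambda: unique_ids_keyed(ids), number=1, repeat=repeats))
    return linear, keyed
```

Calling `compare(2000)` and then a larger size shows how each approach scales as the number of distinct IDs grows. The absolute numbers will not match the LabVIEW timings quoted in this thread.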

01-05-2018 12:20 PM

nathand wrote:

EDIT: removed suggestion to use a single pre-allocated array. That approach does not work here because it forces Search 1D Array to search through a much larger array (even though most of it is unused) each time.

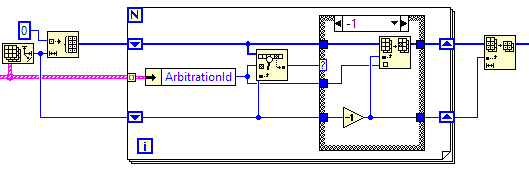

In case anyone is curious, here's a pre-allocated array approach that is as fast as (or marginally faster than) build array in a loop:

And that's enough playing for me today. My benchmark VI is attached in case anyone else wants to play.
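The pre-allocated version lives in the image and the attached VI, so what follows is only a guess at the general idea, written as a Python sketch. The assumption is that the buffer is allocated once, a count of used slots is kept, and only the used portion is searched. That would avoid the problem mentioned in the quoted edit, where the search walks the whole oversized array. The name `unique_ids_preallocated` and the `capacity` parameter are hypothetical.

```python
def unique_ids_preallocated(ids, capacity=None):
    """Collect unique IDs into a buffer that is allocated once up front.

    Assumption: only the first `count` slots are searched, never the
    unused tail of the buffer.
    """
    if capacity is None:
        capacity = len(ids)  # worst case: every ID is distinct
    buffer = [0] * capacity  # allocated once, never resized
    count = 0                # number of slots in use

    for frame_id in ids:
        found = False
        for k in range(count):  # search the used portion only
            if buffer[k] == frame_id:
                found = True
                break
        if not found:
            buffer[count] = frame_id  # replace a slot instead of growing
            count += 1

    return buffer[:count]  # trim the unused tail once, at the end
```

With only 5 distinct identifiers, as in the timing test earlier in the thread, the used portion stays tiny, so each search stays cheap however large the buffer is.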
