Why are the receive and send buffers emptied during setup of the serial communication?

Solved! 09-17-2020 04:46 AM

Hello,

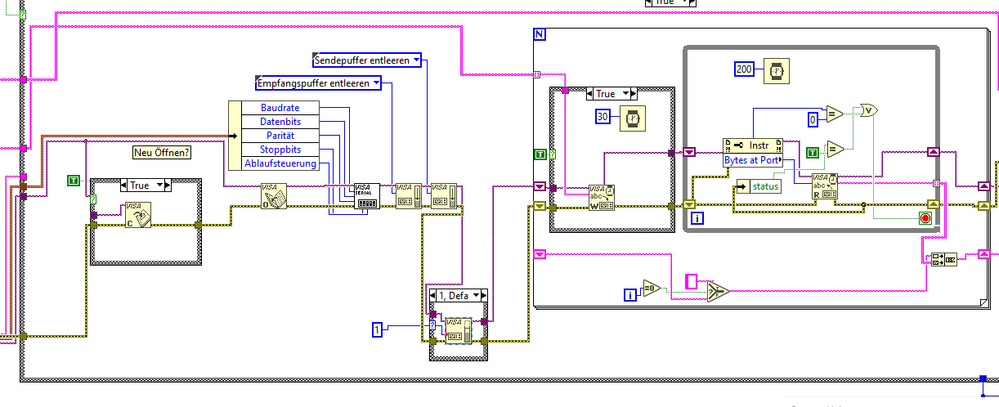

I'm trying to understand a piece of code that I want to add to my program. The code reads a measurement system via serial communication, and it works. What puzzles me is that immediately after the port is configured, the receive buffer and the send buffer are emptied, and the VISA serial I/O buffer size is set to 1.

Why are the buffers emptied, and why is the buffer size set?

The VIs in question are here (Ahlborn Init):

http://download.ni.com/support/idnet/A731568A8F1E46A6E05400144FFABDFD/ahlborn.zip

09-17-2020 04:54 AM

09-17-2020 05:24 AM

Hi

Both points were my feeling. Thanks for the clarification!

Matthias

09-17-2020 06:06 AM

It has happened to me that there's crap on serial ports with some instruments, so I've used a similar solution just to make sure the first read doesn't contain extra characters.

This has typically been in environments with serial port communication, old instruments, and computers going into sleep mode and/or cables being unplugged.

In some cases the serial communication with the instrument has died completely until the instrument was powered off and the cables unplugged ...

09-18-2020 06:07 AM - edited 09-18-2020 06:55 AM

It's usually not necessary but also doesn't hurt to do. Some devices may respond with garbage when they see the modem control lines activated (which can be part of the implicit initialization of the serial port when opening it). So, to be safe, it's actually good practice to clear all input and output buffers. Please note, however, that the value wired to the VISA Flush IO Buffer node is a bit mask, although LabVIEW defaults to a ring control when you do a "create constant". You could also just create a U16 constant with the hex value 0x90 and wire it to a single VISA Flush IO Buffer node to achieve the same result. In that case it would be good practice to add a diagram note next to the constant explaining which bits it contains.
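
To make the bit mask concrete, here is a small Python sketch (LabVIEW itself is graphical, so this is only an illustration). The flag values are the ones I understand the VISA headers to define for `viFlush`; treat them as an assumption and check them against your installed `visa.h`. The `FlushMask` class and `decode` helper are names made up for this example.

```python
from enum import IntFlag


class FlushMask(IntFlag):
    """Serial-related viFlush bits (assumed values, verify against visa.h)."""
    IO_IN_BUF = 0x10           # discard receive buffer contents
    IO_OUT_BUF = 0x20          # flush transmit buffer by writing it to the device
    IO_IN_BUF_DISCARD = 0x40   # discard receive buffer contents
    IO_OUT_BUF_DISCARD = 0x80  # discard transmit buffer without any I/O


def decode(mask):
    """Return the names of all flags that are set in mask."""
    return [flag.name for flag in FlushMask if mask & flag]


# One combined constant instead of two separate flush calls.
combined = FlushMask.IO_IN_BUF | FlushMask.IO_OUT_BUF_DISCARD
print(hex(combined), decode(combined))
```

Under these assumed values, 0x90 decodes to "discard receive buffer" plus "discard transmit buffer", which is the kind of explanation the diagram note should carry.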

The call to set the receive buffer to only one character is pretty useless. Even the lowest-cost UART chips nowadays usually have an internal FIFO for 8 or more bytes, and there is no way the serial port driver could disable that. It also makes little sense to try to reduce the size like that. This function is only necessary to increase the buffer size if you have high-speed transmission and find that you seem to lose characters on the receiving end.

I wouldn't call this Rube Goldberg code, although some diagram cleanup would not hurt for this VI. The use of the Bytes at Serial Port property is definitely BAD code, and the code to abort the read loop not only on error but also when Bytes at Serial Port equals 0 is highly bogus, even in light of the 30 ms delay in parallel to the VISA Write.

The only Rube Goldberg-like code I see in there are the two comparisons inside the read loop: the "equals True" is totally useless, and the "equals 0" has a more direct node, although as mentioned earlier that check is pretty bogus anyway.

One could argue that the case structures with a wired boolean constant are also kind of Rube Goldberg, although that has more to do with not cleaning up the VI after debugging. This is a common approach during debugging to easily enable and disable code, but I would feel a little ashamed to leave that in code that is officially released as an instrument driver by a manufacturer.

03-03-2021 06:39 AM

What is the difference between the size of the serial port buffer and the value that is returned from "Bytes at port"?

I have probably never used serial ports properly before, so I wrote the attached to explore what is going on.

The Bytes at port exceeds the buffer size (when the loop is slowed down). Where are these extra bytes being held if not the buffer?

Also, if I don't use a termination character and have a device that overproduces data, which I poll for, say, 500 ms every ten minutes for months on end, do I have to flush the buffer?

Thanks

Graham

03-03-2021 07:30 AM

The serial port buffer size you tell VISA to use, if you set it at all, is simply a recommendation. VISA will try to configure the underlying serial port driver (under Windows that is the Windows COMM API) for this size, but modern Windows systems take that at best as a hint.

MSDN has this to say about the parameters of the SetupComm() function:

The device driver receives the recommended buffer sizes, but is free to use any input and output (I/O) buffering scheme, as long as it provides reasonable performance and data is not lost due to overrun (except under extreme circumstances). For example, the function can succeed even though the driver does not allocate a buffer, as long as some other portion of the system provides equivalent functionality.

Basically, setting the buffer size is nowadays just a small hint to the driver that will usually be ignored, unless you set a huge value, in which case the driver MAY decide: Wait a moment! Someone seems to want to do something rather tricky, maybe I should really change my default settings!!

So to answer your question, those extra bytes are held and managed by the driver in the communication handle associated with the device. This works because if you set the buffer to a smaller value than what the driver wants to use anyhow, it will typically just ignore the request completely.

The flush simply makes sure that any bogus data sitting in the buffers after you open the port is purged and won't mess up your program when it tries to decode supposed data that is in fact just random noise picked up on the line between when the driver was opened and when you start talking to your device. If your receiving code is robust enough to skip over such illogical data and find the real beginning of communication in the random data stream it receives, you can leave the flush out, but adding a flush after opening a port is about 200% easier than testing and guaranteeing that your receiving code will not trip over its own feet when presented with random gibberish.
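
For comparison, this is roughly what "robust enough" receiving code has to do. The sketch below is in Python and assumes a purely hypothetical framing with a start byte (`STX`) and an end byte (`ETX`); nothing in this thread says the Ahlborn protocol looks like this, and `extract_frames` is a name invented for the example.

```python
STX = 0x02  # hypothetical start-of-frame marker
ETX = 0x03  # hypothetical end-of-frame marker


def extract_frames(data):
    """Split raw bytes into complete frame payloads and an unconsumed tail.

    Bytes in front of a start marker are treated as line noise and dropped.
    """
    frames = []
    pos = 0
    while True:
        start = data.find(bytes([STX]), pos)
        if start == -1:
            return frames, b""            # only noise left: drop it
        end = data.find(bytes([ETX]), start + 1)
        if end == -1:
            return frames, data[start:]   # partial frame: keep for the next call
        frames.append(data[start + 1:end])
        pos = end + 1


noise = b"\xff\x00garbage"
frames, rest = extract_frames(noise + b"\x02+21.5\x03\x02+21")
print(frames, rest)
```

Even this is fooled when the noise happens to contain a byte that looks like the start marker, which is why a single flush right after opening the port is the cheaper safeguard.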

03-03-2021 07:57 AM

Thanks for that. Presumably then Windows is allowing me to inadvertently fill up memory if I sample a serial device without emptying the buffer?

For example, I had a tablet running overnight, sampling 200 bytes of serial data every minute from a device chucking out thousands of bytes continuously. The following morning the tablet had seized up. The code was written on a PC that barely noticed it was being asked to do something.

03-03-2021 08:21 AM

I found it. I hadn't disabled the termination character. Bytes at port was =/= return value. It is now. No buffering. 🙂

03-03-2021 08:47 AM

@grahamwebb wrote:

I found it. I hadn't disabled the termination character. Bytes at port was =/= return value. It is now. No buffering. 🙂

Generally, it is a very bad idea to use the Bytes At Port to tell the VISA Read how many bytes to read. You want to use the data protocol to decide how much data to read at a time. I would need to know a lot more about the communication protocol to give much more advice.
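
As a rough illustration of letting the protocol decide, here is a Python sketch that reads until a terminator arrives instead of asking how many bytes happen to be waiting. It assumes a line-terminated protocol, which may not match your device, and it uses `io.BytesIO` as a stand-in for the serial port; `read_message` is a made-up helper, not a VISA call.

```python
import io


def read_message(port, terminator=b"\n", max_len=1024):
    """Read byte by byte until the terminator arrives, then return the payload."""
    buf = bytearray()
    while len(buf) < max_len:
        chunk = port.read(1)
        if not chunk:  # a real port would return nothing on timeout
            raise TimeoutError("no terminator received before timeout")
        buf += chunk
        if buf.endswith(terminator):
            return bytes(buf[:-len(terminator)])
    raise ValueError("message exceeds max_len without a terminator")


# Stand-in for a port whose device sent two complete readings.
port = io.BytesIO(b"12.5\n13.0\n")
first = read_message(port)
second = read_message(port)
print(first, second)
```

In LabVIEW the equivalent is usually to enable the termination character and request more bytes than the longest expected message, so that VISA Read returns as soon as the terminator is seen.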
