Wait for less than 1ms.

Solved! 07-09-2014 06:41 AM

07-09-2014 06:53 AM

Hi Mimi,

your instrument seems to be connected to your PC using a GPIB connection.

Do you account for latencies of that bus?

Do you account for bandwidth/ data transfer speed of that bus?

Do you account for latencies of the driver software (aka VISA)?

Do you account for latencies in data handling inside your instrument?

Accounting for all those terms (and probably more) will bring you to the conclusion: it's OK to wait for a millisecond instead of burning the CPU to wait for 50 µs!

But you have to draw your own conclusions…
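To make the trade-off concrete, here is a minimal sketch in Python (not LabVIEW; the function names `busy_wait` and `sleep_wait` are made up for illustration). It contrasts spinning on a high-resolution counter, which can hit short delays but keeps a CPU core busy, with an ordinary sleep, which yields the CPU but is only as precise as the OS scheduler allows.

```python
import time


def busy_wait(duration_s: float) -> float:
    """Spin until at least duration_s has elapsed.

    Returns the measured elapsed time in seconds. The loop occupies one
    CPU core for the whole wait, which is the "burning the CPU" cost.
    """
    start = time.perf_counter()
    while True:
        elapsed = time.perf_counter() - start
        if elapsed >= duration_s:
            return elapsed


def sleep_wait(duration_s: float) -> float:
    """Ask the OS to suspend the thread for duration_s.

    Returns the measured elapsed time in seconds. The OS may wake the
    thread noticeably later than requested.
    """
    start = time.perf_counter()
    time.sleep(duration_s)
    return time.perf_counter() - start


if __name__ == "__main__":
    print(f"busy wait, 50 us requested: {busy_wait(50e-6) * 1e6:.1f} us")
    print(f"sleep,      1 ms requested: {sleep_wait(1e-3) * 1e6:.1f} us")
```

Neither variant says anything about when the instrument actually acts on the command; both only time the software side of the chain.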

07-09-2014 07:21 AM

@GerdW wrote:

Do you account for latencies in data handling inside your instrument?

Let me put it this way. I have had to wait SECONDS for power supplies to settle (high current, lots of capacitance). I have had to wait 500ms for an oscilloscope to process a command before sending it another one. Every instrument is different, but the 50us just seems way too fast to me when it comes to instrument control.

09-11-2014 12:10 PM

It looks like NI has recognised the need to promote High Resolution Relative Seconds.vi to the Timing palette:

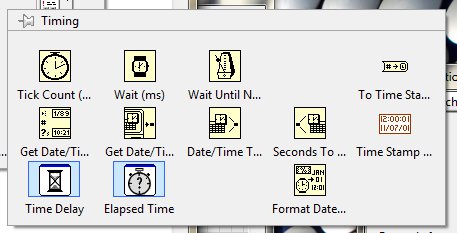

In LV2013SP1 the Timing palette looks like this:

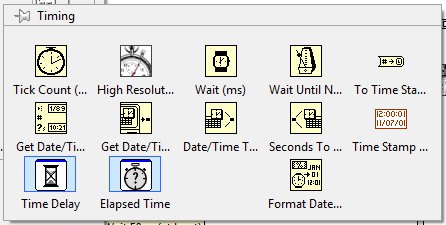

and in LV2014 it looks like this:

So now we can measure time intervals of less than 1 ms. Great!
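For readers without LabVIEW at hand, a rough textual analogue of reading a high-resolution relative clock is sketched below in Python. This is an illustration only, not how the VI is implemented; `smallest_observed_tick_ns` is a hypothetical helper that takes back-to-back readings and reports the smallest non-zero step it sees.

```python
import time


def smallest_observed_tick_ns(readings: int = 10_000):
    """Return the smallest non-zero step (in ns) between consecutive
    readings of the high-resolution counter, or None if none was seen."""
    smallest = None
    previous = time.perf_counter_ns()
    for _ in range(readings):
        current = time.perf_counter_ns()
        delta = current - previous
        if delta > 0 and (smallest is None or delta < smallest):
            smallest = delta
        previous = current
    return smallest


if __name__ == "__main__":
    # Resolution as reported by the Python runtime for this clock.
    info = time.get_clock_info("perf_counter")
    print(f"reported resolution: {info.resolution} s")
    print(f"smallest observed step: {smallest_observed_tick_ns()} ns")
```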

09-11-2014 12:23 PM - edited 09-11-2014 12:25 PM

What does the capability of measuring sub-ms time intervals have to do with controlling a process with sub-ms precision?

09-11-2014 12:39 PM

@GerdW has it right - you are not even close to your "spec" unless you are looking for "approximately" 50 usec from the time you issued the command to settling time. To be accurate you would need to know when the voltage is actually applied (e.g. acquire a high-speed waveform and analyze it, or trigger your device) - if you are going by when you issued the command, then the obsession with usec timing is probably moot.
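As a sketch of the "acquire a waveform and analyze it" idea, the Python snippet below estimates the settling time from a list of evenly spaced samples. Everything here is an assumption for illustration: the function name `settling_time_us`, the tolerance-band definition of "settled", and the sample values in the usage example are not taken from any real instrument.

```python
def settling_time_us(samples, sample_period_us, target, tolerance):
    """Estimate settling time from evenly spaced samples.

    Returns the time in microseconds (relative to the first sample) of the
    first sample after which every remaining sample stays within
    +/- tolerance of target, or None if the record never settles.
    """
    last_outside = -1
    for index, value in enumerate(samples):
        if abs(value - target) > tolerance:
            last_outside = index
    settled_index = last_outside + 1
    if settled_index >= len(samples):
        return None  # the last sample is still outside the band
    return settled_index * sample_period_us


if __name__ == "__main__":
    # Made-up step response sampled every 10 us, settling towards 5.0 V.
    waveform = [0.0, 2.0, 4.0, 5.4, 4.95, 5.05, 5.0, 5.0]
    print(settling_time_us(waveform, 10, target=5.0, tolerance=0.1))
```

The result is relative to the first sample, so it is only meaningful if the acquisition is started by a trigger tied to the event of interest rather than by the moment the command was sent.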

09-13-2014 09:09 AM

@altenbach wrote:

What does the capability of measuring sub-ms time intervals have to do with controlling a process with sub-ms precision?

...a unit?

09-13-2014 09:29 AM

GerdW, crossrulz

Of course, I'm aware that many factors contribute to the delay in the system.

I asked the question on the forum to find out what options I theoretically have for controlling delays shorter than 1 ms.

My intention was to shape the code around the specification of the device as closely as possible.

It was a surprise to me that I could not get resolution below 1 ms; now I know that it is not a LV limitation.

However, thanks to Hoovath, we can go below 1 ms now. And it looks like NI saw the need to expose the tool that gives better resolution than 1 ms.

09-13-2014 09:43 AM

I still say that the solution in post 3 is the better one, if you have a device capable of measuring at 10 uSec intervals.

Let the hardware do what the hardware is best at doing: precise sampling.

You don't have to worry about the "at least 50 uSec" problem, or any Windows uncertainties.
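A small worked example of that arithmetic, as a Python sketch: with hardware-timed samples every 10 uSec, the "at least 50 uSec" requirement turns into "ignore the first few samples". The helper name `first_valid_sample_index` is hypothetical, and the sketch assumes sample 0 is taken at the instant the delay starts counting.

```python
def first_valid_sample_index(min_delay_ns: int, sample_period_ns: int) -> int:
    """Index of the first sample taken at or after min_delay_ns.

    Assumes sample 0 is taken at t = 0 and samples are evenly spaced.
    Integer nanoseconds avoid floating-point rounding in the division.
    """
    if sample_period_ns <= 0:
        raise ValueError("sample_period_ns must be positive")
    if min_delay_ns < 0:
        raise ValueError("min_delay_ns must not be negative")
    # Ceiling division using integers only.
    return -(-min_delay_ns // sample_period_ns)


if __name__ == "__main__":
    # 50 us minimum delay, 10 us sample period.
    print(first_valid_sample_index(50_000, 10_000))
```

Because the spacing between samples comes from the device's sample clock, software jitter on the PC side does not affect which samples fall after the required delay.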
