Vivado CIC broken LV 2018 and 2019

06-18-2019 10:23 AM

I'm trying to port some code to a Kintex 7 target. One of those code pieces is a CIC filter (Xilinx IPCore).

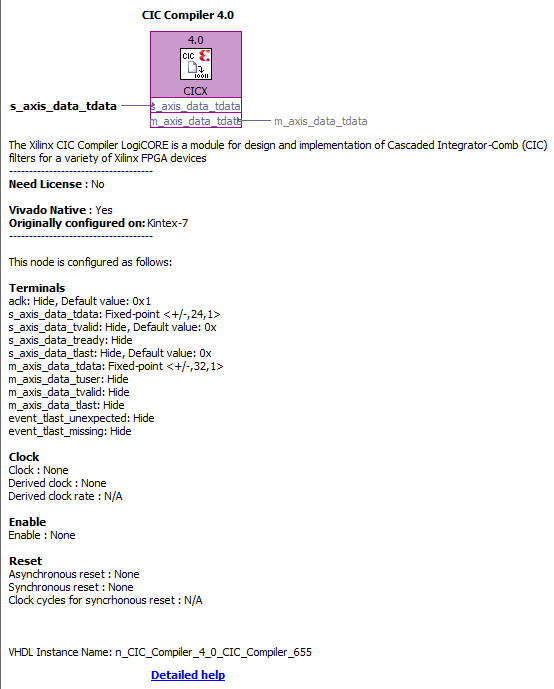

I can't get the darned thing to work. It will only present me with m_axis_data_tdata ports of 32-bit width, irrespective of what is set in the dialog. Here's an image of a LV 2015 node vs. a 2018 / 2019 node. What on earth am I doing wrong?

Both nodes were configured identically, 4-channel decimating filter (4-fold decimation), frequencies of 40MHz and 160MHz, 20 bit input width, full precision, 28 bit Output width, Latency 19.
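For reference, the 28-bit full-precision output is what the standard CIC bit-growth rule gives for a 20-bit input, if one assumes 4 stages and a differential delay of 1. Neither of those two values is stated in this post, so treat them as assumptions that happen to reproduce the number. A minimal Python sketch (the function name is made up for illustration):

```python
import math

def cic_full_precision_width(input_bits, rate, stages, diff_delay=1):
    """Full-precision output width of a CIC decimator.

    Uses the usual bit-growth rule: growth = stages * log2(rate * diff_delay).
    'stages' and 'diff_delay' are assumptions here, not values from the post.
    """
    growth = stages * math.log2(rate * diff_delay)
    return input_bits + math.ceil(growth)

# 20-bit input, decimate by 4, assumed 4 stages and differential delay 1
print(cic_full_precision_width(20, 4, 4, 1))
```

With other stage counts or delays the result would differ, so check it against the value the Xilinx dialog reports.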

Help?

06-20-2019 02:34 AM

OK, tumbleweeds.

Assuming the CIC compiler is NOT broken, but the interface has changed (to AXI4), how are the parameters to be defined? Why do the bit widths of the input and output not align with what I have set in the Xilinx IP?

Does anyone have an example of this IPCore from LV 2018 or 2019? Please?

06-25-2019 09:59 AM - edited 06-25-2019 10:00 AM

I have managed to get the thing working.

But there's definitely something fishy about the settings for this IPCore in LabVIEW. If I configure it for the previous settings, but with 16-bit Input width, hey presto, the input width in the Xilinx dialog and in the LabVIEW dialog match! If I go to 17 bit, the Input width jumps to 24-bit and the output width jumps to 32-bit.
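The jumps described here are consistent with each port width being rounded up to the next multiple of 8 bits. A small Python sketch of that rounding (the helper name is hypothetical, it is not part of any Xilinx or LabVIEW API):

```python
def axi_padded_width(bits):
    """Round a data width up to the next multiple of 8 bits."""
    return ((bits + 7) // 8) * 8

# Widths mentioned in this thread
for width in (16, 17, 20, 28):
    print(width, "->", axi_padded_width(width))
```

So a 16-bit input stays at 16, while 17 and 20 bits both land on 24, and a 28-bit output lands on 32.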

It seems that the Input and Output widths only change in units of 8 bits. This is super counter-intuitive for FPGA code and makes working out how to align the data kind of tricky. For me, with +-20,1 input data but a 24-bit Input width, it works fine if I set the LV dialog datatype to +-24,5 (essentially throwing away 4 bits). Likewise, the output of width 32-bits is configured as +-32,5.
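One way to read the +-24,5 choice: if the 20-bit value sits in the low bits of the 24-bit port and the 4 extra bits on top are sign extension, then keeping the same 19 fractional bits and adding the 4 extra bits to the integer word length leaves the numeric value unchanged. That placement of the padding is an assumption on my part, so check it against the IPCore document. A Python sketch with hypothetical helper names:

```python
def to_signed(raw, bits):
    """Interpret the low 'bits' bits of raw as a two's-complement integer."""
    raw &= (1 << bits) - 1
    return raw - (1 << bits) if raw & (1 << (bits - 1)) else raw

def fxp_value(raw, word_length, integer_length):
    """Value of a signed fixed-point word given <word length, integer word length>."""
    frac_bits = word_length - integer_length
    return to_signed(raw, word_length) / (1 << frac_bits)

def sign_extend(raw, from_bits, to_bits):
    """Sign-extend a from_bits-wide pattern to to_bits (assumes padding in the MSBs)."""
    return to_signed(raw, from_bits) & ((1 << to_bits) - 1)

raw20 = 0xC0000                      # bit pattern for -0.5 as +-20,1
raw24 = sign_extend(raw20, 20, 24)   # same value carried in a 24-bit port

print(fxp_value(raw20, 20, 1))       # read as +-20,1
print(fxp_value(raw24, 24, 5))       # read as +-24,5
```

Both prints give the same number, because the fractional part is still 19 bits in either view. Reading the 24-bit word as +-24,1 instead would scale the value by 1/16.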

UPDATE:

I have since been able to locate the reason for this on page 43 of THIS document for the IPCore. Apparently, it's an AXI "feature" that everything is handled in blocks of 8 bits.

09-16-2019 03:58 AM

Hi,

Can you please explain why you changed the integer word length to 5, rather than the word length, in the LV dialog datatype? My desired input format for the Xilinx IP is 20,1, but because of the AXI protocol it becomes 24,1. How can I compensate for this?