Unwanted delay when using DAQ in LabVIEW

01-08-2020 08:41 AM

I think you're seeing a *latency* effect as your device's on-board FIFO gets filled up and the new waveform has to work its way through the FIFO before being generated as a real-world signal.

Dunno what device you have, but I know some common MIO boards have an 8k-sample FIFO. At your 1 kHz sample rate, a full FIFO would give you about 8 seconds of latency. There's also a software task buffer that can add a little more latency.
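As a rough check on that number, here is a minimal Python sketch of the arithmetic. It assumes "8k" means 8192 samples and that the FIFO stays completely full; the function name `fifo_latency_seconds` is made up for illustration and is not part of any DAQmx API.

```python
def fifo_latency_seconds(fifo_samples: int, sample_rate_hz: float) -> float:
    """Time for a newly written sample to work its way through the queued samples."""
    if sample_rate_hz <= 0:
        raise ValueError("sample rate must be positive")
    return fifo_samples / sample_rate_hz


FIFO_SAMPLES = 8192      # assumption: "8k" taken as 8192 samples
SAMPLE_RATE_HZ = 1000.0  # the 1 kHz rate mentioned in the post

latency_full = fifo_latency_seconds(FIFO_SAMPLES, SAMPLE_RATE_HZ)
latency_half = fifo_latency_seconds(FIFO_SAMPLES // 2, SAMPLE_RATE_HZ)

print(f"FIFO full:      {latency_full:.3f} s")
print(f"FIFO half full: {latency_half:.3f} s")
```

The same formula shows why a higher sample rate or a smaller fill level shrinks the delay: latency scales with the number of queued samples divided by the output rate.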

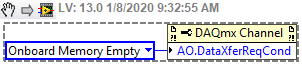

To reduce latency, there's an advanced DAQmx Channel property you can set once you switch over to using the standard DAQmx API instead of the DAQ Assistants.

The above setting makes the board keep its FIFO nearly empty, leading to minimum latency but the highest risk of buffer underflow errors. (The default is for the board to keep its FIFO either full or half-full, leading to more latency but lower underflow risk.) I'm not sure how suitable this setting alone would be if you're using a USB device; I've only used it with desktop devices.

-Kevin P

01-08-2020 08:52 AM

Thank you for the suggestion, but I am completely new to LabVIEW, and I am very unsure how I should implement what you are suggesting. Could you maybe show it, or maybe even implement it in my circuit?

If of course you have the time and generosity to do so 🙂

01-08-2020 08:54 AM

A few other things to think about here:

Calculate one phase (one full cycle) of your signal into an array of doubles. Make the AO loop iterate through the array over and over to generate that signal. When something changes, recalculate the cycle and build a new array. This way you do not need to regenerate the signal data continuously; you only rebuild the array when something changes.

Iterating through the array also gives you a way to stop immediately and switch to the new signal when you want to change something.
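Since a LabVIEW block diagram cannot be pasted as text, here is a rough Python sketch of the same idea. It only illustrates the logic and is not DAQmx code: the names `one_cycle` and `CycleGenerator` are hypothetical, a sine wave stands in for whatever signal you generate, and `next_sample` stands in for the write to the analog output.

```python
import math
from typing import List


def one_cycle(amplitude: float, samples_per_cycle: int) -> List[float]:
    """Build exactly one full sine cycle as a list of doubles."""
    step = 2.0 * math.pi / samples_per_cycle
    return [amplitude * math.sin(i * step) for i in range(samples_per_cycle)]


class CycleGenerator:
    """Replays a precomputed cycle and rebuilds it only when settings change."""

    def __init__(self, amplitude: float, samples_per_cycle: int) -> None:
        self._params = (amplitude, samples_per_cycle)
        self._table = one_cycle(amplitude, samples_per_cycle)
        self._index = 0
        self.rebuild_count = 1  # counts how often the array was calculated

    def update(self, amplitude: float, samples_per_cycle: int) -> None:
        new_params = (amplitude, samples_per_cycle)
        if new_params != self._params:
            # Something changed: build a new array and jump to its start,
            # so the new signal takes over immediately.
            self._params = new_params
            self._table = one_cycle(amplitude, samples_per_cycle)
            self._index = 0
            self.rebuild_count += 1

    def next_sample(self) -> float:
        value = self._table[self._index]
        self._index = (self._index + 1) % len(self._table)  # wrap around
        return value


gen = CycleGenerator(amplitude=1.0, samples_per_cycle=4)
first_pass = [gen.next_sample() for _ in range(8)]    # two identical cycles
gen.update(1.0, 4)                                    # nothing changed: no rebuild
gen.update(2.0, 4)                                    # amplitude changed: rebuild
after_change = [gen.next_sample() for _ in range(4)]
```

In LabVIEW the equivalent pieces would be an array held in a shift register, an index that wraps around, and a comparison of the current control values against the previous ones to decide when to recalculate.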

Try to get rid of the wizards (the DAQ Assistants). They are a good starting point but are not efficient. Also make sure that you put a wait in your loops so you do not bog down the processor.
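To show what the wait buys you, here is a small Python sketch of a paced loop. In LabVIEW you would drop a Wait (ms) function inside the loop instead; the function name `run_paced_loop` and the 10 ms period are assumptions chosen only for illustration.

```python
import time


def run_paced_loop(iterations: int, period_s: float) -> float:
    """Run a loop that sleeps each iteration instead of spinning at full speed."""
    start = time.monotonic()
    for _ in range(iterations):
        # ... the per-iteration work (read controls, update outputs) goes here ...
        time.sleep(period_s)  # yield the CPU until the next iteration is due
    return time.monotonic() - start


elapsed = run_paced_loop(iterations=5, period_s=0.01)
print(f"5 iterations took about {elapsed * 1000:.0f} ms")
```

Without the sleep, the loop would run as fast as the processor allows and keep one core busy for no benefit.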

GHSP

01-08-2020 09:09 AM

I really (REALLY) appreciate your replies, guys, but I have no idea how to implement the things you describe. Can you please show me how I should do it? 🙂

Thank you

01-08-2020 10:12 AM

This should get you started.

GHSP

01-08-2020 10:37 AM

I will try sir. Thank you very much 🙂

01-08-2020 11:02 AM - edited 01-08-2020 11:07 AM

EDIT: Oops, too slow. Looks like Tim's posting had a similar starting point but he took it quite a bit farther. Study it well.

----------------------------------------------------------------

Just as a starting point...

I right-clicked on the DAQ Assistant for your analog output task and selected "Generate NI DAQmx code". Then I did some very minimal cleanup on the auto-generated code. The result is attached.

I *highly* recommend the following 2 exercises:

1. In your code, open the DAQ Assistant for analog output. Start cross-referencing all the settings and properties there to the DAQmx code I posted. Do you find some things that aren't accounted for in one place or the other? Dig into the detailed help for the DAQmx functions and then come here with further questions.

2. On your DAQ Assistant, do the same right-click and "Generate...". Ignore the advice (like I did) about modifying the DAQmx Timing setup. Now carefully compare that raw result to what I posted, notice what cleaning up I did, try to understand it, then ask questions as needed.

-Kevin P

01-08-2020 12:23 PM

@BirgerBrosa wrote:

> Thank you for the suggestion, but I am completely new to LabVIEW, and I am very unsure how I should implement what you are suggesting. Could you maybe show it, or maybe even implement it in my circuit?
>
> If of course you have the time and generosity to do so 🙂

By the way, it is not a "circuit". A circuit implies wires and actual electricity. In LabVIEW, we call the visual representation of the program code a block diagram.
