Trying to read back TDMS file with offset

Solved! 11-13-2019 08:15 AM - edited 11-13-2019 08:16 AM

Hi,

I am trying to read back the data from a TDMS file at the same time as it is being written.

As the amount of data gathered is rather large, I am trying to reduce the data to smaller chunks. To do so, I always read back the last 100'000 entries of the file and show those in a graph. The process seems to work fine, but after about 2-4 hours (different every time I run the program) I get an error saying that the amount of data I am trying to handle is too large.

Specifically the error looks like this:

Possible reason(s):

The application is not able to keep up with the hardware acquisition.

Increasing the buffer size, reading the data more frequently, or specifying a fixed number of samples to read instead of reading all available samples might correct the problem.

Property: RelativeTo

Corresponding Value: Current Read Position

Property: Offset

Corresponding Value: 0

The way I interpret the error message, the file has become too large to determine where the offset is set, so an error is returned. Am I reading this correctly, and how do I correct the issue? When I run the program without reading the data back, there is no problem.

Attached are the complete program (Measurement_TDMS_2.vi) and the subset of loops I use to read back the data (Measure and read.vi).

Thank you for your help


11-13-2019 09:47 AM

OK, there is a lot going on in your code.

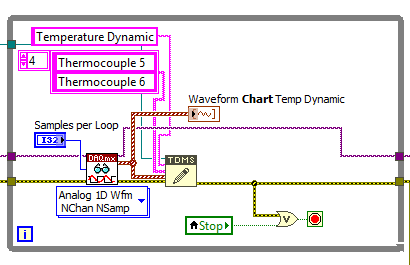

Why are there two analog loops measuring temperature? The second loop (analog group) does not appear to be doing anything other than taking up resources. I am surprised that you are not getting errors when trying to start these two loops at the same time.

Look at the producer/consumer pattern. You should have one loop generating all of the data. Then you have a separate loop that records that data. You might even have a third loop that reads and displays the data.

You should be able to do the DAQ read in one loop. It should not require three different loops.

Get this stuff fixed and then come back for the next round of help.

GHSP

11-13-2019 10:28 AM

Further, that's a DAQmx error related to one of your data acquisition tasks, not a TDMS file access error.

Also of note: you have multiple parallel loops running as fast as they possibly can, each accessing the same TDMS file that multiple loops are simultaneously trying to write to. Frankly, I'm kinda surprised you don't get errors long before 2 hours.

If you change your graphs into charts, they're designed around the "show recent history" behavior you want. You won't even need to read back from the TDMS file. Just feed your data to the chart(s) and it'll take care of the rest.

(Note: you'll need to right-click the front panel chart control to set up the history length properly. Take special care if feeding the chart with waveform data -- the length refers to the # of distinct waveform chunks you write, NOT the total # samples. Happy accident: the default length of 1024 works well with your 100-sample waveform chunks to result in 102400 most-recent samples being charted.)
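LabVIEW is graphical, so here is a rough text-language sketch of the chart-history idea in Python. It only illustrates the arithmetic in the note above (history counted in chunks, not samples); the `ChartHistory` class and its method names are made up for this example and are not part of any NI API.

```python
from collections import deque

HISTORY_CHUNKS = 1024  # history length, counted in waveform chunks
CHUNK_SIZE = 100       # samples per waveform chunk

class ChartHistory:
    """Hypothetical stand-in for a chart's history buffer."""

    def __init__(self, max_chunks=HISTORY_CHUNKS):
        # A bounded deque silently drops the oldest chunk once it is full.
        self._chunks = deque(maxlen=max_chunks)

    def append(self, chunk):
        self._chunks.append(list(chunk))

    def samples(self):
        # Flatten the retained chunks into one list of most-recent samples.
        return [s for chunk in self._chunks for s in chunk]

history = ChartHistory()
for i in range(2000):                  # write more chunks than the history holds
    history.append([i] * CHUNK_SIZE)   # every sample in chunk i has value i

print(len(history.samples()))          # retained samples = 1024 chunks * 100
```

Nothing is read back from disk here: the display keeps its own bounded history, and old data falls off the front as new chunks arrive.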

-Kevin P

11-13-2019 10:52 AM

That error has to do with your DAQ Read VI timing out, not the TDMS Read not working. "The application is not able to keep up with the hardware acquisition." Something is causing your DAQ Read VIs to not be able to keep up with the reading. The DAQ buffers are filling up, then overflowing.
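To see why this kind of error can take hours to show up, here is a back-of-the-envelope sketch in Python. It assumes a very simplified model (constant acquisition rate, constant average read rate, fixed buffer size), and all of the numbers are hypothetical rather than taken from the attached VIs.

```python
def seconds_until_overflow(buffer_samples, acquire_rate, read_rate):
    """Time until a buffer overflows when reads lag behind acquisition."""
    deficit = acquire_rate - read_rate  # samples per second piling up
    if deficit <= 0:
        return float("inf")  # the reader keeps up, so the buffer never fills
    return buffer_samples / deficit

# Hypothetical numbers: 100 000-sample buffer, 1000 S/s acquired,
# 990 S/s read on average because the read loop is occasionally starved.
hours = seconds_until_overflow(100_000, 1000, 990) / 3600
print(f"overflow after roughly {hours:.1f} hours")
```

Even a small shortfall in the average read rate eventually fills the buffer, and if the shortfall varies from run to run, so does the time to failure.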

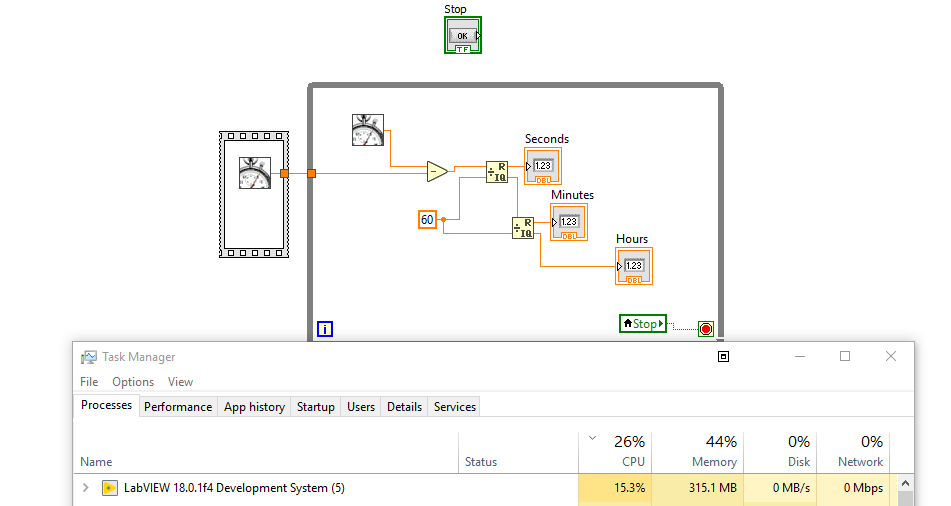

First thing, that clock loop you have at the top should really have a Wait in it. Running that loop alone uses up like 16% of my CPU. Is it only used for telling how long the program has run? If so, throw a Wait 1000ms in there. Loops without a Wait run as fast as the CPU will allow, so you're using up a huge portion of your CPU just to tell the time. Slow this loop down.
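In text form, the difference a Wait makes looks roughly like this Python sketch. The function name `run_clock` and the timing values are invented for illustration; the point is only that the loop body runs once per period instead of millions of times per second.

```python
import time

def run_clock(duration_s, period_s):
    """Update an elapsed-time readout once per period, then sleep."""
    start = time.monotonic()
    iterations = 0
    elapsed = 0.0
    while elapsed < duration_s:
        iterations += 1
        # Sleeping hands the CPU back to the other loops,
        # like a Wait (ms) inside a LabVIEW While Loop.
        time.sleep(period_s)
        elapsed = time.monotonic() - start
    return iterations, elapsed

if __name__ == "__main__":
    # With a 1 s period, a 5 s run needs only about 5 iterations.
    n, t = run_clock(duration_s=5.0, period_s=1.0)
    print(f"{n} iterations in {t:.1f} s")
```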

Next, why are you reading back from the files? Do you just want to display the last 100k points that have been read from each loop? If so, why not use Waveform Charts instead of Graphs? You can update the Chart History Length to save the last 100k data points. That way, you don't need to constantly read the same data from the file over and over and over.

There's also no Wait in the TDMS Read loops, so you're trying to read the same data over and over again as fast as your computer could possibly allow. The DAQmx Read / TDMS Write loops could be getting backed up because the TDMS Read loops are simply taking too long. If you absolutely must read the same 100k points over and over, why not throw in a Wait Until Next ms Multiple set to 1000 ms? If it's running for 2-4 hours, do you really care if you don't see the last <1 second of data?

Another solution would be to have a producer-consumer loop. Instead of writing the data to the TDMS file then reading it back from the TDMS file: write the data to the TDMS file and send the exact same data through a Queue to a consumer loop. In the consumer loop, dequeue that data and display it in a chart or graph.
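Here is a minimal Python sketch of that producer-consumer idea, using a thread-safe queue. Everything in it is a stand-in: the list `log` plays the role of the TDMS file, the generated numbers play the role of DAQ reads, and the bounded `display` deque plays the role of the chart. None of the names come from the attached VIs.

```python
import queue
import threading
from collections import deque

def producer(n_chunks, chunk_size, log, q):
    """Acquire data, log it, and pass the same data on for display."""
    for i in range(n_chunks):
        # Stand-in for a DAQ read returning one chunk of samples.
        chunk = [i * chunk_size + k for k in range(chunk_size)]
        log.extend(chunk)  # stand-in for the TDMS write
        q.put(chunk)       # same data goes to the consumer
    q.put(None)            # sentinel: tell the consumer to stop

def consumer(q, display):
    """Dequeue chunks and keep only the most recent samples."""
    while True:
        chunk = q.get()    # blocks until data arrives, so no busy loop
        if chunk is None:
            break
        display.extend(chunk)

def run(n_chunks=50, chunk_size=100, display_len=1000):
    log = []
    display = deque(maxlen=display_len)  # only the newest samples are kept
    q = queue.Queue()
    workers = [
        threading.Thread(target=producer, args=(n_chunks, chunk_size, log, q)),
        threading.Thread(target=consumer, args=(q, display)),
    ]
    for w in workers:
        w.start()
    for w in workers:
        w.join()
    return log, display

if __name__ == "__main__":
    log, display = run()
    print(len(log), len(display))
```

The file is only ever written, never read back, and the consumer sleeps inside `q.get()` whenever there is nothing new to show.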

What's your CPU and disk usage while this program is running? All these parallel reads and writes could simply be too much for the HDD. Fix the clock loop, and then either slow down your read loops to reasonable speeds, use charts to show historic data, or use a producer-consumer architecture.