The top-level VI was stopped at SelfRefNode on the block diagram

04-19-2013 01:22 PM

Memory usage in LV... is a topic for another time.

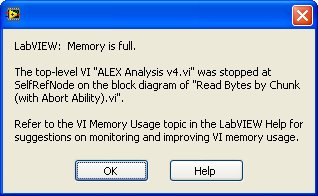

Usually, when I run out of memory in LV, it will stop and pop-up this friendly title-less window with the mysterious header: "LabVIEW: Memory is full", followed by some indication of where the problem was encountered. See this snapshot for a (non-typical, see later) example:

When I press OK, the offending diagram usually opens and the VI or primitive where the problem occurred is selected.

This time, I got a cryptic source: SelfRefNode.

Google it and you will probably end up on this thread (at least among the LabVIEW-related results).

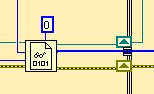

So where did the memory problem occur? My guess is probably here:

The concatenating tunnel could indeed result in a very large array (I can actually see this happening watching the memory usage in Task Manager).

I'd suggest replacing the "SelfRefNode" description with "Concatenating Tunnel" and highlighting the tunnel.

I am not labeling this as a bug report, but a CAR would be welcome.

04-19-2013 01:49 PM

The SelfRefNode is an element of a diagram; it owns the diagram's terminals (tunnels and the like). Imagine wrapping the contents of a diagram in a subVI: the SelfRefNode is that imaginary VI (a virtual VI?). You are almost certainly correct about the ultimate source of the problem. The error dialog is probably built to indicate the node that caused the error (often a subVI or function), not an individual tunnel. If the error is meant for public consumption (sometimes I wonder), then when the node is a SelfRefNode I think it makes more sense to go one step up the hierarchy and highlight the diagram itself. Pinpointing the exact terminal may be hard for the run-time system, but it is probably easy for you once the diagram is flagged.

06-02-2020 10:20 AM

Hey guys,

I am gravedigging this topic because I am having exactly the same problem (the SelfRefNode out-of-memory error while concatenating a large array), and I have already tried many modifications to solve it, all in vain.

The thing is, I am reading a very large .txt file (~80 million numbers stored as strings that are converted to U32 elements). Each iteration reads 4 lines (4 strings), converts them to U32, and concatenates them to a big array. It seems that just as the While Loop has read and converted all the data and is about to exit, I get this error message.
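For scale, a back-of-the-envelope estimate of the final array alone, taking the ~80 million figure at face value (a U32 occupies 4 bytes):

$$
80 \times 10^{6} \times 4\,\text{B} = 3.2 \times 10^{8}\,\text{B} \approx 305\,\text{MiB}
$$

If the array is grown by allocating a larger block and copying the old contents into it (an assumption; how the concatenating tunnel is implemented is not stated in this thread), the old and new buffers coexist briefly, so near the end of the loop the peak requirement approaches twice that, and it must be satisfied by one contiguous block:

$$
2 \times 3.2 \times 10^{8}\,\text{B} = 6.4 \times 10^{8}\,\text{B} \approx 610\,\text{MiB}
$$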

06-02-2020 10:54 AM - edited 06-02-2020 10:56 AM

@lucaseduardoml wrote:

... I already tried lots of modifications to solve it, but they were in vain...

The thing is, I am reading a very large .txt file (~80 million numbers stored as strings that are converted to U32 elements). Each iteration reads 4 lines (4 strings), converts them to U32, and concatenates them to a big array. It seems that just as the While Loop has read and converted all the data and is about to exit, I get this error message.

I think you would get much better help if you started a new thread, told us the exact problem and what you tried, and showed us some example code. Most likely you are causing a lot of memory fragmentation. It looks like you know the exact final array size when the VI starts, so constantly growing an array is ill-advised: arrays must be contiguous in memory, so each time one grows it may need a new, larger block. What is the structure of the file (formatted? binary?) What kind of "conversion"? (Scan? Unflatten?) What is the line delimiter? Are the "lines" fixed or variable in length? Is the big data mostly contained in wires, or is it also connected to indicators?
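A minimal sketch of the preallocation advice, written in Python only because a LabVIEW diagram cannot be shown as text: the buffer is allocated once at its final size and each value is written in place, the text-language analogue of Initialize Array feeding Replace Array Subset in a shift register. It assumes one number per line and that the total count is known before reading; `read_u32_preallocated` is a hypothetical name, not an existing API.

```python
from array import array
from io import StringIO


def read_u32_preallocated(stream, total_count):
    """Parse one unsigned integer per line into a buffer allocated once.

    Assumes total_count is known before reading (hypothetical; e.g. from a
    header or a first pass over the file).
    """
    # Allocate the full buffer up front ('I' is a 4-byte unsigned int on
    # common platforms), analogous to Initialize Array in LabVIEW.
    data = array("I", [0]) * total_count
    index = 0
    for line in stream:
        text = line.strip()
        if not text:
            continue  # skip blank lines
        if index >= total_count:
            raise ValueError("stream holds more values than total_count")
        # Overwrite in place: no reallocation, no copy of earlier elements.
        data[index] = int(text)
        index += 1
    if index != total_count:
        raise ValueError(f"expected {total_count} values, got {index}")
    return data


if __name__ == "__main__":
    sample = StringIO("10\n20\n30\n40\n")
    print(list(read_u32_preallocated(sample, 4)))  # [10, 20, 30, 40]
```

With a real file, `stream` would be an open text-file handle instead of the `StringIO` sample.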

06-02-2020 12:21 PM

Thank you for the answer! I will definitely take your advice ;D