SYSCFG operations interfere with COUNTER channel measurements

12-08-2016 10:25 AM

LV2013, LVRT2013, Win7, PXI-8196

I have a system where the host program communicates (TCP) with a program on the PXI.

The PXI measures all manner of channels and reports to the host at 10 Hz.

The CPU on the PXI normally runs at 5-7% utilization.

HOWEVER, it is apparently being overburdened periodically by an operation that I initiate from the host every 10 seconds.

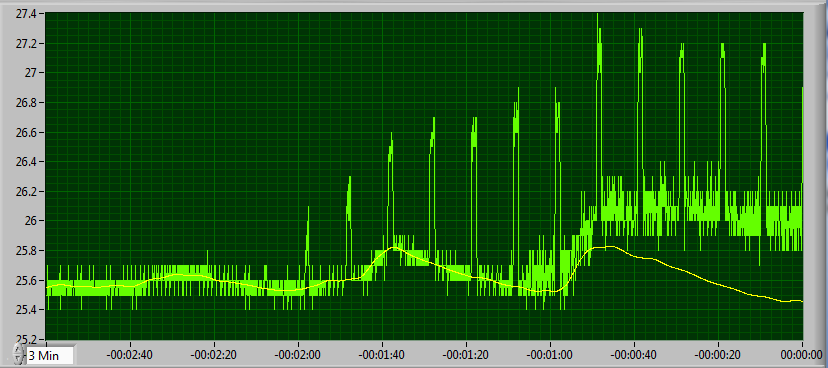

Here is a 3-minute data record. I now have a switch where I turn off the 10-sec status request.

I turned it off about 45 sec before this picture was taken, and you can see the disruptions at 10-sec intervals, which stop about -00:00:45.

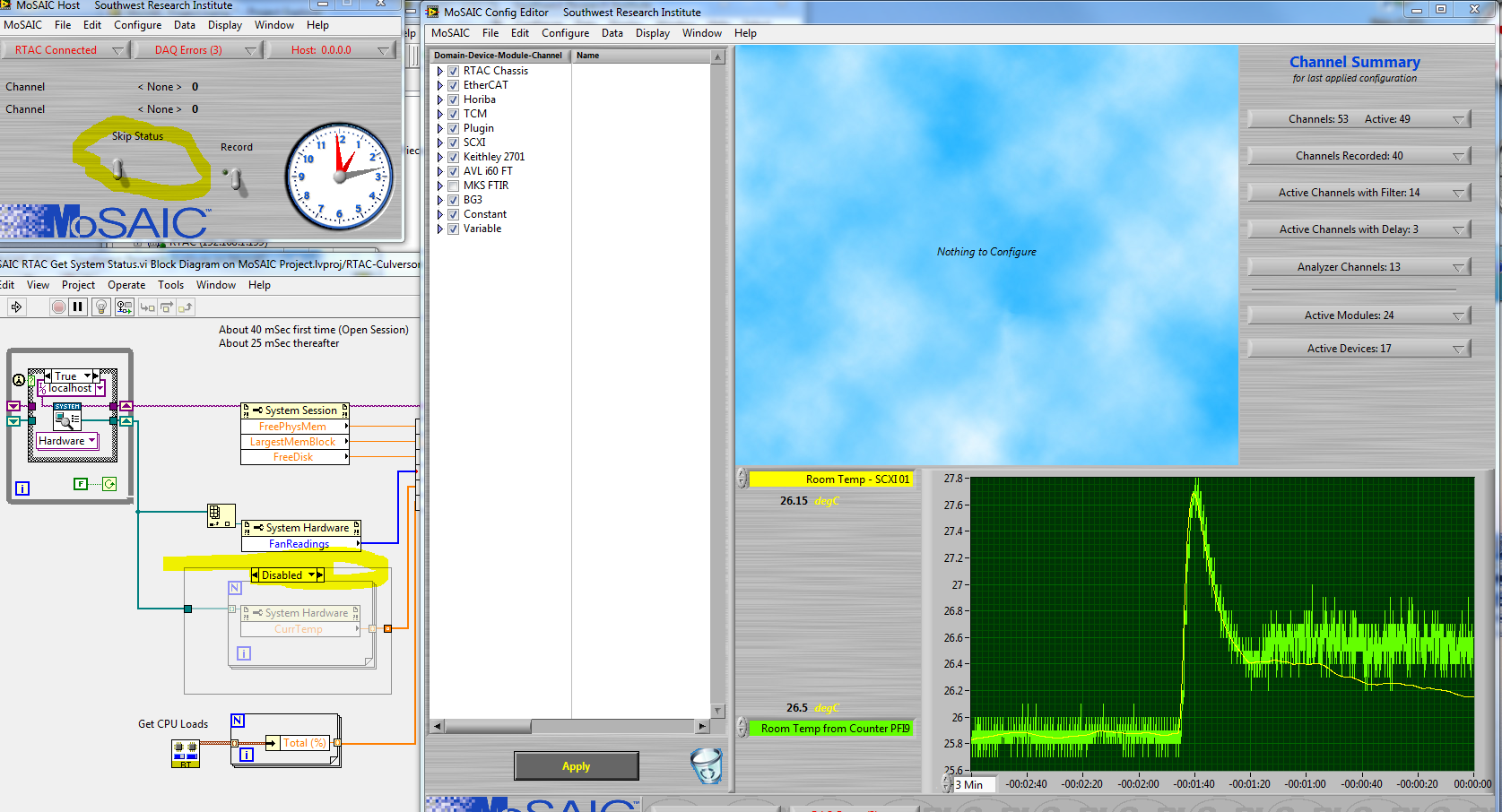

Here is the code that executes on the PXI when a status request comes in:

I'm using the SYSCFG operations to obtain data about CPU loads, and internal temperatures.

So, am I doing something wrong? The PXI is running a real-time OS (PharLap, I think), but this is hardly behavior I would expect from an RTOS.

I have ALSO seen the same thing in another instance - when conducting a test of high-volume data communication between the PXI and the host, I am measuring the data rate. That data rate takes a dive every 10 seconds if the status request is there, and stays stable if the status request is not there.

Any ideas?

Culverson Software - Elegant software that is a pleasure to use.

Culverson.com

Blog for (mostly LabVIEW) programmers: Tips And Tricks

12-08-2016 10:50 AM

Here is the code that measures the frequency (on the PXI box).

It is called at 10 Hz (barring disruption).

There is a buffer for counter readings, and I subtract the oldest from the newest and scale that into frequency.
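For readers without the block diagram, here is a rough Python sketch of the buffer-difference idea described above. It is not the LabVIEW code: the class name, the buffer depth, and the 0.1 s nominal period are assumptions made for illustration, and counter rollover is ignored. It shows two ways to scale the count difference, because the post does not say which one is used: by the nominal loop period, or by the measured time between the oldest and newest readings.

```python
from collections import deque


class CounterFrequency:
    """Frequency estimate from a short history of cumulative counter readings.

    Hypothetical illustration only; names and defaults are assumptions.
    Counter rollover is not handled.
    """

    def __init__(self, depth=10, nominal_period_s=0.1):
        # Each entry is (timestamp in seconds, cumulative edge count).
        self.readings = deque(maxlen=depth)
        self.nominal_period_s = nominal_period_s

    def add(self, timestamp_s, count):
        # The deque drops the oldest reading automatically once it is full.
        self.readings.append((timestamp_s, count))

    def _edges(self):
        # Newest count minus oldest count.
        return self.readings[-1][1] - self.readings[0][1]

    def by_nominal_period(self):
        # Assumes every reading arrived exactly one loop period apart.
        if len(self.readings) < 2:
            return None
        span_s = (len(self.readings) - 1) * self.nominal_period_s
        return self._edges() / span_s

    def by_measured_time(self):
        # Uses the actual elapsed time between oldest and newest reading.
        if len(self.readings) < 2:
            return None
        span_s = self.readings[-1][0] - self.readings[0][0]
        return self._edges() / span_s if span_s > 0 else None
```

If the loop is delayed, the newest reading contains more edges than one nominal period would produce, so scaling by the nominal period over-reads until the late sample leaves the buffer. Scaling by measured time does not have that sensitivity, provided the timestamp is taken close to the counter read.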

12-08-2016 11:13 AM

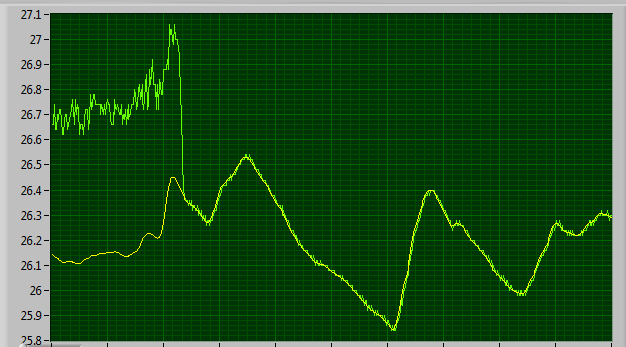

Here is a pic of the data soon after PXI startup (reboot):

The yellow signal is an analog temperature measurement.

That signal drives a frequency OUTPUT, which I am then measuring with a counter INPUT.

Notice the good agreement up to a point, and then the disruptions start (I didn't flip a switch here).

After 60 sec of disruptions getting larger and larger, the base signal starts to diverge.

12-08-2016 12:05 PM

Disabling the TEMP reading part gets rid of the every-10-sec disruptions.

12-08-2016 12:13 PM

The discrepancy between the analog reading and the frequency reading IS caused by my PXI box's air filters being dirty - the CPU was apparently protecting itself by slowing down, and so the counter operation wasn't consistent. That's why data was good for a while after a reboot.

You can probably tell where I took the filter cover off:

12-08-2016 12:27 PM

OK, cleaning the air filters changes the nature of the disruptions.

They're not as long-lasting, but sometimes there are multiple ones now.

CONCLUSIONS:

1... Keeping the AIR FILTERS clean is important. I have tripped over that before.

2... The SYSTEM HARDWARE - CURRENT TEMP function is faulty somehow - it apparently uses a LOT more CPU than expected.
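One way to back up conclusion 2 with numbers is to time each part of the status request separately. The Python sketch below only illustrates that approach; it does not call the SYSCFG API. `read_cpu_load` and `read_temperature` are hypothetical stand-ins, and the 50 ms sleep is an invented delay, not a measured value.

```python
import time


def timed(fn):
    """Call fn() and return (result, elapsed seconds)."""
    start = time.perf_counter()
    result = fn()
    return result, time.perf_counter() - start


def profile_status_request(queries):
    """Time each named zero-argument query; return (results, durations)."""
    results, durations = {}, {}
    for name, fn in queries.items():
        results[name], durations[name] = timed(fn)
    return results, durations


# Hypothetical stand-ins for the real status queries (assumptions).
def read_cpu_load():
    return 6.0  # percent, placeholder value


def read_temperature():
    time.sleep(0.05)  # invented delay that simulates a slow query
    return 45.0  # degrees C, placeholder value


if __name__ == "__main__":
    _, durations = profile_status_request(
        {"cpu_load": read_cpu_load, "temperature": read_temperature}
    )
    for name, seconds in durations.items():
        print(f"{name}: {seconds * 1000:.1f} ms")
```

Logging the per-query durations alongside the 10 Hz data would show whether the disruptions line up with the temperature query alone, which is what disabling it suggested.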
