Number precision conversion problem

Solved! 09-22-2019 07:17 AM

Dear All,

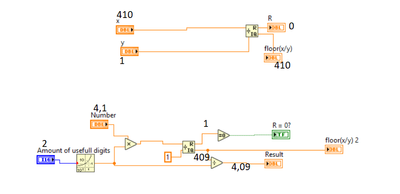

I have encountered unexpected behaviour that I cannot understand. I am trying to convert a float into a float with a specified number of decimal digits, while checking whether the original number had more digits than that.

I do it by multiplying the number by 10^NofDigits, taking Quotient & Remainder of the result divided by 1, and dividing the quotient back by 10^NofDigits. For most inputs it works fine.

But when, for example, the number is 4.1 and the number of digits is set to 2, I get a Quotient = 409 instead of 410. If I just wire 410 and 1 from controls into Quotient & Remainder, it works as expected.

I cannot understand why this is the case, or how to overcome the problem. Any alternative ways to achieve the same functionality are welcome.
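The effect described above is not specific to LabVIEW and can be reproduced in any language that uses double-precision floats. A minimal Python sketch, assuming the control holds a standard 64-bit double (Python's `divmod` stands in for Quotient & Remainder here; it is not the LabVIEW function itself):

```python
import math

value = 4.1
n_digits = 2

# Mathematically this is 410, but 4.1 has no exact binary representation,
# so the product lands just below 410.
scaled = value * 10 ** n_digits
print(scaled)                 # 409.99999999999994

# Taking the quotient of a division by 1 floors the value.
quotient, remainder = divmod(scaled, 1)
print(quotient)               # 409.0
print(math.floor(scaled))     # 409

# Rounding to the nearest integer recovers the expected result.
print(round(scaled))          # 410
```

Feeding the integers 410 and 1 directly into the same operation gives 410 because both are exactly representable, which matches the observation in the post.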

09-22-2019 09:47 AM

Have you ever heard about "rounding", and has anyone (in any programming class) discussed the issue of representing decimal fractions as floating-point numbers? To a first approximation, no fraction other than ones whose denominators are powers of 2 can be represented exactly.

The Quotient and Remainder function is designed for integers. If you wire in a float, the quotient gets rounded down (this is the nature of the Mod function). Try inserting a "Round to nearest integer" before you use the Mod function or, even better, simply use "Round to nearest integer" instead of the Mod (Quotient & Remainder) function.

Bob Schor

09-22-2019 10:17 AM

The problem with "Round to nearest" is that it gives no feedback on whether all the digits after the decimal point are 0, regardless of whether I use it instead of or before "Quotient&Remainder". The point of the VI is not only to get the specified number of digits but also to determine whether any digits were discarded.
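One possible way to get both the rounded value and the "digits were discarded" flag is to round the scaled value to the nearest integer and then compare the two with a small tolerance. The sketch below is in Python rather than LabVIEW; the function name `round_and_check` and the tolerance `rel_tol` are assumptions made for illustration and would need tuning for a real instrument.

```python
def round_and_check(value, n_digits, rel_tol=1e-9):
    """Return (rounded_value, digits_discarded) for a float input."""
    scale = 10 ** n_digits
    scaled = value * scale
    # round() with one argument returns the nearest integer
    # (exact ties go to the even integer in Python).
    nearest = round(scaled)
    # Differences at the level of floating-point noise are not treated
    # as extra digits typed by the user.
    tolerance = rel_tol * max(1.0, abs(scaled))
    discarded = abs(scaled - nearest) > tolerance
    return nearest / scale, discarded


print(round_and_check(4.1, 2))        # (4.1, False)
print(round_and_check(4.123, 2))      # (4.12, True)
```

The tolerance is the key design choice: it has to be larger than the representation error of the scaled value but much smaller than one unit in the last allowed digit.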

I hope I understood your reply correctly.

09-22-2019 10:37 AM

The only way to represent 4.1 with exactly two digits of precision (namely 4 + 0.1) is as a string. Floats are represented as an integer times a power of 2. For example, 4.5 would be represented as 9 * 2^-1, or 9/2 = 4.5. However, there is no finite binary representation of 4.1, so there is no (simple) way to tell if the number is supposed to be 4.0985 as opposed to 4.1047, particularly if you are displaying it with three significant digits. Here is a Snippet showing this behavior -- both will display as 4.1 (3 significant digits).
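The "integer times a power of 2" point can be checked directly. This is a small Python sketch using only the standard library (it is not the LabVIEW Snippet referred to above); it prints the exact value stored for 4.5 and for 4.1.

```python
from decimal import Decimal
from fractions import Fraction

# 4.5 is exactly 9 / 2, so it is stored without error.
print(Fraction(4.5))      # 9/2
print(Decimal(4.5))       # 4.5

# 4.1 is stored as the nearest fraction whose denominator is a power of 2.
numerator, denominator = (4.1).as_integer_ratio()
print(denominator & (denominator - 1) == 0)   # True: denominator is a power of 2

# The exact decimal expansion of the stored value is slightly below 4.1.
print(Decimal(4.1))       # 4.0999999999999996447286321199499070644378662109375
print(Fraction(4.1) == Fraction(41, 10))      # False
```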

Bob Schor

09-22-2019 10:42 AM

Let's take a step back. Before going down a path to do something, let's ask why you need to do something. Often there is a better way.

You said, "I am trying to convert a float number into float with specified amount of digits, checking whether the precision in the original number was too big."

Why do you need to know if the precision of the original number was too big?

09-22-2019 11:26 AM

@RavensFan wrote:

Let's take a step back. Before going down a path to do something, let's ask why you need to do something. Often there is a better way.

You said, "I am trying to convert a float number into float with specified amount of digits, checking whether the precision in the original number was too big."

Why do you need to know if the precision of the original number was too big?

The instrument (in this case a magnet power supply) has limited precision. I want to warn users when they have entered a number with more digits than the experimentally achievable precision. In my case, one numeric control corresponds to two different possible entries (it can be either the power supply current or the magnetic field), and they have different experimental precision.

09-22-2019 12:44 PM

Hi fun11,

"I want to warn the users, that they have entered the number with more digits, than experimentally achievable precision."

Possible options coming to my mind:

- Make that input a string control and check the number of digits on your own. (That might get more tricky once you allow scientific notation.)

- Round the numeric input to the needed precision. (Most often external devices expect string commands, so you need to round and convert the number to a string anyway!)
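The first option (checking the digits of the text as typed) could look roughly like the Python sketch below. The function name `has_excess_digits` is hypothetical, and the choice to ignore trailing zeros (so that "4.100" counts as one decimal place) is an assumption, not something stated in the thread. Parsing with `Decimal` also covers scientific notation.

```python
from decimal import Decimal


def has_excess_digits(text, max_places):
    """True if the typed text has more decimal places than max_places."""
    # Decimal raises decimal.InvalidOperation if the text is not a number.
    number = Decimal(text.strip())
    if not number.is_finite():
        raise ValueError("input must be a finite number")
    # normalize() strips trailing zeros; the exponent then gives the
    # position of the last significant digit.
    exponent = number.normalize().as_tuple().exponent
    return max(0, -exponent) > max_places


print(has_excess_digits("4.1", 2))      # False
print(has_excess_digits("4.123", 2))    # True
print(has_excess_digits("1.5e-3", 2))   # True  (0.0015 has four decimal places)
```

Because the check works on the decimal text, it never goes through a binary float and so avoids the 409 versus 410 problem entirely.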

09-22-2019 03:26 PM

What determines if the one numeric control needs to be a power supply current or the magnetic field?

Forget the fact that it might be confusing to have one control that means two different things. Just set the control to the required precision. If the control needs to be in the other mode, use a property node to set the precision for that mode. Let your program control the displayed precision based on the mode, and let the control itself show the actual precision. Note that it would still be possible to enter a number like 1.1111111 in a control whose precision setting shows it as 1.11; the underlying value still has the extra digits. So you may still need to round the entered value to the required precision anyway.
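The "round the entered value based on the mode" step might be sketched as follows in Python. The mode names and the digit counts in `PRECISION_DIGITS` are made-up placeholders, since the thread does not give the real precisions, and both function names are hypothetical.

```python
# Hypothetical number of decimal digits achievable in each mode.
PRECISION_DIGITS = {"current": 3, "field": 2}


def round_for_mode(value, mode):
    """Round the entered value to the precision of the selected mode."""
    return round(value, PRECISION_DIGITS[mode])


def format_setpoint(value, mode):
    """Format the value as text with exactly the digits the mode allows."""
    return f"{value:.{PRECISION_DIGITS[mode]}f}"


print(round_for_mode(1.1111111, "field"))      # 1.11
print(format_setpoint(1.1111111, "current"))   # 1.111
```

Formatting to a string is included because the value usually ends up in a text command to the instrument, at which point the number of digits is fixed regardless of what the float holds.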

09-22-2019 11:45 PM - edited 09-23-2019 12:16 AM

@RavensFan wrote:

What determines if the one numeric control needs to be a power supply current or the magnetic field?

Forget the fact that it might be confusing to have one control that means two different things. Just set the control to the required precision. If the control needs to be in the other mode, use a property node to set the precision for that mode. Let your program control the displayed precision based on the mode, and let the control itself show the actual precision. Note that it would still be possible to enter a number like 1.1111111 in a control whose precision setting shows it as 1.11; the underlying value still has the extra digits. So you may still need to round the entered value to the required precision anyway.

Thanks everyone for your help. I will use this approach this time, plus rounding the input when the control is read (which means no warning for the user during execution). In future, though, I will try to avoid this situation by splitting the control in two or using a string.