NI visa read serial looses a lot of data

Solved! 11-28-2018 03:18 AM

Hi,

I am sending continuous serial data from a microcontroller to the PC over a serial port at a baud rate of 1562500.

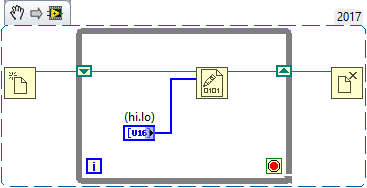

I noticed that my LabVIEW read VI, attached below, loses many of the data packets that I am sending. When I went through the saved data, I noticed that the receive buffer appears to have been flushed in the middle of the transmission, and all synchronization is lost. I tried various buffer sizes, but the program behaves the same.

The problem I have is quite similar to the following.

https://forums.ni.com/t5/LabVIEW/VISA-Read-continuous-serial-data-drops-packets/td-p/3580333

I have attached the VI I am using at the moment.

11-28-2018 03:29 AM

I think you are reading too slowly.

You read only 1+2+18 bytes per iteration and write them to a file with an Express VI. That Express VI opens and closes the file on every iteration, which takes a lot of time.

Try measuring the loop period; I suspect the loop runs more slowly than the data rate requires.

Or remove the "Write to file" VI and check the speed.
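To put a number on "too slow", here is a rough timing budget. The framing is an assumption on my part: the thread does not state it, so I assume 8N1 (10 bits on the wire per byte). With a different frame format the figures shift, but not by much.

```python
# Rough timing budget for the serial read loop.
# ASSUMPTION (not stated in the thread): 8N1 framing, so each byte
# costs 10 bits on the wire (1 start + 8 data + 1 stop).
BAUD_RATE = 1_562_500            # from the original post
BITS_PER_BYTE_ON_WIRE = 10       # assumed 8N1
PACKET_BYTES = 1 + 2 + 18        # bytes read per loop iteration

bytes_per_second = BAUD_RATE / BITS_PER_BYTE_ON_WIRE
packets_per_second = bytes_per_second / PACKET_BYTES
packet_period_us = 1e6 / packets_per_second

print(f"{bytes_per_second:.0f} bytes/s")
print(f"{packets_per_second:.1f} packets/s")
print(f"{packet_period_us:.1f} us available per packet")
```

Under that assumption the loop has roughly 134 microseconds per 21-byte packet, which is far less than a file open and close usually takes.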

11-28-2018 04:39 AM

Hi,

Thanks for the reply. The problem is that I need to log the data to a file. How can I log the data into a file without slowing the read?

11-28-2018 04:54 AM

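You should use the separate open, write, and close file functions, so that the file is opened once before the loop and closed once after it.

Also, you have a 1000-byte buffer. You can read larger blocks of data, but less often.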
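In a text language the same idea looks roughly like the sketch below. The function name `log_packets` and the demo file name are made up for illustration; the point is only that the open and the close sit outside the loop.

```python
import os
import tempfile


def log_packets(path, packets):
    """Open the file once, write every packet, then close it once."""
    with open(path, "wb") as log_file:   # opened a single time
        for packet in packets:           # stand-in for the read loop
            log_file.write(packet)       # only the write happens per iteration
    # the file is closed here, when the with-block exits


if __name__ == "__main__":
    # Hypothetical demo: 1000 dummy packets of 21 bytes each.
    demo_path = os.path.join(tempfile.gettempdir(), "serial_log_demo.bin")
    log_packets(demo_path, [bytes(21)] * 1000)
    print(os.path.getsize(demo_path), "bytes written")
```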

11-28-2018 06:33 AM

Loses

Lose = not find

Loose = not tight

11-28-2018 06:55 AM - edited 11-28-2018 06:56 AM

@M.z779243 wrote: how can I log the data into file without slowing the read?

Also look into the Producer/Consumer architecture. The idea is you have a second loop that does the logging. The data is sent to this logging loop via a queue. This way your serial port loop can just do its process and let other (parallel) threads process the data however they see fit.

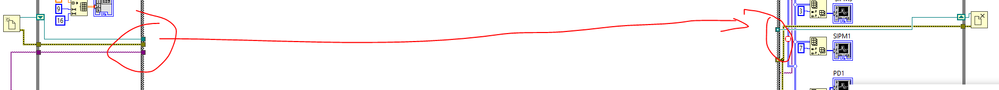
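For anyone who thinks more easily in text code, the pattern can be sketched like this. It is not the LabVIEW implementation, just the same idea with Python's `queue.Queue` and a thread; the list of chunks stands in for the serial reads, the `logged` list stands in for the file write, and all function names are hypothetical.

```python
import queue
import threading


def producer(chunks, data_queue):
    """Stand-in for the serial loop: it only reads and enqueues."""
    for chunk in chunks:
        data_queue.put(chunk)
    data_queue.put(None)  # sentinel: tells the consumer to stop


def consumer(data_queue, logged):
    """Logging loop: takes data off the queue at its own pace."""
    while True:
        chunk = data_queue.get()
        if chunk is None:
            break
        logged.append(chunk)  # stand-in for the (slow) file write


def run(chunks):
    data_queue = queue.Queue()
    logged = []
    worker = threading.Thread(target=consumer, args=(data_queue, logged))
    worker.start()
    producer(chunks, data_queue)
    worker.join()
    return logged


if __name__ == "__main__":
    print(run([b"packet-1", b"packet-2", b"packet-3"]))
```

The read side never waits on the file; if logging falls behind, data accumulates in the queue instead of in the serial port buffer.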

Now where to begin with the rest of your code...

1. No need for any of your local variables. You can store the data in the shift registers you already have set up (but aren't using).

2. Get rid of that "Stop VI" function. It is just like hitting the abort button: there is no cleanup of your references (serial port, file, etc.).

3. Even when you don't want to process the data, you really should be going through the read process to keep the serial port buffer from filling up. Just put the case structure around the queue I mentioned earlier so that the data is not passed on to the logging loop.

4. The FOR loop makes no sense to me. Why not just constantly read the packets instead of forcing groups of 16 before writing to the chart? Charts have a history, so you will still see all of the last X samples (X = buffer size, default is 1024) in the chart.

5. No need for so many Simple Error Handlers. Just one at the end of the loop should more than suffice.

6. Your data conversion can all be done with a simple Unflatten From String.

7. Your structure in general is just a mess. Here is a cleaned up version.

8. You can put all of your data into a single chart.
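As a text-language analogy for point 6, a single unpack call can replace a chain of manual conversions. The packet layout below is purely an assumption for illustration (1 sync byte, a 2-byte unsigned field, then nine signed 16-bit values, big-endian); the thread only tells us the sizes 1+2+18, not what the fields mean.

```python
import struct

# ASSUMED layout, for illustration only:
#   B  = 1 sync byte
#   H  = 2-byte unsigned field
#   9h = nine signed 16-bit samples (18 bytes)
#   >  = big-endian, no padding
PACKET = struct.Struct(">BH9h")


def parse_packet(raw):
    """Convert one raw 21-byte packet into (sync, field, samples)."""
    sync, field, *samples = PACKET.unpack(raw)
    return sync, field, samples


if __name__ == "__main__":
    raw = PACKET.pack(0xAA, 7, *range(9))  # build a dummy packet
    print(PACKET.size, parse_packet(raw))
```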

11-28-2018 07:13 AM

Hi,

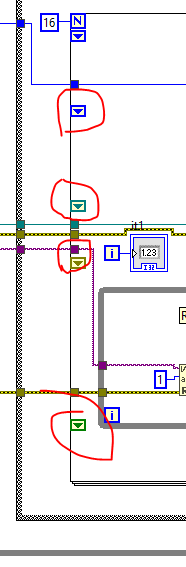

I tried your suggestion, but the problem persists. Even though saving is much faster, I still get exactly the same loss of synchronization and lose a lot of data. The pattern seems to be: first it reads 48 packets and then loses thousands of packets; afterwards it reads 772 packets correctly, followed by a loss of 48 packets. After that it reads only every other group of 48 packets.

In addition, the save function gives a GPIB error after reading for a while.

11-28-2018 08:03 AM

You should study shift registers.

You lose the file reference after a pause.

Also, you still read only 18 bytes at a time. If you can't read more, use Producer/Consumer, as crossrulz said.

11-28-2018 08:45 AM

My suggestion would be to read all of the data, save it to a separate file, and then review that data against your code. I see parts of your code where you discard data that doesn't match your search criteria. You may wish to keep that data and append the newly acquired samples to the end.
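A minimal sketch of that "keep the leftover and append" idea, in Python. The sync byte value `0xAA` and the function name are hypothetical, and the 21-byte length is just 1+2+18 from earlier in the thread. Note that matching on a single sync byte is fragile if the payload can contain the same value.

```python
SYNC = 0xAA        # hypothetical sync byte value
PACKET_LEN = 21    # 1 + 2 + 18 bytes, as read in the thread


def extract_packets(buffer, new_data):
    """Append new bytes to the leftover buffer and pull out whole packets.

    Incomplete data at the end stays in `buffer` for the next call.
    """
    buffer.extend(new_data)
    packets = []
    while True:
        start = buffer.find(bytes([SYNC]))
        if start < 0:
            buffer.clear()           # no sync byte anywhere: nothing usable
            break
        del buffer[:start]           # drop bytes in front of the sync byte
        if len(buffer) < PACKET_LEN:
            break                    # partial packet: keep it for next time
        packets.append(bytes(buffer[:PACKET_LEN]))
        del buffer[:PACKET_LEN]
    return packets


if __name__ == "__main__":
    leftover = bytearray()
    packet = bytes([SYNC]) + bytes(20)
    print(len(extract_packets(leftover, b"\x01\x02" + packet[:10])))
    print(len(extract_packets(leftover, packet[10:] + packet)))
```

The first call returns nothing because only part of a packet has arrived; the second call completes that packet from the kept bytes and returns it along with the next one.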

11-28-2018 09:21 AM - edited 11-28-2018 09:25 AM

What's with all the "sync byte" stuff? I have never seen anything like that before...

What instrument are you communicating with? What is the format of the incoming data? Is there a termination character of any kind?

But as others have already said, when receiving data at such a high baud rate you really need to use a producer/consumer architecture. The "producer" is a loop that just receives the data and places it in a queue (a buffer), which delivers it to the "consumer" loop. The consumer can then take its own sweet time dequeuing and analyzing the data, since incoming data will simply stack up in the queue.
